Best AI Tools for Facebook Ads That Marketers Actually Use

A practical look at AI tools for Facebook ads, how they help with creatives, testing, and optimization, plus what to expect from each.

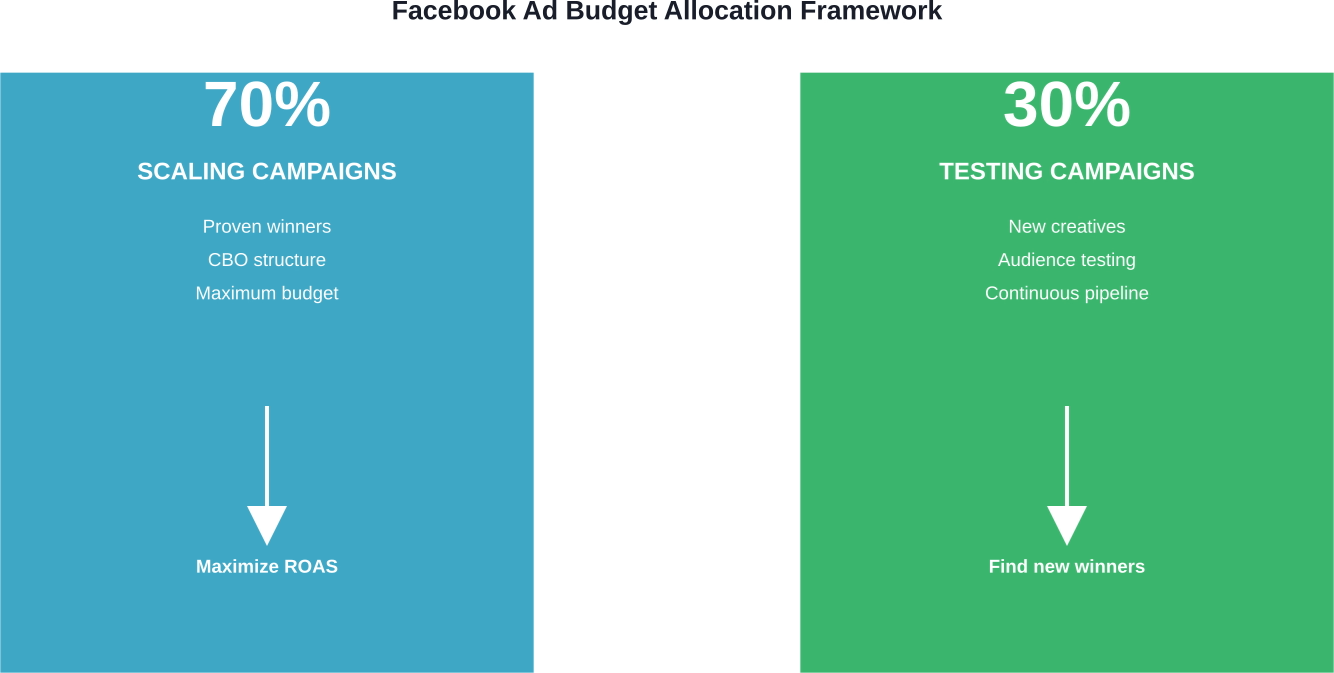

Testing and scaling Facebook ads requires a systematic approach: run controlled tests with sufficient budget and time (tests require at least 48-72 hours minimum before initial performance assessment, though 5-7 days is recommended for scaling decisions), identify winners based on clear KPIs, then scale gradually using proven methods like 20% daily budget increases or audience expansion. The key is maintaining a continuous testing framework while allocating 70% of budget to proven campaigns and 30% to ongoing creative experimentation.

The lifespan of successful Facebook ads has become increasingly short. Even campaigns delivering exceptional results today can see performance drop dramatically within weeks. This reality makes testing and scaling a continuous necessity rather than a one-time project.

The challenge lies in finding that balance. Test too aggressively and the budget disappears without meaningful results. Scale too quickly and profitable campaigns collapse under increased spend. But get the formula right? That's when Facebook ads become a reliable growth engine.

Here's what actually works in 2026.

Think of Facebook advertising as a two-phase operation. Testing identifies what works. Scaling amplifies those winners. Sounds simple, but most advertisers blur these phases together and wonder why performance suffers.

The fundamental principle is this: always be testing. Not occasionally. Not when campaigns decline. Always. The most successful Facebook advertisers maintain dedicated testing budgets running continuously, separate from their scaling efforts.

Campaign structures should reflect this division. Based on available data, a proven approach allocates 70% of total ad budget to scaling campaigns using Campaign Budget Optimization (CBO), while the remaining 30% funds ongoing testing campaigns, also using CBO. This split ensures resources flow primarily toward proven performers while maintaining a steady pipeline of new creative assets.

CBO automatically shifts budget toward best-performing creatives within a campaign. This reduces risk for smaller brands while accelerating feedback cycles. The system learns faster when it controls budget allocation rather than dividing spend evenly across all ad sets regardless of performance.

Scaling Facebook ads usually depends on finding a few winners and pushing the budget behind them, but a lot of that process is built on trial and error. You end up spending a significant part of the budget just to identify what not to scale.

Extuitive moves part of that work earlier. It simulates how people respond to your creatives before launch, helping you compare variations and focus on the ones more likely to hold performance as spend increases. That means fewer weak ads entering your campaigns and a cleaner path to scaling. If you want to waste less budget on testing and scale with more confidence, run your creatives through Extuitive before increasing spend.

Testing works only when variables are controlled. Change multiple elements simultaneously and the data becomes meaningless. Which element drove the improvement? Which caused the decline? There's no way to know.

The golden rule: test one element at a time. Creative variations in one campaign. Audience segments in another. Never both together.

Underfunded tests produce unreliable data. The minimum viable testing budget depends on the cost per result in the specific account. As a baseline, tests need sufficient budget to generate at least 50-100 conversion events (clicks, add-to-carts, or purchases depending on the optimization goal) before drawing conclusions.

For most accounts, this translates to daily budgets between $20-$100 per ad set during testing. Lower budgets extend the testing window beyond practical limits. Higher budgets accelerate learning but risk excessive spend on underperformers.

Patience matters here. Once tests launch, avoid touching them for 24-48 hours minimum. Meta's algorithm needs time to optimize delivery. Early performance rarely reflects final results.

Creative determines success more than any other variable. The same offer, audience, and budget can produce vastly different results based solely on the ad creative used.

Creative testing should examine:

But again, test these elements separately. Running five completely different ad concepts simultaneously makes it impossible to identify which specific elements resonate.

A more effective approach tests variations of a single concept. Start with one core message and visual direction, then create 3-4 versions changing only headlines. Identify the winner. Next test round changes only the visual while keeping the winning headline. This iterative process builds understanding of what works.

Audience testing follows similar principles. The goal is identifying which customer segments respond most favorably to the offer.

Common audience testing approaches include:

Lookalike audiences deserve special attention. They leverage Meta Pixel data to find new customers resembling existing ones. Start with 1% lookalikes (the closest match to the source audience) and test progressively broader percentages. The expanded reach of 3-5% lookalikes often maintains acceptable performance while accessing larger audience pools.

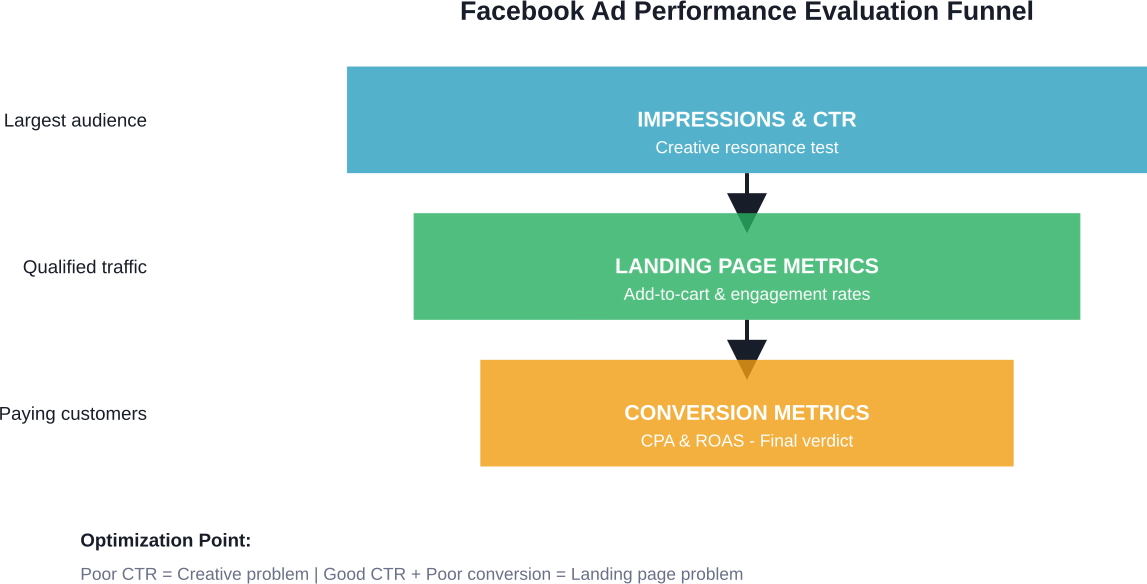

Data without context is noise. Picking winners requires clear key performance indicators established before tests begin.

The specific metrics that matter depend on business objectives. E-commerce brands typically prioritize return on ad spend (ROAS) or cost per purchase. Lead generation businesses focus on cost per lead and lead quality. App-based companies track cost per install and user engagement rates.

Here's what many experts suggest for evaluation timeframes: Tests require at least 48-72 hours minimum before initial performance assessment and 50 conversion events, though 5-7 days is recommended for scaling decisions. Performance during the first 24 hours is almost always misleading as the algorithm explores delivery options.

When evaluating results, consider these benchmarks:

They are:

Don't ignore the funnel metrics that predict conversion performance:

A campaign with excellent CTR but poor conversion rates signals a disconnect between ad messaging and landing page experience. That's a different problem requiring a different solution than creative optimization.

Scaling is where most advertisers stumble. They find a winning campaign generating a 5:1 ROAS at $50 daily spend, immediately increase the budget to $500, and watch ROAS collapse to 1.5:1. What happened?

Dramatic budget changes reset the algorithm's learning. The delivery system that is optimized for $50/day performance doesn't translate directly to $500/day performance. Audience saturation accelerates. Frequency climbs. Performance degrades.

Successful scaling happens gradually using one of several proven methods.

This approach increases spending within existing campaign structures. According to testing data, advertisers can safely increase budgets by at least 20% per day without resetting the learning phase. This means a campaign spending $100 daily can increase to $120, then $144, then $173, and so on.

You can safely increase the budget by at least 20% per day without resetting the learning phase. Monitor performance closely during scaling and adjust the pace based on KPI performance.

Generally speaking, waiting 3-4 days between budget increases gives the algorithm time to adjust and provides statistically relevant performance data at the new spend level.

Rather than increasing budget in existing campaigns, horizontal scaling reaches new audiences with proven creative.

Effective horizontal scaling strategies include:

This controversial method creates identical copies of winning campaigns. Results vary significantly, with some advertisers reporting sustained performance while others see duplicates cannibalize original campaign results.

When testing campaign duplication, create copies with distinct audiences to minimize overlap. Don't simply duplicate the same campaign three times targeting identical audiences. The campaigns will compete against each other in Meta's auction system.

Even winning creative fatigues over time. Ad frequency (average number of times each person sees the ad) climbs, response rates drop, and costs increase.

Maintaining performance during scaling requires continuous creative refresh. This doesn't mean completely new concepts every week. Variations of proven winners often outperform entirely new approaches.

If a specific video ad drives strong results, create new versions with different hooks, different testimonials, or different background music. The core concept remains, but the execution varies enough to combat creative fatigue.

Understanding what works matters. But knowing what breaks campaigns is equally valuable:

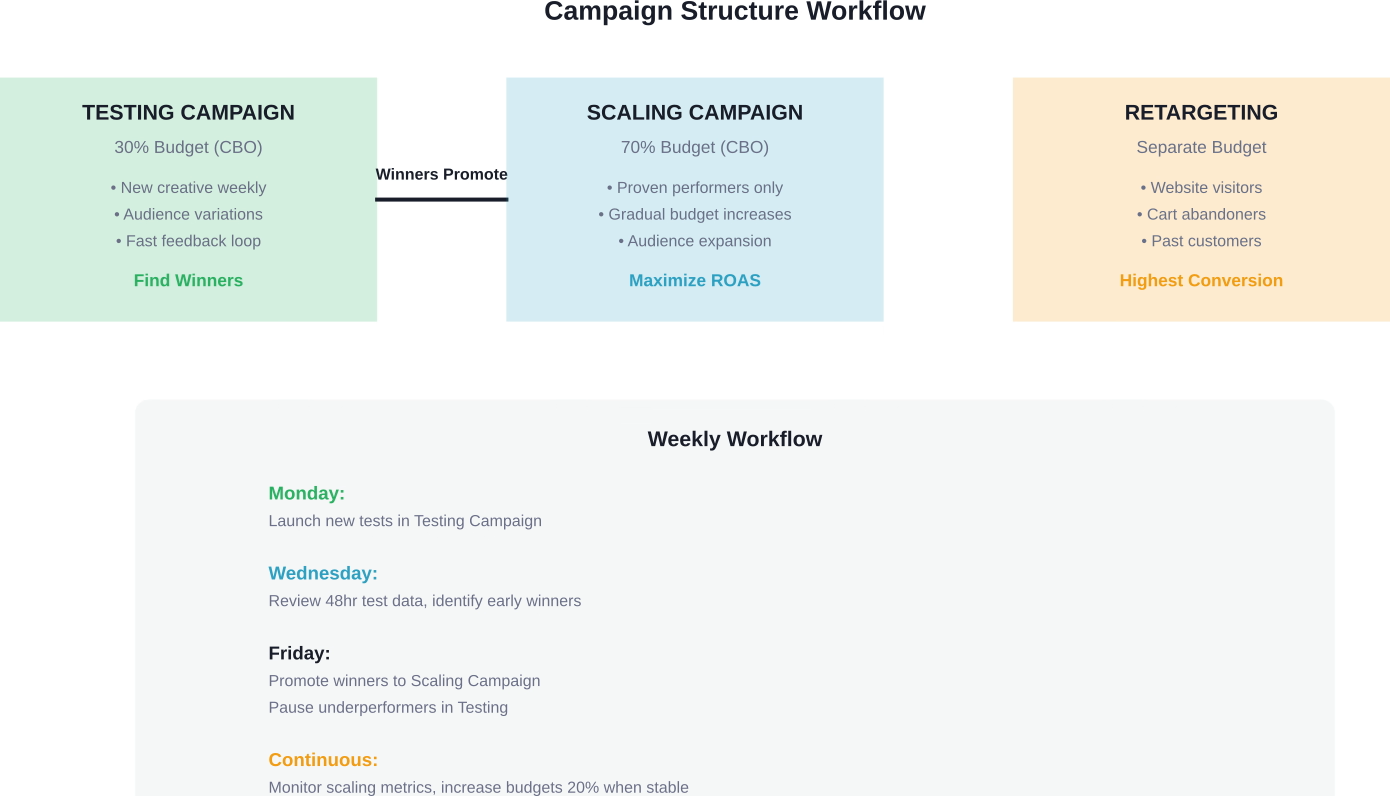

Structure determines workflow efficiency. The right campaign architecture separates testing from scaling while enabling smooth promotion of winners.

Based on data from successful advertisers, simplified structures outperform complex hierarchies in 2026. Here's a proven framework:

This three-campaign structure provides clarity. Testing happens in one place. Scaling happens in another. Retargeting operates independently. No confusion about campaign objectives or performance expectations.

Budget allocation determines results. Too much in testing wastes money on underperformers. Too little prevents discovering new winners before current campaigns decline.

The 70/30 split mentioned earlier provides a starting framework, but flexibility matters. Accounts in growth mode might shift to 60/40, investing more in testing to fuel expansion. Mature accounts with proven performers might move to 80/20, emphasizing scaling over exploration.

Real talk: total budget size affects strategy. An account spending $1,000 monthly approaches testing differently than one spending $50,000 monthly.

For smaller budgets ($1,000-$5,000 monthly), focus testing narrowly. Test one or two new creatives weekly rather than broad audience experimentation. The limited budget can't support parallel tests across multiple variables.

Medium budgets ($5,000-$20,000 monthly) enable more sophisticated testing. Multiple creative variations can run simultaneously. Audience segmentation becomes viable. The testing campaign might contain 5-8 active ad sets.

Larger budgets ($20,000+ monthly) should maintain aggressive testing programs. With substantial scaling budgets at stake, the testing pipeline must continuously produce new winners. These accounts can run 10-15 concurrent tests exploring creative audiences, formats, and markets.

Everything discussed requires data. Meta Pixel provides that data by tracking visitor behavior on websites. The pixel fires events when visitors view pages, add products to carts, initiate checkouts, or complete purchases.

This data powers optimization and lookalike audience creation. Without proper pixel implementation, testing becomes guesswork and scaling becomes impossible.

Critical pixel events to configure:

For lead generation, key events include:

Verify pixel implementation using Meta's Pixel Helper browser extension. Proper tracking is non-negotiable for effective testing and scaling.

Not every campaign deserves continued investment. Knowing when to stop testing an approach saves a significant budget.

Generally speaking, if a test shows poor performance after spending 2-3x the target cost per acquisition without generating results, pause it. A campaign targeting $20 CPA that spends $60 without conversions probably won't improve.

On the flip side, don't pause campaigns too quickly. Those first 24 hours rarely predict final performance. Give tests adequate time and budget before making decisions.

For scaling campaigns, declining performance doesn't always mean pause. First troubleshoot:

Address the root cause rather than reflexively pausing campaigns. Sometimes a creative refresh or budget reduction restores performance better than starting over.

Testing and scaling must happen within legal boundaries. The FTC has specific requirements for advertising disclosures, particularly when working with influencers or making endorsement claims.

According to FTC guidance, material connections between advertisers and endorsers must be clearly disclosed. If an influencer is paid to promote a product or receives free products for review, that relationship must be obvious to consumers. Disclosures should be clear, conspicuous, and placed where consumers will see them before making purchase decisions.

Meta’s advertising policies generally prohibit targeting ads to children under 13, and in many regions (including the EU/UK under GDPR/AADC), ad targeting for minors under 18 is severely restricted.

The FTC imposed a $5 billion penalty on Facebook in July 2019 related to privacy practices, demonstrating the serious consequences of regulatory violations. The settlement also established new compliance requirements for third-party developers and advertisers regarding data access.

When testing ad claims, ensure they can be substantiated. The FTC prohibits deceptive advertising, including unsubstantiated performance claims or misleading testimonials. Test variations of messaging, but keep all claims truthful and supportable.

Testing and scaling don't happen in a vacuum. Market conditions affect performance dramatically.

During high-competition periods (Black Friday, Cyber Monday, holiday shopping season), ad costs increase significantly as advertisers compete for limited attention. Testing during these periods often produces inflated cost data that doesn't reflect normal market conditions.

The better approach tests before peak seasons to identify winners, then scales aggressively during high-demand periods. A campaign proven successful in October scales into November and December when purchase intent peaks.

Post-holiday periods (January-February) typically see reduced competition and lower costs. This creates ideal testing conditions. The budget goes further, allowing more extensive experimentation without excessive spend.

Industry-specific seasonality matters too. Fitness products peak in January (New Year's resolutions) and May-June (summer preparation). Tax preparation services peak January-April. Gardening products peak March-July. Understanding these patterns allows strategic timing of testing and scaling efforts.

Businesses with diverse product catalogs face additional complexity. Should each product get separate campaigns? Can successful creative from one product apply to others?

The answer depends on product relationships. Closely related products often share audience overlap and can use similar creative approaches. A clothing retailer might find that creative winning for dresses also performs well for tops and accessories with minimal modification.

Unrelated products typically need independent testing. A company selling both fitness equipment and office furniture serves different audiences with different pain points. Testing and scaling strategies should be separate.

For large catalogs, prioritize testing on highest-margin or best-selling products. Prove the model with core offerings before expanding to the full catalog.

The Facebook advertising landscape changes constantly. Algorithms update shift delivery patterns. Privacy changes affect tracking capabilities. Competition evolves. What worked perfectly six months ago might underperform today.

That's exactly why continuous testing matters so much. The framework outlined here—dedicated testing budgets, controlled variable testing, gradual scaling, and continuous optimization—remains effective regardless of platform changes.

Start with the fundamentals. Allocate 30% of budget to ongoing testing. Test one variable at a time. Give tests an adequate budget and time before making decisions. Identify winners using clear KPIs. Scale those winners gradually using 20% budget increases or audience expansion.

Most importantly, never stop testing. The moment testing stops is the moment the campaign pipeline begins to dry up. Those winning campaigns currently driving results will eventually decline. When they do, what replaces them?

Advertisers who maintain systematic testing programs always have an answer to that question. They've got three new creative variations launching next week. They're testing two new audience segments. They're experimenting with video formats alongside static images.

Testing isn't the project. Scaling isn't the destination. They're both ongoing processes that compound over time. Each test teaches something about what resonates with the target audience. Each scaling success proves what works at volume. That accumulated knowledge becomes the competitive advantage.

The framework is here. The methods are proven. Implementation is what separates advertisers who struggle with Facebook ads from those who scale them profitably. Start testing, identify what works, scale strategically, and repeat the cycle continuously.