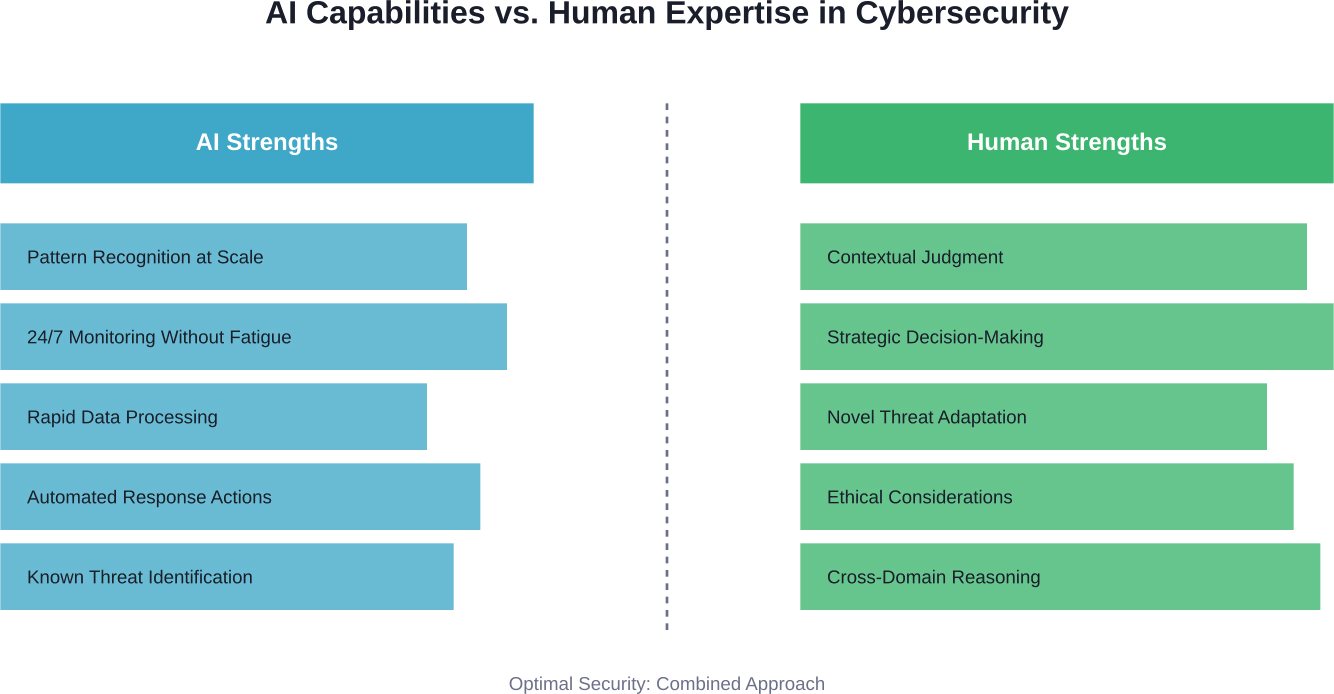

AI will not take over cybersecurity completely but will fundamentally transform how security teams operate. While AI excels at automating threat detection, analyzing massive datasets, and responding to known attack patterns, human expertise remains essential for strategic decision-making, contextual judgment, ethical oversight, and adapting to novel threats that fall outside training data.

The question keeps surfacing in boardrooms and at cybersecurity conferences: will artificial intelligence eventually replace the professionals who defend our digital infrastructure?

It's not an idle concern. AI-powered attacks are already happening. According to SANS Institute research, threat actors now use AI to generate malicious code through "vibe coding," iteratively refining attacks by feeding errors back into AI models. The barriers to entry for attackers have dropped dramatically.

But while AI is transforming both sides of the cybersecurity arms race, the reality is far more nuanced than simple replacement narratives suggest.

AI has already embedded itself deeply into modern security operations. The Cybersecurity and Infrastructure Security Agency (CISA) openly documents how AI tools supplement their cyber defense mission, from spotting network anomalies to drafting public messaging.

These aren't experimental applications. They're production systems handling real threats.

According to Syracuse University's iSchool, 95% of users agree that AI-powered cybersecurity solutions improve the speed and efficiency of prevention, detection, response, and recovery. That's not hype; it's what the people running these tools report.

The generative AI cybersecurity market is expected to grow almost tenfold between 2024 and 2034. Organizations are investing heavily because the technology delivers tangible results in specific domains.

AI demonstrates clear advantages in several security functions:

Real talk: these capabilities are transforming how security teams operate. The benefits are substantial: faster mean time to respond (MTTR), more focused threat hunting, and more proactive defense postures.

Despite rapid advances, several fundamental limitations prevent AI from fully replacing human cybersecurity professionals.

AI systems excel at pattern matching but struggle with context. When an AI flags unusual database access at 3 AM, it doesn't know that the CFO is preparing an emergency board presentation. Human analysts understand organizational context, business priorities, and operational nuances that no training dataset can capture.

David Cass, a cybersecurity instructor at Harvard Extension School and CISO at GSR, highlighted this through consulting experience: companies have lost substantial amounts in under 30 minutes due to attacks that exploited contextual gaps AI systems couldn't understand.
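The 3 AM database access scenario can be sketched in a few lines. This is a minimal illustration, not a production detector: the `AccessEvent` fields, the off-hours window, the z-score threshold, and the `approved_exceptions` set are all assumptions made up for the example. The point is that the statistical check has no idea why the CFO is working late; that knowledge has to be supplied by a person.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class AccessEvent:
    user: str
    hour: int        # hour of day, 0-23
    rows_read: int   # volume of data touched by the query


def is_statistical_outlier(history: list[int], value: int, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations above the historical mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    spread = stdev(history)
    if spread == 0:
        return value > history[0]
    return (value - mean(history)) / spread > threshold


def triage(event: AccessEvent, history: list[int], approved_exceptions: set[str]) -> str:
    """Return 'ok', 'known_exception', or 'needs_review'."""
    off_hours = event.hour < 6 or event.hour >= 22  # hypothetical business-hours window
    unusual = off_hours or is_statistical_outlier(history, event.rows_read)
    if not unusual:
        return "ok"
    # Context the model cannot learn from logs: a human has recorded
    # that this user has a legitimate reason for unusual activity.
    if event.user in approved_exceptions:
        return "known_exception"
    return "needs_review"


history = [100, 120, 110, 90, 105]
late_query = AccessEvent(user="cfo", hour=3, rows_read=5000)
print(triage(late_query, history, approved_exceptions=set()))
print(triage(late_query, history, approved_exceptions={"cfo"}))
```

The same event gets two different outcomes depending only on the human-maintained exception set, which is the contextual gap the paragraph above describes.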

Here's where things get interesting. AI defenses rely on training data — patterns learned from past attacks. But sophisticated threat actors specifically design attacks to evade pattern-based detection.

Congressional testimony on December 17, 2025, documented what was described as the first autonomous AI-powered nation-state attack, carried out by a Chinese Communist Party-sponsored group. This represented something fundamentally new: attacks that evolved faster than traditional detection models could adapt.

According to SANS Institute analysis, Horizon3's NodeZero testing achieved full privilege escalation in about 60 seconds. According to CrowdStrike's 2025 Global Threat Report, the average breakout time has plummeted to 29 minutes, with the fastest recorded breakout occurring in just 14 seconds. AI-powered attacks are compressing these timelines further.

Defending against novel, adaptive threats requires creativity, strategic thinking, and the ability to reason about attack scenarios that don't yet exist in any dataset. That's human territory.

Cybersecurity decisions carry significant ethical, legal, and business implications. Should the security team block a suspicious transaction that might be legitimate? How aggressively should threat hunting operate in privacy-sensitive environments? What level of risk is acceptable for a critical system upgrade?

These questions don't have algorithmic answers. They require judgment informed by organizational values, regulatory requirements, and stakeholder priorities.

CISA's guidance on secure AI integration in operational technology emphasizes this point: deploying AI without proper human oversight can create risks that outweigh the benefits, particularly in environments controlling vital public services.

When AI blocks a transaction or quarantines a file, can it explain why in terms stakeholders understand? Many advanced models operate as black boxes, making decisions based on complex statistical relationships that even their creators struggle to interpret.

Regulatory frameworks increasingly demand explainability. The NIST AI Risk Management Framework emphasizes trustworthiness and transparency as core requirements. Security decisions that affect business operations, individual privacy, or legal compliance need clear justification.

Human experts translate AI outputs into business context, validate recommendations against organizational knowledge, and provide the explainability that compliance and stakeholder management require.
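To make the explainability point concrete, here is a toy sketch of a risk score that can justify itself. It assumes a simple linear model, which is the easy case; the feature names and weights are invented for illustration, and the black-box models discussed above generally need dedicated attribution techniques instead.

```python
def explain_score(weights: dict[str, float], features: dict[str, float]):
    """Return the total risk score and each feature's contribution, largest first."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    total = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return total, ranked


# Hypothetical weights and a hypothetical flagged transaction.
weights = {"new_device": 2.0, "amount_zscore": 0.5, "foreign_ip": 1.5}
transaction = {"new_device": 1.0, "amount_zscore": 3.0, "foreign_ip": 0.0}

score, reasons = explain_score(weights, transaction)
print(f"risk score: {score}")
for name, contribution in reasons:
    print(f"  {name}: {contribution:+.1f}")
```

A per-feature breakdown like this is something an analyst can put in front of a stakeholder or an auditor; a bare score is not.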

Rather than replacement, the emerging model is augmentation. AI handles what it does best — rapid analysis, pattern recognition, continuous monitoring — while humans provide what AI cannot: judgment, creativity, ethical reasoning, and strategic thinking.

This division of labor allows security teams to operate more effectively. AI handles the exhausting, repetitive analysis that would overwhelm human teams. Professionals focus on higher-order tasks that require uniquely human capabilities.

Organizations successfully integrating AI into security operations follow several common patterns:

SANS Institute's research on AI-driven cyber defense emphasizes building "safe harbor" — frameworks that allow AI to operate at machine speed for appropriate tasks while maintaining human oversight where judgment matters.
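One way to picture that split between machine-speed action and human oversight is a routing policy. The sketch below is an assumption-laden illustration, not SANS guidance: the `Action` names, the confidence cutoffs, and the criticality labels are all hypothetical and would be set by each organization's own risk appetite.

```python
from enum import Enum


class Action(Enum):
    AUTO_CONTAIN = "auto_contain"   # AI acts immediately, no human in the loop
    HUMAN_REVIEW = "human_review"   # AI recommends, an analyst decides
    LOG_ONLY = "log_only"           # recorded for later hunting


def route_alert(confidence: float, asset_criticality: str, reversible: bool) -> Action:
    """Decide who handles an alert based on model confidence and blast radius."""
    if confidence < 0.5:
        return Action.LOG_ONLY
    # Automation is allowed only when the model is confident, the asset is
    # low-stakes, and the response can be undone if the model was wrong.
    if confidence >= 0.9 and asset_criticality == "low" and reversible:
        return Action.AUTO_CONTAIN
    return Action.HUMAN_REVIEW


print(route_alert(0.95, "low", reversible=True))
print(route_alert(0.95, "high", reversible=True))
print(route_alert(0.30, "low", reversible=True))
```

The design choice worth noticing is that automation is the narrow exception and human review is the default, so any case the policy did not anticipate lands in front of a person.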

AI won't eliminate cybersecurity jobs, but it's definitely changing what those jobs look like.

Routine tasks are being automated away. Security analysts spend less time manually reviewing logs and more time investigating complex incidents. Architecture roles increasingly require understanding how to design systems that incorporate AI securely.

New specializations are emerging:

The skillset for cybersecurity professionals is expanding. Technical security knowledge remains fundamental, but professionals increasingly need to understand AI capabilities and limitations, work effectively with AI tools, and translate between technical AI outputs and business requirements.

For current and aspiring cybersecurity professionals, several strategies help navigate this transition:

While AI delivers significant benefits, it also introduces risks that organizations must actively manage.

Attackers can manipulate AI systems through adversarial inputs designed to evade detection or by poisoning training data to create blind spots. As defenders deploy AI, attackers develop techniques to exploit AI weaknesses.

The SANS Institute documented how threat actors weaponize AI across the entire attack lifecycle — from reconnaissance through execution. This isn't theoretical; it's happening in production environments.

Teams that depend too heavily on AI risk losing fundamental security skills. When AI handles routine analysis, junior analysts may never develop the pattern-recognition skills that come from hands-on experience.

Organizations need deliberate strategies to maintain core competencies even as AI handles more tasks.

AI security tools often require access to sensitive data for training and operation. This creates privacy risks and regulatory compliance challenges, particularly in jurisdictions with strict data protection requirements.

The NIST AI Risk Management Framework specifically addresses privacy-preserving AI as a critical future direction for cybersecurity applications.

AI systems can generate high volumes of alerts, many of which are false positives. Without proper tuning and human oversight, this leads to alert fatigue, where genuine threats get lost in the noise.

Effective AI integration requires ongoing refinement to balance sensitivity with specificity.
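The sensitivity and specificity trade-off is easy to compute once alerts have been labeled. The sketch below uses the standard definitions; the counts are invented for illustration and do not come from any real deployment.

```python
def alert_quality(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Compute standard detection metrics from a confusion matrix.

    tp: real threats that were alerted on
    fp: benign events that were alerted on
    tn: benign events correctly ignored
    fn: real threats that were missed
    """
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0  # share of threats caught
    specificity = tn / (tn + fp) if (tn + fp) else 0.0  # share of benign events ignored
    precision = tp / (tp + fp) if (tp + fp) else 0.0    # share of alerts that are real
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
    }


# Hypothetical day: 10,000 events, 50 of them genuine threats.
metrics = alert_quality(tp=40, fp=360, tn=9590, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these made-up numbers the detector catches 80% of threats and correctly ignores about 96% of benign events, yet only 1 in 10 alerts is real, because genuine threats are rare. That gap between specificity and precision is where alert fatigue comes from.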

Organizations successfully deploying AI in cybersecurity share several common practices.

So, will AI take over cybersecurity?

The evidence points to transformation rather than takeover. AI is becoming an indispensable part of the security toolkit, handling tasks that would be impossible for human teams alone. But it's not replacing the need for skilled professionals — it's changing what those professionals do.

The most effective security programs combine AI's strengths in speed, scale, and pattern recognition with human capabilities in judgment, creativity, and contextual reasoning. Organizations that view AI as augmentation rather than replacement build more resilient defenses.

For cybersecurity professionals, this means adapting but not abandoning the field. The core mission — protecting systems, data, and people from threats — remains fundamentally human. The tools are evolving, the techniques are advancing, but the need for skilled defenders has never been greater.

And honestly? As attacks become more sophisticated and AI-powered, organizations need human expertise more than ever to navigate the complexity, make strategic decisions, and stay ahead of adversaries who are also leveraging these same technologies.

The relationship between AI and cybersecurity will continue evolving rapidly. Future trends point toward more autonomous responses for low-risk scenarios, privacy-preserving AI techniques that protect sensitive data, and quantum-resistant security preparations as computing paradigms shift.

But through all these changes, one constant remains: cybersecurity is fundamentally about protecting people, organizations, and society from harm. That mission requires not just technological capability but wisdom, ethics, and judgment — qualities that remain distinctly human.

AI is a powerful ally in that mission. It's not a replacement.