Teachers check for AI-generated work using specialized detection tools like GPTZero, Turnitin, and Originality.ai, manual analysis of writing style inconsistencies, and direct conversations with students. However, research shows these AI detectors have high false positive rates (up to 50% in some studies) and can unfairly flag work from non-native English speakers and neurodivergent students, making them unreliable as sole indicators of AI use.

Since ChatGPT's release in November 2022, educators have faced an unprecedented challenge. Students now have access to AI tools that generate human-like essays in seconds. The technology has forced teachers to rapidly redefine assessment practices and rethink how they evaluate authentic student work.

But here's the thing—detecting AI-generated text isn't as straightforward as many assume.

Teachers have developed multiple approaches to identify AI use in student submissions. Some rely on specialized detection software. Others use manual analysis techniques honed over years of grading student work. Many combine both methods while maintaining open dialogues with their students about responsible AI use.

The real question isn't just whether teachers can detect AI. It's whether current detection methods actually work reliably.

Educational institutions have rapidly adopted AI detection software as a first line of defense. School districts from Utah to Ohio to Alabama are spending thousands of dollars on these tools, despite mounting research showing the technology is far from foolproof.

Several platforms have emerged as the most commonly used AI detectors among educators:

GPTZero launched specifically to help educators detect AI-generated writing. Its accuracy was evaluated in a study of 500 writing samples published in the Information Systems Education Journal.

The platform offers a free basic plan with limited features, plus premium plans whose pricing is listed on its official website. According to the platform, more than 380,000 educators use GPTZero.

GPTZero uses sentence-by-sentence analysis in its Deep Analysis feature. This allows teachers to identify specific portions of text that may be AI-generated within mixed submissions—a common scenario as students blend their own writing with AI-generated content.
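The general idea of sentence-level flagging can be sketched in a few lines of Python. This is not GPTZero's method or API: the per-sentence scores below are hypothetical placeholders for whatever a detector would return, and `split_sentences`, `flag_sentences`, and the 0.8 threshold are assumptions made purely for illustration.

```python
import re

def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p.strip() for p in parts if p.strip()]

def flag_sentences(sentences, scores, threshold=0.8):
    """Return (sentence, score) pairs whose hypothetical AI-likelihood
    score is at or above the threshold."""
    if len(sentences) != len(scores):
        raise ValueError("need exactly one score per sentence")
    return [(s, p) for s, p in zip(sentences, scores) if p >= threshold]

essay = (
    "I visited my grandmother last summer. "
    "Her garden was overgrown. "
    "Gardens are vital ecosystems that foster biodiversity."
)
sentences = split_sentences(essay)

# Hypothetical scores; a real detector would compute these.
scores = [0.10, 0.25, 0.93]

flagged = flag_sentences(sentences, scores)
share_flagged = len(flagged) / len(sentences)

for sentence, score in flagged:
    print(f"{score:.2f}  {sentence}")
print(f"{share_flagged:.0%} of sentences flagged")
```

Reporting flagged sentences individually, rather than a single document score, is what lets a reviewer see that only part of a mixed submission was marked. The threshold is still a judgment call: lowering it flags more sentences and raises the risk of false positives.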

Turnitin, already widely used for plagiarism detection in schools, added AI detection capabilities to its platform. The company initially claimed a false positive rate of less than 1% at the sentence level.

A Washington Post investigation produced different results. Its testing found a much higher false positive rate of 50%, though with a smaller sample size. According to the Post's reporting, Turnitin now measures accuracy on a sentence-by-sentence level, a narrower measure than its original claims.

The tool integrates directly into learning management systems many schools already use. Teachers can review AI detection scores alongside plagiarism reports in a single interface.

Originality.ai markets itself as having demonstrated exceptional performance across multiple published studies. According to the platform's own reporting, it achieved the highest accuracy rates in a meta-analysis of eight third-party studies.

The tool claims 99%+ accuracy for its Academic Model designed specifically for educators, with less than 1% false positive rate. Originality.ai offers pricing on a credit system at $0.01 per 100 words scanned, with the ability to add unlimited educators per account.
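To put the per-word rate in context, here is a rough cost estimate in Python. It uses the $0.01 per 100 words figure quoted above; the class size, essay length, and the assumption that billing is strictly linear (no rounding up to whole credits, no minimum charge) are hypothetical.

```python
from decimal import Decimal

PRICE_PER_100_WORDS = Decimal("0.01")  # rate quoted in the article

def scan_cost(word_count):
    """Dollar cost of scanning word_count words, assuming linear billing
    with no rounding to whole credits (an assumption, not vendor policy)."""
    return PRICE_PER_100_WORDS * Decimal(word_count) / Decimal(100)

# Hypothetical workload: 150 students, one 1,500-word essay each.
STUDENTS = 150
WORDS_PER_ESSAY = 1500

per_essay = scan_cost(WORDS_PER_ESSAY)
per_class = scan_cost(STUDENTS * WORDS_PER_ESSAY)

print(f"Per essay: ${per_essay}")
print(f"Per class: ${per_class}")
```

Under these assumptions a single essay costs about 15 cents to scan and the whole class set about $22.50. `Decimal` is used instead of floats so that currency arithmetic stays exact.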

In a study evaluating AI detectors on the Human and AI Text Database (AH&AITD), GPTZero achieved a 63.77% accuracy rate while Originality.ai achieved higher accuracy.

Originality.ai detects content from multiple AI models including GPT-3, GPT-3.5, GPT-4, and others. It also includes plagiarism detection features in the same platform.

Long before AI detection software existed, teachers developed skills to recognize when student work didn't match expected patterns. These manual methods remain crucial, especially given the reliability issues with automated detection.

Experienced educators know their students' writing capabilities. When a submission shows dramatic departures from a student's established voice, vocabulary, or sentence structure, it raises red flags.

Teachers look for sudden shifts in voice, vocabulary, and sentence structure relative to a student's earlier work.

AI-generated text often has a particular polish that student writing typically lacks. It tends to be grammatically perfect, well-organized, and free of the minor errors that characterize authentic student work.

Teachers ask students follow-up questions about their submissions. If a student submitted AI-generated work, they often struggle to explain their reasoning, defend their thesis, or discuss specific points in depth.

This conversational approach serves multiple purposes. It helps teachers gauge authentic understanding while creating opportunities to discuss academic integrity and proper AI use.

Some educators have students write portions of assignments in class or present their work verbally. These real-time assessments make it significantly harder to rely solely on AI-generated content.

AI models sometimes generate plausible-sounding but non-existent citations. Teachers verify sources to ensure they're real and actually support the claims made in student papers.

When citations check out but seem oddly formatted or inconsistent with proper academic style, it can indicate AI involvement. The technology has improved at generating realistic references, but errors still occur frequently enough to serve as detection signals.

Here's where things get problematic. Research from multiple academic institutions reveals that AI detection tools suffer from fundamental reliability issues that make them questionable for high-stakes academic decisions.

According to research indexed in ERIC (ED673127), AI detection programs return high rates of false detection, incorrectly flagging human-written work as AI-generated. This increases the likelihood that students will be unfairly penalized academically.

False positive rates vary widely across tools. Turnitin initially claimed a false positive rate below 1%, but according to law library research guides, independent testing has measured it at 50%.
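The practical impact of a false positive rate depends on how many essays are actually AI-written. The following Python sketch applies Bayes' rule to estimate the chance that a flagged essay really is AI-generated. The 10% prevalence and 90% detection rate are hypothetical inputs chosen for illustration; only the 1% and 50% false positive rates come from the figures discussed here.

```python
def prob_ai_given_flag(prevalence, true_positive_rate, false_positive_rate):
    """P(essay is AI-written | detector flagged it), via Bayes' rule."""
    flagged_ai = true_positive_rate * prevalence
    flagged_human = false_positive_rate * (1 - prevalence)
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("detector never flags anything with these inputs")
    return flagged_ai / total_flagged

# Hypothetical inputs: 10% of essays are AI-written, detector catches 90% of them.
PREVALENCE = 0.10
TRUE_POSITIVE_RATE = 0.90

for fpr in (0.01, 0.50):
    p = prob_ai_given_flag(PREVALENCE, TRUE_POSITIVE_RATE, fpr)
    print(f"False positive rate {fpr:.0%}: P(AI | flagged) = {p:.1%}")
```

With these assumed inputs, a flag from a detector with a 1% false positive rate is right about 91% of the time, while a flag from one with a 50% false positive rate is right only about 17% of the time. In the second case most flagged essays were written by the students themselves.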

Multiple studies demonstrate that AI detection tools are biased against non-native speakers and students who are underrepresented in higher education. According to information compiled by Brandeis University, this bias represents a serious equity concern.

Recent studies report false positive rates of up to 70% for students who write English as a second language. The technology mistakes certain linguistic patterns common among second-language learners for AI characteristics.

Recent studies also indicate that neurodivergent students face higher false positive rates. Their writing patterns may differ from neurotypical students in ways that trigger detection algorithms.

As students become more sophisticated about AI use, they've learned to modify AI-generated text to evade detection. Simple techniques like asking AI to write in a more casual style or manually editing generated content can fool detection algorithms.

Research by Fleckenstein et al. (2024), titled "Do teachers spot AI? Evaluating the detectability of AI-generated texts among student essays," found that experienced teachers could not correctly identify low-quality texts but were more successful with high-quality texts.

The cat-and-mouse game between AI generation and detection technology continues to evolve. So-called "AI bypassers" or "humanizers" have emerged—tools specifically designed to modify AI-generated text to avoid detection.

Leading universities and educational organizations have issued guidance strongly cautioning against relying solely on AI detection software for academic integrity decisions.

The University of Iowa published a position statement titled "The case against AI detectors" recommending that instructors refrain from using these tools. The institution notes that technological policing has the potential to cause harm in educational settings.

MIT Sloan School of Management states directly: "AI Detectors Don't Work. Here's What to Do Instead." Their guidance emphasizes transparent policies, open discussions with students, and assignments that engage intrinsic motivation rather than detection technology.

Brandeis University compiled research showing that AI detection tools are unreliable and can be biased. Their AI Steering Council recommends understanding these limitations before implementing detection tools.

Researchers quoted in The Washington Post's reporting, including Soheil Feizi, have raised concerns that detection may be fundamentally impossible at the accuracy levels required, with a false positive rate of 0.01% proposed as a baseline.
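A short calculation shows why such a strict baseline matters at scale. The Python sketch below compares the expected number of wrongly flagged essays under the three false positive rates mentioned in this article; the pool of 10,000 human-written essays is a hypothetical figure.

```python
def expected_false_flags(human_essays, false_positive_rate):
    """Expected number of human-written essays wrongly flagged as AI."""
    return human_essays * false_positive_rate

HUMAN_ESSAYS = 10_000  # hypothetical number of genuinely human-written essays

scenarios = [
    ("0.01% proposed baseline", 0.0001),
    ("1% vendor claim", 0.01),
    ("50% independent test", 0.50),
]

for label, fpr in scenarios:
    wrongly_flagged = expected_false_flags(HUMAN_ESSAYS, fpr)
    print(f"{label}: about {wrongly_flagged:,.0f} essays wrongly flagged")
```

Under this assumption, the proposed baseline would wrongly flag about 1 essay in 10,000, the vendor's claimed rate about 100, and the independently measured rate about 5,000. Each of those is a student facing an accusation for work they wrote themselves.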

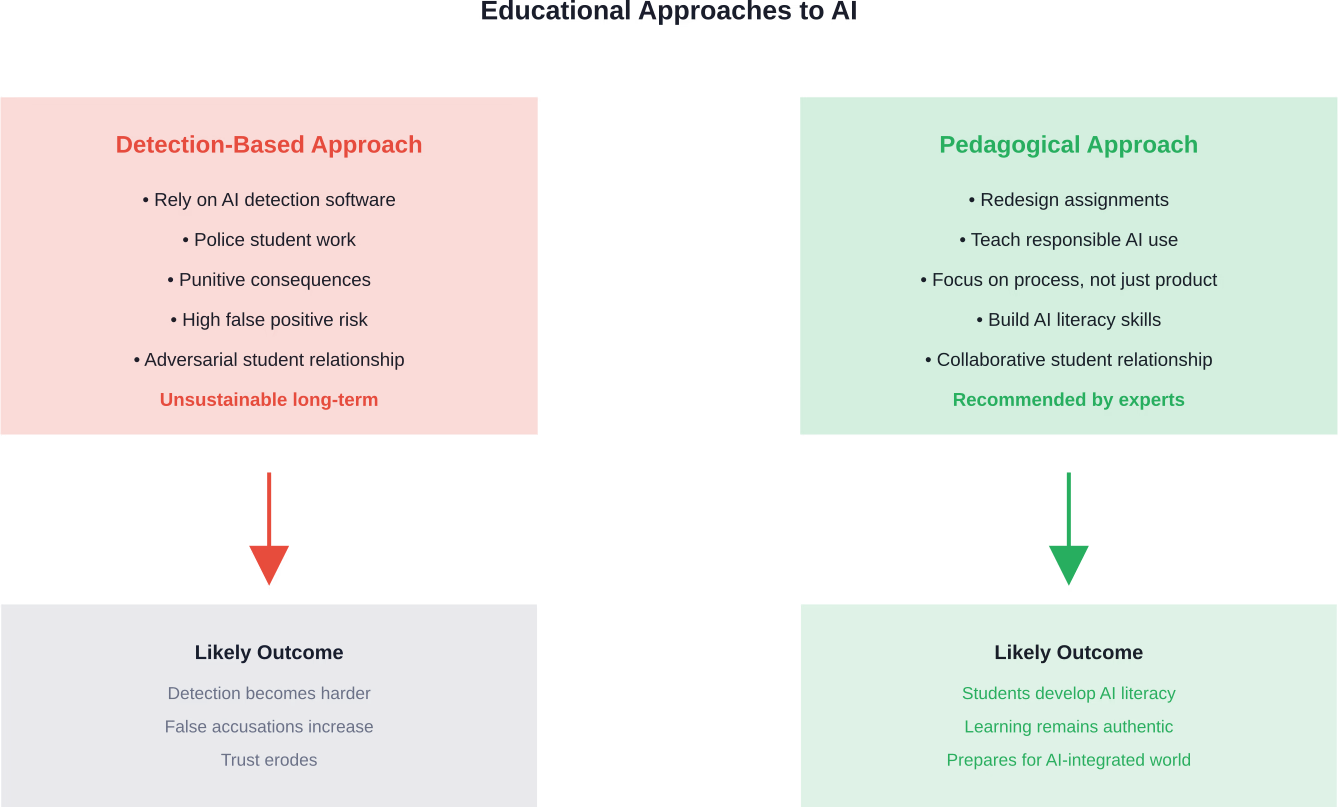

Rather than relying on unreliable detection technology, educators are developing pedagogical approaches that make inappropriate AI use less appealing or effective.

Teachers are creating assignments that AI tools struggle to complete effectively. Assignments that require personal reflection, specific course context, or original research based on primary sources are harder to outsource to AI.

Evaluating the writing process rather than just the final product makes AI use more visible. Teachers who require brainstorming documents, outlines, rough drafts, and revision histories can better track authentic student work.

When students must demonstrate their thinking at multiple stages, simply submitting an AI-generated final product becomes insufficient. The process documentation itself becomes evidence of learning.

Progressive educators are teaching students how to use AI tools responsibly rather than banning them outright. This includes discussing the tools' limitations and ethical implications.

By treating AI as a tool that requires skill to use effectively and ethically, teachers prepare students for a world where these technologies are ubiquitous.

Open conversations about AI create classroom cultures where students feel comfortable discussing their use of technology. When teachers clearly communicate expectations and explain the pedagogical reasons behind assignments, students are more likely to engage authentically.

Many students don't fully understand what constitutes appropriate AI use. Clear policies combined with genuine dialogue help establish shared expectations rather than an adversarial dynamic.

The emergence of AI writing tools has fundamentally altered discussions about academic integrity. Traditional definitions of plagiarism and cheating don't map neatly onto AI assistance.

Is using ChatGPT to generate an outline different from using it to write full paragraphs? What about asking AI to improve grammar in student-written text? These questions don't have universal answers—institutions and individual instructors are establishing their own policies.

Some schools have implemented honor codes specifically addressing AI use. Others have integrated AI literacy into their curriculum. A few have banned AI tools entirely, though enforcement remains challenging.

The technology continues evolving faster than educational policy. Tools that didn't exist when syllabi were written launch mid-semester. Detection methods that seemed promising become obsolete within months.

For students wondering whether teachers can detect AI use, here's the reality:

Teachers have multiple methods for identifying AI-generated work, though none are foolproof. Detection software exists but suffers from serious accuracy and bias problems. Manual analysis by experienced educators often proves more reliable.

Getting caught using AI inappropriately can result in serious academic consequences—failed assignments, course failures, or disciplinary action. But the bigger risk is missing genuine learning opportunities.

Most teachers aren't trying to catch students in a trap. They want to help develop real skills that AI tools can't replace—critical thinking, analysis, synthesis, and authentic voice.

When policies allow appropriate AI use, take advantage of it transparently. When they don't, understand that the restrictions exist to protect learning, not to make life harder.

The arms race between AI generation and AI detection likely won't end well for detection. As language models improve, their output becomes harder to distinguish from human writing, and detection becomes increasingly difficult.

Education will need to adapt by focusing on assignments and assessments that emphasize skills AI can't replicate—original thinking, personal insight, creative synthesis, and deep understanding of context.

Some educators envision a future where AI literacy becomes as fundamental as digital literacy. Students would learn to use AI tools effectively while understanding their limitations and ethical implications.

The technology isn't going away. The question is whether education systems will adapt pedagogically or continue attempting to police usage through unreliable technological means.

Teachers check for AI using a combination of detection software, manual analysis, and direct conversation with students. But the reliability of these methods varies dramatically.

The evidence is clear: AI detection tools suffer from accuracy problems and bias issues that make them unsuitable as the sole basis for academic integrity decisions. Multiple leading academic institutions recommend against relying exclusively on technological detection.

The more effective approach involves pedagogical adaptation. Assignments designed to emphasize personal reflection, specific course context, and process documentation are inherently more resistant to inappropriate AI use. Teaching students to use AI responsibly and developing AI literacy prepares them for a world where these tools are ubiquitous.

The fundamental question isn't whether teachers can detect AI. It's whether education will evolve to focus on skills that remain valuable even when AI can generate decent essays—critical thinking, original insight, ethical reasoning, and authentic understanding.

For educators struggling with these issues, the recommendation from experts is consistent: transparent policies, open dialogue with students, thoughtful assignment design, and a focus on learning rather than policing. For students, the message is equally clear: shortcuts undermine learning, and the risks—both of getting caught and of missing educational opportunities—far outweigh any temporary convenience.

The AI detection conversation will continue evolving. But one thing remains certain—technology alone won't solve the challenge of maintaining academic integrity in the age of generative AI.