Colleges are increasingly using AI detection tools like Turnitin to check application essays for AI-generated content, though detection remains imperfect and produces false positives. About 70% of top U.S. universities have no formal AI policy, while 7% prohibit AI use completely and 27% allow restricted use for brainstorming or editing. Admissions officers rely on multiple methods, including linguistic analysis, cross-referencing with other materials, and interviews, to verify authenticity.

The short answer? Yes, many colleges are checking for AI use in application essays.

But here's where it gets complicated. The technology isn't perfect, the policies aren't uniform, and the entire landscape is shifting faster than admissions offices can keep up.

With tools like ChatGPT and other generative AI platforms becoming mainstream, college admissions teams face an unprecedented challenge. They need to verify that the essays crossing their desks genuinely represent the students applying—not the output of an algorithm.

So what's really happening behind those admissions office doors? Let's break down the detection methods, the policies shaping this new era, and what it all means for applicants navigating the college process.

The University of North Carolina's admissions office says it uses AI programs to provide data points about students' Common Application essays. These data points include writing style and grammar analysis, allowing admissions teams to focus on content while flagging potential inconsistencies.

Northwestern University updated its guidance in September 2025, stating that it expects applications to reflect students' own thoughts, experiences, values, and perspectives. The university acknowledges that AI can be powerful when used appropriately, particularly for students in settings where college-prep resources are limited.

But the policy landscape remains fractured. A recent review of the top 30 U.S. universities found that about 70% of schools have no formal AI policy, 7% prohibit AI use completely, and roughly 27% allow restricted use, usually for brainstorming or editing but not drafting. (The shares are approximate and do not sum to exactly 100%.)

This patchwork reflects both the speed of technological change and genuine uncertainty about how to balance accessibility with authenticity.

Admissions offices aren't relying on a single method. They're combining multiple detection approaches to build a fuller picture.

Turnitin released its AI writing detection feature in April 2023, and it's become a primary tool for many institutions. The company claims 98% confidence in controlled lab environments, though it acknowledges detectors have significant accuracy limitations, with margins of error up to ±15 percentage points.

That margin matters. A score of 50 could represent anywhere from 35 to 65 percent AI-generated content.
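The arithmetic behind that range can be sketched in a few lines of Python. This is only an illustration: the helper name `score_range` and the clamping to the 0-100 scale are assumptions made for the example, not part of Turnitin's tooling, and the default margin is simply the ±15 figure cited above.

```python
def score_range(reported_score: float, margin: float = 15.0) -> tuple[float, float]:
    """Return the (low, high) band implied by a detector score and a margin of error.

    Hypothetical helper for illustration; it is not part of any detection product.
    Both values are percentages, so the band is clamped to the 0-100 range.
    """
    low = max(0.0, reported_score - margin)
    high = min(100.0, reported_score + margin)
    return low, high


print(score_range(50))  # (35.0, 65.0)
print(score_range(90))  # (75.0, 100.0)
```

Read this way, a single reported number is better treated as a band than as a precise measurement.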

Turnitin's own guidance is clear: the tool provides information, not an indictment. According to their website, the AI detection model may not always be accurate and can misidentify both human and AI-generated text. They explicitly state it should not be used as the sole basis for adverse actions against a student.

Some universities including Vanderbilt have disabled AI detection tools due to reliability concerns. After several months of testing, Vanderbilt's Center for Teaching concluded the tool raised too many concerns about fairness and accuracy.

The University of Kansas Center for Teaching Excellence warns that Turnitin's AI detector appears to have a less than 1% false positive rate but will likely miss up to 15% of text actually written by AI.
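To get a feel for what those rates could mean at scale, here is a rough back-of-the-envelope sketch in Python. The pool of 10,000 essays and the 10% share of AI-written essays are made-up inputs chosen purely for illustration. The 1% and 15% figures are the upper bounds quoted above, treated here as per-essay rates, which is a simplification.

```python
def expected_errors(total_essays: int, ai_share: float,
                    false_positive_rate: float, miss_rate: float) -> dict[str, float]:
    """Estimate how many essays a detector would misjudge.

    All inputs are assumptions supplied by the caller; rates are fractions (0.01 = 1%).
    """
    ai_essays = total_essays * ai_share
    human_essays = total_essays - ai_essays
    return {
        "human_flagged": human_essays * false_positive_rate,  # false positives
        "ai_missed": ai_essays * miss_rate,                    # false negatives
    }


# Hypothetical pool: 10,000 essays, 10% of them AI-written.
result = expected_errors(10_000, 0.10, false_positive_rate=0.01, miss_rate=0.15)
print(result)
```

Under these assumed inputs, roughly 90 human-written essays would be flagged and about 150 AI-written essays would pass unnoticed, so even a small false positive rate touches real applicants once the pool is large.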

Admissions officers are trained to spot certain patterns that suggest AI generation.

Research from Stanford shows AI detectors can be biased against non-native English speakers. This puts international students and those from under-resourced educational backgrounds at greater risk of false accusations.

Admissions teams compare essays against other application components. If an essay demonstrates sophisticated writing but short-answer responses seem basic, that inconsistency raises flags.

They also review teacher recommendations, which often comment on a student's writing ability. A lukewarm assessment of writing skills paired with a polished essay creates obvious questions.

According to academic integrity researchers, document metadata can reveal authorship patterns. Word documents contain information about when files were created, by whom, and which software was used.

Although metadata analysis is not typically used by admissions offices, some institutions have explored it for flagged applications. Patterns showing documents created by unfamiliar users or in unexpected time zones can indicate outside assistance.
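For readers curious what this metadata looks like, here is a minimal sketch using only Python's standard library. A `.docx` file is a ZIP package, and author and timestamp fields normally live in its `docProps/core.xml` part. The function name `read_core_properties` is hypothetical, and nothing here reflects how any admissions office actually inspects files.

```python
import io
import zipfile
import xml.etree.ElementTree as ET

# Namespaces used by the core-properties part of an Office Open XML package.
NS = {
    "cp": "http://schemas.openxmlformats.org/package/2006/metadata/core-properties",
    "dc": "http://purl.org/dc/elements/1.1/",
    "dcterms": "http://purl.org/dc/terms/",
}


def read_core_properties(docx_file) -> dict:
    """Read author and timestamp fields from a .docx file (path or file-like object).

    Hypothetical helper for illustration. Fields missing from the document are
    returned as None; a package without docProps/core.xml raises KeyError.
    """
    with zipfile.ZipFile(docx_file) as package:
        root = ET.fromstring(package.read("docProps/core.xml"))

    def text_of(tag: str):
        element = root.find(tag, NS)
        return element.text if element is not None else None

    return {
        "creator": text_of("dc:creator"),
        "last_modified_by": text_of("cp:lastModifiedBy"),
        "created": text_of("dcterms:created"),
        "modified": text_of("dcterms:modified"),
    }
```

Calling `read_core_properties("essay.docx")`, where the filename is a placeholder, would return a dictionary of those four fields. The name of the generating application is stored separately, in `docProps/app.xml`, which this sketch does not read. These fields are easy to edit or strip, so they are weak evidence on their own.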

The lack of standardization creates confusion for applicants. Here's what different institutions are saying:

Northwestern explicitly acknowledges AI can help students with limited resources, suggesting they view the technology through an equity lens. Other schools remain silent, leaving applicants to guess what's acceptable.

This uncertainty creates anxiety. Some students express concerns about essays being flagged as AI-generated when they believe they wrote authentically.

Look, the technology simply isn't there yet.

When Vanderbilt disabled Turnitin's AI detection in 2023, they cited concerns about false positives and the tool's potential to unfairly impact certain student populations. The University of Kansas echoes this caution, recommending instructors never rely solely on detection scores.

Purdue University notes in their January 2024 guidance that while Turnitin aims for high accuracy, the system will inevitably miss some AI-generated text and occasionally flag human writing incorrectly.

Research noted in the academic integrity literature suggests that detection systems may be biased against non-native English speakers. This puts international students at particular risk.

That's a serious equity issue. If detection tools systematically flag international students or those from less privileged educational backgrounds, they become instruments of discrimination rather than integrity enforcement.

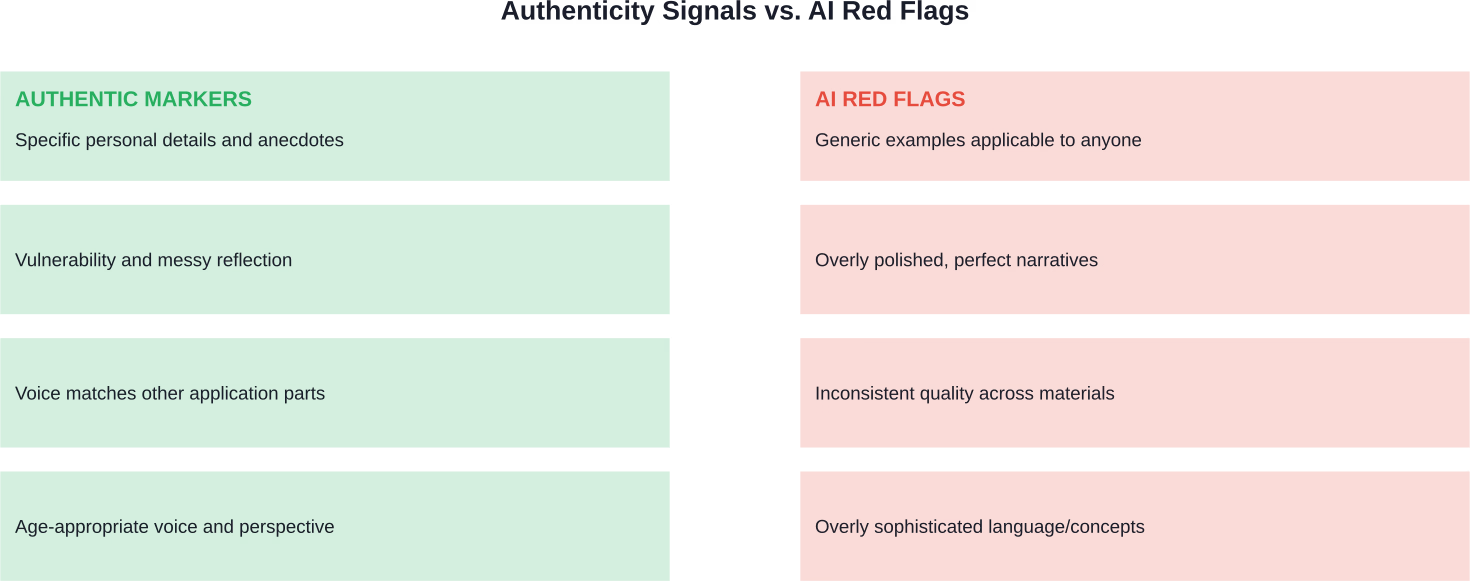

Beyond detection tools, admissions officers rely on their experience reading thousands of essays. They're looking for authenticity markers that AI struggles to replicate.

Instead of generic statements like "I learned leadership through basketball," authentic essays include specific moments, conversations, sensory details, and quirky observations that feel genuinely lived.

AI tends to produce sanitized, universally positive narratives. Human essays often include messiness—moments of doubt, failure, or confusion followed by genuine reflection.

The essay should sound like it came from the same person who wrote the short answers, filled out activity descriptions, and earned feedback from teachers. Voice consistency across the application matters tremendously.

Seventeen-year-olds don't typically write with the philosophical depth of graduate students. Essays that sound too polished or mature relative to other application materials stand out.

What happens if an admissions office determines an essay was AI-generated?

College Board's rules for AP Exams and the SAT offer a reference point for how integrity violations are handled. They warn of serious consequences, including score cancellation and a ban from future exams.

For college applications, consequences vary from school to school.

But here's the thing—many schools don't have clear enforcement procedures yet. The uncertainty cuts both ways. Some students using AI might slip through, while others writing authentically might face unfair scrutiny.

The best defense against AI detection concerns is writing genuinely personal essays. Here's how.

Before any structure or editing, write freely about your experiences. Don't worry about grammar or organization. Just get your thoughts down in your natural voice.

Anchor your claims in concrete moments: for example, a teammate getting injured at a crucial moment, the quick decision you had to make under pressure, and the hesitation that followed. Specificity is the enemy of AI-generated blandness.

Don't just describe what happened. Show how you processed it. What did you misunderstand initially? What surprised you? What do you still wonder about?

If a sentence doesn't sound like something you would actually say, revise it. Essays should sound like slightly more polished versions of natural speech, not like corporate memos.

Teachers, counselors, and trusted adults can help strengthen an essay while keeping your authentic voice intact. This differs from having AI rewrite content entirely.

Here's where it gets philosophically tricky.

Academic integrity literature indicates that students encounter unclear expectations about AI use across different institutional contexts. Some professors encourage AI for brainstorming; others prohibit it entirely.

Northwestern acknowledges AI can help students without access to expensive college counselors or prep resources. Is using AI for grammar checking different from using Grammarly? What about using it to generate ideas you then completely rewrite in your own words?

These aren't simple questions. But admissions offices are clear on one point: the final essay must represent the student's own thinking, experiences, and voice.

Using AI to generate the entire essay violates that standard. Using it to check grammar or brainstorm ideas probably doesn't—though check specific school policies.

Researchers at CU Boulder and the University of Pennsylvania published a study in October 2023 on AI tools that identify personal traits like leadership and perseverance in essays. The study's lead researcher, Sidney D'Mello, emphasized that AI should never replace human admissions officers but could help identify promising students.

That research team designed their tools to avoid racial or gender bias, though they found female writers were still slightly disadvantaged in some metrics.

So we're heading toward a future where AI might be used both to generate essays and to evaluate them. The irony isn't lost on anyone.

Academic institutions are grappling with "Teaching and Learning in the Age of Artificial Intelligence," as Syracuse calls it. The International Center for Academic Integrity emphasizes that enforcement alone won't solve the problem: education and clear expectations matter more.

Expect policies to evolve rapidly. Schools will likely move toward clearer guidelines, better training for admissions officers, and more sophisticated detection methods that account for equity concerns.

Yes, colleges check for AI in application essays. But the real story is more nuanced than a simple yes or no.

Detection technology exists but remains imperfect. Policies vary wildly across institutions. And the entire system is evolving faster than anyone can track.

The safest path forward? Write essays that genuinely represent who you are. Include specific details only you could know. Let your actual voice come through. Show vulnerability and real reflection.

Those essays won't just avoid detection problems—they'll be more compelling to read. Admissions officers spend their careers evaluating authenticity. They can tell the difference between a formulaic AI-generated essay and one that reveals a real person.

Don't risk your college future on technology that promises to make the process easier. The application essay is your chance to show colleges who you are beyond grades and test scores. Make it count by making it genuinely yours.