Facebook Ads Creative Testing Strategy 2026 Guide

Master Facebook Ads creative testing in 2026. Learn proven strategies, frameworks, and Meta's latest creative-first approach to lower CPL and scale campaigns.

A Meta ads creative testing framework is a systematic approach to testing, analyzing, and scaling ad variations to improve campaign performance while controlling costs. The most effective frameworks prioritize strategic variation testing over volume, use structured methodologies like the 3-3-3 approach (testing 3 concepts, 3 formats, 3 variations), and establish clear metrics for evaluating winners before scaling.

Creative testing on Meta isn't about making more ads. It's about making the right variations.

Every week, advertisers audit accounts spending $50 per day and $5,000 per day. Same pattern emerges: campaigns drowning in untested creatives with no clear hypothesis behind them. The algorithm can't even gather enough data to test that many variations meaningfully.

But here's the thing—Meta's advertising ecosystem evolved dramatically in recent years. With the rollout of Andromeda in mid-2025, Meta's first-step algorithm within its advertising recommendation pipeline changed the game. This update coincided with rapid adoption of generative creative tools and resulted in a massive increase in ad volume the platform processes.

The question isn't whether to test creatives. It's how to do it without burning through budget or overwhelming the learning phase.

Meta's algorithm processes exponentially more ads than just two years ago. Meta's engineering blog states that Andromeda enables a meaningful increase of model capacity (10,000x) for enhanced personalization in the ads retrieval stage.

That's not a typo. Ten thousand times more ads.

In this environment, standing out requires precision. AI-Powered Creative Automation tools now generate tailored ad variants—images, headlines, CTAs—based on user context, triggering up to 40% faster campaign iteration. Dynamic creative optimization continuously adapts images, headlines, and CTAs to match individual user preferences.

The challenge? Most advertisers approach testing backward. They create dozens of variations without clear hypotheses, then wonder why performance plateaus.

Before a campaign launches, the first real decision is which creative is worth putting budget behind. Extuitive is built for that step. It predicts likely ad performance before launch using AI models validated against live campaign results. For teams weighing different ad options, it gives a clearer way to compare creative before anything goes live.

Use Extuitive to:

👉 Book a demo with Extuitive to see how it predicts ad performance before launch.

Before diving into specific frameworks, understand what separates effective testing from random experimentation.

Every test should answer a specific question. Not "which ad performs better" but "does emphasizing product benefits outperform lifestyle messaging for this audience?"

Random changes produce random results. Strategic variations produce insights that compound over time.

Here's what nobody tells you: if daily budget sits at $50, $100, or even $200, there's no need for 50 creatives. The algorithm won't see enough data to test that many meaningfully.

For smaller budgets, focus on quality over quantity. Three well-designed variations with clear hypotheses will outperform twenty random attempts.

What defines a winner? Cost per trial 20% below baseline? ROAS above 3.5x? Conversion rate improvement of 15%?

Define success criteria upfront. Otherwise, analysis becomes cherry-picking favorable metrics after the fact.

One of the most effective frameworks for Meta creative testing follows the 3-3-3 methodology: test 3 concepts, 3 formats, and 3 variations.

This structured approach prevents overwhelm while ensuring comprehensive coverage of creative dimensions.

Start with three distinct messaging angles. Not three versions of the same message, but three genuinely different concepts.

For a fitness app, that might be: transformation stories, expert credibility, or community belonging. Each concept answers "why should someone care" differently.

Test how each concept performs across different formats: static images, short-form video, and carousel ads.

Format impacts delivery and engagement dramatically. A concept that flops as a static image might crush as a 15-second video.

Within winning concept-format combinations, test three tactical variations. Different headlines, calls-to-action, or visual treatments.

This layered approach builds knowledge systematically. First identify winning concepts. Then optimize format. Finally, refine execution.

Campaign structure determines whether test results are meaningful or muddled.

Create dedicated ad groups for testing, isolated from proven performers running in business-as-usual (BAU) ad groups.

Why? Mixing untested creatives with winners pollutes data. The algorithm splits delivery unpredictably, making it impossible to evaluate new variations fairly.

A clean structure looks like this:

Allocate testing budget proportionally. Smaller accounts with $100-200 daily budgets shouldn't spread resources across ten different test groups.

For accounts under $500 daily, two testing groups plus one BAU group works well. Above $1,000 daily, expand to three or four testing groups with different hypotheses.

Establish clear criteria for promoting creatives from testing to BAU. A practical approach monitors cost per trial or cost per acquisition.

For example: check testing ad groups and monitor cost per trial. If CPT was 20% above baseline during the last 2 days, pause that winner and put a new winner from the testing ad groups. This creates a continuous cycle where testing feeds proven performers into rotation while retiring fatigued creatives.

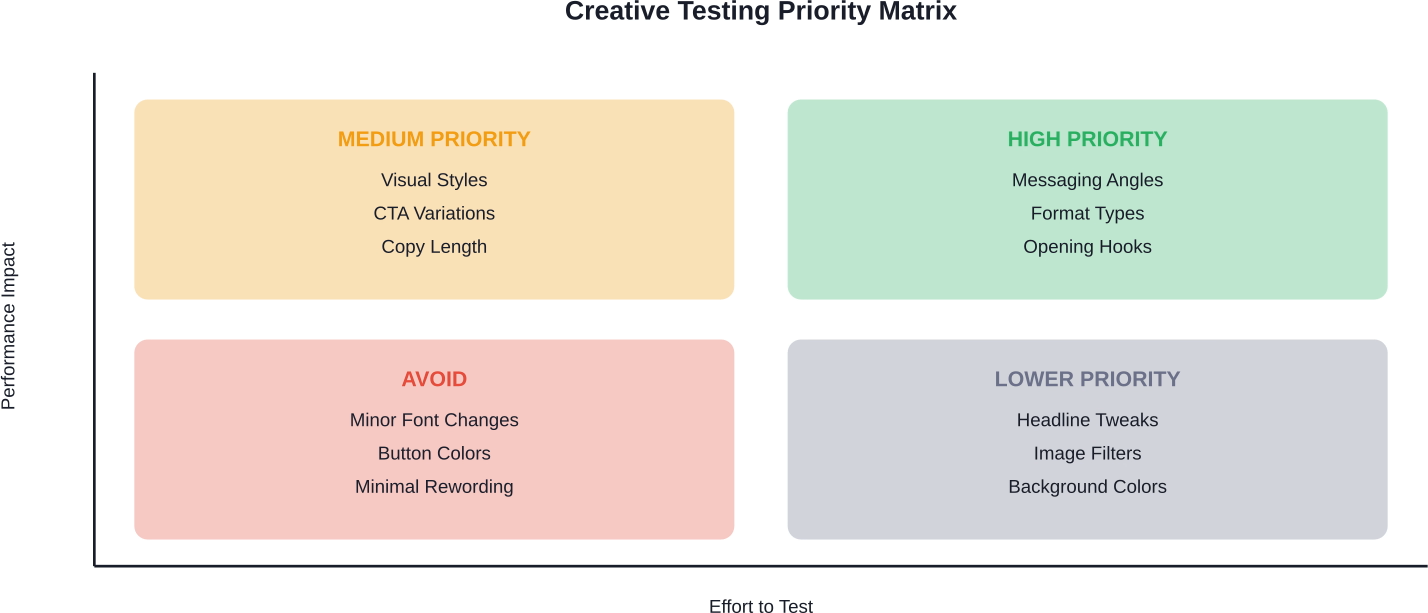

Not all creative variations deserve testing. Some elements drive performance. Others just create noise.

Focus testing efforts on elements that significantly impact performance:

Some variations rarely move the needle enough to justify testing resources:

Save testing budget for variations that represent genuine strategic differences.

ROAS tells part of the story. But surface-level return on ad spend misses crucial context.

A creative with 4x ROAS isn't necessarily better than one with 3.5x ROAS if the first attracts lower-quality customers who churn immediately.

Track cost per trial, cost per purchase, and lifetime value indicators. Sometimes a creative with slightly lower immediate ROAS attracts significantly better customers.

Where ads deliver matters enormously. A creative might show strong overall performance because it dominates Feed placement, while bombing in Reels.

Check placement breakdowns. One app company discovered their biggest win wasn't a creative change at all—it was understanding placement distribution and optimizing for where their best customers actually engaged.

Wait for sufficient sample size before declaring winners. A creative with 20 conversions at $10 CPA isn't reliably better than one with 18 conversions at $11 CPA.

Generally speaking, wait for at least 50-100 conversions per variant before making firm conclusions, depending on baseline conversion rates.

Finding a winning creative is step one. Scaling it without destroying performance is step two.

Don't immediately dump 80% of budget into a new winner. Move proven creatives into BAU rotation alongside existing performers, then gradually shift budget based on sustained performance.

Even winners eventually fatigue. Monitor frequency and cost trends daily. When cost per acquisition rises 15-20% above baseline for two consecutive days, consider that creative tired.

Rotate fatigued creatives out temporarily. Sometimes they recover performance after a break.

Top performers maintain continuous testing velocity. Teams building hundreds of new creative assets weekly ensure fresh variations always wait in the pipeline.

This doesn't mean creating hundreds from scratch. It means systematically iterating on winning themes with new executions.

Even structured frameworks fail when common mistakes creep in.

Changing headline, visual, format, and CTA simultaneously makes it impossible to know what drove results.

Test one variable category at a time. Isolate what actually moves performance.

Meta's algorithm needs learning time. Pausing ads after 24 hours because they haven't delivered wastes the learning investment.

Let tests run for at least 3-7 days depending on conversion volume, unless performance is catastrophically bad.

A creative that crushes for cold audiences might bore warm audiences. Segment testing by audience temperature when possible.

With Meta's Andromeda algorithm prioritizing creative diversity, running the same concept repeatedly—even with minor variations—can limit delivery.

The platform rewards genuine creative variety. Surface-level changes to the same core concept won't fool the algorithm.

The right measurement infrastructure makes or breaks testing programs.

Every test should feed organizational learning. Document hypotheses, results, and insights in a centralized system.

What worked six months ago provides context for today's tests. Patterns emerge over time that individual tests miss.

Meta creative testing isn't rocket science. But it requires discipline.

The difference between accounts that scale profitably and those that burn budget comes down to systematic approach. Clear hypotheses. Structured testing groups. Defined success metrics. Consistent iteration.

Start with the 3-3-3 framework: three concepts, three formats, three variations. Build from there based on what the data reveals.

Document everything. Every test teaches something, even failures. Especially failures.

Most importantly, match ambition to budget reality. Three well-designed strategic tests beat thirty random variations every time.

The advertisers winning on Meta in 2026 aren't creating more ads. They're creating smarter ads, testing systematically, and scaling what works.

Build a framework. Stick to it. Let the data guide decisions instead of hunches. That's how creative testing delivers sustainable performance improvement instead of temporary wins that evaporate under scrutiny.