Claude AI is owned and developed by Anthropic PBC, a Public Benefit Corporation founded in 2021 by former OpenAI executives Dario and Daniela Amodei. Despite receiving billions in funding from Amazon, Google, and other tech giants, Anthropic maintains full ownership and control over Claude, with investors holding only minority stakes and no governance power.

The question of who owns Claude AI isn't as straightforward as it seems. With billions pouring in from Amazon, Google, and other tech giants, many assume these corporations control the chatbot that's become ChatGPT's strongest competitor.

But here's the thing—ownership and investment aren't the same thing.

Claude AI is wholly owned by Anthropic, an AI safety and research company that's structured specifically to resist the profit-driven pressures that plague other tech companies. Let's break down exactly how this works and why it matters.

Anthropic PBC (Public Benefit Corporation) is the sole owner and developer of Claude AI. Founded in 2021, this San Francisco-based company operates under a unique corporate structure designed to prioritize AI safety over rapid profit maximization.

The company was established by a group of former OpenAI executives who left over disagreements about safety practices and the direction of AI development. According to Anthropic's official website, their mission centers on building "reliable, interpretable, and steerable AI systems."

Dario Amodei serves as CEO, while his sister Daniela Amodei holds the position of president. Other co-founders include Jared Kaplan, Jack Clark, Chris Olah, Ben Mann, Sam McCandlish, and Tom Brown—all bringing deep technical expertise from their previous roles at OpenAI.

Anthropic's status as a Public Benefit Corporation isn't just legal jargon. It's a deliberate firewall.

Unlike traditional corporations that must prioritize shareholder returns, PBCs are legally required to balance profit with public benefit. For Anthropic, that public benefit is explicitly defined: developing AI that's safe and beneficial for humanity.

This structure gives Anthropic's leadership the legal backing to reject decisions that might be profitable but potentially harmful—something conventional corporations struggle to do.

In September 2023, Anthropic introduced the Long-Term Benefit Trust (LTBT), an independent governance body that represents one of the most innovative approaches to AI company oversight.

According to Anthropic's official announcement, the LTBT consists of five financially disinterested members with authority to select and remove a portion of Anthropic's board.

What does "financially disinterested" mean? These trustees don't hold equity in Anthropic. They can't profit from decisions that boost the company's valuation. Their sole mandate is protecting Anthropic's mission.

This creates a governance structure where profit-seeking investors—even those who've invested billions—can't override safety considerations to accelerate product releases or cut corners on research.

Now this is where it gets interesting.

Anthropic has raised massive amounts of capital—but funding doesn't equal ownership. According to Anthropic's official announcements, the company completed a $30 billion Series G funding round in February 2026, led by GIC and Coatue. This round valued Anthropic at $380 billion post-money, making it the second-largest venture funding deal of all time.

Previously, in September 2025, Anthropic raised $13 billion in a Series F round led by ICONIQ, valuing the company at $183 billion post-money.
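To see why a multi-billion-dollar round still leaves new investors with a small slice, it helps to run the arithmetic on the figures quoted above. The sketch below is a simplification: the function names are made up for illustration, and it assumes the new money's share is simply the amount raised divided by the post-money valuation, ignoring secondary sales, option pools, and any stake an investor already held.

```python
def pre_money(amount_raised_bn: float, post_money_bn: float) -> float:
    """Pre-money valuation: what the company was valued at before the new cash."""
    return post_money_bn - amount_raised_bn


def implied_round_stake(amount_raised_bn: float, post_money_bn: float) -> float:
    """Fraction of the company represented by the new money in a round.

    Simplifying assumption: stake = amount raised / post-money valuation.
    """
    if post_money_bn <= 0 or not (0 <= amount_raised_bn <= post_money_bn):
        raise ValueError("expected 0 <= amount_raised <= post_money and post_money > 0")
    return amount_raised_bn / post_money_bn


# Figures (in billions of USD) as quoted in this article.
rounds = {
    "Series F (Sep 2025)": (13.0, 183.0),
    "Series G (Feb 2026)": (30.0, 380.0),
}

for name, (raised, post) in rounds.items():
    stake = implied_round_stake(raised, post)
    print(
        f"{name}: pre-money ${pre_money(raised, post):.0f}B, "
        f"new money is about {stake:.1%} of the company"
    )
```

Under these assumptions, each round works out to well under a tenth of the company, split across every investor who took part. That is a rough illustration of scale, not a reconstruction of Anthropic's actual cap table.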

The roster of investors reads like a who's who of tech and finance, but their role is strictly financial. The lead investors named above include GIC, Coatue, and ICONIQ.

Amazon and Google also hold strategic partnerships with significant investment stakes, but neither controls Anthropic's operations or governance.

Let's address the elephant in the room: doesn't Amazon or Google own Claude?

Short answer: no.

Amazon has invested in Anthropic and serves as Claude's cloud infrastructure provider through AWS. Google has also made substantial investments and integrated Claude into some of its enterprise offerings. But investment isn't ownership.

Both companies hold minority equity positions. Neither holds a board seat through its investment. Neither can dictate product decisions, safety protocols, or research priorities.

According to available partnership data, Amazon's role centers on providing computational infrastructure. Anthropic announced in 2024 that the partnership would bring more than one gigawatt of AI compute capacity online by 2026—a massive infrastructure commitment, but one that doesn't translate to ownership or control.

Think of it this way: when a bank lends money to a homeowner, the bank doesn't own the house. Venture investors do get equity rather than a loan, but how much control that equity carries depends on the terms. Anthropic's structure explicitly limits investor control.

The origin story matters here.

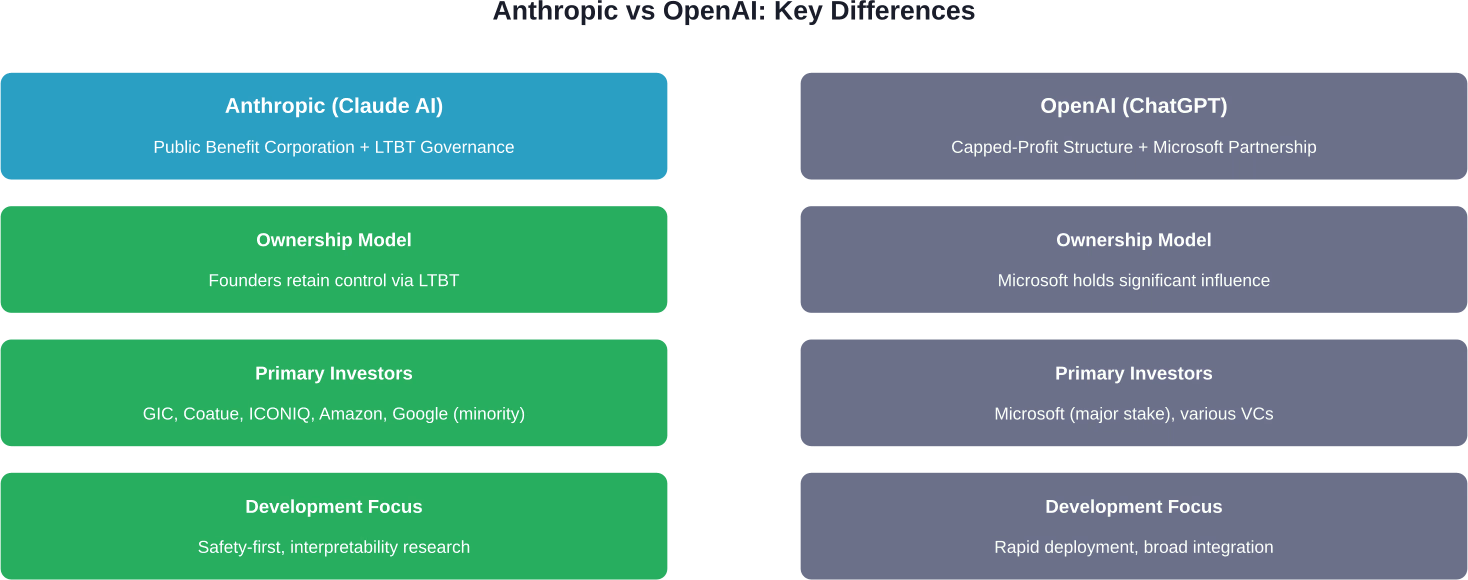

Dario Amodei served as VP of Research at OpenAI before leaving in 2021. Daniela Amodei was VP of Operations. They departed—along with several other senior researchers—over fundamental disagreements about AI safety and the company's direction following OpenAI's transition from a nonprofit to a capped-profit model.

Those philosophical differences come down to one core tension: how do you balance the enormous capital requirements of frontier AI research with the need for cautious, safety-focused development?

OpenAI's partnership with Microsoft and subsequent product acceleration represented one answer. Anthropic's structure represents another.

The founders weren't just looking to build a competitor to ChatGPT. They were architecting a company structure that could resist the gravitational pull toward rapid commercialization at the expense of safety research.

Anthropic introduced Claude to the public in March 2023 after months of closed alpha testing with partners including Notion, Quora, and DuckDuckGo. According to the official announcement, Claude was positioned as "a next-generation AI assistant based on Anthropic's research into training helpful, honest, and harmless AI systems."

That "helpful, honest, and harmless" framework—often abbreviated as HHH—guides Claude's development. It's not marketing speak. It's the technical approach that differentiates Claude's training methodology.

Anthropic treats AI safety as a systematic science, conducting frontier research and applying safety techniques before deploying systems commercially. The company regularly publishes interpretability research—studies that attempt to understand how large language models actually work internally.

Recent research published in March 2025 explored how to trace the "thoughts" of a large language model, examining what language Claude uses "in its head" and how it decides when it knows an answer versus when it should refuse to respond.

As of April 2026, Anthropic is the second-most valuable AI company globally. The $380 billion post-money valuation from the February 2026 Series G round places it behind OpenAI, which reached a valuation of $850 billion in March 2026.

According to Crunchbase data, Anthropic's Series G represents the second-largest venture funding deal of all time, following only OpenAI's $40 billion funding in 2025.

But valuation doesn't equal revenue or profitability. Like most frontier AI companies, Anthropic operates at a massive scale with enormous infrastructure costs. The company generates revenue through Claude's API, enterprise licenses, and partnerships, but specific financial performance remains private.

Anthropic continues expanding both its research capabilities and product offerings. In March 2026, the company launched The Anthropic Institute, a research effort focused on addressing challenges posed by increasingly powerful AI systems.

The Institute draws on research from across Anthropic to provide information that other researchers and the public can use during the transition to a world with much more capable AI systems. According to the announcement, this reflects Anthropic's belief that "far more dramatic progress will follow" the rapid AI advancement seen since the company's founding in 2021.

On the model side, Anthropic announced Claude Mythos Preview on April 7, 2026. The model demonstrates particularly strong performance on cybersecurity tasks, and Anthropic launched Project Glasswing alongside it, an initiative to use Mythos Preview to help secure critical software infrastructure.

Economic research published in March 2026 examined the labor market impacts of AI, introducing a new measure called "observed exposure" that combines theoretical LLM capability with real-world usage data. The research found that while AI has the theoretical capability to affect many occupations, actual adoption remains a fraction of what's possible.

So why does all this corporate structure matter if you're just using Claude to help with writing or coding?

Real talk: it matters because it shapes how the product evolves.

Companies optimizing purely for growth might rush features, cut corners on safety testing, or prioritize viral engagement over reliability. Anthropic's structure theoretically insulates it from those pressures.

That doesn't make Claude perfect. No AI system is. But it does mean the incentive structure differs from competitors where investors can push for faster monetization or aggressive growth targets that might conflict with careful development.

Users get a product built by a company that—at least structurally—can afford to say "not yet" when a feature isn't ready, or "no" when a capability poses safety concerns.

Anthropic owns Claude AI. Full stop.

The company has built one of the most sophisticated governance structures in the AI industry specifically to maintain that ownership and control despite accepting billions in funding from major tech companies and investment firms.

The Long-Term Benefit Trust, Public Benefit Corporation status, and mission-focused leadership team create multiple layers of protection against the profit-maximization pressures that can compromise safety research.

Whether this structure will prove effective long-term remains to be seen. Corporate governance is easy when valuations are rising and capital is flowing. The real test comes during difficult periods when the mission and the balance sheet point in different directions.

But as of April 2026, Anthropic has maintained its independence and control over Claude despite operating in an industry where consolidation and acquisition are the norm. That's worth noting—and watching.

For users, developers, and enterprises building on Claude, this ownership structure provides some assurance that the product roadmap won't suddenly shift based on an acquisition or investor pressure. The same team that founded Anthropic still runs it, with structural protections designed to keep it that way.

And in an AI landscape increasingly dominated by a handful of massive tech companies, that kind of independence matters.