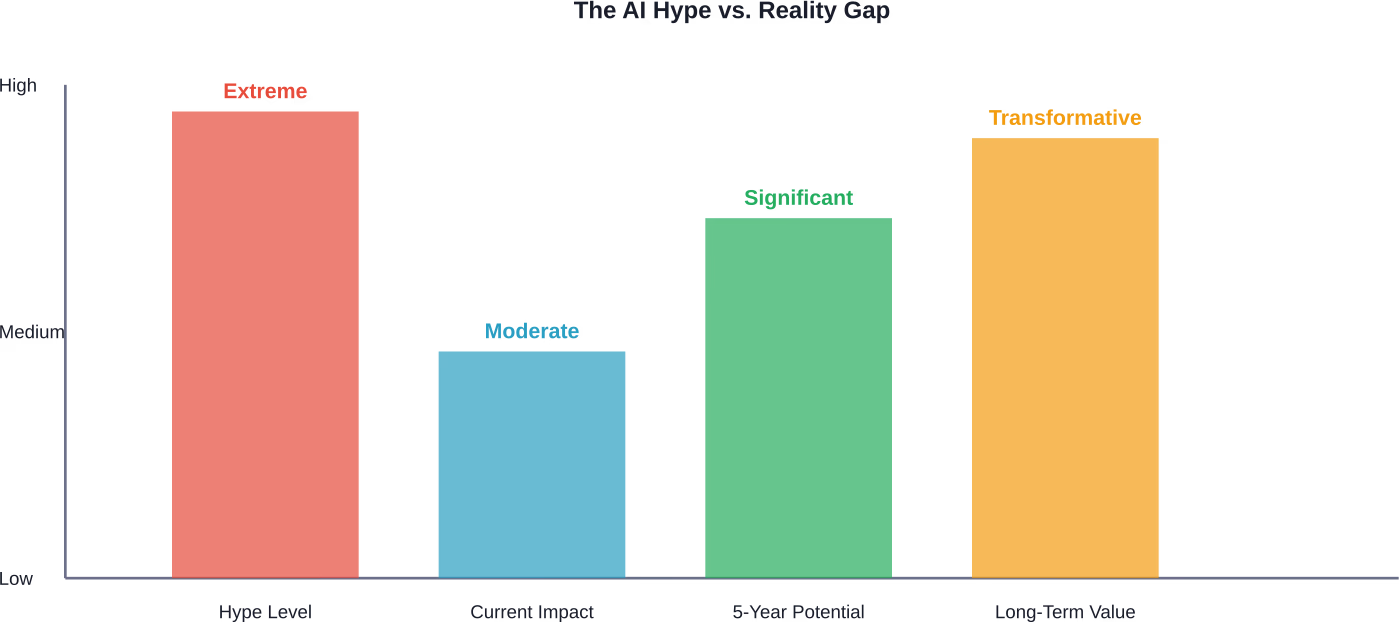

AI is both overhyped and underhyped depending on the lens. While tech leaders promise imminent superintelligence and job displacement that hasn't materialized, organizations are simultaneously underestimating AI's long-term transformational potential. The gap between inflated short-term expectations and underappreciated structural change defines the current AI landscape.

The AI debate has reached fever pitch. Tech executives promise revolutionary transformation while skeptics point to disappointing ROI and unfulfilled promises. Marc Benioff says AI now does 30-50% of the work at Salesforce, yet countless businesses struggle to see meaningful productivity gains.

So which narrative holds water?

The answer isn't simple. According to Stanford HAI researchers, the era of AI evangelism is giving way to evaluation. Faculty predict 2026 will be defined by rigor, transparency, and a long-overdue focus on actual utility over speculative promise.

Let's cut through the noise and examine what the data actually shows.

The evidence for excessive hype isn't hard to find. Despite billions poured into AI infrastructure and countless headlines about superintelligence, the promised workplace revolution remains largely theoretical.

Here's the reality check: widespread white-collar job displacement simply isn't happening yet. IT budgets have increased significantly as CEOs prioritize AI adoption, but so far that push has slowed hiring more than it has transformed productivity. Companies report implementation challenges that will sound familiar to anyone who has lived through previous tech hype cycles.

Research on AI's workplace impact reveals a troubling gap between promise and performance. When businesses implement AI without fundamental process redesign, they see minimal gains. Sound familiar? It should.

Economist Ajay Agrawal draws a parallel to electrification. Early factories installed electric motors but kept the layouts designed for steam power. The perceived value, a modest reduction in operating costs, wasn't enough to justify tearing apart existing infrastructure.

The massive productivity lifts, sometimes 300-500%, only came later when companies realized they could completely redesign their factory floors.

We're making the same mistake with AI. Organizations bolt generative models onto existing workflows and wonder why transformation doesn't materialize.

Academic researchers haven't been innocent bystanders. A paper posted to arXiv on the origins of AI hype argues that technological R&D attracts substantial investment because of its perceived benefits for economic prosperity and national security. By the paper's account, AI R&D now dominates global scientific output and continues to attract increased funding.

But when capabilities are overestimated, the public faces a greater risk of being sold technological solutions that don't work as advertised. The pressure to publish, secure funding, and demonstrate impact creates incentives to oversell results.

Now here's the twist. While short-term promises are inflated, many organizations are simultaneously underestimating AI's structural impact.

Stanford's AI Index reports that the share of newly released foundation models that were open source jumped from 33.3% in 2021 to 65.7% in 2023. That's a fundamental shift in how AI technology is developed and distributed. Yet closed-source models still held a median performance advantage of 24.2% over their open-source counterparts across 10 selected benchmarks.

The real transformation won't come from chatbots handling customer service. It'll come from completely reimagining business processes, educational systems, and creative workflows.

Research from Brookings reveals that more than 30% of all workers could see at least 50% of their occupation's tasks disrupted by generative AI. But "disrupted" doesn't mean "replaced."

The data suggest the greatest impacts will fall on middle- to higher-paid occupations and clerical roles, and disproportionately on women. Yet the nature of that impact remains deeply uncertain.

A 2024 field experiment with nearly 1,000 Turkish high school students found that unrestricted access to ChatGPT during math practice improved accuracy on practice problems but lowered performance on later exams taken without AI. Workplace AI adoption likewise shows effects that vary with how it is implemented.

The pattern? AI amplifies existing organizational capabilities and inequalities rather than erasing them.

The conversation at elite gatherings has shifted noticeably. According to Stanford HAI's analysis of recent Davos discussions, world leaders now focus on ROI over hype. The topics? Sovereign AI, open ecosystems, and workplace change.

One executive who runs a business unit at a major tech company said he had been charged with growing it by roughly $40 billion over the next three to five years, with no increase in headcount. That's a marked change from the "AI at all costs" mentality of 2023-2024.

Real talk: when the people making billions from AI start tempering expectations, pay attention.

Here's what often gets missed. Hype isn't harmless marketing. It has real consequences.

Research published through Brookings examines how efforts to align AI systems with human values often fail to account for context and diverse populations. In that work, standard fine-tuning with random sampling produced high error rates for underrepresented groups, including a 52% error rate for people of color. The proposed methods cut that error rate from 52% to 30%, which is still unacceptable for deployed systems.

When hype drives premature deployment, marginalized groups pay the price. The rush to market means safety, fairness, and robustness get deprioritized.

Research posted to arXiv on the limitations of large language models warns that exponentially growing hype around AI creates blind spots: organizations adopt systems without understanding their fundamental constraints.

Community discussions among developers and tech workers reveal fascinating contradictions. Many insiders acknowledge that extreme AI hype gets in the way of making the most out of the technology.

There's a growing recognition that AI's real value lies in augmentation rather than replacement. The most successful implementations treat AI as a copilot, not an autopilot.

But wait. If insiders are skeptical, why does the hype continue?

Follow the money. Tech companies have committed hundreds of billions to data center buildouts. That investment needs justification. Whether AI delivers immediate ROI or not, the narrative must support the spending.

Is AI a bubble ready to burst? The answer requires nuance.

Investment levels certainly show bubble characteristics. Valuations for AI companies often disconnect from current revenue or profitability. The semiconductor market was valued at approximately $628 billion in 2024 and is projected to exceed $1 trillion by 2030.
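As a quick sanity check on that projection, assuming exactly $1 trillion in 2030 (six years after 2024) and working in billions of US dollars, the implied compound annual growth rate is roughly 8% per year:

```latex
\text{CAGR} = \left(\frac{1000}{628}\right)^{1/6} - 1 \approx 1.081 - 1 \approx 8.1\%
```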

Yet unlike previous tech bubbles, AI does produce measurable value in specific applications. Computer vision, natural language processing, and recommendation systems work. They're not vaporware.

The bubble isn't in AI's existence; it's in the timeline and scope of expectations.

Stanford researchers predict that 2026 marks an inflection point. Evaluation replaces evangelism. Organizations demand proof of value, not promises.

That's actually healthy. The technology needs space to mature without the crushing weight of unrealistic expectations. Developers need permission to acknowledge limitations without being labeled pessimists.

For AI to deliver transformational value, organizations must redesign how work gets done around it, not just add it to existing workflows.

The electrification parallel remains instructive. Those productivity gains of 300-500% didn't come from slapping electric motors onto steam-era designs. They came from fundamental reimagining.

So is AI overhyped? Yes and no.

The breathless promises of imminent superintelligence and job market apocalypse? Absolutely overhyped. The suggestion that organizations can sprinkle AI onto existing processes and achieve transformation? Dangerously misleading.

But the long-term potential for fundamental restructuring of work, creativity, and problem-solving? Probably underestimated by most organizations.

The gap between where we are and where the hype suggests we should be creates real problems. Premature deployment harms vulnerable populations. Inflated expectations lead to disillusionment and reduced investment when inevitable setbacks occur. The pressure to oversell capabilities undermines trust.

What's needed now is exactly what Stanford researchers predict: rigor, transparency, and focus on utility. Let AI prove its value through specific applications rather than grand promises. Give organizations permission to move slowly and redesign thoughtfully.

The technology is real. The potential is significant. But realizing that potential requires honest assessment of current limitations and patient investment in fundamental change.

That's less exciting than hype. It's also more likely to deliver lasting value.

Ready to cut through AI hype in your organization? Start with one specific use case, measure results rigorously, and redesign processes around AI capabilities rather than bolting technology onto existing workflows. The transformation will take longer than promised, and it will matter more than expected.
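What "measure results rigorously" looks like will vary by use case, but here is a minimal Python sketch, assuming the pilot tracks a single metric: hypothetical minutes per support ticket, recorded before and during an AI-assisted pilot. The function names and the data are illustrative only. The sketch reports the relative change in the mean along with a percentile bootstrap confidence interval, so a small pilot's result comes with a sense of how much of it might be noise.

```python
import random
import statistics


def relative_change(baseline, treatment):
    """Relative change in the mean; negative means the metric went down."""
    b = statistics.mean(baseline)
    t = statistics.mean(treatment)
    return (t - b) / b


def bootstrap_ci(baseline, treatment, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for relative_change.

    Each group is resampled with replacement, independently, n_resamples times.
    A fixed seed keeps the result reproducible.
    """
    rng = random.Random(seed)
    stats = []
    for _ in range(n_resamples):
        b = rng.choices(baseline, k=len(baseline))
        t = rng.choices(treatment, k=len(treatment))
        stats.append(relative_change(b, t))
    stats.sort()
    lo = stats[int((alpha / 2) * n_resamples)]
    hi = stats[int((1 - alpha / 2) * n_resamples) - 1]
    return lo, hi


# Hypothetical data: minutes per ticket for one use case, before and during a pilot.
baseline_minutes = [42, 38, 51, 45, 40, 47, 39, 44, 50, 43]
pilot_minutes = [35, 33, 41, 39, 30, 44, 36, 34, 40, 37]

change = relative_change(baseline_minutes, pilot_minutes)
low, high = bootstrap_ci(baseline_minutes, pilot_minutes)
print(f"Mean change: {change:+.1%} (95% CI {low:+.1%} to {high:+.1%})")
```

With these made-up numbers the mean time per ticket falls by about 15.9%; the interval around it is what tells you whether ten tickets per arm is enough to act on. The bootstrap is used here because it doesn't assume the metric is normally distributed, but it can't rescue a biased comparison, such as a pilot run only on easy tickets.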