
AI agents for marketing agencies automate repetitive workflows, personalize campaigns at scale, and execute tasks autonomously using real-time data. According to MIT Sloan research, these systems differ from chatbots by perceiving, reasoning, and acting independently. Agencies can deploy AI agents for content creation, campaign optimization, lead scoring, and customer segmentation—reducing manual workload while improving targeting precision.

Marketing agencies face relentless pressure. Tighter budgets. More channels demanding content. Faster campaign cycles. Higher personalization expectations from every client.

And here's the thing: traditional marketing automation can't keep up anymore.

That's where AI agents come in. These aren't your typical chatbots that answer questions. According to the MIT Sloan Management Review, AI agents represent a new breed of systems that are semi- or fully autonomous, able to perceive, reason, and act independently without constant human oversight.

McKinsey estimates that generative AI could add the equivalent of $2.6 trillion to $4.4 trillion annually across the 63 use cases it analyzed. But what does that actually mean for your agency?

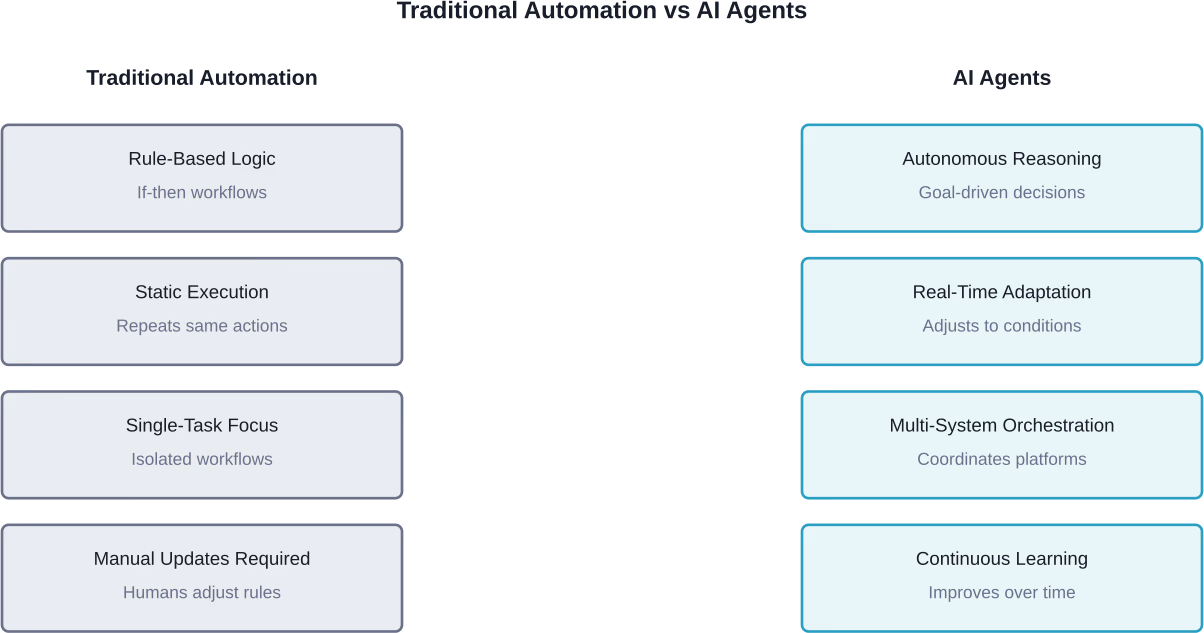

AI agents aren't just another layer of automation stacked on top of your existing tech stack.

Traditional marketing tools follow predetermined rules. If this happens, do that. They execute workflows you've already mapped out. They're reactive.

AI agents? They're proactive.

These systems analyze data continuously, make decisions based on changing conditions, and execute tasks without waiting for human input. According to research from Perplexity on AI agent adoption, the two largest use case categories—Productivity & Workflow and Learning & Research—account for 57% of all agentic queries.

The shift is fundamental. Marketing moves from reactive communication to adaptive engagement.

Real AI agents for marketing share several defining traits:

According to Stanford research on generative AI in workplaces, agents that provided real-time recommendations to customer service operators improved performance by 14% on average, measured as issues resolved per hour, compared with workers without AI assistance. The biggest gains came among less experienced employees.

Real talk: marketing agencies aren't struggling because they lack tools. Most agencies are drowning in tools.

The problem? Coordination overhead. Every new channel, every new client, every campaign variation adds exponential complexity to workflows.

According to Perplexity's research on AI agent adoption, Productivity & Workflow is one of the two largest categories of agentic AI use; together with Learning & Research, it accounts for approximately 57% of agentic queries. Agencies that don't adopt autonomous systems face diminishing returns as client rosters grow.

Traditional scaling means hiring more people. AI agents scale operations without proportional headcount increases.

But here's what matters more: quality at scale.

Stanford research on AI and brand connections emphasizes that modern audiences expect personalized experiences across every touchpoint. Delivering that manually? Impossible at agency scale.

AI agents handle the grunt work—analyzing customer data, identifying segments, crafting variations, testing messages, and optimizing delivery timing across channels. Simultaneously. Continuously.

The FTC has intensified scrutiny of AI marketing claims. In March 2026, the agency banned Air AI from marketing business opportunities after charges the company misled entrepreneurs and small businesses. In September 2024, the FTC launched Operation AI Comply, announcing five enforcement actions against operations using AI hype or selling AI technology that enabled deceptive practices.

Agencies need systems that document decisions, maintain audit trails, and ensure claims are substantiated. Well-implemented AI agents provide transparency that manual processes can't match.

Enough theory. How do agencies actually deploy these systems?

AI agents don't just generate content. They orchestrate entire content workflows.

An agent might analyze performance data from past campaigns, identify high-converting topics and formats, generate drafts aligned with brand guidelines, create variations for different audience segments, localize content for regional markets, schedule publication across channels, and monitor initial performance to recommend adjustments.

All without a project manager coordinating five different team members.

According to arXiv research on AI agents interacting with online advertising, autonomous systems can sift through vast numbers of options far more comprehensively than human analysts, potentially altering the effectiveness and reach of traditional advertising approaches.

Marketing agents continuously monitor campaign performance across platforms, shift budget allocation toward high-performing channels in real time, pause underperforming ad sets before budget waste, test new creative variations based on engagement signals, and adjust targeting parameters as audience behavior shifts.

The difference? Speed. Human analysts review performance weekly or daily. Agents optimize hourly or even continuously.
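To make the reallocation step concrete, here is a minimal sketch of one optimization pass. Everything in it is an assumption for illustration: the `AdSet` fields, the `min_roas` cutoff, and the rule of splitting budget in proportion to return on ad spend (ROAS). A real agent would pull these numbers from ad platform reporting and would likely use a more careful allocation rule.

```python
from dataclasses import dataclass


@dataclass
class AdSet:
    name: str
    spend: float    # spend in the current window
    revenue: float  # attributed revenue in the same window

    @property
    def roas(self) -> float:
        # Return on ad spend; treat zero spend as zero return.
        return self.revenue / self.spend if self.spend > 0 else 0.0


def reallocate(ad_sets: list[AdSet], total_budget: float,
               min_roas: float = 1.0) -> dict[str, float]:
    """Pause ad sets below min_roas and split the budget across
    the remaining ones in proportion to their ROAS."""
    plan = {a.name: 0.0 for a in ad_sets}  # 0.0 means paused
    active = [a for a in ad_sets if a.roas >= min_roas]
    total_roas = sum(a.roas for a in active)
    if total_roas == 0:
        return plan
    for a in active:
        plan[a.name] = round(total_budget * a.roas / total_roas, 2)
    return plan


# Hypothetical sample data for one reporting window.
ad_sets = [
    AdSet("search_brand", spend=500.0, revenue=2000.0),     # ROAS 4.0
    AdSet("social_video", spend=400.0, revenue=800.0),      # ROAS 2.0
    AdSet("display_retarget", spend=300.0, revenue=150.0),  # ROAS 0.5
]
plan = reallocate(ad_sets, total_budget=1200.0)
```

With this sample data, the underperforming display ad set is paused and the budget is split 800 / 400 between the other two. Running the same pass hourly instead of weekly is where the speed advantage comes from.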

Most AI-driven campaign optimization happens after ads go live. Budgets shift, audiences adjust, and underperforming creatives get filtered out over time. But for many agencies, the biggest inefficiency still sits earlier in the process: deciding which creatives are worth testing in the first place.

This is where platforms like Extuitive introduce a slightly different workflow. Instead of relying entirely on live A/B testing, teams can evaluate creative concepts before launch using historical performance data and simulated testing environments. The system analyzes patterns from past campaigns and estimates how new variations are likely to perform, helping narrow down options before any budget is committed.

In practice, this reduces the volume of low-probability tests and shifts part of campaign optimization upstream. Agencies still run live campaigns, but with a more filtered set of creatives, allowing them to focus spend and iteration on ideas that already show stronger early signals.

Perplexity research indicates that shopping for goods represents one of the top subtopics in agentic AI usage, accounting for a significant portion of queries. Agencies can deploy similar agents for B2B lead evaluation.

AI agents analyze behavioral signals across touchpoints, score leads based on conversion probability rather than simple demographics, identify micro-segments within broader audiences, trigger personalized nurture sequences for each segment, and flag high-intent prospects for immediate sales follow-up.
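As a rough illustration of scoring on conversion probability rather than demographics, the sketch below maps a few behavioral signals to a 0 to 1 score with a logistic function and routes the lead accordingly. The signal names, weights, bias, and threshold are all hypothetical; in practice the weights would be fit on historical conversion data rather than set by hand.

```python
import math

# Hypothetical weights, chosen by hand for illustration only.
WEIGHTS = {
    "pricing_page_views": 0.8,
    "email_clicks": 0.4,
    "demo_requested": 2.0,
}
BIAS = -3.0
HIGH_INTENT_THRESHOLD = 0.7


def conversion_probability(signals: dict[str, float]) -> float:
    """Map behavioral signals to a 0-1 score with a logistic function."""
    z = BIAS + sum(w * signals.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def route(signals: dict[str, float]) -> str:
    """Flag high-intent leads for sales; send the rest to nurture."""
    if conversion_probability(signals) >= HIGH_INTENT_THRESHOLD:
        return "sales_follow_up"
    return "nurture_sequence"


hot_lead = {"pricing_page_views": 3, "email_clicks": 2, "demo_requested": 1}
cold_lead = {"email_clicks": 1}
```

Here `route(hot_lead)` returns `"sales_follow_up"` and `route(cold_lead)` returns `"nurture_sequence"`. The point of the design is that the score depends on what a prospect does, not on which demographic bucket they fall into.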

Client reporting consumes absurd amounts of agency time. AI agents transform this workflow by pulling data from multiple platforms automatically, identifying statistically significant trends and anomalies, generating narrative insights that explain performance drivers, creating client-specific reports in branded templates, and highlighting actionable recommendations based on data patterns.

What used to take a team member two days? Now it happens in minutes.
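One piece of that reporting workflow, spotting anomalies, can be sketched with a simple z-score check. This is an assumed, deliberately basic method (a production agent would likely account for seasonality and trend), and the `daily_clicks` series is made-up sample data.

```python
from statistics import mean, stdev


def flag_anomalies(values: list[float], threshold: float = 2.0) -> list[int]:
    """Return the indexes of values that sit more than `threshold`
    sample standard deviations away from the mean."""
    if len(values) < 3:
        return []  # too little data to say anything useful
    mu, sigma = mean(values), stdev(values)
    if sigma == 0:
        return []  # a flat series has no outliers
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


# Hypothetical daily click counts with one obvious spike on day 7.
daily_clicks = [120, 118, 125, 122, 119, 121, 310, 123]
anomalies = flag_anomalies(daily_clicks)
```

For this series, only index 6 (the 310-click day) is flagged. An agent would then attach that finding to the client report along with a narrative explanation of the likely driver.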

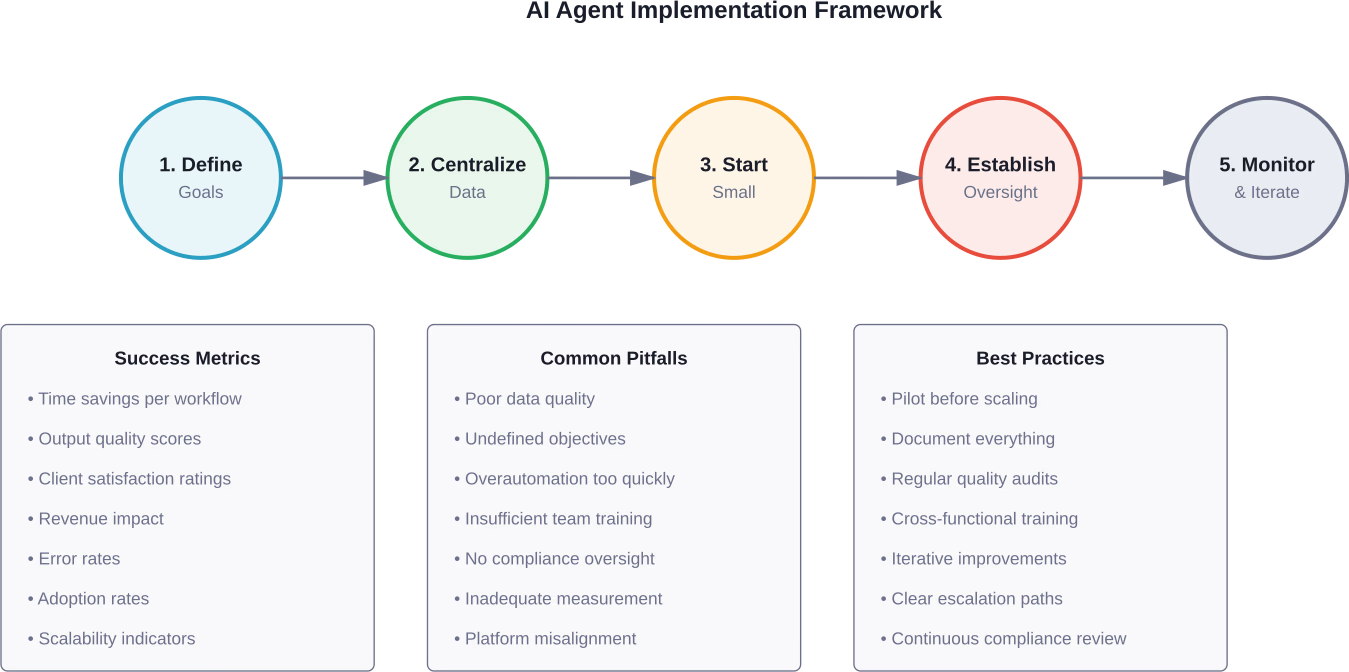

Building effective AI agents isn't about buying the fanciest platform. Implementation follows a deliberate framework.

Agents work best with specific, measurable goals. Vague objectives produce vague results.

Good objectives look like this:

Notice the pattern? Quantifiable outcomes tied to specific workflows.

Here's what trips up most implementations: AI agents are only as good as the data they access.

According to research from UC Berkeley's California Management Review on AI adoption, organizations must establish data foundations before deploying agentic systems. Scattered data across disconnected platforms cripples agent effectiveness.

Agencies need to consolidate customer data, campaign performance metrics, content assets, brand guidelines, and historical analytics into accessible repositories with consistent formatting and clear documentation.

Data collaboration infrastructure matters. Research examining AI agents emphasizes that market forces and infrastructure constraints significantly impact agent potential—fragmented data creates those constraints.

Don't deploy agents to mission-critical client campaigns on day one.

Start with internal workflows. Content research. Competitive analysis. Performance reporting. Social media scheduling. These deliver immediate value while teams develop confidence with the technology.

Stanford researchers studying AI adoption recommend beginning with tasks that have clear success metrics and limited downside risk if the agent makes suboptimal decisions.

Autonomous doesn't mean unsupervised.

Effective implementations include review gates at critical junctures. An agent might draft client-facing content, but a human reviews before publication. An agent might recommend budget reallocation, but a strategist approves before execution.

Over time, as confidence grows and patterns emerge, review frequency can decrease. But initial implementations benefit from oversight.
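A review gate can be as simple as a function that refuses to run an action until a human approves it. The sketch below assumes a hypothetical `ProposedAction` type and stand-in `approve` and `run` callbacks; in a real system the approval would come from a person through whatever review tool the agency uses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    kind: str           # e.g. "publish_content", "reallocate_budget"
    description: str
    needs_review: bool  # True for client-facing or budget-affecting work


def execute_with_gate(action: ProposedAction,
                      approve: Callable[[ProposedAction], bool],
                      run: Callable[[ProposedAction], None]) -> str:
    """Run the action only if it needs no review or a human approved it."""
    if action.needs_review and not approve(action):
        return "held_for_revision"
    run(action)
    return "executed"


executed: list[str] = []


def run(action: ProposedAction) -> None:
    # Stand-in for the real side effect (publishing, changing budgets).
    executed.append(action.kind)


def strategist_approves(action: ProposedAction) -> bool:
    # Stand-in for a human decision: this reviewer rejects budget changes.
    return action.kind != "reallocate_budget"


actions = [
    ProposedAction("publish_content", "Blog draft for client A", True),
    ProposedAction("reallocate_budget", "Move 20% to search", True),
    ProposedAction("internal_report", "Weekly summary", False),
]
outcomes = [execute_with_gate(a, strategist_approves, run) for a in actions]
```

Lowering review frequency later is then a matter of setting `needs_review` to `False` for action kinds that have earned trust, rather than rewriting the workflow.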

Track both efficiency metrics and quality metrics. An agent that completes tasks faster but produces worse outcomes hasn't delivered value.

Monitor time savings per workflow, accuracy rates against benchmarks, client satisfaction scores, revenue impact from optimizations, and error rates requiring human intervention.
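Two of those metrics, time savings and the rate of human intervention, are easy to compute from a task log. The sketch below assumes a hypothetical `TaskRecord` structure with a manual baseline for each task; the numbers are sample data, not measurements.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    workflow: str
    baseline_minutes: float  # how long the task took when done manually
    agent_minutes: float     # how long it took with the agent
    needed_human_fix: bool   # True if a person had to correct the output


def summarize(records: list[TaskRecord]) -> dict[str, float]:
    """Report an efficiency metric alongside a quality metric."""
    if not records:
        return {"minutes_saved": 0.0, "intervention_rate": 0.0}
    saved = sum(r.baseline_minutes - r.agent_minutes for r in records)
    fixes = sum(1 for r in records if r.needed_human_fix)
    return {"minutes_saved": saved, "intervention_rate": fixes / len(records)}


# Hypothetical log of four agent-run reporting tasks.
records = [
    TaskRecord("reporting", 120.0, 10.0, False),
    TaskRecord("reporting", 90.0, 15.0, True),
    TaskRecord("reporting", 60.0, 5.0, False),
    TaskRecord("reporting", 30.0, 10.0, False),
]
summary = summarize(records)
```

This sample yields 260 minutes saved with a 25% intervention rate. Reading the two numbers together matters: a large time saving paired with a rising intervention rate suggests the agent is getting faster at producing work that people then have to redo.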

Berkeley research on AI system adoption emphasizes continuous evaluation rather than one-time assessments. Agent performance evolves—measurement systems must keep pace.

Not all platforms marketed as "AI agents" actually deliver autonomous capabilities. When evaluating solutions, agencies should assess several critical features.

Some platforms require extensive technical setup. Others offer low-code or no-code interfaces.

For agencies without dedicated development resources, platforms with visual workflow builders and pre-built templates reduce time-to-value. But agencies with technical teams might prefer API-first platforms offering greater customization.

Client data flows through these systems. Agencies must verify that platforms implement encryption, access controls, compliance certifications, data residency options, and clear data usage policies.

The FTC has published extensive guidance on AI and data privacy. Platforms that ignore these requirements create liability exposure.

Implementation rarely goes perfectly. Anticipating obstacles helps agencies navigate them.

Garbage in, garbage out. Agents trained on incomplete or inconsistent data produce unreliable outputs.

Solution? Audit existing data before agent deployment. Clean up formatting inconsistencies. Fill gaps in historical records. Establish data quality standards going forward.
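A first-pass audit does not need special tooling. The sketch below checks exported campaign rows for missing required fields and for dates that are not in one agreed format. The field names and the ISO date convention are assumptions for illustration; substitute whatever standards your agency sets.

```python
from datetime import datetime

# Hypothetical required columns and date convention.
REQUIRED_FIELDS = ("campaign_id", "date", "spend")
DATE_FORMAT = "%Y-%m-%d"


def audit(rows: list[dict]) -> dict[str, list[int]]:
    """Return row indexes grouped by the kind of problem found."""
    issues: dict[str, list[int]] = {"missing_field": [], "bad_date": []}
    for i, row in enumerate(rows):
        if any(row.get(field) in (None, "") for field in REQUIRED_FIELDS):
            issues["missing_field"].append(i)
        date = row.get("date")
        if date:
            try:
                datetime.strptime(date, DATE_FORMAT)
            except ValueError:
                issues["bad_date"].append(i)
    return issues


# Hypothetical export with three problem rows.
rows = [
    {"campaign_id": "c1", "date": "2024-05-01", "spend": 100.0},
    {"campaign_id": "c2", "date": "05/02/2024", "spend": 80.0},  # wrong format
    {"campaign_id": "", "date": "2024-05-03", "spend": 50.0},    # blank id
    {"campaign_id": "c4", "date": "2024-05-04"},                 # no spend
]
issues = audit(rows)
```

For this sample, rows 2 and 3 are reported as missing a field and row 1 as having a badly formatted date. Running a check like this before deployment gives a concrete cleanup list instead of a vague sense that the data is messy.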

Some team members fear replacement. Others lack technical skills to work effectively with AI systems.

Research from Stanford examining AI in workplaces found that generative AI tools benefited less experienced workers most: performance improved by 14% on average, with the largest gains among novices and smaller gains among experts. Frame agent adoption as leveling up junior team members rather than replacing senior talent.

Training programs that teach teams how to prompt, review, and refine agent outputs build confidence and competence.

Agencies that automate too aggressively risk generic outputs that damage client brands.

Effective implementations maintain creative oversight. Agents handle research, data analysis, draft generation, and optimization testing. Humans guide strategic direction, brand voice, and final creative approval.

Enterprise AI platforms aren't cheap. Agencies need clear ROI projections before committing.

Start with pilot programs focused on high-volume, time-intensive workflows. Measure time savings, quality improvements, and revenue impact. Scale investment as proven value accumulates.

Pricing varies significantly across platforms—check official websites for current subscription tiers and feature availability.

The FTC's increased scrutiny of AI marketing claims demands attention from agencies deploying autonomous systems.

Marketing claims must be substantiated. If an AI agent generates claims about product efficacy, those claims need supporting evidence.

Agencies should implement review workflows that verify factual accuracy before publication. Agents can assist research, but humans must validate claims against reliable sources.

The FTC has indicated that failure to disclose AI-generated content may constitute a deceptive practice in certain contexts. While disclosure requirements are still evolving, agencies benefit from proactive transparency.

When AI agents generate client-facing content, consider whether disclosure serves consumer interests and regulatory expectations.

The FTC's Operation AI Comply targeted companies using AI in deceptive or unfair ways. Agencies must ensure agents don't engage in practices like fake reviews, misleading endorsements, or false scarcity claims.

According to FTC guidance on online advertising and marketing, standards that protect consumers also maintain the credibility of digital marketing channels. Agencies that deploy agents responsibly protect both clients and the broader industry.

So where does this technology go next?

According to Stanford AI experts' predictions for 2026, the era of AI evangelism is giving way to evaluation. Expect rigor, transparency, and a focus on actual utility over speculative promise.

For marketing agencies, that means a shift from "Can we use AI?" to "Where does AI demonstrably outperform alternatives?"

Future systems will coordinate multiple specialized agents rather than deploying single generalist agents. One agent handles content research. Another optimizes distribution timing. A third monitors performance and triggers adjustments. A fourth manages budget allocation.

These agents communicate, share data, and coordinate actions without human intermediaries orchestrating every handoff.
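The handoff pattern described above can be sketched as a pipeline in which each specialized agent reads a shared state and adds its own output. This is a toy illustration under stated assumptions: the three agent functions are hypothetical stand-ins that return fixed values, where real agents would call models and platform APIs.

```python
from typing import Any, Callable

State = dict[str, Any]
Agent = Callable[[State], State]


def research_agent(state: State) -> State:
    # Stand-in: a real agent would derive topics from performance data.
    return {**state, "topics": ["pricing guide", "case study"]}


def timing_agent(state: State) -> State:
    # Stand-in: assigns the same publish time to every topic.
    return {**state, "schedule": {t: "09:00" for t in state["topics"]}}


def budget_agent(state: State) -> State:
    # Splits the budget evenly across the scheduled items.
    per_item = state["budget"] / len(state["schedule"])
    return {**state, "allocation": {t: per_item for t in state["schedule"]}}


def run_pipeline(agents: list[Agent], state: State) -> State:
    """Pass shared state from one agent to the next, with no human handoff."""
    for agent in agents:
        state = agent(state)
    return state


result = run_pipeline(
    [research_agent, timing_agent, budget_agent],
    {"budget": 1000.0},
)
```

Each agent only needs to know which keys it reads and writes, which is what lets specialized agents be added or swapped without redesigning the whole flow.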

Current personalization often means segmentation—grouping similar customers and targeting groups. Next-generation agents will personalize at the individual level, crafting unique messages for each prospect based on their specific behavioral patterns, preferences, and predicted needs.

Stanford research on AI transforming brand connections emphasizes that AI-driven personalization already creates opportunities for better audience relationships. That trend accelerates.

Agents will continuously monitor competitive landscapes, identifying shifts in messaging, pricing, positioning, and channel strategies. Rather than quarterly competitive audits, agencies will have real-time intelligence feeding strategic decisions.

AI agents will move beyond optimizing existing campaigns to proactively recommending new campaign opportunities based on market signals, seasonal patterns, competitive gaps, and emerging customer needs.

An agent could theoretically identify competitive advertising gaps and recommend campaign opportunities. However, this remains a forward-looking capability not yet demonstrated at scale in current implementations.

Marketing agencies face a choice. Adopt autonomous AI systems and scale operations efficiently. Or watch competitors deliver faster, more personalized, more data-driven campaigns at lower cost.

That's not hypothetical. It's happening now.

According to research examining AI agent adoption patterns, productivity and workflow optimization already represents the largest use case category. Agencies that wait for "perfect" solutions will find themselves perpetually behind.

But implementation requires discipline. Start with clear objectives. Centralize your data. Pilot on low-risk workflows. Establish oversight. Measure relentlessly. Scale what works.

The agencies that thrive in 2026 and beyond won't be the ones with the most AI tools. They'll be the ones that deployed AI agents strategically—automating grunt work while amplifying human creativity and strategic thinking.

Ready to transform your agency's workflows? Identify one high-volume, time-intensive process. Map the current workflow. Research platforms that address that specific use case. Run a 30-day pilot. Measure the results.

Then scale from there.

AI agents won't replace your agency. But agencies using AI agents will replace those that don't.