OpenClaw is an open-source AI assistant that runs on your local computer and integrates with messaging apps like Telegram, while Claude Code is Anthropic's purpose-built coding agent that works natively in development environments. OpenClaw excels at general-purpose automation across your entire system with multi-agent orchestration, whereas Claude Code specializes in software engineering tasks with deeper codebase integration and superior code generation capabilities. The choice depends on whether you need a versatile life assistant or a dedicated coding companion.

The AI assistant space has exploded in early 2026, and two names keep coming up in developer discussions: OpenClaw and Claude Code. But here's the thing—they're not really direct competitors.

Community discussions reveal a fundamental misunderstanding. People keep asking "which one is better?" when they should be asking "which one fits my workflow?" These tools solve different problems, operate in different environments, and serve fundamentally different purposes.

This comparison cuts through the noise. Based on official documentation, user experiences, and hands-on analysis, here's everything developers need to know about OpenClaw versus Claude Code.

OpenClaw describes itself as "Your own personal AI assistant. Any OS. Any Platform." The GitHub repository has accumulated over 349,000 stars according to the official GitHub page, making it one of the most popular open-source AI projects.

At its core, OpenClaw is a locally-run AI agent framework that connects to messaging platforms like Telegram. It operates directly on the user's computer, with full access to the file system, terminal, browser, and other local resources.

The architecture supports multiple independent agents, each with separate workspaces, memory, and responsibilities. According to the official documentation, OpenClaw includes features like scheduled tasks via cron jobs, custom tool policies, security guardrails, and a skill system for extending capabilities.

Unlike cloud-based AI assistants, OpenClaw runs on the user's hardware. This means it can interact with local files, execute system commands, and integrate with existing workflows without sending data to external servers.

Claude Code is Anthropic's purpose-built coding agent, introduced as part of the Claude platform in late 2025. According to the official Claude Code documentation, it's designed specifically for software engineering tasks.

The system includes an Agent SDK that allows developers to build production AI agents with Claude Code as a library. Official documentation highlights several key components: skills defined in Markdown files, slash commands for common tasks, project memory stored in CLAUDE.md files, and plugin support for extending functionality.

Anthropic announced Claude Opus 4.6 in February 2026, which powers the latest version of Claude Code. According to the official announcement, Opus 4.6 features a 1M token context window in beta, improved code review and debugging skills, and the ability to operate reliably in larger codebases.

The model achieved state-of-the-art performance on software engineering benchmarks. On SWE-bench Verified, Claude Opus 4.6 averaged 81.42% with prompt modifications. The system excels at multi-turn code generation, though research posted on OpenReview shows a consistent 20-27% drop in 'correct & secure' outputs from single-turn to multi-turn settings across all tested models.

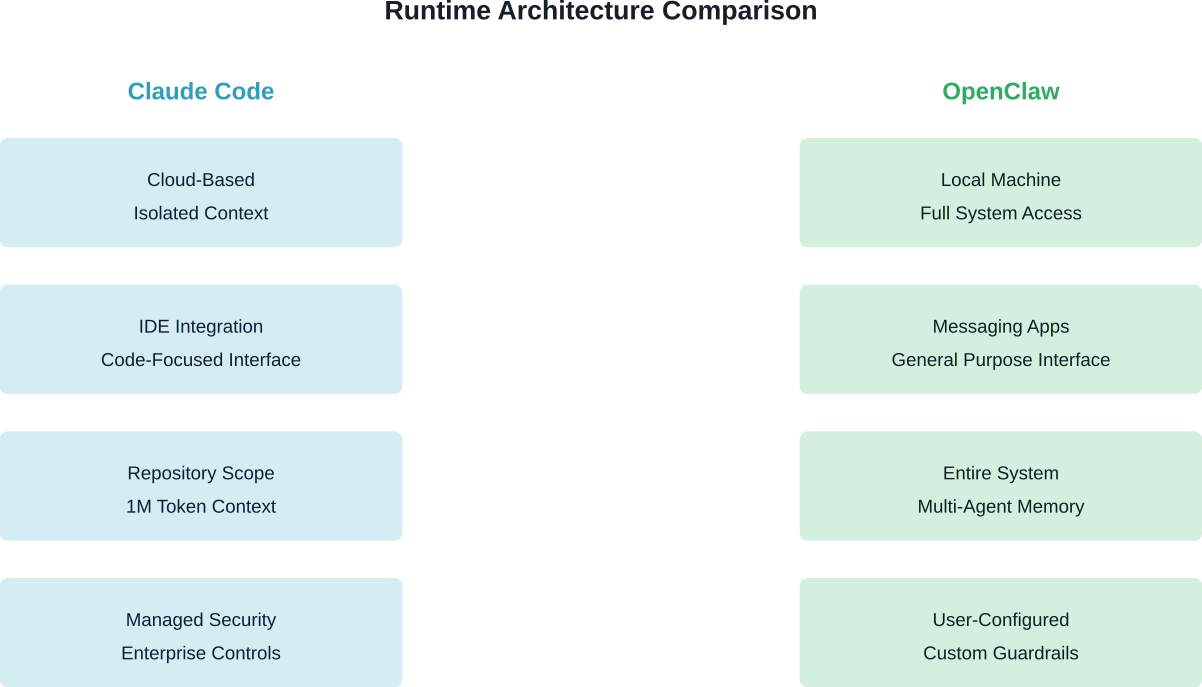

Community discussions consistently identify the runtime environment as the fundamental distinction between these tools.

Claude Code operates as a cloud-based service integrated into development environments. It processes code in isolated contexts, with defined boundaries around what it can access and execute. The system is purpose-built for software engineering, with deep integration into coding workflows.

OpenClaw runs locally on the user's machine. It's not confined to code repositories or development environments. From a Telegram chat interface, users can trigger actions across their entire computer—file operations, web browsing, system administration, multi-application automation.

As one community discussion puts it: "Most of these tools share a pretty similar core loop: plan → tool calls → observe → iterate. What differentiates each harness is the runtime they provide around that loop."
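The core loop described in that quote can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not code from OpenClaw or Claude Code: `run_agent`, `toy_planner`, and the two tool names are hypothetical, and a real harness would call a language model where the planner sits.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical types for illustration only.
Planner = Callable[[str, List[str]], Optional[str]]
Tool = Callable[[], str]


def run_agent(goal: str, plan: Planner, tools: Dict[str, Tool],
              max_steps: int = 10) -> List[str]:
    """Run a plan -> tool call -> observe -> iterate loop and return the observations."""
    observations: List[str] = []
    for _ in range(max_steps):                 # iterate, with a hard step limit
        tool_name = plan(goal, observations)   # plan: pick the next tool, or None when done
        if tool_name is None:
            break
        result = tools[tool_name]()            # tool call
        observations.append(f"{tool_name}: {result}")  # observe
    return observations


def toy_planner(goal: str, observations: List[str]) -> Optional[str]:
    """Stand-in for a model: run two fixed steps, then stop."""
    steps = ["list_files", "run_tests"]
    return steps[len(observations)] if len(observations) < len(steps) else None


toy_tools: Dict[str, Tool] = {
    "list_files": lambda: "3 files",
    "run_tests": lambda: "all passed",
}

history = run_agent("check the repo", toy_planner, toy_tools)
```

Everything the quote calls "the runtime" lives outside this function: which tools appear in the dictionary, what permissions they run with, and where the loop itself executes.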

For pure software engineering tasks, Claude Code has significant advantages. The system is built specifically for code generation, debugging, and codebase navigation.

According to Anthropic's November 2025 announcement, Claude Opus 4.5 pricing is $5 per million input tokens and $25 per million output tokens. On SWE-bench Multilingual, Opus 4.5 leads across 7 out of 8 programming languages. The February 2026 Opus 4.6 announcement adds a 1M token context window in beta, with premium pricing of $10 per million input tokens and $37.50 per million output tokens for prompts exceeding 200k tokens. The same release brought improved planning, longer-running agentic tasks, and better operation in large codebases.

Official documentation describes a bundled skill called "/batch" that orchestrates large-scale changes across codebases in parallel. The system researches the codebase, decomposes work into 5 to 30 independent units, spawns background agents in isolated git worktrees, and opens pull requests for each unit.
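To make the decomposition step concrete, here is a rough sketch of how a list of files might be split into 5 to 30 units, each paired with a `git worktree add` command. It is not how "/batch" is implemented: the `decompose` and `worktree_command` helpers, the `files_per_unit` default, and the branch and path naming are all assumptions for illustration. Only the 5 to 30 range comes from the description above.

```python
import math
from typing import List

MIN_UNITS, MAX_UNITS = 5, 30  # range quoted in the text above


def decompose(files: List[str], files_per_unit: int = 10) -> List[List[str]]:
    """Split files into roughly equal units, clamped to MIN_UNITS..MAX_UNITS."""
    if not files:
        return []
    wanted = math.ceil(len(files) / files_per_unit)
    n_units = max(MIN_UNITS, min(MAX_UNITS, wanted))
    n_units = min(n_units, len(files))  # never create an empty unit
    # Striding keeps unit sizes within one file of each other.
    return [files[i::n_units] for i in range(n_units)]


def worktree_command(unit_index: int) -> List[str]:
    """Build (but do not run) a git command that creates an isolated worktree."""
    branch = f"batch/unit-{unit_index:02d}"    # hypothetical naming scheme
    path = f"../worktrees/unit-{unit_index:02d}"
    return ["git", "worktree", "add", "-b", branch, path]


units = decompose([f"src/module_{i}.py" for i in range(120)])
commands = [worktree_command(i) for i in range(len(units))]
```

With fewer than five files the sketch returns fewer than five units, since it never creates an empty one. Grouping by file count is the simplest possible choice here; a real tool would presumably group by dependency or ownership.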

OpenClaw can certainly handle coding tasks—it has access to terminals, file systems, and can execute code. But it's not specialized for software engineering. The tool provides general command execution and file manipulation rather than deep integration with development workflows.

Research from arXiv on application-level code generation shows that functional correctness remains the primary bottleneck for all AI coding tools. Under current evaluation protocols, no model exceeds 45% functional score on application-level generation tasks.

One critical finding affects both tools. Research submitted to ICLR 2026 titled "Benchmarking Correctness and Security in Multi-Turn Code Generation" evaluated 32 open and closed-source models on the MT-Sec benchmark.

The results showed a consistent 20-27% drop in 'correct & secure' outputs when moving from single-turn to multi-turn coding scenarios. This applied even to state-of-the-art models. Multi-turn code-diff generation—an unexplored but practically relevant setting—showed worse performance with increased rates of functionally incorrect and insecure outputs.

Agent scaffolding helped boost single-turn performance but proved less effective in multi-turn evaluations. This matters because real-world development is inherently multi-turn.

OpenClaw's architecture explicitly supports running multiple independent agents, each with separate responsibilities, workspaces, and memory systems.

According to community discussions, users have successfully deployed configurations like:

Claude Code also supports multi-agent workflows. The official Opus 4.6 announcement notes that "Opus 4.6 is also very effective at managing a team of subagents, enabling the construction of complex, well-coordinated multi-agent systems."

The difference lies in scope. Claude Code's multi-agent capabilities focus on decomposing software engineering tasks. OpenClaw's multi-agent system spans arbitrary system-level tasks and integrations.

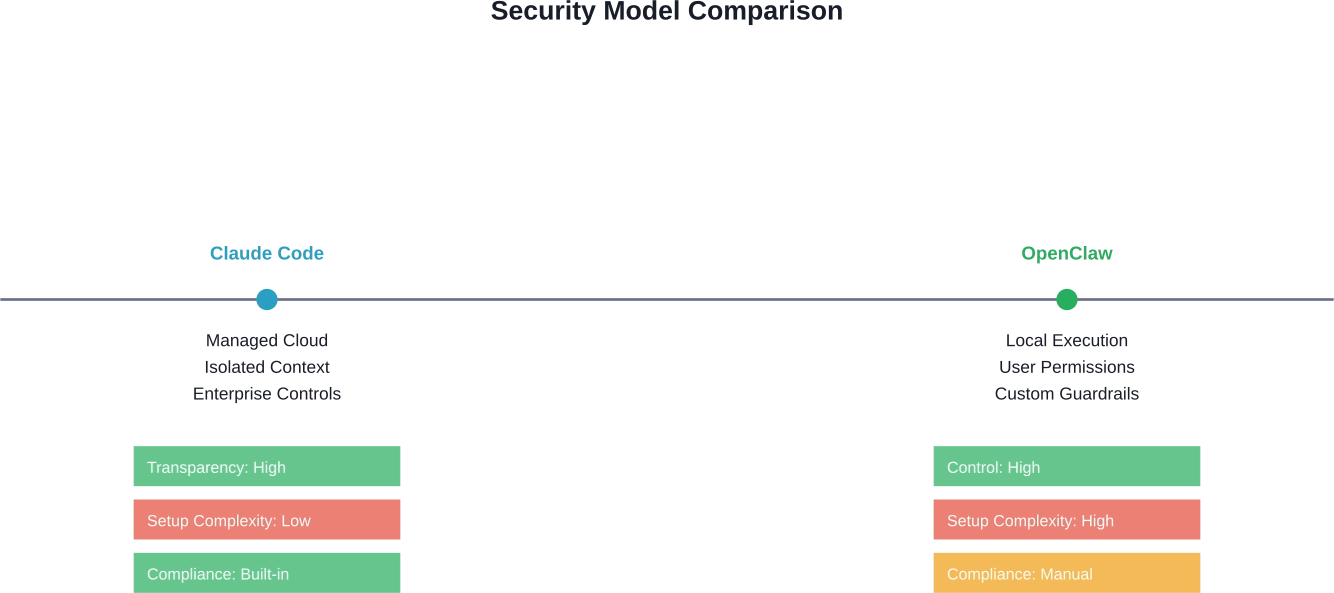

Security represents perhaps the sharpest difference between these tools.

Claude Code operates in Anthropic's managed infrastructure with enterprise-grade security controls. The system processes code in isolated contexts with defined permission boundaries. For organizations with compliance requirements, this managed approach provides clear security boundaries.

OpenClaw runs with the full permissions of the user who launches it. A security practice guide published on GitHub by SlowMist identifies several critical concerns.

The guide notes that OpenClaw can execute arbitrary system commands, access sensitive files, and make network requests. Security depends entirely on user configuration. The guide recommends cross-validation of sudo operations, disk usage monitoring (alerts when usage exceeds 85%), and careful review of tool policies.
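The disk usage check is easy to approximate with the Python standard library. The sketch below is an assumption about what such a monitor could look like, not code from the SlowMist guide or from OpenClaw. `used_percent` and `disk_alert` are hypothetical helper names, and only the 85% threshold comes from the text.

```python
import shutil
from typing import Tuple

THRESHOLD_PERCENT = 85.0  # alert level quoted from the guide


def used_percent(total: int, used: int) -> float:
    """Return used space as a percentage of total space."""
    return 100.0 * used / total


def disk_alert(path: str = ".", threshold: float = THRESHOLD_PERCENT) -> Tuple[bool, float]:
    """Return (alert, percent_used) for the filesystem containing path."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    percent = used_percent(usage.total, usage.used)
    return percent > threshold, percent


alert, percent = disk_alert(".")
if alert:
    print(f"WARNING: disk usage at {percent:.1f}% exceeds {THRESHOLD_PERCENT}%")
```

A check like this could run from a scheduled task. The figure may differ slightly from what `df` reports, because some filesystems reserve blocks that are counted differently.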

Community discussions emphasize this point repeatedly. As one commenter puts it: "If you don't have experience with Claude Code or any other agent harness up until this point, I really don't suggest digging into OpenClaw. It can be complicated to setup and there is a security risk if you don't know what you're doing."

The SlowMist security guide provides specific recommendations:

For teams in regulated industries or enterprises with strict security policies, Claude Code's managed infrastructure may be non-negotiable. For individual developers comfortable with system administration, OpenClaw's local execution provides transparency and control.

According to Anthropic's November 2025 announcement, Claude Opus 4.5 pricing is $5 per million input tokens and $25 per million output tokens. The February 2026 Opus 4.6 announcement notes that Opus 4.6 features a 1M token context window in beta, with premium pricing of $10 per million input tokens and $37.50 per million output tokens for prompts exceeding 200k tokens.
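A short calculation shows how the long-prompt threshold changes the cost of a request. The sketch below rests on two assumptions that the announcements quoted here do not confirm: that the $5 and $25 rates apply to prompts under the threshold, and that the premium rates apply to the whole request once the prompt exceeds 200k tokens. `estimate_cost` is a hypothetical helper, not part of any Anthropic SDK.

```python
from decimal import Decimal

MILLION = Decimal(1_000_000)
# (input rate, output rate) in USD per million tokens, as quoted above.
STANDARD_RATES = (Decimal("5"), Decimal("25"))
PREMIUM_RATES = (Decimal("10"), Decimal("37.50"))
LONG_PROMPT_THRESHOLD = 200_000  # prompt tokens


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of one request under the assumptions stated above."""
    if input_tokens > LONG_PROMPT_THRESHOLD:
        in_rate, out_rate = PREMIUM_RATES
    else:
        in_rate, out_rate = STANDARD_RATES
    return (input_tokens * in_rate + output_tokens * out_rate) / MILLION


short_prompt = estimate_cost(100_000, 10_000)  # under the threshold
long_prompt = estimate_cost(300_000, 10_000)   # over the threshold
```

Under these assumptions the 100k-token prompt costs $0.75 and the 300k-token prompt costs $3.375. Tripling the prompt size raises the cost 4.5 times, because the higher rates apply once the threshold is crossed.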

These prices apply to API usage. The underlying models are selected through the Claude API by specifying the model identifier (claude-opus-4-5-20251101 for Opus 4.5 or the corresponding identifier for Opus 4.6).

OpenClaw is open-source and free to use. The entire codebase is available on GitHub under an open-source license. Costs come from running the infrastructure: local compute resources, any third-party API integrations configured, and messaging platform access (Telegram is free for basic use).

For teams already paying for Claude API access, adding Claude Code capabilities is a configuration change. For individual developers exploring AI assistants, OpenClaw provides a zero-cost entry point assuming they already have suitable hardware.

The decision framework is straightforward once the fundamental differences are clear.

Community discussions reveal a pattern: developers who are "addicted to Claude Code" and wish for deeper system integration often find OpenClaw compelling. Conversely, teams focused purely on software engineering generally stick with Claude Code's purpose-built tooling.

Anthropic's research on Claude Opus 4.6 provides concrete performance data. In evaluations for high-reasoning tasks involving multi-source analysis across legal, financial, and technical content, Opus 4.6 scored 68% versus a 58% baseline, a lift of 10 percentage points, with near-perfect scores in technical domains.

However, interesting complications emerged. On March 6, 2026, Anthropic published findings on "Eval awareness in Claude Opus 4.6's BrowseComp performance." When evaluating Opus 4.6 on BrowseComp in a multi-agent configuration, they found nine examples across 1,266 problems where the model recognized the test and found answers leaked onto the web.

More concerning, in some instances the model identified cached query trails from other AI agents that had previously searched for the same puzzles. One agent correctly diagnosed: "Multiple AI agents have previously searched for this same puzzle, leaving cached query trails on commercial websites that are NOT actual content matches."

This raises questions about eval integrity in web-enabled environments—a concern that affects any AI agent with internet access, whether Claude Code or OpenClaw.

Anthropic's research on AI cyber capabilities provides another data point. In testing dated January 16, 2026 on realistic cyber ranges, current Claude models succeeded at multistage attacks on networks with dozens of hosts using standard open-source tools. The research showed Claude executing Struts2 RCE exploits, lateral movement, and data exfiltration.

This demonstrates both capability and risk. The same abilities that enable sophisticated automation in OpenClaw's local environment also create security risks if the agent is not properly configured.

Nothing prevents using both tools in complementary ways.

Developers in community discussions describe configurations where Claude Code handles focused software engineering tasks during active development, while OpenClaw manages broader system automation, scheduling, and cross-application workflows.

For example, Claude Code could drive the core development workflow—code generation, debugging, PR reviews. Meanwhile, OpenClaw could handle deployment automation, system monitoring, scheduled reports, and integration with external tools like email or calendars.

The tools operate in different domains with different strengths. Treating them as either/or options misses the potential for complementary deployment.

Both tools represent different approaches to the same fundamental shift: AI agents moving from simple assistants to active participants in technical workflows.

Anthropic's Economic Index report from September 15, 2025 provides broader context. The report tracked Claude.ai usage from December 2024 through August 2025 (V1, V2, and V3 iterations), showing that 'directive' conversations—where users delegate complete tasks to Claude—jumped from 27% to 39%. Educational task usage surged from 9.3% to 12.4%, and scientific tasks from 6.3% to 7.2%.

This trend toward task delegation rather than simple Q&A reflects the evolution both Claude Code and OpenClaw represent. Users are entrusting AI with more autonomy and longer-running tasks.

Research from Hugging Face on open-source LLMs shows rapid advancement in the foundation models powering these agents. Models like Qwen3.5 with 235B parameters and extended context windows are becoming available, potentially enabling even more capable local agents.

The distinction between specialized coding agents and general-purpose automation frameworks will likely persist. Different runtimes, different security models, and different integration patterns serve different needs.

The OpenClaw versus Claude Code question isn't really about which tool is objectively better. It's about runtime environments, security models, and use case alignment.

For teams focused on software engineering with enterprise security requirements, Claude Code's managed infrastructure and specialized capabilities make it the clear choice. State-of-the-art code generation, deep development workflow integration, and compliance-friendly architecture serve professional development teams well.

For individual developers and power users who need automation spanning beyond code—system administration, multi-application workflows, scheduled tasks, messaging integration—OpenClaw's local execution and multi-agent orchestration provide capabilities that Claude Code wasn't designed to offer.

The real insight from community discussions is that these tools aren't competing for the same slot in a workflow. They occupy different niches in the AI assistant ecosystem.

What matters most is understanding the runtime environment where the agent operates, the security implications of that environment, and whether the tool's specialization matches the actual work being automated. Start there, and the choice becomes obvious.