
Testing a market with Facebook ads requires structured experimentation with controlled budgets, clear metrics, and patience. Start with $10-20/day per test, run campaigns for 5-7 days minimum, and focus on CTR, CPC, and engagement metrics to validate demand before scaling. Test one variable at a time—audience, creative, or offer—to get actionable data without wasting spend.

Testing a new market feels like gambling with real money. Nobody wants to drop thousands on ads only to discover the market isn't interested. Facebook ads offer one of the fastest, most controllable ways to validate market demand before committing serious resources.

But here's the thing—most businesses test wrong. They either quit too early, test too many variables at once, or misread the data entirely. The result? Wasted budgets and false conclusions about whether a market actually exists.

This guide breaks down the proven frameworks for market testing with Facebook ads, the metrics that actually matter, and how to structure tests that give clear answers without burning cash.

Facebook's advertising platform provides access to a large user base with granular targeting options. That reach combined with flexible budgets makes it ideal for market validation.

Traditional market research takes weeks or months. Facebook ads deliver signal within days. The platform's targeting lets businesses reach narrow demographics, specific interests, or lookalike audiences modeled on existing customers.

The real advantage? Facebook ads provide measurable engagement data. Click-through rates, cost per click, and conversion metrics reveal whether people care enough to act. That behavioral signal is often more telling than what people say in surveys or focus groups.

The ability to track every impression, click, and conversion makes platforms like Facebook well suited to data-driven market testing.

Budget determines how fast tests produce results. Too little spend means insufficient data. Too much risks money on unproven concepts.

For most market tests, start with $10-20 per day per test campaign. This budget gives Facebook's algorithm enough room to optimize delivery while keeping total exposure manageable. Smaller audiences—under 50,000 people—can run effectively at $5 per day.

Run each test for a minimum of 5-7 days before drawing conclusions. Facebook's delivery system needs time to learn and stabilize. Turning off campaigns after 24-48 hours based on early metrics leads to false negatives.

Total exposure for a test scales with three things: the daily budget, the number of test campaigns, and the number of days each one runs.
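As a rough sketch of the arithmetic, the worst-case cost of a test is the daily budget multiplied by the number of days and the number of test campaigns. The function name `total_test_spend` and the example figures are illustrative assumptions, and the result is an upper bound, since actual daily spend can vary around the budget.

```python
def total_test_spend(daily_budget: float, days: int, campaigns: int = 1) -> float:
    """Upper bound on spend if every campaign uses its full daily budget each day."""
    if daily_budget < 0 or days < 0 or campaigns < 0:
        raise ValueError("daily_budget, days, and campaigns must be non-negative")
    return daily_budget * days * campaigns


if __name__ == "__main__":
    # Hypothetical plan: three test campaigns at $15/day, each run for 7 days.
    print(total_test_spend(15, 7, campaigns=3))
```

Running the numbers before launch makes it easier to decide whether to test audiences in parallel or one after another.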

Campaign budget optimization (CBO) can help when testing multiple ad sets, but for initial market validation, manual budgets per ad set provide clearer control.

When Facebook ads are being used to test a new market, Extuitive can help at the stage before anything goes live. The platform predicts likely ad performance before launch using AI models validated against live campaign results. That gives teams a way to compare creative options before budget is spent.

Use Extuitive to predict ad performance before launch, compare creatives before spending budget, and check ads before they go live.

Campaign objective tells Facebook what result to optimize for. Choosing wrong skews data and wastes budget.

For market testing, traffic campaigns work best in most cases. They optimize for link clicks, showing ads to people most likely to engage. This reveals whether the market finds the offer interesting enough to click through.

Engagement campaigns work when testing content appeal before a product exists. They optimize for likes, comments, and shares—useful for gauging interest in concepts or messaging angles.

Conversion campaigns require pixel data and a minimum volume of conversions to optimize effectively. For brand new markets with no baseline data, conversion campaigns often underperform until sufficient signal exists. Start with traffic, then switch to conversions once baseline engagement is established.

Use one clear goal per campaign. Don't mix objectives or success metrics within a single test. Each campaign should answer one specific question about market viability.

Audience selection determines who sees the ads. Poor audience targeting produces unreliable market signals.

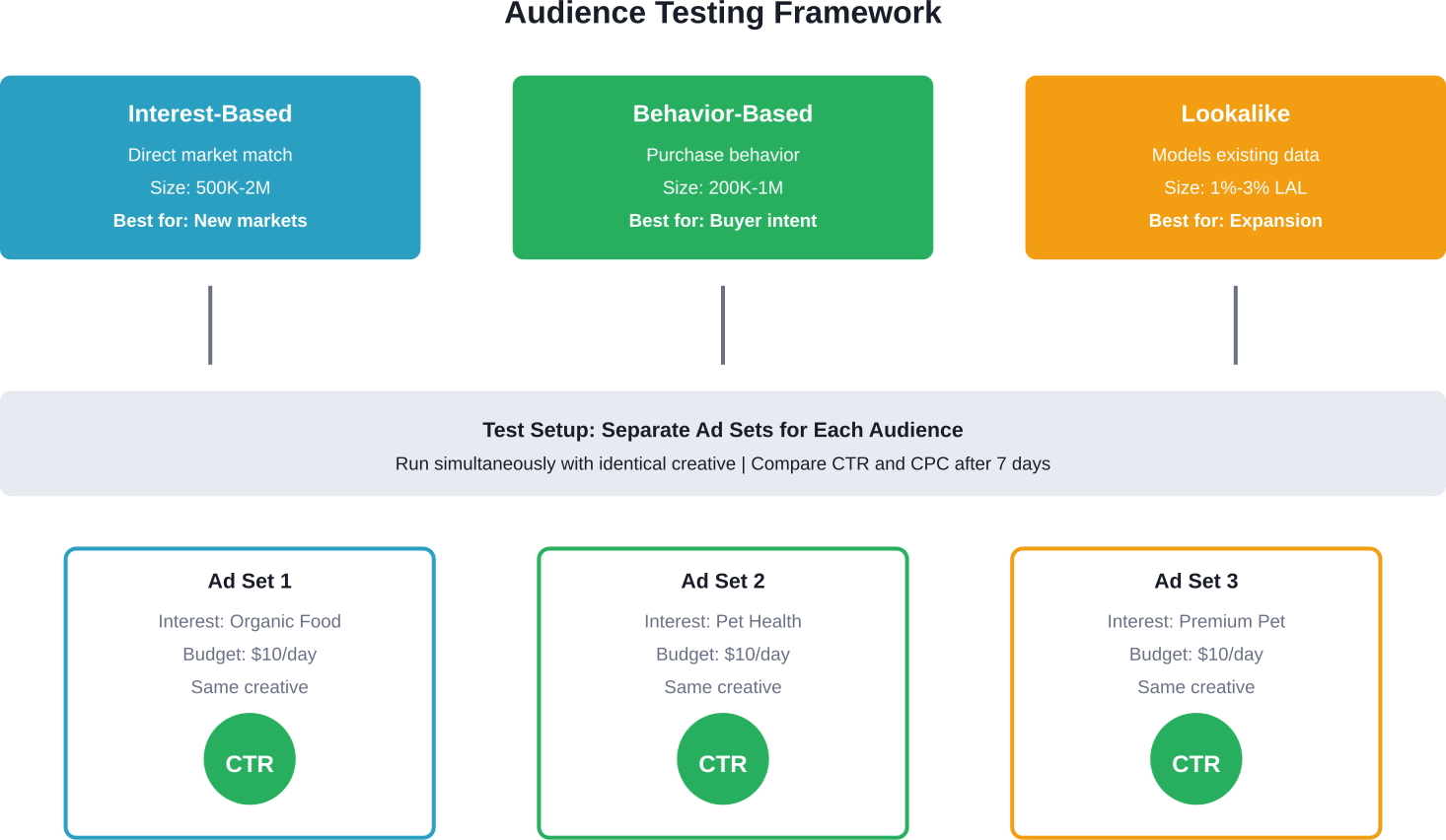

Start with interest-based audiences that directly match the market hypothesis. If testing demand for organic pet food, target people interested in organic products, pet health, and premium pet brands. Avoid broad audiences—they dilute signal.

Create separate ad sets for each audience being tested. Never combine multiple test audiences in one ad set. This allows direct comparison of performance metrics across different market segments.

Lookalike audiences work when existing customer data exists, but they're less useful for completely new markets. Lookalikes model known buyers—if testing a genuinely new market, no relevant buyer data exists yet.

Age and gender targeting should match the suspected market demographic, but leave some room for discovery. Overly narrow targeting might miss unexpected market segments.

Testing multiple variables simultaneously makes results unreadable. If an ad performs well, was it the audience, the creative, the offer, or the copy? No way to know.

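Isolate variables across separate campaigns or tests: change only the audience, only the creative, or only the offer in a given test, and hold everything else constant.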

This controlled approach produces actionable insights. When only the audience changes, performance differences clearly indicate which segment responds better.

Many marketers rush this process, testing everything at once. The data becomes noise. Slow down. Test sequentially if budget is limited.
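One way to keep tests honest is to generate the test cells from a baseline, so each cell differs from it in exactly one variable. The sketch below assumes a plain dictionary describes a test cell; the function name and the example values are hypothetical.

```python
def single_variable_tests(baseline: dict, alternatives: dict) -> list:
    """Return the baseline plus cells that each change exactly one variable."""
    cells = [dict(baseline)]
    for variable, options in alternatives.items():
        if variable not in baseline:
            raise KeyError(f"unknown variable: {variable}")
        for option in options:
            if option == baseline[variable]:
                continue  # identical to the baseline, nothing to test
            cell = dict(baseline)
            cell[variable] = option
            cells.append(cell)
    return cells


if __name__ == "__main__":
    # Hypothetical baseline for the organic pet food example.
    baseline = {"audience": "organic products", "creative": "static image", "offer": "full price"}
    alternatives = {"audience": ["premium pet brands"], "offer": ["free trial"]}
    for cell in single_variable_tests(baseline, alternatives):
        print(cell)
```

With limited budget, the same list can be run sequentially, one cell at a time, rather than in parallel.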

Not all metrics matter equally for market testing. Impressions and reach measure exposure, not interest. Focus on engagement and cost metrics instead.

Click-through rate (CTR) is the primary signal of market interest. CTR above 1% is generally considered a strong engagement indicator. Below 0.5% suggests the market isn't interested or the messaging misses the mark.

Cost per click (CPC) reveals whether interest is affordable. High CPC—above $3-5 for most markets—signals either strong competition or weak ad relevance. Low CPC combined with strong CTR indicates an underserved market with real demand.

Cost per thousand impressions (CPM) shows audience saturation and competition. Rising CPM during a test suggests audience fatigue or competitive pressure. Stable or declining CPM indicates room for scaling.

Conversion rate matters most, but early-stage market tests might not generate enough conversions for statistical significance. CTR and CPC provide earlier signals with smaller sample sizes.

Track outbound clicks specifically, not just link clicks. Link clicks can include clicks on links that keep people within Facebook, while outbound clicks count only the clicks that take people off the platform to the landing page.
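The three cost and engagement metrics follow directly from raw counts: CTR is clicks divided by impressions, CPC is spend divided by clicks, and CPM is spend per thousand impressions. The sketch below is a minimal illustration; the function name and the sample numbers are assumptions, not figures from a real campaign.

```python
from typing import Dict, Optional


def ad_metrics(impressions: int, clicks: int, spend: float) -> Dict[str, Optional[float]]:
    """Compute CTR (%), CPC, and CPM; a metric is None when its denominator is zero."""
    ctr = 100 * clicks / impressions if impressions else None
    cpc = spend / clicks if clicks else None
    cpm = 1000 * spend / impressions if impressions else None
    return {"ctr_pct": ctr, "cpc": cpc, "cpm": cpm}


if __name__ == "__main__":
    # Hypothetical week of data: 12,000 impressions, 150 outbound clicks, $105 spent.
    print(ad_metrics(impressions=12_000, clicks=150, spend=105.0))
```

Passing outbound clicks rather than all link clicks keeps the CTR and CPC figures tied to actual landing page visits.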

Creative testing requires different formats and angles, not just color variations. Test concepts, not cosmetic differences.

Start with one creative per product or market angle. Use either a static image or short video—test format as a variable only after validating the market itself.

Static images work well for straightforward products and clear value propositions. They load fast and cost less to produce. Videos outperform for products that need demonstration or storytelling.

Test different creative angles, for example a problem-focused angle against a benefit-focused one.

Headlines and primary text should match the creative angle. Don't pair a problem-focused image with benefit-focused copy. Mismatched messaging confuses the signal.

Keep creative production lean during testing. High-production video isn't necessary to validate market demand. Authentic, simple content often tests better than polished corporate material.

Sometimes the market exists but the offer structure doesn't match buying preferences. Price points, bundles, trials, and guarantees all affect response.

Test different offer frames with the same product, varying one element at a time: the price point, the bundle, a trial, or a guarantee.

Offer framing can significantly impact CTR with identical products and audiences. The market might want the product but need a different entry point.

Risk reversal—guarantees, free returns, no-commitment trials—particularly matters for new or unfamiliar products. Test adding these elements before concluding a market doesn't exist.

The biggest testing mistake is premature optimization. Turning off ad sets after one day, pausing campaigns overnight, or constantly adjusting budgets prevents the algorithm from stabilizing.

Facebook's delivery system uses machine learning to find the right people within the target audience. This learning phase requires 50+ optimization events (conversions, clicks, etc.) or 5-7 days of consistent delivery.

Don't pause campaigns overnight to save money. Budget pacing already controls daily spend, and repeated pausing interrupts delivery and can push the ad set back into the learning phase.

Resist the urge to tweak targeting mid-test. Each significant edit restarts learning. Let the campaign run untouched through the full testing window.

Monitor performance, but don't react to daily fluctuations. Day one might show 0.3% CTR. Day five could hit 1.8%. Early performance rarely predicts final results.
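A simple guard can help enforce that patience. The sketch below treats a test as ready to evaluate once it has either run for the minimum number of days or accumulated 50 optimization events, mirroring the thresholds described above. The function name and defaults are assumptions for illustration, not a rule published by the platform.

```python
def ready_to_evaluate(days_running: int, optimization_events: int,
                      min_days: int = 5, min_events: int = 50) -> bool:
    """True once the test has enough delivery time or enough optimization events."""
    return days_running >= min_days or optimization_events >= min_events


if __name__ == "__main__":
    print(ready_to_evaluate(days_running=1, optimization_events=12))
    print(ready_to_evaluate(days_running=6, optimization_events=30))
```

Checking this before opening the results is a small way to avoid reacting to day-one numbers.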

After the testing window closes, compare results against benchmarks and between test variations. Look for patterns, not just single metrics.

Strong market signals show up as a combination: CTR above 1%, low CPC, and stable or declining CPM over the test window.

Weak signals don't always mean the market doesn't exist. They might indicate poor ad-to-market fit. Before abandoning a market, test a different angle or offer.

Compare relative performance when testing multiple audiences. Even if the average CTR across all audiences is 0.7%, one audience at 0.9% and another at 0.5% reveals which segment has stronger potential.
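Before acting on a gap like that, it can be worth checking whether the difference is larger than chance would explain. The sketch below uses a standard two-proportion z-test on click-through rates, which is one common choice rather than anything Facebook prescribes; the function name and the sample counts are assumptions.

```python
import math
from typing import Tuple


def compare_ctr(clicks_a: int, impressions_a: int,
                clicks_b: int, impressions_b: int) -> Tuple[float, float]:
    """Two-proportion z-test on CTR. Returns (z statistic, two-sided p-value)."""
    if impressions_a <= 0 or impressions_b <= 0:
        raise ValueError("impressions must be positive")
    rate_a = clicks_a / impressions_a
    rate_b = clicks_b / impressions_b
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if std_err == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (rate_a - rate_b) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the normal distribution
    return z, p_value


if __name__ == "__main__":
    # Hypothetical: 0.9% vs 0.5% CTR, 10,000 impressions per audience.
    z, p = compare_ctr(90, 10_000, 50, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the gap is unlikely to be noise; with only a few hundred impressions per audience, the same percentage gap would usually not clear that bar.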

Look for engagement quality, not just quantity. High CTR with 5-second page visits indicates clickbait mismatch. Lower CTR with 2-minute visits and multiple page views signals genuine interest.

When tests identify winning combinations, resist the urge to 10x budgets overnight. Rapid scaling disrupts delivery and raises costs.

Increase budgets by 20-30% every 3-4 days. This gradual approach avoids sending campaigns back into the learning phase and keeps delivery stable in the auction.
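To see how long a gradual ramp takes, the schedule can be computed ahead of time. The sketch below compounds a fixed percentage increase at a fixed interval until a target budget is reached; the function name, the 25% step, and the dollar amounts are illustrative assumptions within the ranges given above.

```python
from typing import List, Tuple


def scaling_schedule(start_budget: float, target_budget: float,
                     step_pct: float = 0.25, days_between: int = 3) -> List[Tuple[int, float]]:
    """Return (day, daily budget) pairs, raising the budget by step_pct every days_between days."""
    if start_budget <= 0 or step_pct <= 0 or days_between <= 0:
        raise ValueError("start_budget, step_pct, and days_between must be positive")
    day, budget = 0, start_budget
    schedule = [(day, round(budget, 2))]
    while budget < target_budget:
        day += days_between
        budget = min(budget * (1 + step_pct), target_budget)  # never overshoot the target
        schedule.append((day, round(budget, 2)))
    return schedule


if __name__ == "__main__":
    # Hypothetical ramp from $20/day to $50/day.
    for day, budget in scaling_schedule(20, 50):
        print(f"day {day}: ${budget:.2f}/day")
```

Under these assumptions, moving from $20 to $50 per day takes about two weeks, which is a useful reality check against the temptation to jump there overnight.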

Duplicate winning ad sets rather than just raising budgets. Run 3-5 identical ad sets at moderate budgets instead of one massive ad set. This diversifies delivery and reduces risk of algorithm hiccups.

Expand audience size by layering in adjacent interests or broader demographics once core audiences perform well. Don't jump straight to broad targeting—widen incrementally.

Initial tests generate data beyond the immediate metrics. People who engaged but didn't convert represent warm market segments worth retargeting.

Create custom audiences of people who engaged with the test ads but didn't convert.

Retargeting campaigns typically convert at higher rates than cold traffic, and at a lower cost per conversion. They validate whether initial interest translates to purchases with additional touchpoints.

Test different retargeting windows. Some products need 3-day windows (impulse purchases), others perform better with 14-30 day windows (considered purchases).

Even with structured frameworks, specific errors derail market tests. Watch for the patterns covered earlier: quitting too early, testing too many variables at once, and misreading the data.

Test results fall into three categories: clear winners, clear losers, and ambiguous middle ground.

Clear winners show CTR above 1.5%, CPC under $2, and conversion rates above industry benchmarks. Scale these.

Clear losers show CTR below 0.5% across multiple creative and audience tests. Either the market doesn't exist or the product-market fit is wrong. Pivot or abandon.

The middle ground—0.7-1.2% CTR, moderate engagement, some conversions—requires iteration. Test different offers, creative angles, or audience segments before concluding.

Run at least 2-3 test cycles with different variables before abandoning a market completely. Sometimes the market exists but the first approach missed the mark.
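The three categories can be written down as a small decision rule. The sketch below uses the CTR and CPC thresholds stated above and, as a simplifying assumption, treats everything that is neither a clear winner nor a clear loser as middle ground, including the ranges the thresholds leave unspecified. It ignores conversion rate benchmarks, which vary by industry, and the function name and labels are hypothetical.

```python
def classify_test(ctr_pct: float, cpc: float) -> str:
    """Classify a single test result as 'scale', 'pivot_or_abandon', or 'iterate'."""
    if ctr_pct > 1.5 and cpc < 2.0:
        return "scale"
    if ctr_pct < 0.5:
        return "pivot_or_abandon"
    return "iterate"  # assumption: anything in between needs another test cycle


if __name__ == "__main__":
    # Hypothetical results from three test cells.
    for ctr, cpc in [(1.8, 1.20), (0.3, 0.90), (0.9, 1.50)]:
        print(ctr, cpc, classify_test(ctr, cpc))
```

A single "pivot_or_abandon" result is not a verdict on its own; the rule of running 2-3 cycles with different variables still applies before walking away.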

Online advertising platforms are subject to Federal Trade Commission oversight regarding truthfulness and consumer protection. This applies to market testing campaigns just as much as scaled campaigns.

Avoid making unverified product claims just to test engagement. False advertising violations apply regardless of ad spend or campaign size. Meta's Advertising Standards (formerly Facebook Ad Policies) and the updated FTC Health Products Compliance Guidance (2022/2023) require strict adherence to substantiated claims. Since 2024, Meta has also mandated the disclosure of 'Altered or Synthetic Content' (AI-generated) in social and political advertising, with expanded monitoring for health and financial services.

When testing with testimonials or user-generated content, ensure proper permissions and disclosures are in place. Even during testing phases, compliance with advertising standards protects both the business and the platform account.

Facebook's ad policies prohibit certain content and claims. Familiarize yourself with restricted categories before testing in health, financial services, or other regulated industries.

Market validation is just the beginning. Tests that confirm demand need structured scaling plans to capitalize on findings.

Document what worked: specific audiences, creative angles, offers, and messaging. These become the foundation for scaled campaigns.

Create lookalike audiences from test converters once 100+ conversions exist. These expand reach while maintaining quality.

Develop creative variations based on test winners. Don't scale with just one ad—build a library of proven formats and messages to prevent fatigue.

Track unit economics throughout scaling. CPA and ROAS that work at $20/day might not hold at $200/day. Monitor breakeven points and profit margins as spend increases.
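The core unit economics reduce to a few ratios: CPA is spend divided by conversions, ROAS is revenue divided by spend, and breakeven ROAS is one divided by the gross margin. The sketch below assumes the margin is measured before ad costs and is expressed as a fraction between 0 and 1; the function name and the sample figures are illustrative.

```python
from typing import Dict, Optional


def unit_economics(spend: float, conversions: int, revenue: float,
                   gross_margin: float) -> Dict[str, Optional[float]]:
    """Compute CPA, ROAS, breakeven ROAS, and whether ad spend is covered by margin."""
    if not 0 < gross_margin <= 1:
        raise ValueError("gross_margin must be a fraction in (0, 1]")
    cpa = spend / conversions if conversions else None
    roas = revenue / spend if spend else None
    breakeven_roas = 1 / gross_margin
    profitable = roas is not None and roas >= breakeven_roas
    return {"cpa": cpa, "roas": roas, "breakeven_roas": breakeven_roas, "profitable": profitable}


if __name__ == "__main__":
    # Hypothetical week at $20/day: $140 spend, 7 orders, $420 revenue, 40% gross margin.
    print(unit_economics(spend=140, conversions=7, revenue=420, gross_margin=0.4))
```

Recomputing these figures at each budget step shows whether the economics that held at low spend still hold as the audience widens.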

The market testing framework provides the data foundation. Scaling transforms that data into sustainable growth. But without proper testing, scaling becomes expensive guesswork.

Test methodically. Scale deliberately. The businesses that master this cycle consistently outperform competitors who skip validation and scale on hope.