
AI agent workflow automation marks a shift toward autonomous AI systems that observe their environment, make decisions, and execute multi-step tasks without constant human oversight. These agents coordinate through structured workflows, combining LLM capabilities with external tools and contextual understanding to handle everything from document processing to multi-agent orchestration. Reported results are already notable: insurance underwriting agents parse documents with over 95% accuracy, and the AOrchestra framework reports a 16.28% improvement from automated sub-agent creation.

The conversation around AI has fundamentally shifted. We're not talking about chatbots anymore—those reactive interfaces that wait for prompts and respond within narrow boundaries. What's happening now is far more interesting.

AI agents are autonomous programs that observe their surroundings, decide on actions, and execute multi-step tasks without constant human guidance. When these agents operate through structured workflows, they become capable of handling complexity that traditional automation can't touch.

According to an arXiv preprint on orchestrated multi-agent systems, autonomous agents in insurance underwriting now parse applications and supporting documents with over 95% accuracy, enabling much faster policy issuance. That is a step change rather than an incremental improvement.


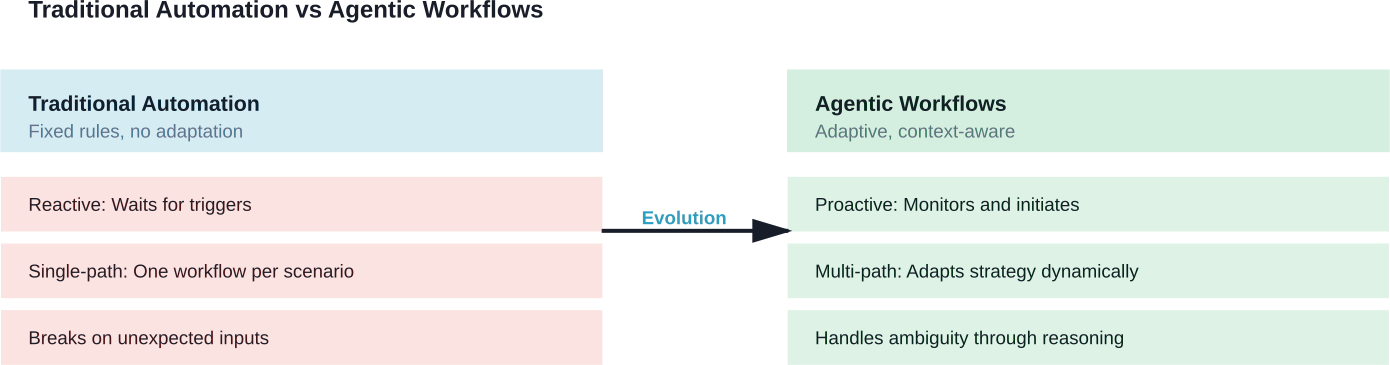

Traditional automation follows rigid rules. If-this-then-that logic works beautifully for predictable processes, but falls apart when context matters or when tasks require judgment.

Agentic workflows flip this model. Instead of following predetermined paths, AI agents interpret goals, assess context, and adapt their approach based on what they encounter. The difference isn't subtle.

Here's what sets agentic workflows apart:

Traditional automation handles the predictable. Agentic workflows handle the complex.

Building effective agentic workflows requires several foundational elements working together. None of these pieces are optional—remove one, and the system loses its adaptive capability.

Agents need to observe their operational context. This goes beyond reading inputs—it involves monitoring data streams, tracking process states, and recognizing when conditions warrant action.

In practical terms, perception might mean watching email queues for specific patterns, monitoring database changes for anomalies, or tracking API responses for error conditions. The agent doesn't wait to be told something happened. It watches and notices.
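As a minimal sketch of that kind of perception, the function below scans a batch of email subjects for a watched pattern. The pattern, the sample subjects, and the name `observe` are assumptions made for illustration; a real agent would run something like this on a schedule or in response to queue events.

```python
import re
from typing import Iterable, List

# Assumed pattern for illustration: subjects mentioning a refund or chargeback.
PATTERN = re.compile(r"\b(refund|chargeback)\b", re.IGNORECASE)

def observe(subjects: Iterable[str]) -> List[str]:
    """Return the subjects that match the watched pattern, in arrival order."""
    return [s for s in subjects if PATTERN.search(s)]

inbox = ["Refund request #12", "Weekly newsletter", "chargeback notice"]
flagged = observe(inbox)
```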

Large language models provide the cognitive foundation for agentic systems. But reasoning requires more than text generation—it demands structured approaches to problem decomposition and planning.

Research from the arXiv survey 'A Survey on Agent Workflow -- Status and Future' (submitted August 2, 2025) emphasizes that autonomous agents have emerged as powerful paradigms for achieving general intelligence through structured reasoning capabilities. These systems break complex goals into manageable subtasks, evaluate multiple approaches, and select strategies based on context.

An agent without tools is just a chatbot. Real workflow automation requires agents to interact with external systems—databases, APIs, file systems, specialized computation engines.

Microsoft's AutoGen framework demonstrates this well, providing strong support for agents that orchestrate multiple tools through conversational paradigms. Agents discuss which tools to invoke, coordinate their use, and iterate based on results.
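Tool integration can be pictured as a registry plus a dispatcher. The sketch below is not AutoGen's API; `TOOLS`, `register_tool`, and `dispatch` are hypothetical names, and the call dictionary stands in for whatever structured request an LLM would emit.

```python
from typing import Any, Callable, Dict

# Hypothetical tool registry: maps a tool name to a plain Python callable.
TOOLS: Dict[str, Callable[..., Any]] = {}

def register_tool(name: str):
    """Decorator that adds a function to the registry under `name`."""
    def wrap(fn):
        TOOLS[name] = fn
        return fn
    return wrap

@register_tool("add")
def add(a: float, b: float) -> float:
    return a + b

@register_tool("word_count")
def word_count(text: str) -> int:
    return len(text.split())

def dispatch(call: Dict[str, Any]) -> Any:
    """Run a tool call of the form {"tool": name, "args": {...}}."""
    name = call["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("args", {}))
```

Rejecting unknown tool names explicitly keeps a malformed model output from silently doing nothing.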

Effective agents maintain context across interactions. Short-term memory tracks the current task state. Long-term memory stores learned patterns, successful strategies, and domain knowledge.

Without memory, agents become stateless responders—functional for single interactions but incapable of handling multi-step workflows that span hours or days.
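One way to picture the two memory tiers is a bounded window for the current task alongside a durable key-value store. This is a sketch under assumed names (`AgentMemory`, `remember_step`, `store_fact`), not any framework's memory API, and the window size of 3 is arbitrary.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List

@dataclass
class AgentMemory:
    # Short-term: bounded window of recent steps for the current task.
    short_term: Deque[str] = field(default_factory=lambda: deque(maxlen=3))
    # Long-term: durable facts keyed by topic, kept across tasks.
    long_term: Dict[str, str] = field(default_factory=dict)

    def remember_step(self, step: str) -> None:
        self.short_term.append(step)  # the oldest step falls off when full

    def store_fact(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def context(self) -> List[str]:
        """Recent steps plus stored facts, as they might be fed into a prompt."""
        facts = [f"{k}: {v}" for k, v in sorted(self.long_term.items())]
        return list(self.short_term) + facts
```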

When workflows require multiple specialized agents, structured communication becomes critical. According to arXiv research on multi-agent orchestration, autonomous agents collaborate through defined protocols and communication patterns to achieve complex shared objectives.

These protocols determine how agents request help, share findings, negotiate task allocation, and resolve conflicts when goals compete.

Not all agents are built the same. Different workflow challenges demand different agent architectures.

These agents operate on condition-action rules. They observe the current state and execute predefined responses. Think of them as enhanced automation—more flexible than traditional scripts but not truly adaptive.

Simple reflex agents work well for straightforward processes where the mapping between conditions and actions is clear and stable.
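A condition-action agent can be sketched in a few lines. The thresholds and action names below are invented for illustration; the point is only that rules are checked in order and the first match determines the action.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]
Rule = Tuple[Callable[[State], bool], str]

# Condition-action rules, checked in order; the first match wins.
RULES: List[Rule] = [
    (lambda s: s["error_rate"] > 0.05, "page_on_call"),
    (lambda s: s["queue_depth"] > 100, "scale_up"),
]

def reflex_agent(state: State, default: str = "no_op") -> str:
    """Return the action of the first rule whose condition holds."""
    for condition, action in RULES:
        if condition(state):
            return action
    return default
```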

Model-based agents maintain internal representations of how the world works. They track state changes, predict outcomes, and adjust actions accordingly.

These agents can handle partial observability—situations where they can't see everything relevant to their task. The internal model fills gaps and guides decision-making even with incomplete information.
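The sketch below illustrates the idea with a hypothetical inventory agent: it predicts stock from sales it knows about and corrects the estimate only when a direct count happens to be available. The class name and the reorder rule are assumptions for illustration.

```python
from typing import Optional

class ModelBasedAgent:
    """Keeps an internal estimate of stock and updates it from partial observations."""

    def __init__(self, initial_stock: int, reorder_level: int):
        self.estimated_stock = initial_stock
        self.reorder_level = reorder_level

    def update(self, sold: int, observed_stock: Optional[int] = None) -> None:
        # Predict the new state from the model (stock falls by units sold)...
        self.estimated_stock -= sold
        # ...and correct it whenever a direct observation is available.
        if observed_stock is not None:
            self.estimated_stock = observed_stock

    def act(self) -> str:
        return "reorder" if self.estimated_stock <= self.reorder_level else "wait"
```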

Goal-based agents work backward from desired outcomes. Given an objective, they generate plans, evaluate approaches, and execute sequences of actions designed to achieve specific results.

This architecture suits project-oriented workflows where the end state is clear but the path to get there requires dynamic planning.
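As a rough illustration of planning toward a goal, the sketch below searches a small hand-written state graph for the shortest action sequence. Note the substitution: it uses a forward breadth-first search rather than backward chaining, and the states and actions are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical state graph: state -> {action: next_state}.
ACTIONS: Dict[str, Dict[str, str]] = {
    "draft": {"review": "reviewed"},
    "reviewed": {"approve": "approved", "revise": "draft"},
    "approved": {"publish": "published"},
}

def plan(start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search for the shortest action sequence from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for action, nxt in ACTIONS.get(state, {}).items():
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [action]))
    return None  # the goal is unreachable from the start state
```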

Retrieval-augmented generation agents combine LLM reasoning with external knowledge retrieval. Instead of relying solely on training data, these agents pull relevant information from document stores, databases, or knowledge graphs during task execution.

RAG-enhanced agents offer several distinct advantages according to analysis from workflow automation experts: knowledge recency through access to updated information beyond training data, factual grounding in verifiable source material, and domain specificity through tailored knowledge bases.
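The retrieval step can be sketched without any external service. The example below ranks a tiny in-memory document list by word overlap with the query and assembles a grounded prompt. Word overlap is a stand-in for the embedding search a production system would more likely use, and the documents and function names are invented for illustration.

```python
from typing import List, Set

DOCS = [
    "Refunds are issued within 14 days of a return.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available on weekdays from 9 to 5.",
]

def tokenize(text: str) -> Set[str]:
    return {w.strip(".,?!").lower() for w in text.split()}

def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Rank documents by the number of words they share with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, docs: List[str]) -> str:
    """Place the retrieved text ahead of the question so the answer can cite it."""
    context = "\n".join(retrieve(query, docs))
    return f"Context:\n{context}\n\nQuestion: {query}"
```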

Complex workflows often exceed what any single agent can handle effectively. Multi-agent systems distribute work across specialized agents that coordinate through structured interaction.

Research on the AOrchestra framework shows that automated sub-agent creation for agentic orchestration achieves a 16.28% improvement when paired with representative orchestration approaches. The framework dynamically creates specialized sub-agents as needed rather than predefining all agent types.

Some workflows require human judgment at critical decision points. Human-in-the-loop agents automate routine portions while escalating ambiguous cases or high-stakes decisions to people.

This hybrid approach combines automation's efficiency with human expertise and accountability.
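A simple escalation rule makes the split concrete. In this sketch the confidence floor and the monetary ceiling are assumed values, and `Decision` and `route` are hypothetical names; real thresholds would come from the business's own risk tolerance.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    label: str
    confidence: float  # assumed to lie in [0, 1]
    amount: float      # monetary value at stake

def route(decision: Decision, min_conf: float = 0.9, max_amount: float = 10_000) -> str:
    """Auto-apply confident, low-stakes decisions; send everything else to a person."""
    if decision.confidence < min_conf or decision.amount > max_amount:
        return "human_review"
    return "auto_apply"
```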

Theory matters less than results. Where are agentic workflows actually delivering value?

Insurance companies deploy networks of specialized AI agents to automate labor-intensive underwriting processes. Autonomous agents parse applications, verify supporting documents, assess risk factors, and make approval recommendations.

According to arXiv research on multi-agent orchestration, these systems achieve over 95% accuracy in document processing, dramatically reducing policy issuance time from days to hours.

Multi-agent systems handle customer inquiries by coordinating specialized agents for different knowledge domains. One agent handles account lookups, another manages policy questions, a third escalates complex issues to human representatives.

The coordination happens transparently—customers interact with what feels like a single intelligent system, while behind the scenes multiple agents collaborate to resolve requests.

Development teams use agentic workflows for code review, test generation, bug triage, and deployment orchestration. Agents analyze pull requests, identify potential issues, generate test cases, and coordinate CI/CD pipelines.

Microsoft's Azure AI Agent Service supports Python and C# for building agents with user-defined tools. This service is currently in Public Preview.

RAG-enhanced agents automate data mining, pattern recognition, and insight generation. These systems pull data from multiple sources, apply analytical models, and generate reports without manual intervention.

The key advantage? Agents adapt their analysis approach based on data characteristics rather than following rigid analytical scripts.

According to IEEE research on intelligent agentic frameworks, autonomous agents are moving toward self-managing network configuration. These systems monitor network state, detect configuration drift, identify optimization opportunities, and execute changes within defined safety parameters.

Moving from concept to production requires structured implementation. Here's how successful teams approach it.

Start with specific outcomes. "Automate customer service" is too vague. "Reduce first-response time for tier-1 support tickets by 60% while maintaining 90% customer satisfaction" gives agents clear targets.

Success metrics should be quantifiable and aligned with business value—time saved, error reduction, cost per transaction, user satisfaction scores.

Document the current process in detail. Where do humans make decisions? What information do they use? What tools do they access? Which steps are sequential versus parallel?

Understanding the existing workflow reveals where agents add value and where human judgment remains essential.

Framework choice matters. According to community discussions around AI agent frameworks, Microsoft AutoGen excels at conversational multi-agent paradigms with strong human-in-the-loop support. Other frameworks prioritize different trade-offs—developer experience, cloud integration, scalability characteristics.

Evaluate frameworks based on actual workflow requirements, not feature checklists. The "best" framework is the one that matches specific use cases.

Resist the temptation to build complex multi-agent systems immediately. Single-agent workflows are simpler to debug, easier to validate, and faster to deploy.

Prove value with simple agents first. Complexity can scale as needs grow and teams gain experience.

Every automated flow requires verification through secure sandbox environments that simulate real scenarios. Data masking and seeding protect sensitive information during testing. Repeatable test cases validate outcomes at scale.

According to analysis from IT teams evaluating AI workflow automation, testing must also account for scalability and peak loads—agents that work fine with 10 concurrent tasks might fail catastrophically at 1,000.

Even highly autonomous workflows need human intervention points. Build escalation paths for ambiguous situations, monitoring dashboards for unusual patterns, and override mechanisms for emergencies.

The goal isn't to eliminate humans—it's to focus human attention where judgment matters most.

Certain workflow structures appear repeatedly across different domains and use cases.

The simplest pattern—agent completes task A, then task B, then task C. Each step depends on the previous one completing successfully.

Sequential chains work for linear processes where order matters and parallel execution offers no advantage. Document processing pipelines often follow this pattern.
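A sequential chain reduces to passing one step's output into the next. The three steps below are toy stand-ins for real document-processing stages, and `run_chain` is a hypothetical helper.

```python
from typing import Callable, List

def extract(doc: str) -> str:
    return doc.strip()

def normalize(text: str) -> str:
    return " ".join(text.lower().split())

def summarize(text: str) -> str:
    return text[:20]  # toy summary: keep the first 20 characters

def run_chain(steps: List[Callable[[str], str]], payload: str) -> str:
    """Feed each step's output into the next; an exception halts the chain."""
    for step in steps:
        payload = step(payload)
    return payload

result = run_chain([extract, normalize, summarize], "  Claim   FORM received\n")
```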

Multiple agents work simultaneously on different aspects of a problem, then results aggregate into a unified output. This pattern accelerates workflows where independent subtasks can execute concurrently.

Research synthesis represents a common use case—multiple agents search different knowledge domains simultaneously, then a coordinator aggregates findings into coherent reports.
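The fan-out and aggregate steps can be sketched with the standard library's thread pool. The three search functions are placeholders that return canned strings; in practice each would call a different agent or data source.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Placeholder workers standing in for agents that search different domains.
def search_news(q: str) -> List[str]:
    return [f"news:{q}"]

def search_papers(q: str) -> List[str]:
    return [f"paper:{q}"]

def search_docs(q: str) -> List[str]:
    return [f"doc:{q}"]

def fan_out(query: str, workers: List[Callable[[str], List[str]]]) -> List[str]:
    """Run all workers concurrently, then merge results in submission order."""
    with ThreadPoolExecutor(max_workers=len(workers)) as pool:
        futures = [pool.submit(w, query) for w in workers]
        results = [f.result() for f in futures]
    return [item for part in results for item in part]
```

Collecting results in submission order keeps the merged output deterministic even though the workers finish in arbitrary order.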

A coordinator agent decomposes complex tasks and delegates subtasks to specialized agents. The coordinator monitors progress, handles exceptions, and integrates results.

According to AOrchestra research, dynamic sub-agent creation extends this pattern—the system generates specialized agents on-demand rather than maintaining predefined agent pools.
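A static version of the coordinator pattern is sketched below. It does not reproduce AOrchestra's dynamic sub-agent creation; the specialist table is fixed, and every name in it is hypothetical. It shows only the delegate, handle-exception, and integrate loop.

```python
from typing import Callable, Dict, List, Tuple

# Fixed table of specialists; each takes a task description and returns a result.
SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "billing": lambda t: f"billing handled: {t}",
    "shipping": lambda t: f"shipping handled: {t}",
}

def coordinate(subtasks: List[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Delegate (kind, task) pairs to specialists; collect unassignable tasks separately."""
    report: Dict[str, List[str]] = {"done": [], "unassigned": []}
    for kind, task in subtasks:
        handler = SPECIALISTS.get(kind)
        if handler is None:
            report["unassigned"].append(task)  # exception path: no specialist exists
        else:
            report["done"].append(handler(task))
    return report
```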

Agents complete tasks, evaluate results against quality criteria, and refine outputs through multiple iterations. This pattern suits creative or analytical work where initial attempts rarely achieve optimal results.

Code generation often uses iterative refinement—generate initial code, test functionality, identify issues, revise implementation, repeat until tests pass.
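The loop itself is small. In the sketch below, `check` plays the role of the test suite and `revise` the role of the model producing a new attempt; both are toy functions here, and the round budget guards against refining forever.

```python
from typing import Callable, Optional, Tuple

def refine(draft: str,
           check: Callable[[str], Optional[str]],
           revise: Callable[[str, str], str],
           max_rounds: int = 5) -> Tuple[str, int]:
    """Revise `draft` until `check` reports no issue or the round budget is spent."""
    for rounds in range(max_rounds):
        issue = check(draft)
        if issue is None:
            return draft, rounds
        draft = revise(draft, issue)
    if check(draft) is None:
        return draft, max_rounds
    raise RuntimeError("no acceptable result within the round budget")

# Toy quality criterion: the text may contain at most three words.
def too_long(text: str) -> Optional[str]:
    return "too long" if len(text.split()) > 3 else None

def drop_last_word(text: str, issue: str) -> str:
    return " ".join(text.split()[:-1])
```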

Agents handle routine portions while humans provide guidance, make critical decisions, or validate outputs. The pattern alternates between autonomous and supervised phases.

High-stakes workflows like medical diagnosis or financial trading often adopt this hybrid approach—agents surface insights and recommendations, humans make final calls.

The AI agent framework landscape evolved significantly through 2025 and into 2026. Choosing well requires understanding core trade-offs.

AutoGen builds on a conversational paradigm where agents discuss tasks with each other and with humans. The framework excels at human-in-the-loop workflows and supports both Python and .NET implementations.

Strong integration with Microsoft's Azure ecosystem makes AutoGen attractive for organizations already invested in Azure infrastructure.

Major cloud platforms now offer managed agent services. Azure AI Agent Service provides SDK support for Python and C# with built-in agent templates and tool integrations.

Managed services reduce infrastructure complexity but may limit customization compared to framework-based approaches.

Research on resource-efficient agentic workflow orchestration highlights platforms like Murakkab, designed specifically for cloud-based multi-model coordination with complex control logic.

These platforms optimize for specific constraints—minimizing token generation to reduce latency and GPU load, or coordinating models with different capability profiles.

Framework evaluations should prioritize:

Feature parity matters less than fit with actual requirements. An over-featured framework that doesn't match workflow patterns delivers less value than a simpler tool aligned with specific needs.

Deploying agents is just the beginning. Continuous monitoring and optimization determine long-term success.

Track metrics across multiple dimensions:

No single metric tells the complete story. Balanced scorecards prevent optimizing one dimension at the expense of others.

According to research on resource-efficient orchestration, higher token generation correlates with increased latency, cost, and GPU load. For example, video Q&A configurations using Gemma-3-27B with 10 extracted frames and speech-to-text enabled achieve the highest accuracy but also generate the most tokens.

The optimization challenge? Balancing quality against resource consumption. Sometimes simpler models with lower token generation deliver acceptable results at a fraction of the cost.

Optimization follows predictable patterns:

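Small improvements compound. Five successive 10% latency reductions applied to the same workflow cut total execution time by about 41% (1 − 0.9^5 ≈ 0.41). By contrast, a one-time 10% reduction in each of five sequential steps cuts the total by only 10%.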
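The arithmetic of compounding is easy to check. The sketch below, with hypothetical function names and a 100-second baseline chosen for illustration, compares five successive 10% reductions against a single 10% cut to each of five sequential steps.

```python
from typing import List

def total_after_rounds(baseline: float, reduction: float, rounds: int) -> float:
    """Total time after applying the same fractional reduction `rounds` times in a row."""
    return baseline * (1 - reduction) ** rounds

def total_after_per_step_cut(step_times: List[float], reduction: float) -> float:
    """Total time after cutting every sequential step once by the same fraction."""
    return sum(t * (1 - reduction) for t in step_times)

compounded = total_after_rounds(100.0, 0.10, 5)              # about 59.05 seconds
one_pass = total_after_per_step_cut([20.0] * 5, 0.10)        # about 90 seconds
```

Only repeated rounds of optimization compound; a single pass over every step yields the same percentage saving as the per-step cut.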

Autonomous agents operating in production environments introduce security and compliance challenges that demand proactive design.

Agents need sufficient permissions to accomplish tasks but excessive access creates risk. Apply least-privilege principles—grant only the minimum permissions required for specific functions.

Separate agent roles by function. An agent handling customer inquiries shouldn't have database write permissions beyond logging interactions.
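A deny-by-default permission check captures the principle. The role names and permission strings below are assumptions for illustration; the structure is an allow-list in which anything not explicitly granted is refused.

```python
from typing import Dict, FrozenSet

# Explicit allow-list per agent role; anything not listed is denied.
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "support_agent": frozenset({"customers:read", "interaction_log:write"}),
    "billing_agent": frozenset({"customers:read", "invoices:read", "invoices:write"}),
}

def authorize(role: str, permission: str) -> None:
    """Raise PermissionError unless the role was explicitly granted the permission."""
    if permission not in ROLE_PERMISSIONS.get(role, frozenset()):
        raise PermissionError(f"{role} may not {permission}")
```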

Agentic workflows often process sensitive information such as customer data, financial records, and health information. Designs must comply with the regulations and standards that apply, such as GDPR, HIPAA, and SOC 2, along with any industry-specific requirements.

Data handling policies should specify what agents can access, how long information persists in memory, and where processing occurs geographically.

Every agent action should generate auditable logs. When agents make decisions, capture the reasoning process, data accessed, tools invoked, and outcomes achieved.

Auditability enables compliance validation, debugging, and continuous improvement. It also provides accountability when automated decisions require review or explanation.
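One common shape for such logs is a JSON record per action. The field names below are assumptions rather than a standard schema; they simply mirror the items listed above (reasoning, data accessed, tools invoked, outcome) plus a UTC timestamp.

```python
import json
from datetime import datetime, timezone
from typing import List

def audit_record(agent: str, action: str, reasoning: str,
                 data_accessed: List[str], tools_invoked: List[str],
                 outcome: str) -> str:
    """Serialize one agent action as a single JSON line suitable for append-only logs."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "reasoning": reasoning,
        "data_accessed": data_accessed,
        "tools_invoked": tools_invoked,
        "outcome": outcome,
    }
    return json.dumps(record, sort_keys=True)
```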

According to analysis from teams implementing AI workflow automation, comprehensive testing requires secure sandbox environments, data masking to protect sensitive information, and repeatable test cases at scale.

Testing shouldn't just validate happy paths. Edge cases, adversarial inputs, and failure scenarios reveal where agents might behave unexpectedly or unsafely.

The National Institute of Standards and Technology published the AI Risk Management Framework (AI RMF) to cultivate trust in AI technologies while promoting innovation and mitigating risk. Organizations building agentic workflows should align implementations with established risk management frameworks like this one.

The framework is organized around four functions: govern, map, measure, and manage. In practice that means establishing governance, mapping risks to specific contexts, measuring impact, and managing risk throughout the AI lifecycle.

Agentic workflows aren't a universal solution. Understanding limitations prevents misapplication.

Running sophisticated agents requires significant compute resources. LLM inference costs, API usage, and infrastructure overhead add up quickly at scale.

For workflows processing thousands of transactions daily, cost optimization becomes critical. Sometimes traditional automation remains more economical than agentic approaches.

Agents reason probabilistically, not deterministically. The same input might occasionally produce different outputs. This variability challenges workflows requiring absolute consistency.

Edge case handling improves with testing and refinement, but complete predictability remains elusive. Critical workflows may require hybrid approaches with human validation.

Connecting agents to legacy systems, proprietary databases, and specialized tools requires significant integration effort. APIs might not exist. Data formats might be inconsistent. Authentication schemes might not support programmatic access.

Integration complexity often exceeds agent development effort. Budget accordingly.

Building production-quality agentic workflows requires diverse expertise—LLM engineering, software architecture, domain knowledge, security practices. Finding teams with complete skillsets poses challenges.

Upskilling existing teams takes time. External expertise accelerates initial projects but knowledge transfer determines long-term sustainability.

The field is evolving rapidly. Several trends are shaping where agentic workflows head next.

Rather than predefining all agent types, systems increasingly generate specialized agents on-demand. AOrchestra's approach of automated sub-agent creation demonstrates this pattern—the framework spawns agents with specific capabilities as workflows require them.

Dynamic generation enables more flexible adaptation to novel situations without anticipating every possible agent type during design.

Moving beyond simple escalation patterns, newer frameworks enable fluid collaboration where humans and agents work together iteratively. Agents propose approaches, humans provide feedback, agents refine based on guidance.

This collaborative dynamic combines AI's breadth and speed with human judgment and creativity.

Early multi-agent systems operate within organizational boundaries. Emerging patterns involve agents from different organizations collaborating through standardized protocols.

Supply chain coordination, multi-party transactions, and federated analytics represent domains where cross-organizational agent networks could deliver value.

IEEE has active standards efforts around autonomous and intelligent systems. As the field matures, expect greater standardization around agent communication protocols, security practices, and interoperability frameworks.

Standards reduce integration complexity and enable agent ecosystems spanning multiple platforms and vendors.

Agentic workflows represent genuine evolution in how systems handle complex processes. The shift from rigid automation to adaptive intelligence opens possibilities that weren't feasible before.

But this isn't magic. Success requires clear objectives, appropriate framework selection, thorough testing, and realistic expectations about capabilities and limitations.

Start small. Prove value with focused workflows before scaling to enterprise-wide deployments. Build expertise incrementally. Design for human collaboration rather than complete autonomy.

The organizations seeing real value from agentic workflows share common patterns—they define concrete success metrics, they test extensively before production deployment, they monitor continuously after launch, and they iterate based on actual outcomes rather than theoretical benefits.

AI agents aren't replacing human judgment. They're augmenting it, handling routine complexity so people can focus on work requiring creativity, empathy, and strategic thinking.

That's the real promise of workflow automation through AI agents—not eliminating human involvement, but elevating it to where it matters most.