Testing audiences in Facebook ads involves systematically experimenting with different targeting options to identify which groups deliver the best campaign performance. Effective audience testing requires a sufficient budget for statistical significance, proper test setup isolating single variables, and data-driven analysis of key metrics like cost per acquisition and return on ad spend. The testing phase (Learning Phase) typically requires up to 72 hours or until 50 conversion events are generated before making optimization decisions.

Audience testing represents the backbone of profitable Facebook advertising campaigns. Without systematic testing, advertisers essentially gamble with their budgets, hoping the right people see their offers.

The reality? Even the most successful Facebook ads eventually lose momentum once scaled with a significant budget. The algorithmic landscape shifts constantly, audience behavior changes, and ad fatigue sets in faster than most marketers anticipate.

This guide breaks down the complete process for testing audiences on Facebook's platform. The strategies outlined here apply equally to Instagram placements, since both platforms share the same advertising infrastructure through Meta's business tools.

The advertising ecosystem has fundamentally changed. Meta has removed the ability to see demographic breakdowns (age, gender, location) for estimated audience size in its planning tools for all audience types, and Special Ad Categories have significantly restricted targeting and reporting capabilities since 2022-2023.

Here's the thing though—these platform changes mean advertisers can't rely on outdated targeting strategies from 2020 or 2021. The testing process has become more critical, not less.

Meta's algorithm has grown more sophisticated at finding relevant audiences when given proper signals. But it needs data. Lots of data. And that data comes from systematic testing.

Most Facebook audience tests still come down to trial and error. You launch different segments, wait for data, and end up spending part of the budget just to see what doesn’t work.

Extuitive shifts that step forward. It simulates how different audience groups react to your creatives before anything goes live, helping you compare combinations and spot stronger directions early. Instead of starting from zero, you go into campaigns with a filtered set of ideas that already make sense. If you want to make your audience testing more predictable and waste less budget, start using Extuitive before your next campaign goes live.

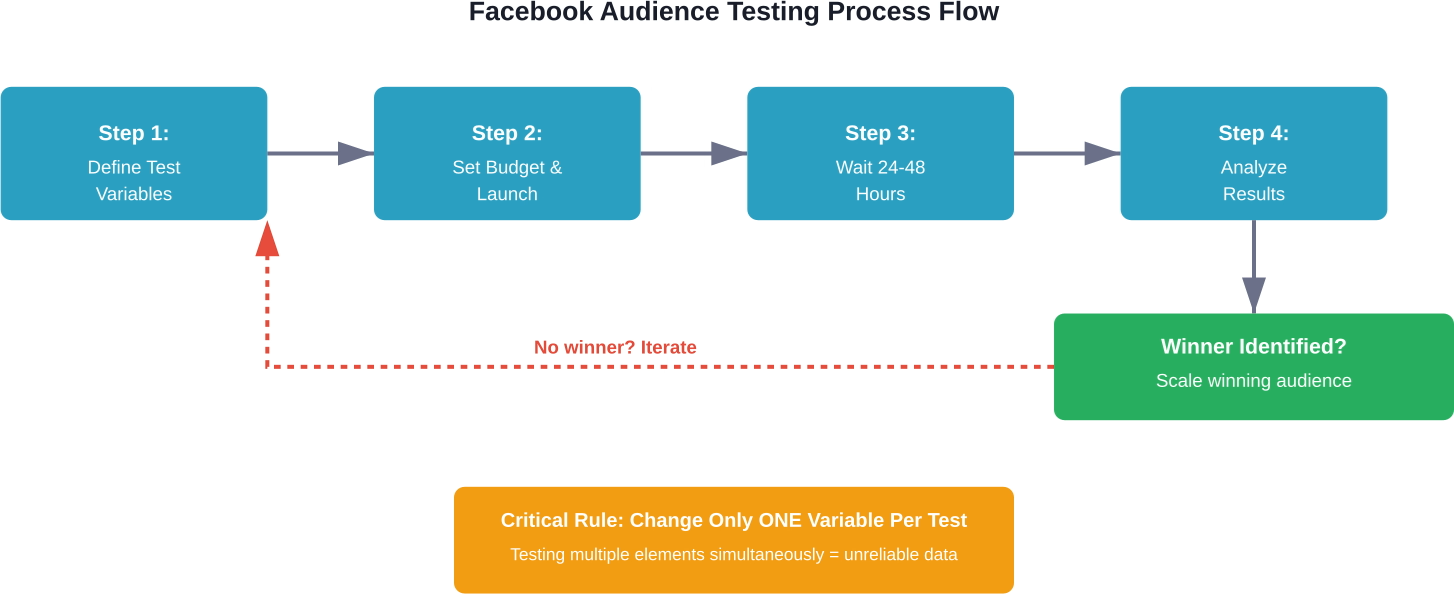

Successful audience testing follows a predictable structure. The framework remains consistent even as platform features evolve.

The testing phase serves a specific purpose: gathering statistically significant data to make informed decisions. During this phase, Facebook's algorithm learns which users within the target audience are most likely to complete the desired action.

Once Facebook gathers enough data, patterns emerge. Some audiences respond immediately. Others show promise but need refinement. Many fail completely.

Success requires a systematic approach and sufficient data to achieve statistical significance. Without reaching this threshold, decisions become guesswork dressed up as optimization.

Only test one element at a time. This principle cannot be overstated.

When testing audiences, everything else must remain constant: the creative, the copy, the placement, the bidding strategy. Change the audience targeting, nothing else.

Testing multiple variables simultaneously creates confusion. If performance improves, which change caused it? If results worsen, which element failed? The data becomes meaningless.

Not all audience tests deliver equal value. Some testing strategies consistently reveal actionable insights. Others waste the budget on marginal differences.

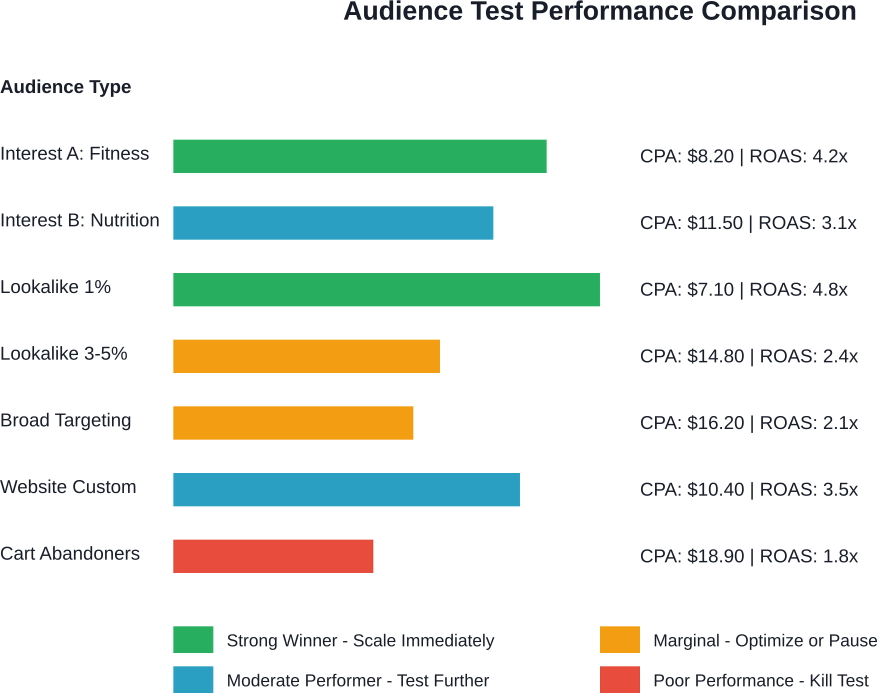

Interest targeting remains one of the most accessible testing methods. Facebook allows advertisers to target users based on their demonstrated interests, behaviors, and page likes.

The strategy: create separate ad sets for different interest categories. One ad set targets fitness enthusiasts. Another targets nutrition-focused audiences. A third targets weight loss communities.

Each ad set runs with identical creative and budget. After the testing phase, performance data reveals which interest groups respond most favorably to the offer.

Lookalike audiences leverage Facebook's algorithm to find users similar to existing customers or engaged audiences. The platform analyzes thousands of data points to identify patterns.

Testing different lookalike percentages often reveals surprising results. A 1% lookalike (most similar to the source audience) doesn't always outperform a 3-5% lookalike (broader but still relevant).

According to Brookings Institution research, Facebook doesn't permit direct demographic queries for Lookalike audiences, but advertisers can use the daily reach estimates from Facebook's ad planning tool to observe audience composition indirectly.

Custom audiences built from existing data—website visitors, email lists, app users—often perform exceptionally well. But not all custom audiences perform equally.

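Test different segments separately. Compare results from each source, such as website visitors, email list subscribers, and app users, rather than pooling them into a single audience.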

The granularity reveals which segments show genuine purchase intent versus casual interest.

The debate over broad versus narrow targeting continues in advertising communities. Meta's algorithm has improved dramatically at finding relevant users within broad audiences. Sometimes overly specific targeting actually limits performance.

The only way to know? Test it.

Run one campaign with detailed targeting (stacked interests, behaviors, and demographics). Run another with minimal targeting, letting the algorithm optimize delivery. Compare results after sufficient data collection.

Proper test setup determines data quality. Sloppy configuration produces unreliable results, leading to poor scaling decisions.

Create a dedicated campaign for testing. Don't mix test ad sets with proven performers. The separation keeps data clean and decision-making clear.

Within the test campaign, create separate ad sets for each audience variation. Each ad set represents one hypothesis: "This audience will convert profitably."

Use identical ads across all test ad sets. Same images, same copy, same call-to-action. The audience becomes the only variable.

Budget determines how quickly tests reach statistical significance. Too little budget extends the testing phase indefinitely. Too much budget wastes money on underperforming audiences.

The amount of budget needed varies based on several factors. Higher-priced products require more spend to generate sufficient conversion data. Lower-priced impulse purchases reach significance faster.

A general guideline: allocate enough budget for each ad set to generate at least 50 conversions during the test period. For purchases, this might mean spending several hundred dollars per ad set. For lead generation with lower cost-per-lead, the requirement drops.
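The guideline above reduces to simple arithmetic. The sketch below is a rough estimate only: the function name `min_test_budget` and the $12 expected cost per purchase are illustrative assumptions, not figures from Meta.

```python
def min_test_budget(expected_cpa: float, target_conversions: int = 50,
                    ad_sets: int = 1) -> float:
    """Rough spend needed for every ad set to reach the conversion target."""
    if expected_cpa <= 0 or target_conversions <= 0 or ad_sets <= 0:
        raise ValueError("all inputs must be positive")
    return expected_cpa * target_conversions * ad_sets


# Hypothetical inputs: $12 expected cost per purchase, three test audiences.
per_ad_set = min_test_budget(expected_cpa=12.0)
total = min_test_budget(expected_cpa=12.0, ad_sets=3)
print(f"Per ad set: ${per_ad_set:,.2f}  Total: ${total:,.2f}")
```

If the total exceeds the available budget, that is the signal to test fewer audiences at once or to test sequentially.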

When budget constraints prevent testing multiple audiences simultaneously, sequential testing works. Test two or three audiences this week, then test additional variations next week. The process takes longer but preserves budget efficiency.

Once test ads launch, don't touch them for at least 24-72 hours. Let them run. Let the algorithm work.

Early performance data misleads. An ad set that looks terrible after 6 hours might perform brilliantly after 36 hours. Another that starts strong might fade quickly.

Facebook's learning phase requires time to optimize delivery. Premature interference—pausing ads, adjusting budgets, changing targeting—resets this learning process. The algorithm starts over, extending the time needed for reliable data.

The patience pays off. Community discussions consistently emphasize this point: advertisers who wait for sufficient data make better optimization decisions than those who react to early fluctuations.

Data analysis separates successful advertisers from those who burn through budgets without learning. Numbers tell stories, but only when interpreted correctly.

Different business models require different success metrics. E-commerce businesses focus on return on ad spend and cost per purchase. Lead generation businesses track cost per lead and lead quality. Content businesses might prioritize cost per click and engagement rates.

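The primary metrics for audience testing include cost per acquisition and return on ad spend, alongside cost per lead or cost per click where those match the campaign goal.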

Real talk: obsessing over secondary metrics while ignoring primary business goals leads nowhere. If the business needs leads under ten dollars each, that's the metric that matters. Everything else provides context.
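As a minimal sketch of putting the primary metric first, the snippet below computes cost per acquisition and return on ad spend per ad set, then keeps only the audiences that meet a cost target. The class name, function name, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AdSetResult:
    name: str
    spend: float
    conversions: int
    revenue: float = 0.0

    @property
    def cpa(self) -> float:
        """Cost per acquisition (or per lead); infinite if nothing converted."""
        return self.spend / self.conversions if self.conversions else float("inf")

    @property
    def roas(self) -> float:
        """Return on ad spend: revenue divided by spend."""
        return self.revenue / self.spend if self.spend else 0.0


def within_target(results, max_cpa):
    """Ad sets at or under the cost target, cheapest first."""
    return sorted((r for r in results if r.cpa <= max_cpa), key=lambda r: r.cpa)


# Hypothetical lead generation test with a $10 cost-per-lead target.
results = [
    AdSetResult("fitness", spend=600.0, conversions=75),
    AdSetResult("nutrition", spend=600.0, conversions=50),
    AdSetResult("weight_loss", spend=600.0, conversions=64),
]
for r in within_target(results, max_cpa=10.0):
    print(f"{r.name}: ${r.cpa:.2f} per lead")
```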

Statistical significance matters. Making decisions based on three conversions from one audience versus five from another means nothing. The sample size is too small for meaningful conclusions.

Generally speaking, audiences need to generate at least 50-100 conversions before patterns become reliable. For expensive products where conversions come slowly, advertisers might accept lower thresholds—but the risk of false conclusions increases.

When test budgets don't allow reaching ideal sample sizes, extend the testing period. Better to wait an extra week for reliable data than to scale a mediocre audience prematurely.
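One common way to check whether a gap between two audiences is more than noise is a two-proportion z-test on their conversion rates. The sketch below uses only the standard library. It assumes clicks as the denominator and relies on a normal approximation that is itself unreliable at very small counts, so treat it as a sanity check rather than a verdict. The function name and the sample figures are illustrative.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions) in both groups
    z = (p_a - p_b) / se
    # Two-sided tail of the standard normal via the complementary error function.
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical: 3 vs 5 conversions from 1,000 clicks each.
small = two_proportion_p_value(3, 1000, 5, 1000)
# Same rates at 20x the volume: 60 vs 100 conversions from 20,000 clicks each.
large = two_proportion_p_value(60, 20000, 100, 20000)
print(f"small sample p = {small:.3f}, large sample p = {large:.4f}")
```

With the small sample the p-value sits far above the usual 0.05 cutoff, so the difference cannot be separated from chance. The same conversion rates at higher volume fall well below it.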

Not every test produces winners. Some audiences simply don't work for specific offers. Knowing when to abandon a test saves money for more promising opportunities.

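Consider killing an audience test when it has received enough budget and time to produce meaningful data and still misses the target metric by a wide margin.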

Failed tests provide valuable information. They eliminate options, narrowing focus toward audiences that actually perform. Document what doesn't work to avoid repeating unsuccessful tests.

Finding a winning audience represents just the beginning. Scaling that winner while maintaining performance requires careful execution.

The scaling process typically follows one of two paths: vertical scaling (increasing budget on the winning ad set) or horizontal scaling (duplicating the winner to new ad sets or campaigns).

Increasing budget in the original ad set represents the most straightforward scaling approach. The method works well when done gradually.

You can typically increase the budget by up to 20% every 2-3 days to minimize the risk of resetting the learning phase. Larger jumps—doubling budget overnight, for example—often trigger algorithm relearning, temporarily tanking performance.

Monitor performance closely during scaling. CPA might increase slightly as budget grows (the easiest conversions get captured first), but dramatic performance drops signal scaling too quickly.
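The gradual approach can be laid out as a schedule before any budget changes are made. This is a sketch under stated assumptions: the 20% step and 3-day spacing come from the guideline above, while the function name and the $50 to $100 example are hypothetical.

```python
def scaling_schedule(start_budget: float, target_budget: float,
                     step_pct: float = 0.20, days_between: int = 3):
    """Return (day, daily_budget) pairs, raising the budget by step_pct per step."""
    if start_budget <= 0 or step_pct <= 0 or days_between <= 0:
        raise ValueError("start_budget, step_pct and days_between must be positive")
    schedule = [(0, round(start_budget, 2))]
    budget, day = start_budget, 0
    while budget < target_budget:
        # Cap the final step so the schedule never overshoots the target.
        budget = min(budget * (1 + step_pct), target_budget)
        day += days_between
        schedule.append((day, round(budget, 2)))
    return schedule


# Hypothetical: grow a $50/day winner to $100/day.
for day, budget in scaling_schedule(50.0, 100.0):
    print(f"Day {day:>2}: ${budget:.2f}/day")
```

Doubling the budget this way takes four increases over twelve days, which is the cost of avoiding a learning phase reset.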

Duplicating winning ad sets to new campaigns allows more aggressive scaling while preserving the original performer. If the duplicate fails, the original continues running profitably.

Some advertisers create duplicate ad sets at higher budgets within the same campaign. Others launch entirely new campaigns with winning audiences. Both approaches work—testing reveals which performs better for specific accounts.

After establishing a winning audience, test related variations. If a 1% lookalike performs well, test 1-2% and 2-3% lookalikes. If fitness interest targeting succeeds, test adjacent interests like yoga or running.

Expansion maintains momentum when original audiences saturate. Eventually, even the best audiences have reached most of their responsive users. Having tested alternatives ready prevents revenue drops.

Once the fundamentals work consistently, advanced strategies unlock additional performance gains.

Layering combines multiple targeting criteria to create more specific audiences. Instead of broadly targeting fitness enthusiasts, layer fitness interest with online shopping behavior and certain income brackets.

Exclusions prevent wasted spend on users unlikely to convert. Exclude past purchasers from acquisition campaigns. Exclude brand name searches from prospecting audiences. Exclude specific age ranges that historically don't convert.

Testing layered audiences against broader versions reveals whether increased specificity improves efficiency or simply limits reach unnecessarily.

Audience behavior shifts throughout the week. B2B audiences might respond better during weekday business hours. Consumer audiences might engage more evenings and weekends.

Test the same audience with different dayparting schedules. Run one ad set all day, every day. Run another only during peak engagement hours. Compare cost efficiency and conversion rates.

Different placements within Facebook's network attract different user mindsets. Feed placements capture users browsing casually. Story placements reach users consuming content rapidly. Reels compete with TikTok-style short video consumption.

Some audiences perform dramatically better in specific placements. Testing audience and placement combinations reveals these preferences.

Even experienced advertisers fall into predictable traps. Recognizing these mistakes accelerates the learning process.

The most common mistake: changing audience, creative, and placement all at once. When performance changes, the cause becomes impossible to identify.

Stick to the one-variable rule religiously. Test audiences with consistent creative. Test creative with consistent audiences. The discipline feels restrictive but produces actionable insights.

Testing with ten dollars per day might work for page engagement campaigns. For conversion campaigns requiring multiple touchpoints, it barely registers.

Calculate minimum budget requirements before launching tests. If the budget doesn't allow proper testing, either adjust expectations or wait until more budget becomes available.

Checking results every two hours and panicking over early performance creates chaos. The 24-72 hour waiting period exists for good reason.

Set calendar reminders to review test results at appropriate intervals. Resist the temptation to constantly refresh Ads Manager. Trust the process.

When testing multiple interest-based audiences simultaneously, significant overlap might exist. Facebook could show ads to the same users across multiple ad sets, inflating costs and confusing results.

Use Facebook's audience overlap tool to check similarity between test audiences. When overlap exceeds 25-30%, consider testing these audiences sequentially rather than simultaneously.
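Once the overlap percentages are known, deciding which audiences can share a test round is a small grouping problem. The sketch below takes overlap figures entered by hand (it does not call any Meta API) and greedily places audiences into rounds so that no pair in the same round exceeds the threshold. The function name, the 25% default, and the sample percentages are assumptions for illustration.

```python
def plan_test_rounds(audiences, overlap_pct, threshold=25.0):
    """Greedily group audiences so no pair within a round overlaps above threshold.

    overlap_pct maps frozenset({a, b}) to the overlap percentage for that pair;
    pairs that are missing are treated as 0% overlap.
    """
    rounds = []
    for name in audiences:
        for group in rounds:
            if all(overlap_pct.get(frozenset((name, other)), 0.0) <= threshold
                   for other in group):
                group.append(name)
                break
        else:
            # No existing round is compatible, so start a new one.
            rounds.append([name])
    return rounds


# Hypothetical overlap figures copied from the overlap tool.
overlaps = {
    frozenset(("fitness", "nutrition")): 35.0,
    frozenset(("fitness", "weight_loss")): 12.0,
    frozenset(("nutrition", "weight_loss")): 28.0,
}
rounds = plan_test_rounds(["fitness", "nutrition", "weight_loss"], overlaps)
for i, group in enumerate(rounds, start=1):
    print(f"Round {i}: {', '.join(group)}")
```

Here fitness and weight loss can run together, while nutrition waits for the next round.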

Memory fails. Without documentation, patterns disappear. That audience tested six months ago might have performed brilliantly, but without records, the insight is lost.

Maintain a testing log. Record audience details, test dates, spend amounts, key metrics, and conclusions. The database becomes increasingly valuable over time.
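A spreadsheet works fine for this, but a plain CSV file is enough to start. The sketch below appends one row per finished test. The field names mirror the items listed above, and the record contents and file name are hypothetical.

```python
import csv
import tempfile
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class AudienceTest:
    audience: str
    start_date: str
    end_date: str
    spend: float
    conversions: int
    conclusion: str


def append_to_log(path, record: AudienceTest) -> None:
    """Append one test record to a CSV log, writing the header for a new file."""
    path = Path(path)
    is_new = not path.exists()
    with path.open("a", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=[fld.name for fld in fields(AudienceTest)])
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(record))


# Demo in a temporary directory; point log_path at a shared location in practice.
with tempfile.TemporaryDirectory() as tmp:
    log_path = Path(tmp) / "audience_tests.csv"
    append_to_log(log_path, AudienceTest(
        audience="1% lookalike of purchasers", start_date="2026-01-05",
        end_date="2026-01-12", spend=600.0, conversions=52,
        conclusion="Met CPA target; scale gradually"))
    print(log_path.read_text())
```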

Facebook's native tools provide substantial testing capabilities. Additional resources enhance the process further.

The platform includes built-in A/B testing functionality. This feature creates proper test structures automatically, splitting budgets evenly and running tests for specified durations.

The breakdown tool in Ads Manager reveals performance variations by age, gender, placement, device, and region. These insights identify patterns within broader audiences, suggesting new targeting refinements to test.

The audience overlap tool helps avoid testing audiences with excessive similarity. It compares targeting parameters and estimates how many users exist in multiple audiences simultaneously.

The Meta Pixel (formerly the Facebook Pixel) tracks user actions on websites, feeding conversion data back to the advertising platform. Proper pixel implementation is non-negotiable for conversion-based testing.

Custom conversions allow tracking specific actions beyond standard events. This granularity helps evaluate audience quality based on deeper engagement metrics.

Various tools aggregate Facebook advertising data with broader marketing metrics, revealing audience performance in full-funnel context. Check official sources for current tool availability and pricing, as these change frequently.

One-off tests produce temporary wins. Systematic, ongoing testing builds sustainable competitive advantages.

Dedicate a specific percentage of total ad spend to testing. Many successful advertisers allocate 15-20% of budget to experiments, with the remaining 80-85% running proven performers.

This split maintains stability while continuously searching for improvements. The testing budget might not generate immediate profit, but it fuels future scaling opportunities.
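The split is easy to turn into a planning number. The sketch below divides a monthly budget and estimates how many audience tests the testing share can fund. The function name and the $600 cost per test are assumptions, so substitute a per-test figure that fits the account.

```python
def plan_testing_budget(total_spend: float, testing_share: float = 0.15,
                        cost_per_test: float = 600.0):
    """Return (testing_budget, proven_budget, tests_funded) for one period."""
    if not 0.0 <= testing_share <= 1.0:
        raise ValueError("testing_share must be between 0 and 1")
    if cost_per_test <= 0:
        raise ValueError("cost_per_test must be positive")
    testing = round(total_spend * testing_share, 2)
    proven = round(total_spend - testing, 2)
    # Only fully funded tests count; a partial budget cannot reach the sample size.
    tests_funded = int(testing // cost_per_test)
    return testing, proven, tests_funded


# Hypothetical $10,000 monthly budget at both ends of the 15-20% range.
for share in (0.15, 0.20):
    testing, proven, n = plan_testing_budget(10_000, share)
    print(f"{share:.0%}: ${testing:,.0f} testing, ${proven:,.0f} proven, {n} tests")
```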

Regular testing schedules prevent complacency. Launch new audience tests weekly or bi-weekly depending on budget and bandwidth.

The consistent rhythm builds a knowledge base. After several months of systematic testing, patterns emerge revealing which audience types consistently perform for specific offers.

When multiple people manage Facebook advertising, centralized test documentation becomes critical. A shared testing log ensures everyone learns from every experiment.

Regular review meetings to discuss test results foster collaborative problem-solving. Different team members notice different patterns, accelerating collective learning.

Testing strategies require adaptation based on business type and goals.

E-commerce businesses focus heavily on ROAS and CPA metrics. Audience quality directly impacts profitability when product margins vary.

Testing product-specific audiences often reveals surprising insights. The audience that works for athletic shoes might completely fail for athletic supplements, even though both target fitness enthusiasts.

Seasonal behavior matters significantly. Test audience performance during different seasons and shopping periods. Holiday shopping audiences behave differently than January audiences.

Lead generation campaigns prioritize cost per lead, but lead quality matters equally. An audience generating five-dollar leads sounds amazing until sales teams report none of them qualify.

Test audiences while tracking both CPL and downstream conversion rates. The slightly more expensive audience that produces qualified leads typically wins long-term.
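The comparison becomes concrete once spend is divided by qualified leads rather than raw leads. This is a minimal sketch with hypothetical figures. The qualified-lead counts would come from the sales team or CRM, not from Ads Manager.

```python
def cost_per_qualified_lead(spend: float, qualified_leads: int) -> float:
    """Spend divided by leads that sales accepted; infinite if none qualified."""
    return spend / qualified_leads if qualified_leads else float("inf")


# Hypothetical: both audiences spent $500.
# Audience A: 100 leads at $5 each, but only 5 qualified.
# Audience B: 50 leads at $10 each, and 15 qualified.
cost_a = cost_per_qualified_lead(500.0, 5)
cost_b = cost_per_qualified_lead(500.0, 15)
print(f"A: ${cost_a:.2f} per qualified lead")
print(f"B: ${cost_b:.2f} per qualified lead")
```

In this example the audience with the cheaper raw lead turns out roughly three times more expensive per qualified lead.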

Local businesses face geographic constraints that affect testing. The total addressable audience might be relatively small, limiting testing options.

Focus testing on different value propositions and offer angles rather than purely demographic variations. Test the same geographic audience with different messaging approaches.

Audience testing represents an ongoing process, not a one-time project. The most successful advertisers treat testing as fundamental infrastructure, not optional experimentation.

Start with foundational tests: basic interest targeting, simple lookalike audiences, and custom audiences from existing data. Establish proper test setup procedures, ensure sufficient budget allocation, and develop discipline around the waiting period before analysis.

Document everything. The insights accumulated through systematic testing compound over time, creating knowledge assets that drive continuous improvement.

As testing sophistication grows, introduce advanced techniques: audience layering, placement-specific tests, and time-based optimization. But never abandon the fundamentals—the one-variable rule and statistical significance requirements remain critical regardless of sophistication level.

The advertising landscape continues evolving. Privacy changes, platform updates, and shifting user behavior require constant adaptation. A strong testing culture provides the flexibility to thrive despite these changes.

Begin testing systematically today. Allocate budget, define clear success metrics, and commit to the process. The audiences performing best for specific offers rarely match initial assumptions. Testing reveals the truth. And in paid advertising, truth equals profit.