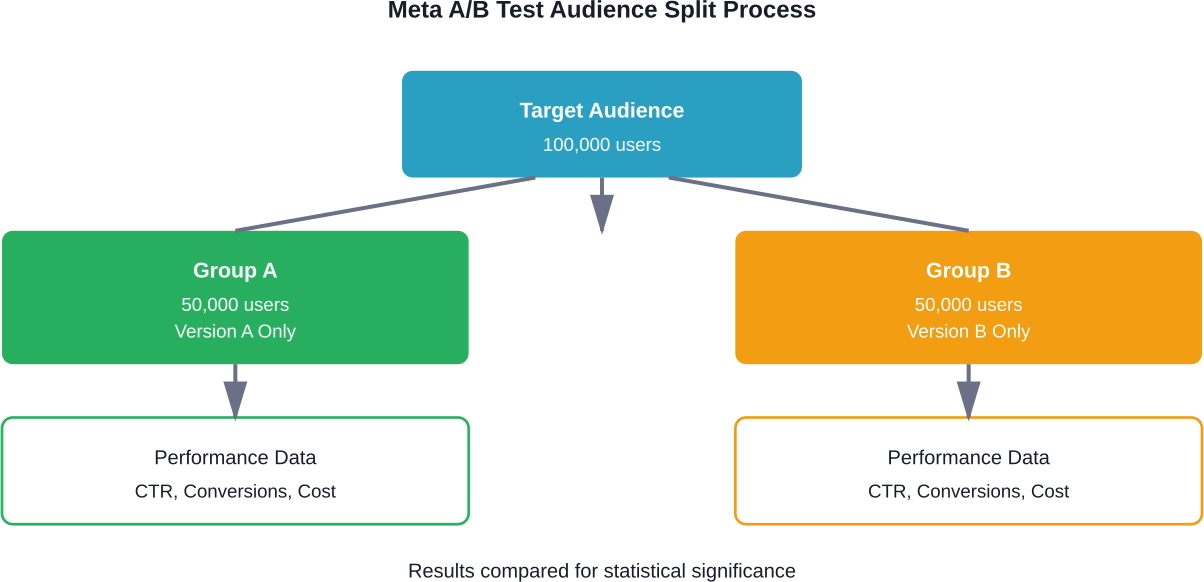

Meta Ads A/B testing compares two versions of an ad element to determine which performs better. The platform's built-in testing tool splits your audience evenly, controls for variables, and measures statistical significance to help optimize campaigns based on real performance data rather than assumptions.

Running ads without testing is like throwing darts blindfolded. You might hit something, but wouldn't it be better to see the board?

Meta Ads A/B testing removes the guesswork from advertising decisions. Instead of debating whether a sustainability angle or an aesthetics message resonates better (the choice facing a landscaping company in an example from American Marketing Association research), advertisers can see which version actually drives results.

But here's where many advertisers stumble. Meta's A/B testing tool works differently than simply creating multiple ad variations under one ad set. The distinction matters for getting clean, reliable results.

According to Penn State Extension research on digital marketing, A/B testing is effective because it measures the impact of small changes. Meta's built-in testing feature handles that measurement automatically.

When advertisers create multiple ad versions within a single ad set, Meta's algorithm optimizes delivery. It shows the better-performing ads more frequently, which sounds helpful. The problem? This optimization creates overlapping audiences and inconsistent delivery, making it nearly impossible to know whether performance differences came from the creative itself or from the algorithm showing it to different people.

Meta's dedicated A/B testing tool solves this by splitting audiences evenly and controlling delivery. Each test version reaches a comparable group, creating what researchers call a "statistically fair comparison."

The platform divides the target audience into separate, non-overlapping groups. If testing two versions, each group sees only one version throughout the test period. This prevents the same person from seeing both ads, which would contaminate the results.
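To make the idea of non-overlapping groups concrete, here is a minimal sketch of one common way to split an audience deterministically. It is not Meta's implementation: the hash-based bucketing, the `assign_variant` function, and the user and test identifiers are all illustrative assumptions.

```python
import hashlib

def assign_variant(user_id: str, test_id: str, variants=("A", "B")) -> str:
    """Deterministically map a user to exactly one variant of a test."""
    # Hashing the test and user together keeps a user's assignment stable
    # for the whole test while staying independent across different tests.
    digest = hashlib.sha256(f"{test_id}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Hypothetical audience of 10,000 user IDs.
users = [f"user-{i}" for i in range(10_000)]
groups = {"A": set(), "B": set()}
for uid in users:
    groups[assign_variant(uid, "headline-test")].add(uid)

print(len(groups["A"]), len(groups["B"]))            # two roughly equal groups
print(groups["A"].isdisjoint(groups["B"]))           # no user sits in both groups
```

Because the assignment depends only on the IDs, the same person always lands in the same group, which is the property that keeps one user from seeing both versions.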

Stanford Graduate School of Business research notes that the increasing complexity of online platforms has revealed split testing's limitations. Meta addresses this through audience segmentation that happens at the delivery level, before ads ever reach users.

Meta A/B testing measures results after ads are already running. Extuitive is built for the step before that. It predicts ad performance before launch using AI models validated against live campaign results, so teams can compare creative before ads enter the test.

Meta structures campaigns in three levels: campaigns contain ad sets, which contain individual ads. Understanding this hierarchy explains why the platform creates new campaigns for certain tests.

When testing campaign-level elements like optimization strategy or delivery method, Meta needs to duplicate the entire campaign structure. Community discussions on platforms like Reddit highlight confusion about this—advertisers wonder why testing copy requires new campaigns when they could just add another ad variation.

The answer relates to control. For campaign-level tests, the system needs identical structures with one variable changed. For ad-level tests like creative or copy, the test can operate within ad sets.

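Meta allows testing across different structural levels, from campaign-level settings such as optimization strategy and delivery method down to ad-level elements such as creative and copy.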

Each test should isolate one variable. Testing headline differences while simultaneously changing images creates ambiguity—which element drove the performance change?

From Ads Manager, select the campaign or ad set to test. The A/B test option appears in the tools menu.

First, choose the variable to test. Meta presents options based on the selected campaign structure. For creative tests, upload the alternative version. For audience tests, define the second targeting group.

Next, set the test budget and duration. According to Meta's testing setup recommendations, tests should target at least 80% estimated power. This represents the likelihood of detecting a meaningful difference if one exists.

The platform calculates estimated power based on budget, duration, and historical performance data. Tests with insufficient power waste budget on inconclusive results.
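Meta does not expose the formula behind its power estimate, but a rough sketch using the textbook normal approximation for a two-proportion test shows how sample size drives power. The `estimated_power` function and the 2.0% and 2.5% conversion rates are assumptions chosen for illustration.

```python
from math import sqrt
from statistics import NormalDist

def estimated_power(rate_a: float, rate_b: float, n_per_variant: int,
                    alpha: float = 0.05) -> float:
    """Approximate power of a two-sided two-proportion z-test."""
    std_error = sqrt(rate_a * (1 - rate_a) / n_per_variant
                     + rate_b * (1 - rate_b) / n_per_variant)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    # Chance that the observed gap clears the critical value
    # when the true gap is rate_a vs rate_b.
    return NormalDist().cdf(abs(rate_a - rate_b) / std_error - z_crit)

# Hypothetical test: 2.0% vs 2.5% conversion rate at two budget levels.
low_budget_power = estimated_power(0.020, 0.025, n_per_variant=5_000)
high_budget_power = estimated_power(0.020, 0.025, n_per_variant=20_000)

print(f"5,000 people per version:  {low_budget_power:.0%} power")
print(f"20,000 people per version: {high_budget_power:.0%} power")
```

Under these assumptions the smaller test falls well short of the 80% target while the larger one clears it, which is why an underpowered budget tends to end in an inconclusive result.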

Duration matters too. Tests need enough time to account for daily fluctuations and gather sufficient data. Most tests run 3-14 days, depending on audience size and conversion volume.

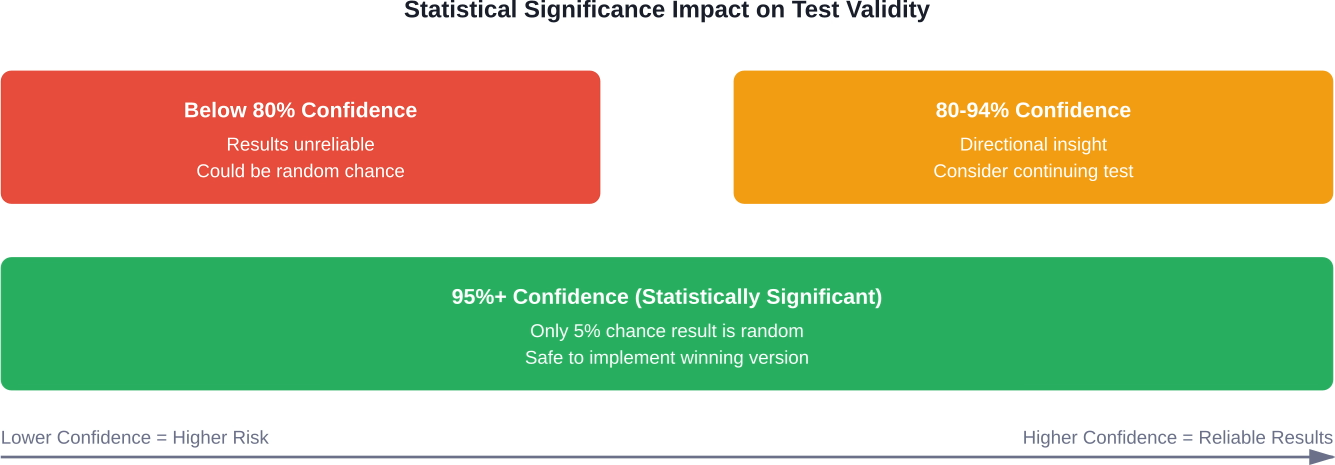

Not every performance difference means something. If Version A gets 102 clicks and Version B gets 98 clicks, that's probably random variance, not a real winner.

Meta uses statistical analysis to determine confidence levels. The platform shows when results reach significance, typically requiring a 95% confidence threshold. Roughly speaking, if the two versions truly performed the same, a difference this large would appear by chance only about 5% of the time.
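The 102 versus 98 clicks example can be checked with a standard two-proportion z-test. This is a sketch of the general technique, not Meta's internal calculation, and the 10,000 impressions per version are an assumption since the example gives only click counts.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (z statistic, two-sided p-value) for a difference in rates."""
    rate_a, rate_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# 102 vs 98 clicks, assuming 10,000 impressions per version.
z_small, p_small = two_proportion_z_test(102, 10_000, 98, 10_000)
# A hypothetical larger gap for contrast: 150 vs 98 clicks.
z_large, p_large = two_proportion_z_test(150, 10_000, 98, 10_000)

print(f"102 vs 98:  p = {p_small:.3f}, significant at 95%: {p_small < 0.05}")
print(f"150 vs 98:  p = {p_large:.4f}, significant at 95%: {p_large < 0.05}")
```

With these assumed impression counts, the four-click gap is nowhere near the 95% threshold, while the larger gap clears it comfortably.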

As noted earlier, Stanford Graduate School of Business research points to the limitations of split testing on increasingly complex online platforms. Beyond audience segmentation, Meta addresses this through adaptive algorithms that monitor test validity throughout the run.

Smaller audiences need longer test periods to accumulate meaningful data. Testing with 1,000 people differs dramatically from testing with 100,000.

The platform provides power estimates before launch, but these remain estimates. Actual performance affects whether tests reach conclusive results.

For low-traffic campaigns, consider testing higher-funnel metrics first. Click-through rate tests reach significance faster than conversion tests because clicks happen more frequently than purchases.
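A quick sample-size calculation illustrates why click-based tests conclude sooner. The sketch below uses the standard normal-approximation formula for comparing two proportions. The 2% click-through rate, 0.2% purchase rate, and 20% relative lift are hypothetical figures, and `required_sample_size` is an illustrative helper.

```python
from math import ceil
from statistics import NormalDist

def required_sample_size(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Impressions needed per version to detect the lift with a two-sided z-test."""
    variant_rate = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + variant_rate * (1 - variant_rate))
    return ceil((z_alpha + z_power) ** 2 * variance
                / (variant_rate - baseline_rate) ** 2)

# Same 20% relative lift, measured on a frequent event and on a rare one.
ctr_sample = required_sample_size(baseline_rate=0.02, relative_lift=0.20)
purchase_sample = required_sample_size(baseline_rate=0.002, relative_lift=0.20)

print(f"Click-through test: {ctr_sample:,} impressions per version")
print(f"Purchase test:      {purchase_sample:,} impressions per version")
```

Under these assumptions the purchase test needs roughly ten times the delivery of the click-through test to detect the same relative improvement.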

Meta displays test results directly in Ads Manager. The results dashboard shows performance metrics for each version alongside the confidence level.

Look beyond the declared winner. Sometimes Version B wins on click-through rate while Version A wins on cost per conversion. Which matters more depends on campaign objectives.

The platform highlights the primary metric selected during setup, but examining secondary metrics reveals deeper insights. An ad with higher engagement but lower conversion rates might attract the wrong audience, even if it technically "won" the engagement test.
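A small sketch shows how two versions can each "win" depending on the metric. All numbers are invented for illustration, and `VariantStats` is a hypothetical structure, not an Ads Manager export format.

```python
from dataclasses import dataclass

@dataclass
class VariantStats:
    name: str
    impressions: int
    clicks: int
    conversions: int
    spend: float

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks per impression."""
        return self.clicks / self.impressions

    @property
    def cost_per_conversion(self) -> float:
        """Spend divided by the number of conversions."""
        return self.spend / self.conversions

# Hypothetical results with equal spend and delivery.
version_a = VariantStats("A", impressions=50_000, clicks=900, conversions=60, spend=500.0)
version_b = VariantStats("B", impressions=50_000, clicks=1_100, conversions=50, spend=500.0)

ctr_winner = max((version_a, version_b), key=lambda v: v.ctr)
cpa_winner = min((version_a, version_b), key=lambda v: v.cost_per_conversion)

print(f"Best click-through rate:  Version {ctr_winner.name} ({ctr_winner.ctr:.2%})")
print(f"Best cost per conversion: Version {cpa_winner.name} (${cpa_winner.cost_per_conversion:.2f})")
```

Here Version B attracts more clicks but Version A converts them more cheaply, so the right pick depends on the objective chosen before launch.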

Inconclusive tests happen. Budget runs out, performance differences remain too small, or audience size limits data collection.

This outcome still provides information. If two substantially different approaches produce statistically identical results, neither offers an advantage. Stick with the simpler or less expensive version.

Alternatively, the test might indicate the wrong variable was isolated. If testing blue versus red buttons produces no difference, the button color probably doesn't matter—focus on testing more impactful elements like the offer or headline instead.

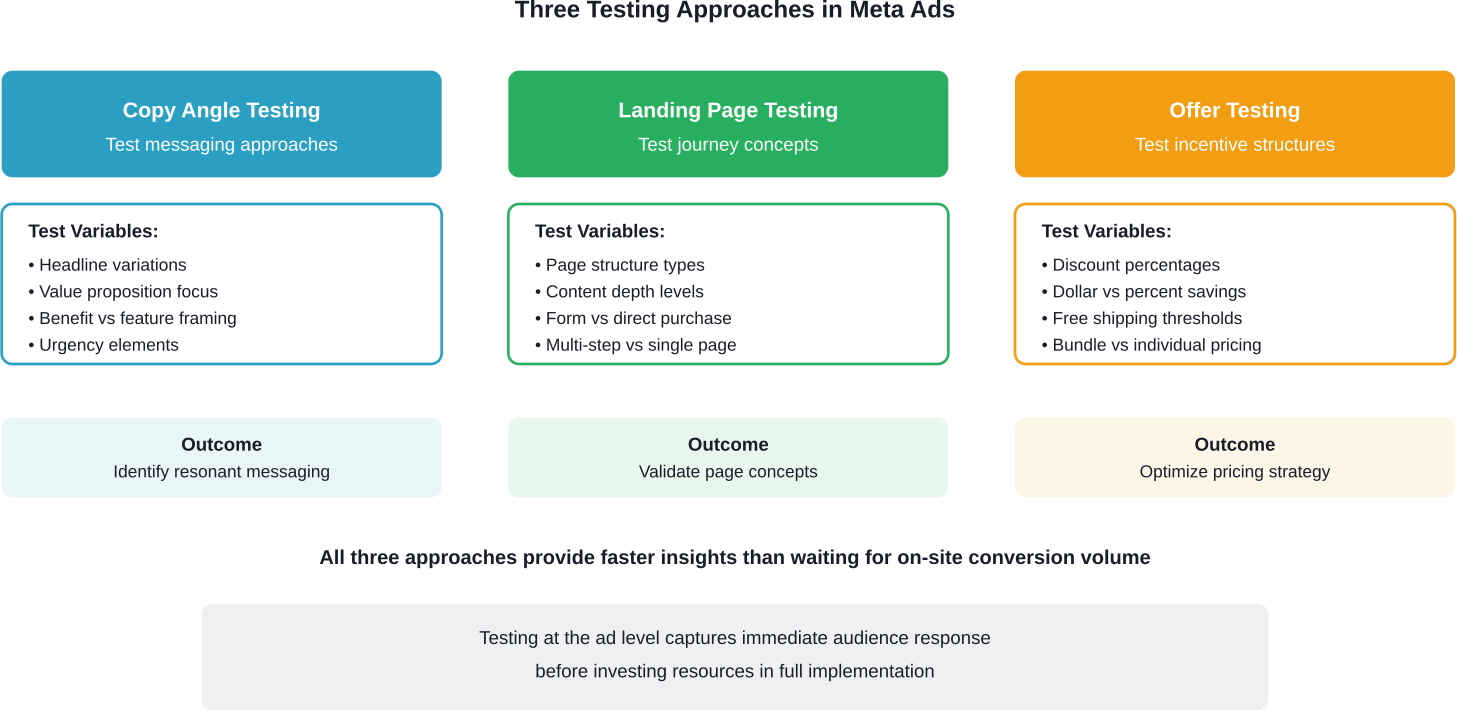

Different test types serve different strategic purposes. Here's what tends to work well at each stage.

In startup environments, site traffic is often too scarce to support frequent A/B testing. Ad platforms offer a workaround by supplying immediate traffic volume for testing messaging approaches.

A sustainability-focused company might test environmental benefits against cost savings. The ad test reveals which message resonates before investing in landing page development around that angle.

Keep copy tests focused. Change the core message while keeping creative consistent. If both headline and image change, it becomes impossible to attribute performance to either element.

Ad tests can validate landing page concepts before building them. Create two ad versions promoting different benefits, each linking to the same landing page. The ad performance predicts which landing page approach would work better.

This upstream testing saves development resources. Instead of building three landing page variations and waiting for conversion volume, test three ad messages and build the page around the winner.

Price points, discounts, and incentive structures impact conversion rates dramatically. But changing prices site-wide carries risk.

Test offers through ads first. Run identical creative with different promotional approaches: "20% off" versus "Buy 2 Get 1 Free" versus "Free Shipping." The winning offer informs broader promotional strategy.

This approach particularly helps e-commerce advertisers determine optimal discount levels without training customers to expect constant sales.

Getting accurate test data requires methodical execution. Small setup errors compromise entire tests.

The most common mistake involves testing multiple changes simultaneously. Changing headline, image, and call-to-action together makes it impossible to identify which element drove performance.

Test sequentially instead. Win the headline test, then test images using the winning headline. This builds on validated improvements.

According to Meta's testing setup recommendations, tests should target at least 80% statistical power. Meta estimates this during setup based on historical performance.

Underfunded tests waste money without reaching conclusions. If the platform suggests a higher budget for statistical validity, either increase the budget or expect inconclusive results.

Similarly, ending tests early risks false positives. Day-one performance often differs dramatically from week-two performance as the algorithm optimizes delivery.
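The statistical side of this risk can be shown with a small simulation. The sketch below runs repeated tests in which both versions have an identical true click rate, so every "winner" is a false positive, and compares checking significance every day against checking once at the end. It models random noise only, not Meta's delivery algorithm, and the daily volume, click rate, and run count are arbitrary assumptions.

```python
import random
from math import sqrt
from statistics import NormalDist

Z_CRIT = NormalDist().inv_cdf(0.975)  # two-sided 95% threshold

def is_significant(clicks_a: int, clicks_b: int, n: int) -> bool:
    """Two-proportion z-test with n users in each version."""
    pooled = (clicks_a + clicks_b) / (2 * n)
    std_error = sqrt(pooled * (1 - pooled) * 2 / n)
    if std_error == 0:
        return False
    return abs(clicks_a / n - clicks_b / n) / std_error > Z_CRIT

def simulate(rng: random.Random, days: int = 14, daily_users: int = 200,
             rate: float = 0.10):
    """One A/A test: (flagged at any daily check, flagged at the final check)."""
    clicks_a = clicks_b = 0
    flagged_at_any_peek = False
    flagged_at_end = False
    for day in range(1, days + 1):
        clicks_a += sum(rng.random() < rate for _ in range(daily_users))
        clicks_b += sum(rng.random() < rate for _ in range(daily_users))
        flagged_at_end = is_significant(clicks_a, clicks_b, day * daily_users)
        flagged_at_any_peek = flagged_at_any_peek or flagged_at_end
    return flagged_at_any_peek, flagged_at_end

rng = random.Random(42)
runs = [simulate(rng) for _ in range(400)]
peeking_rate = sum(peek for peek, _ in runs) / len(runs)
final_only_rate = sum(end for _, end in runs) / len(runs)

print(f"False positives when stopping at the first significant day: {peeking_rate:.1%}")
print(f"False positives when checking only on day 14: {final_only_rate:.1%}")
```

Stopping at the first significant-looking day gives chance more opportunities to produce a "winner," so the daily-check rate comes out higher than the single end-of-test check.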

Define success before launching tests. An engagement test measures different outcomes than a conversion test.

For brand awareness campaigns, impressions and reach matter most. For lead generation, cost per lead determines winners. For e-commerce, return on ad spend provides the clearest picture.

Testing the wrong metric leads to wrong decisions. An ad that maximizes clicks but generates expensive conversions wins the battle while losing the war.

Black Friday traffic behaves differently than February traffic. Running tests during major events, holidays, or promotional periods introduces confounding variables.

Test during representative periods. Results from anomalous timeframes don't predict normal performance.

Build institutional knowledge by recording test results, hypotheses, and insights. This prevents repeating failed tests and identifies patterns across multiple experiments.

Note why tests were run, not just which version won. Context helps future decision-making.
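One lightweight way to keep such a record is a structured log entry per test. The sketch below is only a suggestion: the `ExperimentRecord` fields and the sample values are hypothetical, and any spreadsheet or document with the same information would serve equally well.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional

@dataclass
class ExperimentRecord:
    name: str
    hypothesis: str          # why the test was run
    variable: str            # the single element that changed
    primary_metric: str      # the success measure defined before launch
    winner: Optional[str]    # None when the test was inconclusive
    significant: bool
    notes: str = ""

record = ExperimentRecord(
    name="headline-test-01",
    hypothesis="A cost-savings message will outperform an environmental one",
    variable="headline",
    primary_metric="cost per lead",
    winner=None,
    significant=False,
    notes="Inconclusive; budget ran out before reaching significance.",
)

# Serialize as one JSON line so records can be appended to a shared log file.
line = json.dumps(asdict(record))
restored = ExperimentRecord(**json.loads(line))
print(line)
```

Storing the hypothesis alongside the outcome keeps the reasoning available when someone later wonders whether a similar test is worth repeating.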

Meta's A/B testing tool provides valuable insights, but it's not perfect for every situation.

Research from the American Marketing Association highlights a fundamental challenge with digital ad platform testing. As platforms personalize which users receive which ads, test groups can end up with diverging audience mixes even when the split appears random.

The landscaping company example illustrates this: the sustainability ad reached outdoor enthusiasts while the aesthetics ad reached home decor fans—not because of targeting settings, but because the platform's algorithm optimized delivery based on predicted engagement.

Meta's testing tool mitigates this through controlled splits, but complete algorithmic neutrality remains impossible. The platform still optimizes within each test group.

Niche targeting creates sample size problems. Testing with 2,000 total users means 1,000 per variation—potentially too small for statistical significance unless conversion rates or costs differ dramatically.

For small audiences, test broader elements first. Audience tests work better than creative tests when working with limited reach.

Tests require dedicated budget beyond normal campaign spending. Each test version needs sufficient delivery to generate meaningful data.

Budget-constrained advertisers should prioritize high-impact tests. Testing minor copy tweaks costs the same as testing fundamental strategy shifts—focus on the latter.

Winning a test is just the beginning. The real value comes from applying learnings systematically.

When a test reaches significance, Meta allows scaling the winner immediately. The platform can automatically allocate budget to the better-performing version and pause the loser.

But consider the broader implications. If audience testing reveals that parents of young children respond better than the original target, should the entire marketing strategy shift? Test results often have applications beyond the specific campaign tested.

Create a feedback loop between testing insights and strategic planning. Regular testing rhythm builds competitive advantages as accumulated learnings compound over time.

Meta Ads A/B testing transforms advertising from educated guessing into data-driven decision making. The platform's built-in testing tools handle the complex work of audience splitting, statistical analysis, and results reporting.

But the tool is only as good as the strategy behind it. Testing random variations produces random insights. Testing systematically—one variable at a time, with clear hypotheses and sufficient budget—builds compounding knowledge that improves every subsequent campaign.

Industry practitioners advise against waiting for statistical significance in every case and recommend focusing instead on testing small changes methodically. Meta provides the infrastructure to do exactly that at scale.

Start with high-impact variables like core messaging and audience targeting. Win those tests, then optimize details like creative elements and placement. Each validated improvement becomes the new baseline, creating an upward performance spiral.

The advertisers who win aren't necessarily the ones with the biggest budgets. They're the ones who test consistently, learn systematically, and implement relentlessly. Meta's A/B testing tools make that process accessible to anyone willing to move beyond assumptions and let data lead the way.

Ready to start testing? Open Ads Manager, select a campaign, and launch your first experiment. The insights waiting in your data will surprise you.