Creative Testing Strategy for Meta & TikTok Ads (2026)

Master creative testing for Meta and TikTok ads with proven frameworks. Learn budget allocation, testing methodologies, and platform-specific tactics.

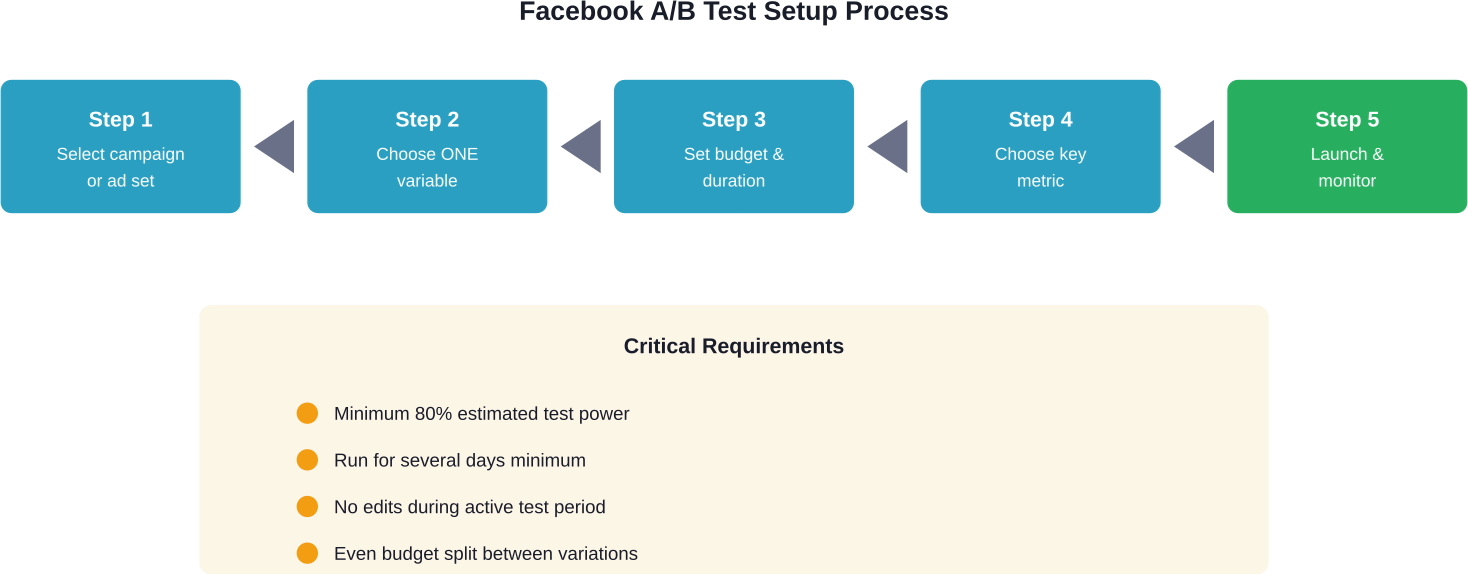

A/B testing Facebook ads involves creating two or more variations of an ad element (headline, image, audience, or placement) and running them simultaneously to determine which performs better. Facebook's built-in A/B testing tool works best with at least 80% estimated power, is designed to test one variable at a time, and typically runs for several days to gather statistically significant data before declaring a winner.

About 1.6 billion people globally connect with small businesses on Facebook. That's not just a number—it's an opportunity.

But here's the thing: throwing ads at this massive audience without testing what works is like shooting arrows in the dark. Some might hit, most won't. A/B testing removes the guesswork and replaces it with data.

The challenge? Many advertisers either skip testing entirely or approach it wrong. They test too many variables at once, don't wait for statistical significance, or misinterpret results. According to Harvard Business School research (December 2025), companies often slow themselves down by over-relying on traditional A/B testing methods that emphasize statistical significance to a fault.

This guide cuts through the noise. It covers what actually matters when testing Facebook ads, which variables drive results, and how to set up experiments that deliver actionable insights.

Facebook A/B testing—also called split testing—compares two or more versions of an ad to determine which performs better. The concept is simple: change one element, show each version to a similar audience, and measure which drives more of your desired outcome.

That outcome might be clicks, conversions, engagement, or any other metric tied to campaign goals. The key is isolating a single variable so results point clearly to what's working.

Facebook's built-in A/B testing tool handles the mechanics. It splits the audience, distributes budget evenly, and runs the test for the scheduled duration or until a winner can be declared. When setting up a test, Facebook generates an estimated power percentage: the likelihood of returning a statistically significant result based on budget and test duration.

According to best practices, tests should run with at least 80% estimated power. Anything lower risks inconclusive results that waste budget without providing clear direction.

The difference between Facebook's A/B testing feature and simply running multiple ad variations within an ad set matters. When multiple ads run in the same ad set, Facebook's algorithm favors whichever performs better early on, gradually showing it more often. That's optimization, not testing. Real A/B tests split traffic evenly throughout the entire test period, ensuring fair comparison.

Testing reveals what resonates with the target audience. Assumptions about effective messaging, imagery, or targeting often prove wrong when exposed to real user behavior.

Consider this: Based on analysis of over 55,000 page views, 0.9% of users watched a homepage video. Of those who did, nearly 50% watched it completely. Without testing, teams might assume low view rates meant poor engagement—but the data showed those who engaged were highly committed.

The same principle applies to Facebook ads. An image that seems perfect might underperform against something unexpected. A headline crafted for clarity might lose to one optimized for curiosity.

Testing also improves return on ad spend. Small improvements compound. A 10% increase in conversion rate means the same budget delivers 10% more conversions, and the extra return can be reinvested so that gains build on each other across successive tests.
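The compounding effect is easier to see with numbers. The sketch below assumes a hypothetical 2% baseline conversion rate, a fixed $1,000 budget, a constant $0.50 cost per click, and three successive tests that each deliver a 10% relative lift. None of these figures come from a real campaign.

```python
def compounded_rate(base_rate: float, lifts: list[float]) -> float:
    """Apply a sequence of relative lifts (0.10 == +10%) to a base rate."""
    rate = base_rate
    for lift in lifts:
        rate *= 1 + lift
    return rate


def conversions_for_budget(budget: float, cost_per_click: float, rate: float) -> float:
    """Expected conversions when every click costs the same amount."""
    clicks = budget / cost_per_click
    return clicks * rate


# Hypothetical inputs, chosen only for illustration.
base_rate = 0.02        # 2% of clicks convert today
budget = 1_000.0        # fixed ad spend
cost_per_click = 0.50   # assumed constant across variations

improved = compounded_rate(base_rate, [0.10, 0.10, 0.10])
before = conversions_for_budget(budget, cost_per_click, base_rate)
after = conversions_for_budget(budget, cost_per_click, improved)
print(f"Before: {before:.1f} conversions")
print(f"After:  {after:.1f} conversions")
```

Three 10% wins stack to roughly a 33% improvement (1.1 × 1.1 × 1.1 ≈ 1.331), not 30%, which is why a steady testing cadence tends to pay off more than any single test.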

Real talk: advertising without testing is expensive guesswork. Testing transforms it into a systematic process of improvement.

HubSpot's analysis illustrates the point with an example: a content creator on a $50,000 annual salary who publishes five articles weekly produces 260 per year. Testing which content formats perform best could redirect that effort toward what actually drives results, maximizing the salary investment.

The same logic applies to ad spend. Budgets are finite. Testing ensures every dollar works as hard as possible.

But there's a caveat. Research from Drexel University's LeBow College of Business (November 2019) found that A/B testing can hinder profits when marketers spend too much time testing instead of acting on insights. The balance matters: test enough to gain confidence, then scale what works.

When setting up an A/B test for Facebook ads, Extuitive can help at the stage before anything goes live. The platform predicts likely ad performance before launch using AI models validated against live campaign results. That gives teams a way to compare creative variants and review different ad options before budget is spent on the test.


Facebook's A/B testing tool requires testing one variable at a time. That constraint isn't a limitation—it's a feature. Changing multiple elements simultaneously makes it impossible to know which drove the result.

Here are the primary variables worth testing:

Images and videos are the first things users notice. Different visuals can produce dramatically different results, even when targeting the same audience.

Test variations like:

Video deserves special attention. Its engagement potential makes video creative testing particularly valuable.

The words matter. Headlines can emphasize different benefits, use different emotional triggers, or vary in length and structure.

Ad body copy offers room to test messaging angles, detail levels, and calls to action. A direct approach might outperform a storytelling style, or vice versa—the only way to know is testing.

Different audience segments respond differently to the same ad. Testing audiences reveals which groups offer the best return.

Worthwhile audience tests include:

Facebook offers multiple placement options: News Feed, Stories, right column, Instagram Feed, Messenger, and more. Performance varies significantly across placements.

Automatic placements distribute ads wherever Facebook predicts they'll perform best. Testing specific placements against each other can reveal whether that automation serves campaign goals or if manual selection performs better.

The CTA button text influences click behavior. "Learn More" versus "Shop Now" versus "Sign Up" can produce different conversion rates even when everything else remains identical.

Facebook's testing tool lives within Ads Manager. The setup process is straightforward, but getting it right requires attention to detail.

Navigate to Ads Manager and locate the campaign, ad set, or ad to test. Click the checkbox next to it, then select "A/B Test" from the menu.

Facebook creates a duplicate and opens the testing interface. This is where the variable gets selected and test parameters are configured.

Select one variable from the available options: Creative, Audience, Delivery Optimization, Placement, or Product Set (for dynamic ads).

Remember: test only one variable. Testing multiple changes simultaneously produces ambiguous results. If the headline and image both change, there's no way to know which influenced performance.

Define the test duration and budget. Facebook recommends running tests for at least several days to accumulate sufficient data.

The platform displays estimated power—the probability of achieving statistically significant results. According to Seer Interactive's guidance, aim for at least 80% estimated power. Lower percentages indicate the test likely won't produce conclusive results.

If estimated power is too low, increase the budget or extend the test duration. Both provide more data points, improving the chance of reaching significance.
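To see why more data raises power, here is a minimal sketch using the textbook normal approximation for a two-proportion test. It is not Facebook's own estimated power calculation, which this guide does not describe. The conversion rates and audience sizes are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(rate_a: float, rate_b: float, n_per_variation: int,
                 alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-proportion z-test.

    Uses the normal approximation and ignores the negligible chance of a
    significant result in the wrong direction.
    """
    std_error = sqrt(rate_a * (1 - rate_a) / n_per_variation
                     + rate_b * (1 - rate_b) / n_per_variation)
    z_critical = NormalDist().inv_cdf(1 - alpha / 2)
    return NormalDist().cdf(abs(rate_a - rate_b) / std_error - z_critical)


# Hypothetical scenario: variation A converts at 2.0%, variation B at 2.5%.
for n in (5_000, 10_000, 20_000):
    print(f"{n:>6} people per variation -> power {approx_power(0.020, 0.025, n):.2f}")
```

Under these assumptions, 5,000 people per variation falls well short of the 80% mark while 20,000 clears it. More budget or a longer run both work by increasing the number of people who see each variation.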

Choose which metric determines the winner. Options include cost per result, cost per click, click-through rate, conversion rate, and others.

This metric should align with campaign objectives. If the goal is conversions, optimize for cost per conversion or conversion rate. If it's brand awareness, focus on reach or impressions.

Review all settings, then publish. Facebook splits the audience evenly and begins delivering both versions simultaneously.

During the test, avoid making changes. Editing ads mid-test resets the learning phase and invalidates results.

Setting up tests correctly is half the battle. Following best practices ensures the data collected is meaningful and actionable.

This bears repeating because it's the most common mistake. Changing multiple elements creates confusion. If an ad with a new headline and new image outperforms the control, which element drove the improvement?

The answer is unknowable without isolating variables. Test the headline first, identify the winner, then test images using that winning headline.

Ending tests prematurely produces unreliable results. Facebook's algorithm needs time to optimize delivery and for sufficient users to interact with each variation.

Generally speaking, tests should run for at least three to seven days, depending on audience size and budget. Larger audiences and higher budgets can reach significance faster.

Check the estimated power metric during setup and confirm it is 80% or higher before launching. Once the scheduled duration has elapsed or Facebook declares statistical significance, the test can conclude.

Small sample sizes produce misleading results. If only 50 people see each ad variation, random chance heavily influences outcomes.

Facebook's estimated power calculation accounts for this, but as a rule of thumb, aim for at least several hundred interactions per variation before drawing conclusions.
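A quick simulation shows how much noise small samples carry. In this sketch, two ads are given the exact same true conversion rate (a hypothetical 10%), so any gap between them is pure chance. The function names, the 25% gap threshold, and the seed are all illustrative choices.

```python
import random


def observed_rate(rng: random.Random, true_rate: float, viewers: int) -> float:
    """Simulate `viewers` independent people, each converting with `true_rate`."""
    conversions = sum(rng.random() < true_rate for _ in range(viewers))
    return conversions / viewers


def false_gap_share(viewers: int, true_rate: float = 0.10, min_gap: float = 0.25,
                    trials: int = 300, seed: int = 7) -> float:
    """Share of trials in which two identical ads differ by at least
    `min_gap` (relative to the better one) purely by chance."""
    rng = random.Random(seed)
    misleading = 0
    for _ in range(trials):
        a = observed_rate(rng, true_rate, viewers)
        b = observed_rate(rng, true_rate, viewers)
        best = max(a, b)
        if best > 0 and abs(a - b) / best >= min_gap:
            misleading += 1
    return misleading / trials


for viewers in (50, 2_000):
    share = false_gap_share(viewers)
    print(f"{viewers:>5} viewers per ad -> {share:.0%} of trials show a 25%+ gap")
```

With only 50 viewers per ad, a large apparent "winner" shows up in a sizable share of trials even though the ads are identical. At 2,000 viewers that share drops sharply.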

Tests where neither variation wins conclusively still provide value. They indicate the tested variable doesn't significantly impact performance, allowing focus on other elements.

Some variables simply don't matter for certain audiences or offers. Discovering that saves time and budget that can be redirected toward more impactful tests.

Testing builds institutional knowledge. Without documentation, insights get lost and tests get repeated.

Maintain a testing log that records:

This creates a searchable history that informs future decisions and prevents duplicate efforts.
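A testing log does not need special tooling; a CSV file is enough. The sketch below shows one possible structure. The field names are assumptions made for illustration, not a list taken from this guide, so adapt them to whatever the team actually needs to look up later.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class AbTestRecord:
    """One row in the testing log. All field names are illustrative."""
    start_date: str
    end_date: str
    variable_tested: str
    hypothesis: str
    primary_metric: str
    winner: str
    notes: str = ""


def to_csv(records: list[AbTestRecord]) -> str:
    """Serialize records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(AbTestRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Hypothetical entry for demonstration only.
log = [
    AbTestRecord(
        start_date="2026-01-05",
        end_date="2026-01-12",
        variable_tested="Creative",
        hypothesis="Lifestyle image beats product-only image",
        primary_metric="cost per result",
        winner="lifestyle image",
        notes="Ran outside any holiday period",
    )
]
print(to_csv(log))
```

Keeping the hypothesis next to the outcome makes it easy to see later not only what won, but what the team expected and why.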

Research from Drexel University highlights a critical point: excessive testing can hinder progress. The goal isn't testing for its own sake—it's finding what works and scaling it.

Once a clear winner emerges, act on it. Scale the winning variation, then move to the next test. Continuous testing without implementation wastes the primary benefit of testing: improved performance.

When the test completes, Facebook displays results in Ads Manager. The interface shows performance for each variation across the selected metric.

Look for the "Winner" designation. Facebook declares a winner when one variation performs statistically significantly better—meaning the difference is unlikely due to random chance.

But don't stop at the headline metric. Examine secondary metrics too. An ad might win on cost per click but lose on conversion rate. Understanding the full picture prevents optimizing for the wrong outcome.
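The sketch below shows how an ad can win on cost per click yet lose on cost per conversion. The spend, click, and conversion figures are hypothetical, and the `AdResult` class is an illustrative helper rather than anything exported by Ads Manager.

```python
from dataclasses import dataclass


@dataclass
class AdResult:
    name: str
    spend: float
    impressions: int
    clicks: int
    conversions: int

    @property
    def cost_per_click(self) -> float:
        return self.spend / self.clicks

    @property
    def click_through_rate(self) -> float:
        return self.clicks / self.impressions

    @property
    def conversion_rate(self) -> float:
        # Share of clicks that turn into conversions.
        return self.conversions / self.clicks

    @property
    def cost_per_conversion(self) -> float:
        return self.spend / self.conversions


# Hypothetical results with identical spend and impressions.
ad_a = AdResult("A", spend=500.0, impressions=50_000, clicks=1_000, conversions=20)
ad_b = AdResult("B", spend=500.0, impressions=50_000, clicks=800, conversions=32)

for ad in (ad_a, ad_b):
    print(f"{ad.name}: CPC ${ad.cost_per_click:.2f}, CTR {ad.click_through_rate:.2%}, "
          f"CVR {ad.conversion_rate:.2%}, cost/conv ${ad.cost_per_conversion:.2f}")
```

Here ad A gets cheaper clicks, but ad B turns more of its clicks into conversions and ends up with the lower cost per conversion. Which one "wins" depends on the metric tied to the campaign goal.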

Statistical significance means the observed difference is probably real, not a fluke. Facebook calculates this automatically, but understanding the concept helps interpret results.

A result is typically considered significant when a difference at least as large as the one observed would show up less than 5% of the time if the variations actually performed the same. That's the standard threshold in most testing scenarios.
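For readers who want to see the 5% threshold in action, here is a minimal sketch of a pooled two-proportion z-test, a common textbook method. It is an assumption that this resembles what any ad platform does internally; the conversion counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        return 1.0  # no conversions (or only conversions) in both groups
    z = (rate_a - rate_b) / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical test: 200 of 10,000 people converted on A, 260 of 10,000 on B.
p_value = two_proportion_p_value(200, 10_000, 260, 10_000)
print(f"p-value: {p_value:.4f}")
print("significant at 5%" if p_value < 0.05 else "not significant at 5%")
```

A p-value below 0.05 says a gap this large would be unusual if the two ads truly performed the same. It does not say how large the real difference is, so it is worth reading alongside the raw rates.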

However, Harvard Business School research cautions against over-reliance on traditional significance testing. The December 2025 publication notes that companies often slow decision-making by waiting weeks for statistical significance when faster, iterative approaches might serve better.

The takeaway? Use significance as a guide, not an absolute requirement. If a clear trend emerges early and aligns with business goals, acting on strong directional data can beat waiting for perfect statistical confirmation.

When a clear winner emerges, implement it immediately. Update active campaigns, use the winning element in future ads, and document the insight.

If results are inconclusive, consider whether the test had enough power. Low sample sizes or short durations might explain the ambiguity. Re-run the test with more budget or time if the variable seems important.

If the test definitively shows no difference, that's valuable too. It means the variable doesn't impact this audience or objective, so effort can shift elsewhere.

Facebook's built-in A/B testing tool isn't the only option. Alternative approaches offer different advantages depending on goals and constraints.

Create separate campaigns or ad sets with different variables manually. This approach offers more control but requires careful audience segmentation to avoid overlap.

The main advantage is flexibility. Test more than two variations simultaneously or test variables that Facebook's tool doesn't support.

The downside is complexity. Manual tests require monitoring budget allocation, ensuring even splits, and calculating significance independently.
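One check worth running on a manual test is whether the split actually came out even. The sketch below applies a chi-square goodness-of-fit test against an intended 50/50 split, using the number of people reached per variation. The reach figures are hypothetical, and treating each person as an independent observation is a simplifying assumption.

```python
from math import erfc, sqrt


def split_balance_p_value(reached_a: int, reached_b: int) -> float:
    """Chi-square goodness-of-fit p-value against an intended 50/50 split.

    With two groups there is one degree of freedom, for which the chi-square
    survival function reduces to erfc(sqrt(chi2 / 2)).
    """
    expected = (reached_a + reached_b) / 2
    chi2 = ((reached_a - expected) ** 2 + (reached_b - expected) ** 2) / expected
    return erfc(sqrt(chi2 / 2))


# Hypothetical reach figures for two manually created ad sets.
for a, b in ((10_000, 10_150), (10_000, 11_000)):
    p = split_balance_p_value(a, b)
    verdict = "looks balanced" if p >= 0.05 else "split is suspiciously uneven"
    print(f"{a} vs {b}: p = {p:.4f} ({verdict})")
```

A very small p-value suggests the two groups did not receive comparable delivery, in which case differences in performance may reflect the uneven split rather than the variable being tested.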

Instead of traditional A/B testing, dynamic creative lets Facebook test multiple elements automatically. Upload multiple headlines, images, and descriptions, and the algorithm finds the best combinations.

This approach tests many variations quickly but sacrifices the control and clear insights of structured A/B tests. It's useful for discovering unexpected combinations but less helpful for understanding why something works.

Run one ad variation, measure results, then run another and compare. This isn't true A/B testing because external factors (time, seasonality, audience fatigue) can influence results.

But when budget constraints prevent simultaneous testing, sequential tests provide directional insights. Just acknowledge the limitations when interpreting results.

Even experienced advertisers fall into testing traps. Avoiding these common errors improves result quality.

The temptation to test everything at once is strong. Resist it. More variables mean exponentially more combinations, requiring massive sample sizes to reach significance.
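Counting the combinations makes the problem concrete. The element names and options below are hypothetical; the point is only how quickly the number of distinct ads grows as elements are added.

```python
from itertools import product
from math import prod

# Hypothetical options for each ad element.
elements = {
    "image": ["lifestyle", "product", "illustration"],
    "headline": ["benefit", "question", "urgency"],
    "cta": ["Learn More", "Shop Now"],
}


def count_combinations(options: dict[str, list[str]]) -> int:
    """Number of distinct ads when every option is crossed with every other."""
    return prod(len(values) for values in options.values())


def all_combinations(options: dict[str, list[str]]) -> list[dict[str, str]]:
    """Enumerate every ad as a mapping of element name to chosen option."""
    keys = list(options)
    return [dict(zip(keys, combo)) for combo in product(*options.values())]


print(count_combinations(elements))      # 3 * 3 * 2 = 18 distinct ads
print(all_combinations(elements)[0])
```

Each of those 18 ads needs its own adequately sized audience, so a budget that comfortably supports a two-way test gets spread very thin.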

Focus on high-impact variables first. Test creative before minor copy tweaks. Test audiences before placement details.

Early results often mislead. An ad might perform well in the first few hours then regress to the mean. Or the opposite—slow starters sometimes win over time.

Wait for Facebook's significance declaration or meet the predetermined test duration before making decisions.

Tests running during holidays, major events, or seasonal shifts can produce results that don't replicate in normal conditions.

Account for context when scheduling tests and interpreting results. An ad winning during Black Friday might not win in February.

One-off tests provide one-off insights. Audience preferences shift, creative fatigues, and competitive landscapes evolve.

Ongoing testing keeps campaigns optimized and builds the knowledge base needed for long-term success.

Random testing wastes resources. Before launching a test, form a hypothesis about what will happen and why.

This forces strategic thinking about what matters and creates a framework for interpreting results. If the hypothesis proves wrong, that's valuable learning. If it's right, confidence in the underlying principle increases.

Once basic testing becomes routine, advanced strategies unlock additional insights and efficiencies.

Test variables in priority order, using winners from each test as the control for the next. This builds incrementally optimized ads.

Start with creative (usually highest impact), then test copy, then audiences, then placements. Each test starts from a better baseline than the last.

Reserve a small percentage of the audience as a control group that sees the original ad throughout all tests. This measures cumulative improvement across multiple testing iterations.

If the latest optimized ad performs 40% better than the original holdout group, that quantifies the total value of the testing program.
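The lift calculation itself is simple. A minimal sketch with hypothetical conversion counts for the holdout group and the optimized ad:

```python
def relative_lift(baseline_rate: float, optimized_rate: float) -> float:
    """Relative improvement of the optimized rate over the baseline."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (optimized_rate - baseline_rate) / baseline_rate


# Hypothetical figures: the holdout still sees the original ad.
holdout_rate = 120 / 6_000        # 2.0% conversion rate
optimized_rate = 1_512 / 54_000   # 2.8% conversion rate

print(f"Cumulative lift: {relative_lift(holdout_rate, optimized_rate):.0%}")
```

Because the holdout is small, its rate is noisier than the main group's, so the lift is best read as an estimate rather than an exact figure.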

Test whether different audience segments respond differently to the same variables. An image that wins for 25-34 year olds might lose for 45-54 year olds.

This requires more budget but produces nuanced insights that enable segment-specific optimization.
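A segmented readout can be as simple as grouping results by segment and comparing rates within each group. The sketch below uses hypothetical numbers and picks the variation with the highest observed conversion rate per segment. It does not check significance, which still has to be confirmed separately for each segment.

```python
def winner_by_segment(
    results: dict[str, dict[str, tuple[int, int]]]
) -> dict[str, str]:
    """Pick the variation with the highest observed conversion rate per segment.

    `results` maps segment -> variation -> (conversions, people_reached).
    """
    winners = {}
    for segment, variants in results.items():
        winners[segment] = max(
            variants, key=lambda name: variants[name][0] / variants[name][1]
        )
    return winners


# Hypothetical results for one image test, split by age group.
results = {
    "25-34": {"image_a": (90, 3_000), "image_b": (60, 3_000)},
    "45-54": {"image_a": (45, 3_000), "image_b": (75, 3_000)},
}
print(winner_by_segment(results))
```

In this made-up data the two age groups prefer opposite images, a pattern that a single blended result would hide.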

Facebook A/B testing transforms advertising from guesswork into a systematic process of improvement. The mechanics are straightforward—isolate one variable, split the audience, measure results—but the impact compounds over time.

Each test builds knowledge about what resonates with the target audience. Those insights accumulate, informing not just ad optimization but broader marketing strategy.

The key is balance. Test enough to gain confidence, but not so much that action gets delayed. Use statistical significance as a guide, but don't let perfect become the enemy of good. And most importantly, implement what works.

Start with high-impact variables like creative and copy. Run tests with at least 80% estimated power. Document results systematically. Scale winners aggressively.

That approach, consistently applied, turns Facebook advertising into a predictable growth channel rather than an expensive experiment.

Ready to optimize Facebook ad performance? Set up the first A/B test today. Choose one variable that could meaningfully impact results, configure the test with adequate budget and duration, and let the data reveal what actually works for the audience. The insights gained from that single test will pay dividends across every future campaign.