Meta Ads Minimum Budget for Testing 2026: Real Numbers

Meta's $1 minimum won't work. Learn the actual minimum budget for testing Meta ads in 2026, learning phase requirements, and smart allocation strategies.

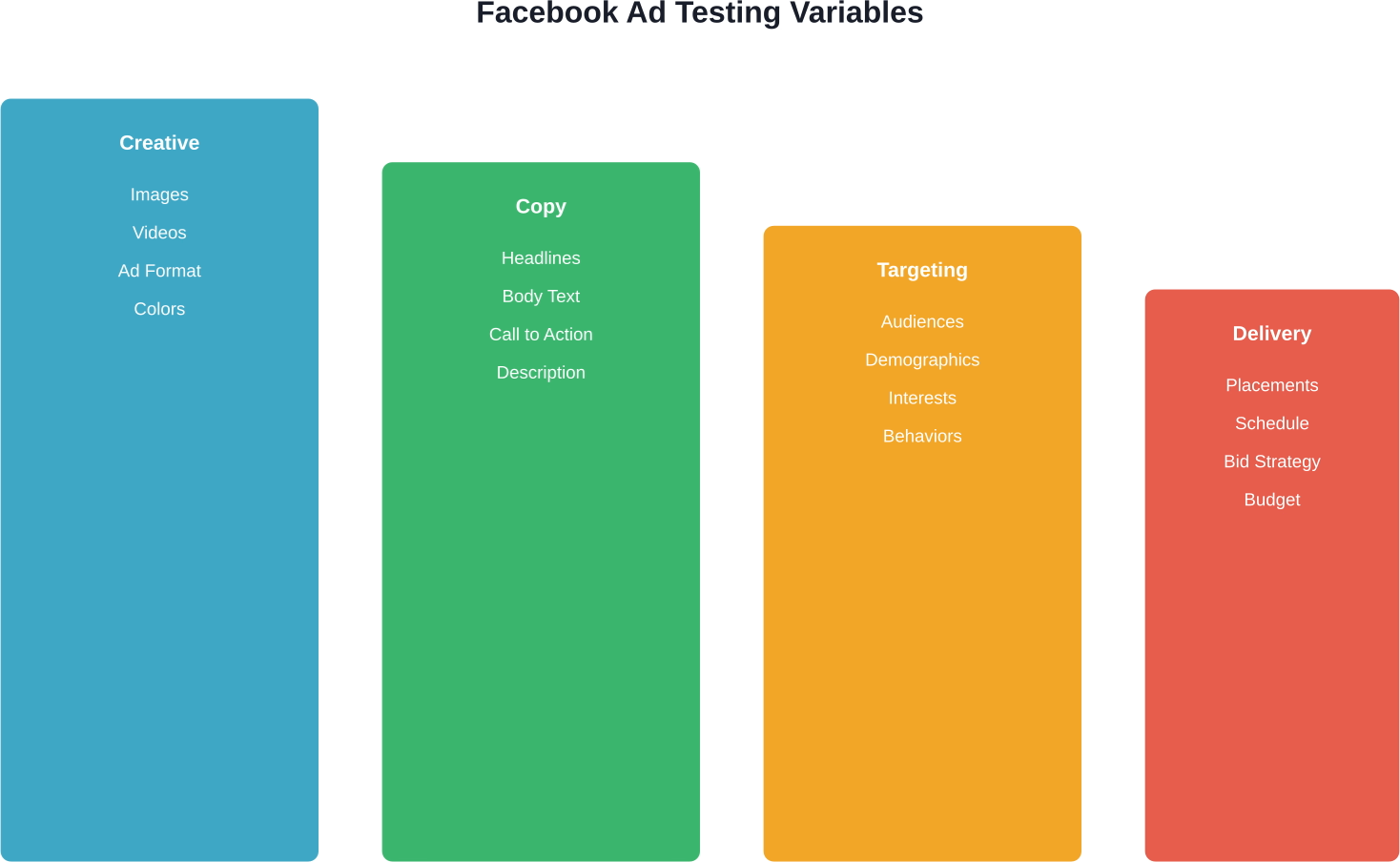

A/B testing Facebook ads involves creating multiple versions of your ads and systematically comparing their performance to identify which elements drive better results. By testing variables like images, headlines, audiences, and placements, marketers can make data-driven decisions that improve campaign performance and lower costs.

Running Facebook ads without testing is like throwing darts blindfolded. Sure, something might hit the target, but it's mostly guesswork.

According to Penn State Extension's article on A/B testing to improve online marketing, testing the impact of small changes can significantly improve marketing outcomes. The principle applies directly to Facebook advertising, where even minor variations in ad elements can dramatically affect performance.

But here's the thing—most marketers either skip A/B testing entirely or do it incorrectly. They test too many variables at once, don't run tests long enough, or misinterpret the results.

This guide breaks down exactly how to run Facebook A/B tests that actually produce actionable insights. No fluff, just the testing framework that works.

A/B testing, also called split testing, is a controlled experiment where two or more versions of an ad run simultaneously to determine which performs better.

The concept is straightforward. Create multiple ad versions that differ in one specific element. Show each version to a similar audience. Measure which version achieves better results based on your objective.

Facebook's native A/B testing tool handles the technical setup, ensuring audiences don't overlap and each version gets fair exposure. The platform automatically splits your budget and calculates statistical significance.

Penn State Extension's framework for A/B testing in online marketing emphasizes that effectiveness comes from testing one variable at a time. Change the headline in one test. Test different images in another. This isolation lets you pinpoint exactly what drives performance differences.

A 2024 Stanford Graduate School of Business article highlights that A/B testing has evolved significantly for the digital age. The increasing complexity of online platforms means testing methods must adapt to handle multiple variables and audience segments.

For Facebook advertisers, this matters because the platform reaches a large and varied global audience. Without testing, marketers rely on assumptions about what resonates with their specific audience segments.

Testing reveals the truth. An ad design that one team member loves might underperform compared to a simpler alternative. A targeting parameter that seems irrelevant could unlock significantly better results.

Facebook's advertising system offers numerous variables worth testing. Not all deserve equal attention, though.

Visual components often show the most dramatic performance differences. Images, videos, carousel formats—these catch attention first.

Test different image styles. A product photo versus a lifestyle shot. A person looking at the camera versus looking at the product. Background colors or environments.

Video length matters too. Does a 15-second clip outperform a 30-second version? What about square versus vertical formats?

Words drive action. Small copy changes can shift conversion rates significantly.

Headlines deserve priority testing. Try different value propositions, ask questions versus statements, or include numbers versus avoiding them.

Body text length varies. Some audiences respond to detailed explanations. Others prefer brevity. Testing reveals preferences for specific segments.

Call-to-action buttons offer limited but important testing opportunities. "Learn More" versus "Shop Now" versus "Sign Up"—the right choice depends on campaign objectives and audience readiness.

Different audience segments respond differently to identical ads. Testing audiences helps allocate budget toward the most responsive groups.

Compare broad versus narrow targeting. Test lookalike audiences based on different source groups. Experiment with interest combinations or exclude certain segments.

Age ranges and geographic locations often show surprising performance variations. An ad that works for 25-34-year-olds might flop with 45-54-year-olds.

Facebook offers multiple ad placements—Feed, Stories, Reels, right column, and more. Automatic placement lets Facebook optimize, but testing specific placements can reveal efficiency opportunities.

Instagram placements behave differently than Facebook ones. Stories demand different creative approaches than Feed ads. Testing helps match creative to placement.

A/B testing Facebook ads still depends on the quality of the ads going into the test. Extuitive predicts Facebook ad performance before launch. It uses AI models validated against live campaign results to help teams review creatives before putting budget behind them. That makes it relevant for advertisers who want another way to assess ads before running an A/B test.


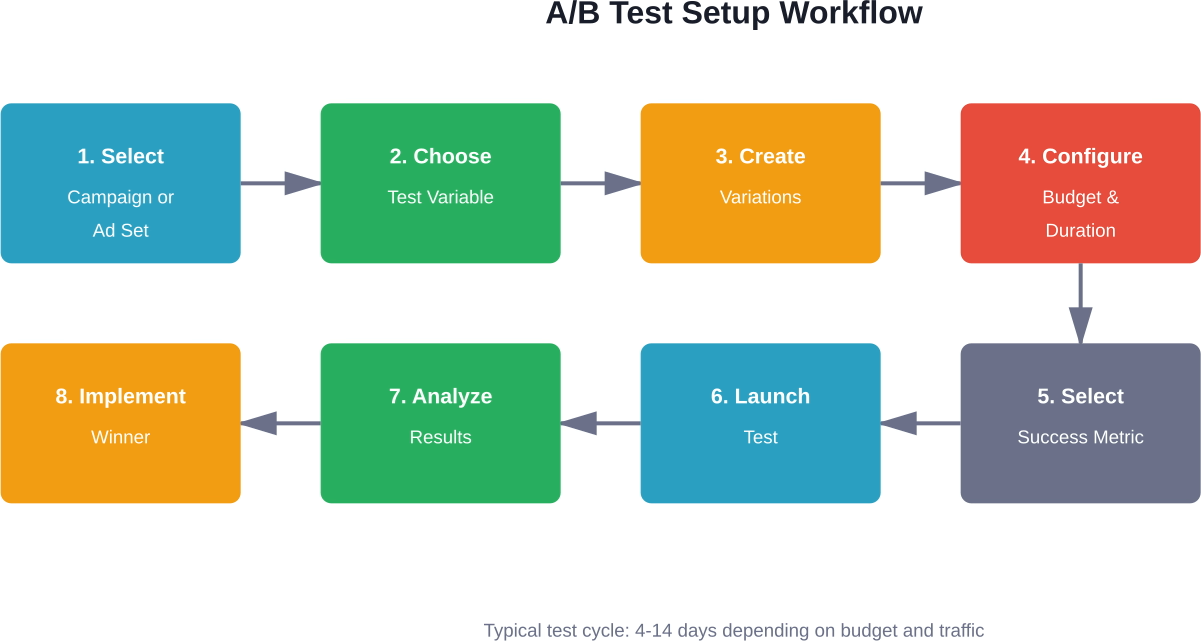

Facebook's native A/B testing tool lives in Ads Manager. The setup process is straightforward, but details matter.

Start by selecting a campaign or ad set to duplicate. Click the A/B Test button. Facebook guides you through variable selection and test parameters.

Facebook calculates test confidence levels automatically. The platform aims for 80% estimated power, meaning an 80% likelihood of detecting a meaningful difference if one exists.

Test duration affects reliability. Shorter tests might not capture day-of-week variations or audience behavior patterns. Longer tests provide more data but delay implementation of winning variations.

Budget size matters too. Tests with tiny budgets take longer to reach statistical significance. Facebook displays estimated power based on your settings, helping you balance duration and budget.
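To make the link between power, confidence, and audience size concrete, here is a minimal sketch of the textbook sample-size formula for comparing two rates. It is not Facebook's internal calculation; the function name, the 90% confidence default, and the example rates are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, expected_rate,
                              confidence=0.90, power=0.80):
    """Approximate people needed per variation to detect a rate difference.

    Uses the standard normal-approximation formula for a two-sided
    comparison of two proportions. Illustrative only; Ads Manager may
    compute its estimated power differently.
    """
    if not (0 < baseline_rate < 1 and 0 < expected_rate < 1):
        raise ValueError("rates must be between 0 and 1")
    if baseline_rate == expected_rate:
        raise ValueError("rates must differ to size a test")

    alpha = 1 - confidence
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)           # quantile for desired power

    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical example: 2.0% baseline conversion rate, hoping to detect 2.5%.
needed = sample_size_per_variation(0.020, 0.025)
print(f"About {needed:,} people per variation")
```

Smaller expected differences push the required sample up sharply, which is why tests of subtle changes on small budgets rarely reach significance.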

Running tests is easy. Getting meaningful results requires discipline.

Testing one variable at a time cannot be emphasized enough. Change the headline or change the image, not both simultaneously.

When multiple variables change, determining which caused performance differences becomes impossible. Was it the new headline or the new image? The data can't answer.

Sequential testing takes longer but produces actionable insights. Test creative first. Then test copy. Then test audiences. Each test builds knowledge.

Small sample sizes produce unreliable results. A test that reaches 100 people might show dramatic differences that disappear at scale.

Facebook's confidence calculation helps here, but general guidelines suggest a minimum of thousands of impressions per variation. For conversion-focused tests, aim for at least 100 conversions per variation if possible.

Budget and audience size determine how long it takes to reach an adequate sample size. Niche audiences or limited budgets may need extended test periods.
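As a rough planning aid, the arithmetic behind test duration can be sketched as follows. The function name and the dollar figures are hypothetical, and the sketch assumes the budget is split evenly across variations and that cost per conversion stays roughly constant, which real auctions do not guarantee.

```python
import math


def estimated_test_days(daily_budget, cost_per_conversion,
                        conversions_per_variation=100, variations=2):
    """Rough number of days needed to collect the target conversions.

    Assumes an even budget split and a stable cost per conversion.
    """
    if daily_budget <= 0 or cost_per_conversion <= 0:
        raise ValueError("budget and cost per conversion must be positive")
    conversions_per_day = daily_budget / cost_per_conversion
    total_needed = conversions_per_variation * variations
    return math.ceil(total_needed / conversions_per_day)


# Hypothetical inputs: total daily budget and a $20 cost per conversion.
print(estimated_test_days(daily_budget=100, cost_per_conversion=20))  # 40
print(estimated_test_days(daily_budget=50, cost_per_conversion=20))   # 80
```

Halving the budget doubles the estimated duration, so a small account may need to target a cheaper event (such as clicks) or accept fewer conversions per variation.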

Day of week matters. Weekend behavior differs from weekday behavior. A test running only Monday through Wednesday might miss important patterns.

Seasonal factors affect results too. Holiday periods, sales events, or industry-specific timing can skew data. Consider these when interpreting results.

What does "better" mean? Lower cost per click? Higher conversion rate? More link clicks?

Different metrics sometimes point to different winners. An ad with higher click-through rate might have lower conversion rate. Deciding which matters more beforehand prevents cherry-picking results afterward.
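A small sketch shows how two metrics can crown different winners from the same data. The `AdResult` class, the `winner` helper, and all figures are made up for illustration, and the sketch assumes every ad has at least one click and one conversion.

```python
from dataclasses import dataclass


@dataclass
class AdResult:
    name: str
    impressions: int
    clicks: int
    conversions: int
    spend: float

    @property
    def ctr(self):
        # Click-through rate: clicks per impression.
        return self.clicks / self.impressions

    @property
    def conversion_rate(self):
        # Conversions per click.
        return self.conversions / self.clicks

    @property
    def cost_per_conversion(self):
        return self.spend / self.conversions


def winner(results, metric, higher_is_better):
    """Name of the ad with the best value for the chosen metric."""
    pick = max if higher_is_better else min
    return pick(results, key=lambda r: getattr(r, metric)).name


# Hypothetical results with identical spend and impressions.
ads = [
    AdResult("A", impressions=10_000, clicks=300, conversions=9, spend=150.0),
    AdResult("B", impressions=10_000, clicks=200, conversions=10, spend=150.0),
]
print(winner(ads, "ctr", higher_is_better=True))                   # A
print(winner(ads, "cost_per_conversion", higher_is_better=False))  # B
```

Ad A attracts more clicks, but ad B converts them more cheaply, so the declared winner depends entirely on which metric was chosen before the test started.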

Facebook displays test results directly in Ads Manager. The Results column shows the winner once statistical significance is reached.

But the declared winner is just the starting point. Dig deeper into the data.

Facebook marks tests as significant when confidence reaches a threshold, typically 80-90%. This means the performance difference is unlikely to be random chance.

However, statistical significance doesn't guarantee practical significance. An ad that costs $0.02 less per click may be statistically different yet not justify a change in strategy.

Look at the magnitude of the difference, not just whether a difference exists. A 5% improvement might not warrant action. A 50% improvement certainly does.
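One way to judge practical significance is to translate the difference into a relative change and a projected dollar amount. The sketch below does that for the $0.02 example; the $0.80 baseline cost per click and the 5,000 monthly clicks are hypothetical figures, as are the function names.

```python
def relative_improvement(control_value, variant_value):
    """Fractional change of the variant relative to the control."""
    if control_value == 0:
        raise ValueError("control value must be non-zero")
    return (variant_value - control_value) / control_value


def projected_monthly_savings(cpc_control, cpc_variant, monthly_clicks):
    """Money saved per month if the click volume stays the same."""
    return (cpc_control - cpc_variant) * monthly_clicks


# Hypothetical: cost per click drops from $0.80 to $0.78.
change = relative_improvement(0.80, 0.78)
savings = projected_monthly_savings(0.80, 0.78, monthly_clicks=5_000)
print(f"{change:.1%} change in CPC, about ${savings:.2f} saved per month")
```

At that volume the change is a few percent and a modest monthly amount, which may or may not outweigh the effort of rebuilding campaigns around the new variation.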

The primary metric determines the winner, but secondary metrics provide context.

An ad that wins on cost per click might show concerning patterns in other areas. Perhaps click quality is lower. Perhaps bounce rate is higher. Perhaps downstream conversion suffers.

Review the full funnel. Optimization for one metric can negatively impact others.

Sometimes tests produce no clear winner. Performance is too similar or neither variation reaches significance.

This outcome provides value too. It confirms the tested variable doesn't strongly impact performance for this audience and objective. Focus testing efforts elsewhere.

Inconclusive results might also indicate insufficient test duration or sample size. Consider extending the test or increasing budget.

Even experienced marketers fall into testing traps. Recognizing these patterns helps avoid wasted budget and misleading conclusions.

Early results often mislead. An ad might start strong then fade, or vice versa. Patience matters.

Let tests run their full scheduled duration unless one variation performs dramatically worse and is burning budget unnecessarily. Facebook's duration recommendations exist for good reason.

The temptation to test everything at once is strong. Resist it.

Multivariate testing has its place, but it requires much larger sample sizes and more complex analysis. For most Facebook advertisers, simple A/B tests produce better insights with less complexity.

When manually creating test variants outside Facebook's native tool, audience overlap becomes a risk. The same person might see multiple versions, contaminating results.

Facebook's built-in testing prevents this. Custom setups require careful audience exclusion to maintain test integrity.

Changing "Buy Now" to "Purchase Now" probably won't move the needle. Neither will adjusting a shade of blue by 5%.

Test meaningful differences. Bold versus conservative creative. Discount versus no discount. Benefit-focused versus feature-focused copy.

Small refinements matter eventually, but start with big swings. Find major performance drivers first, then optimize details.

Once basic testing becomes routine, more sophisticated approaches can extract additional value.

Build a testing roadmap. After identifying winning creative, test copy variations with that creative. After finding optimal copy, test audiences.

Each test narrows in on the combination that maximizes performance. This systematic approach compounds improvements over time.

Document results. A testing log prevents redundant tests and builds institutional knowledge about what works for specific products or audiences.

Holdback testing measures incremental impact by withholding ads from a control group. Facebook supports brand lift studies using this methodology for brand awareness campaigns.

Holdback testing answers: "Does this advertising actually drive results, or would these conversions happen anyway?" The insight is valuable but requires larger budgets to implement effectively.

Cold audiences respond differently than warm audiences or past customers. What works for prospecting might fail for retargeting.

Test the same variables separately for different funnel stages. Creative that attracts new prospects might not be ideal for re-engaging cart abandoners.

Facebook's native testing tools handle most needs, but additional resources can streamline processes.

Ads Manager's A/B test function is the starting point. It handles technical setup, audience splitting, and statistical calculations automatically.

Dynamic creative is another native option. Upload multiple headlines, images, descriptions, and calls-to-action. Facebook automatically tests combinations and serves top performers.

This approach sacrifices some control but works well when testing numerous creative variations quickly.

Various platforms offer enhanced testing capabilities—more sophisticated statistical analysis, easier test management across multiple accounts, or better reporting visualizations.

These tools add cost and complexity. For most advertisers, Facebook's native features suffice. Larger organizations managing dozens of simultaneous tests might benefit from additional infrastructure.

Online significance calculators help verify Facebook's results or analyze tests run outside the native tool. Input impressions, clicks, and conversions to calculate confidence levels.
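Many of these calculators run some form of a two-proportion z-test. The sketch below is one common version, not necessarily the method any particular calculator or Facebook itself uses; the function name and example counts are illustrative, and "confidence" here is simply one minus the two-sided p-value.

```python
import math
from statistics import NormalDist


def two_proportion_test(successes_a, trials_a, successes_b, trials_b):
    """Two-sided z-test for a difference between two rates.

    Returns (z, p_value). Works for clicks per impression or
    conversions per click, as long as each trial is independent.
    """
    if trials_a <= 0 or trials_b <= 0:
        raise ValueError("each variation needs at least one trial")
    rate_a = successes_a / trials_a
    rate_b = successes_b / trials_b
    pooled = (successes_a + successes_b) / (trials_a + trials_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / trials_a + 1 / trials_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation in the data, so nothing to detect
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical: 200 conversions from 10,000 clicks vs 260 from 10,000.
z, p = two_proportion_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}, confidence about {1 - p:.1%}")
```

The normal approximation behind this test becomes unreliable when either variation has only a handful of conversions, so treat results from very small samples with caution.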

These calculators are particularly useful when comparing historical campaign performance to new approaches.

Theory is useful. Examples make concepts concrete.

An online retailer might test product photography styles. White background versus lifestyle setting. Single product versus multiple products. Model wearing item versus flat lay.

Results often vary by product category. Fashion items might perform better with lifestyle shots. Electronics might convert better with clean product images.

Testing reveals these category-specific patterns, enabling different creative strategies for different product types.

Form length significantly impacts lead generation performance. Longer forms gather more information but may reduce completion rates.

Testing different form lengths helps balance lead quantity versus lead quality. Sometimes fewer, higher-quality leads deliver better ROI than larger volumes of low-quality leads.

Value proposition matters too. Testing different offers—free guide versus free consultation versus discount—identifies what motivates the target audience.

Local businesses benefit from testing geographic radius. Does a 10-mile radius perform better than 25 miles? What about targeting by zip code versus city?

Schedule testing matters for local advertisers too. Restaurants might find lunch hours perform differently than dinner hours. Service businesses might see weekend versus weekday differences.

Testing only creates value when insights inform decisions and actions.

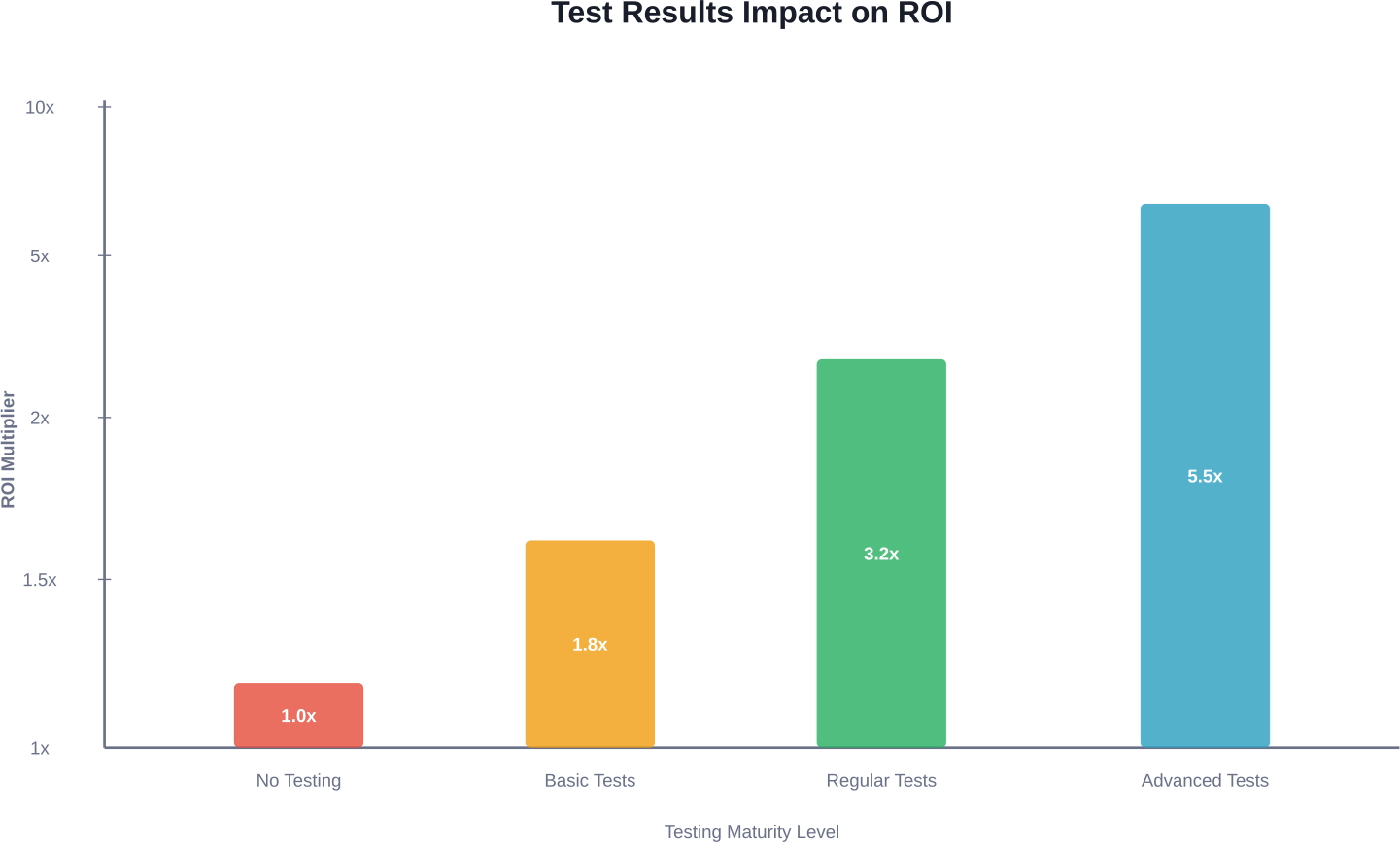

Organizations that test consistently outperform those that test occasionally. Making testing routine rather than exceptional changes outcomes.

Allocate a percentage of budget specifically for testing. This ensures testing continues even when budgets tighten.

Share test results across teams. What works in Facebook ads might inform email marketing, landing page design, or product positioning.

A winning test variation with a $50 daily budget might not maintain performance at a $500 daily budget. Audience sizes, auction dynamics, and saturation effects change at scale.

Scale gradually. Increase budget by 20-30% every few days while monitoring performance. Dramatic budget increases can destabilize campaign delivery.
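The gradual ramp can be laid out as a simple schedule. This sketch assumes a three-day interval as one reading of "every few days"; the function name and defaults are illustrative, and actual pacing should follow what the performance data shows.

```python
def scaling_schedule(start_budget, target_budget,
                     increase=0.20, days_between=3):
    """Return (day, daily_budget) steps from the start to the target budget.

    Each step raises the budget by `increase` and is capped at the target.
    """
    if start_budget <= 0 or increase <= 0 or days_between <= 0:
        raise ValueError("budget, increase, and interval must be positive")
    day, budget = 0, float(start_budget)
    schedule = [(day, round(budget, 2))]
    while budget < target_budget:
        budget = min(budget * (1 + increase), float(target_budget))
        day += days_between
        schedule.append((day, round(budget, 2)))
    return schedule


# Ramp from $50 to $500 per day at 20% per step.
plan = scaling_schedule(50, 500)
for day, budget in plan:
    print(f"Day {day:>2}: ${budget:,.2f}")
```

At 20% per step, moving from $50 to $500 takes 13 increases, or roughly 39 days at this interval; at 30% it takes 9 increases. That is the cost of avoiding the delivery instability that large jumps can cause.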

Test results aren't permanent. Audience preferences shift. Competitors change tactics. Creative fatigues.

Retest winning variations periodically. Quarterly retests make sense for evergreen campaigns. Seasonal businesses might retest each season.

Major platform changes warrant retesting too. When Facebook introduces new ad formats or targeting options, previous conclusions may no longer hold.

Small budgets don't eliminate testing opportunities, but they require strategic choices.

With limited resources, focus on variables likely to produce meaningful differences. Test audiences before testing minor copy tweaks. Test creative before testing CTA buttons.

Impact potential should guide testing priority. Changes affecting more people or larger purchase decisions deserve attention first.

When budget doesn't support simultaneous testing, sequential comparison against historical performance offers an alternative.

Run the current approach for two weeks. Document performance. Switch to the new approach for two weeks. Compare results.

This method is less rigorous than simultaneous testing but works when resources are constrained. Control for seasonality and external factors as much as possible.

Facebook and Instagram live within the same advertising platform, but user behavior differs between them.

According to eMarketer data from 2026, 70% of Gen Z consumers say user-generated content (UGC) is very helpful to their buying journey, with 60% of all consumers considering UGC the most genuine form of advertising. This preference particularly affects Instagram, where UGC-style content often outperforms polished brand content.

Test the same ads separately on each platform. An ad that succeeds on Facebook might underperform on Instagram, or vice versa. Visual styles, copy length, and format preferences vary.

Instagram users typically prefer more visual, less text-heavy ads. Facebook users often engage with longer-form content. These generalizations don't apply universally—testing reveals platform preferences for specific audiences.

A/B testing transforms Facebook advertising from guesswork into science. Every test produces insights. Every insight informs better decisions. Better decisions drive better results.

The process is straightforward—isolate one variable, create meaningful variations, run tests long enough to reach significance, analyze results thoroughly, and implement winners systematically.

Consistency matters more than sophistication. Regular testing of basic variables outperforms occasional testing of complex combinations. Start simple. Test one element at a time. Build knowledge incrementally.

Testing never truly ends. Markets change, audiences evolve, and competitors adapt. What works today might not work next quarter. Continuous testing keeps campaigns optimized as conditions shift.

Start testing today. Pick one ad currently running. Create one variation changing a single element. Set up the test in Ads Manager. Let it run for a week. Analyze results and implement the winner.

That's one test. One insight. One improvement. Repeat this process consistently, and compound effects will dramatically improve campaign performance over time.