Split testing Facebook ads means running controlled experiments that change one variable at a time (audience, creative, or placement) to determine which version performs better. Facebook's native split testing tool divides your budget evenly between variations and delivers each to similar audiences, which supports statistically valid results and helps optimize campaign performance and ROI.

Split testing Facebook ads isn't optional anymore. It's the difference between guessing what works and knowing exactly what drives results.

Every advertiser faces the same challenge: which creative resonates? Which audience converts? Which placement delivers the best return? Without split testing, those questions get answered with hunches instead of data.

The platform itself has evolved significantly. Meta's built-in split testing tools now handle the statistical heavy lifting, but that doesn't mean running effective tests is automatic. Common mistakes still waste budgets daily—testing too many variables at once, ending tests too early, or misinterpreting results.

Here's the thing though—split testing done right transforms campaign performance. The approach separates profitable campaigns from money pits.

Split testing, also called A/B testing, is a controlled experiment where two or more versions of an ad run simultaneously to determine which performs better. Facebook's split testing feature creates separate, randomized audiences for each variation and distributes budget evenly between them.

The key word there? Controlled. Unlike simply running multiple ad sets, Facebook's split testing tool ensures each variation gets exposed to comparable audiences under similar conditions. This reduces bias from uneven delivery and makes the comparison statistically meaningful.

According to a Journal of Marketing study published by the American Marketing Association (2025), traditional A/B testing on digital ad platforms faces a hidden challenge called "divergent delivery": platform algorithms personalize ad delivery in ways that can skew results. When testing two different ads, the platform may show them to fundamentally different audience segments, making it difficult to isolate what actually caused performance differences.

That's exactly why proper split testing methodology matters. Testing one variable at a time while keeping everything else constant allows advertisers to identify the true performance driver.

The advertising landscape changes constantly. What worked last quarter might fail today. Audience preferences shift. Creative fatigue sets in. Platform algorithms evolve.

Split testing provides the feedback loop needed to adapt. Rather than relying on assumptions about what audiences want, testing reveals actual preferences through behavior.

Research from Drexel University's LeBow College of Business (2019) highlights an important consideration: while A/B testing has become standard for determining which marketing communications resonate with consumers, spending too much time testing can actually hinder profits in some cases. The research led to the development of better approaches for determining optimal sample sizes and test duration.

That's the balance advertisers need to strike—testing enough to gain valid insights, but not so much that opportunity costs outweigh the benefits.

Community discussions among advertisers consistently emphasize that even with great creative and compelling offers, there's no guarantee an ecommerce store will perform well without testing different audiences. The algorithm's predictions about which audiences will perform don't always match reality when campaigns actually run.

Split testing starts with the ads you choose to run. Extuitive predicts Facebook ad performance before launch, helping teams review creatives before they enter the test. It is built to compare ad options early, before budget is spent on running them.

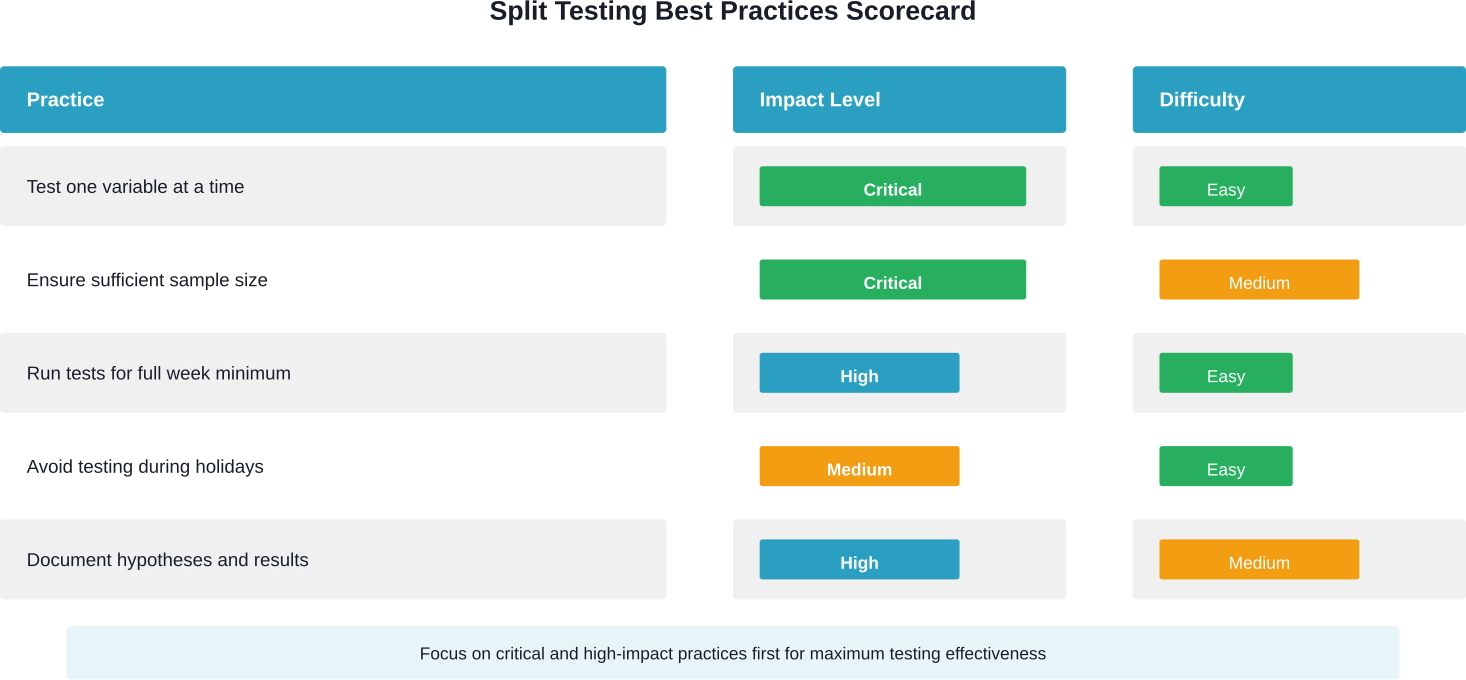

Not all variables deserve equal testing priority. Some drive significant performance differences, while others produce negligible impact. Focus testing efforts on high-impact variables first.

Audience tests often produce the most dramatic performance differences. The same creative shown to different demographics, interests, or behaviors can generate vastly different conversion rates.

Test broad audiences against narrow ones. Compare cold audiences to warm remarketing segments. Experiment with lookalike percentages—1% versus 5% lookalikes often perform differently.

Geographic targeting matters too. What works in one region may flop in another due to cultural differences, economic factors, or competitive landscapes.

Creative testing encompasses images, videos, copy, and design elements. This is where many advertisers start, though audience testing frequently delivers bigger wins.

For image tests, try different compositions, color schemes, or subject matter. Video length makes a difference—short-form versus long-form content appeals to different consumption preferences.

Ad copy deserves systematic testing too. Headlines drive attention, so test different hooks. Body copy should address various pain points or benefits to see which messaging resonates.

The Journal of Marketing study (published by AMA in 2025) provides a relevant example: a landscaping company tested two advertisements—one focused on sustainability, another on aesthetics. Platform personalization meant these ads reached groups with different interests, demonstrating how creative choices interact with algorithmic delivery.

Facebook offers numerous placement options: News Feed, Stories, Reels, right column, Audience Network, and more. Each placement has different user contexts and engagement patterns.

Automatic placements let the algorithm optimize, but testing specific placements reveals where ads perform best. Feed placements might excel for detailed product showcases, while Stories work better for quick-hit brand awareness.

Single image, carousel, video, collection, instant experience—format choice influences how audiences interact with content. Carousels allow showcasing multiple products or telling sequential stories. Videos demonstrate products in action.

Test formats that align with campaign objectives. Lead generation might benefit from instant experiences, while catalog sales could leverage collection ads.

Facebook's native split testing tool simplifies the technical setup, but strategic decisions still matter.

Running tests is straightforward. Running tests that produce actionable insights requires more discipline.

Test one variable at a time. This rule can't be emphasized enough. Testing audience and creative simultaneously leaves no way to determine which one drove the performance difference. Was the winning variation better because of the audience, the creative, or the specific combination?

Isolate variables. Test audiences first to identify the best target market. Then test creative variations for that winning audience. Build knowledge sequentially.

Small sample sizes produce unreliable results. A variation might appear to win with 50 clicks, but that difference could easily be random chance.

Facebook's algorithm determines when results reach statistical significance, but generally plan for at least 1,000 impressions per variation for basic tests. Conversion-focused tests need more—ideally 100+ conversions per variation for confident conclusions.
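To see why a small sample can mislead, here is a minimal sketch of a textbook two-proportion z-test. It is a standard statistics technique, not a description of how Meta computes significance. The function name and the sample counts are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_a / conv_b: conversions for variations A and B
    n_a / n_b: clicks (or impressions) for variations A and B
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both variations perform the same
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same observed rates (10% vs 16%), very different amounts of data
z_small, p_small = two_proportion_z_test(5, 50, 8, 50)
z_large, p_large = two_proportion_z_test(100, 1000, 160, 1000)
print(f"50 clicks each:    p = {p_small:.3f}")
print(f"1,000 clicks each: p = {p_large:.5f}")
```

With 50 clicks per variation the gap is well within what chance could produce. With 1,000 clicks per variation the same gap clears the conventional 0.05 threshold.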

Day-of-week effects and time-of-day patterns influence ad performance. A test that runs only on weekdays might miss weekend behavior differences.

Run tests for at least four days, preferably a full week, to capture daily variation patterns. This ensures results represent typical performance rather than temporary fluctuations.
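A quick way to sanity-check a planned schedule is to list which weekdays it actually covers. The helper names below are hypothetical, and the start date is just an example Monday.

```python
from datetime import date, timedelta


def weekdays_covered(start, duration_days):
    """Return the set of weekdays (0=Monday ... 6=Sunday) a test will run on."""
    return {(start + timedelta(days=i)).weekday() for i in range(duration_days)}


def covers_full_week(start, duration_days):
    """True when the test runs on every day of the week at least once."""
    return len(weekdays_covered(start, duration_days)) == 7


start = date(2026, 1, 5)  # a Monday
print(covers_full_week(start, 4))  # four-day test starting Monday skips the weekend
print(covers_full_week(start, 7))  # a full week covers every weekday
```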

Holidays, major events, or promotional periods create atypical user behavior. Test results from Black Friday won't predict normal performance in February.

Schedule tests during representative periods. If running evergreen campaigns, test during normal business weeks, not during major shopping events or industry-specific peak seasons.

Memory fails. Assumptions change. What seemed obvious during setup becomes fuzzy weeks later.

Record test hypotheses before launching. Document which variable was tested, why, and what outcome was expected. When results arrive, compare actual performance against predictions. This builds institutional knowledge about what works.
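One lightweight way to keep that record is a small structured log entry. The sketch below is only a suggestion. The class and field names are hypothetical, and a spreadsheet with the same columns would work just as well.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SplitTestRecord:
    """One entry in a test log, written before launch and completed afterwards."""
    name: str
    variable: str          # the single variable being tested
    hypothesis: str        # why a difference is expected
    expected_winner: str   # prediction recorded before launch
    start: date
    actual_winner: Optional[str] = None  # filled in when results arrive

    def prediction_held(self) -> Optional[bool]:
        """Compare the outcome with the prediction; None while the test is running."""
        if self.actual_winner is None:
            return None
        return self.actual_winner == self.expected_winner


record = SplitTestRecord(
    name="Lookalike size test",
    variable="audience",
    hypothesis="A 1% lookalike is closer to existing buyers than a 5% lookalike",
    expected_winner="lookalike_1pct",
    start=date(2026, 1, 5),
)
record.actual_winner = "lookalike_5pct"
print(record.prediction_held())
```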

Facebook declares winners automatically once statistical significance is reached, but surface-level metrics don't tell the complete story.

Look beyond the winning variation to understand why it won. Did it drive lower cost per result? Higher conversion rates? Better quality leads that closed at higher rates?

Check metrics across the funnel. A variation might generate cheaper clicks but lower-quality traffic that doesn't convert. Conversely, higher cost-per-click might be worthwhile if conversion rates increase proportionally.
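The arithmetic behind that tradeoff is simple enough to sketch. The cost-per-click and conversion-rate figures below are invented for illustration and do not come from any real campaign.

```python
def cost_per_conversion(cost_per_click, conversion_rate):
    """Cost of one conversion given click cost and click-to-conversion rate."""
    if conversion_rate <= 0:
        raise ValueError("conversion_rate must be positive")
    return cost_per_click / conversion_rate


# Variation A: cheaper clicks, weaker traffic
cpa_a = cost_per_conversion(cost_per_click=0.50, conversion_rate=0.01)
# Variation B: pricier clicks, stronger traffic
cpa_b = cost_per_conversion(cost_per_click=0.80, conversion_rate=0.02)

print(f"A: ${cpa_a:.2f} per conversion")
print(f"B: ${cpa_b:.2f} per conversion")
```

In this example variation B pays 60% more per click but still ends up with the lower cost per conversion, because its conversion rate doubles.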

Consider external factors that might have influenced results. Did any variations run during times with different competitive intensity? Were there external events that could have affected one variation more than others?

Statistical significance means results likely weren't due to chance, but it doesn't guarantee practical significance. A variation that wins by 2% might be statistically significant but operationally irrelevant if the difference doesn't meaningfully impact profitability.
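A back-of-the-envelope check can help separate the two kinds of significance. The sketch below uses hypothetical numbers (monthly conversions, profit per conversion, and the cost of switching creatives) purely to show the comparison.

```python
def extra_monthly_profit(baseline_conversions, relative_lift, profit_per_conversion):
    """Additional monthly profit implied by a relative lift in conversions."""
    return baseline_conversions * relative_lift * profit_per_conversion


def worth_acting_on(gain, switching_cost):
    """A lift is practically significant only if it outweighs the cost of acting on it."""
    return gain > switching_cost


# Assumed inputs: 500 conversions a month, $30 profit each, a 2% lift,
# and $400 to produce and roll out the winning creative everywhere.
gain = extra_monthly_profit(500, 0.02, 30)
print(f"Extra profit per month: ${gain:.2f}")
print("Worth acting on this month:", worth_acting_on(gain, switching_cost=400))
```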

Even experienced advertisers fall into testing traps that waste budgets and produce misleading results.

Impatience kills test validity. Checking results after 100 impressions and picking a winner based on early fluctuations throws away the entire point of controlled testing.

Trust Facebook's significance indicators. If the platform hasn't declared a winner yet, the test needs more data. Period.

Testing audience, creative, placement, and copy simultaneously creates a combinatorial explosion. With four variables and two options each, that's sixteen possible combinations. Getting statistically significant results for sixteen variations requires massive budgets and extended timelines.

Narrow focus produces faster insights. Test one thing, find a winner, then test the next variable.
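The count of sixteen is easy to verify, and so is the saving from testing sequentially. The option names in this sketch are placeholders, not recommendations.

```python
from itertools import product

# Four variables with two options each (placeholder names)
options = {
    "audience": ["broad", "lookalike_1pct"],
    "creative": ["image", "video"],
    "placement": ["feed", "stories"],
    "copy": ["benefit_hook", "pain_point_hook"],
}

# Testing everything at once: every combination becomes its own variation
full_factorial = list(product(*options.values()))

# Testing one variable at a time: each test only needs that variable's options
sequential_cells = sum(len(values) for values in options.values())

print(len(full_factorial))   # 16 variations running simultaneously
print(sequential_cells)      # 8 variations in total, spread over four tests
```

Each of the sixteen simultaneous variations would need its own share of budget to reach significance, while the sequential route never runs more than two at a time.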

When testing audiences manually (outside Facebook's split testing tool), overlapping audiences create contamination. The same person might see multiple variations, which defeats the purpose of controlled comparison.

Facebook's split testing tool prevents this by creating exclusive audience segments. But when running separate ad sets, audience overlap becomes a problem.

Ten conversions per variation isn't enough to declare a winner confidently. Small samples produce wide confidence intervals—the true performance could be anywhere within a broad range.

The more conversions collected, the narrower the confidence interval and the more reliable the conclusion. Aim for at least 100 conversions per variation for conversion-focused tests.
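To make the width of those intervals concrete, the sketch below computes a 95% Wilson score interval, one common choice for proportions. It illustrates the statistical idea only and is not how Facebook reports confidence. The click and conversion counts are invented.

```python
import math


def wilson_interval(conversions, trials, z=1.96):
    """Wilson score confidence interval for a conversion rate (z=1.96 is about 95%)."""
    p = conversions / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width


# Same observed 2% conversion rate, ten times more data in the second case
low_10, high_10 = wilson_interval(10, 500)
low_100, high_100 = wilson_interval(100, 5000)

print(f"10 conversions:  {low_10:.2%} to {high_10:.2%}")
print(f"100 conversions: {low_100:.2%} to {high_100:.2%}")
```

With ten conversions the plausible range for the true rate is several times wider than with one hundred, which is why two variations can look different early on and converge later.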

Once basic split testing becomes routine, more sophisticated approaches unlock additional optimization opportunities.

Rather than one-off tests, sequential testing builds on previous results. Test audiences first, identify the winner, then test creative variations for that winning audience. Next, test placements for the winning audience-creative combination.

This layered approach compounds improvements. A 20% improvement from audience testing, followed by 15% from creative optimization, and another 10% from placement refinement creates substantial cumulative gains.
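Taking those three example percentages at face value, and assuming each improvement multiplies on top of the previous one (an assumption, since real gains rarely stack this cleanly), the cumulative effect works out as follows.

```python
import math

# Example lifts from the paragraph above: audience, creative, placement
lifts = [0.20, 0.15, 0.10]

# Compounded: each gain applies to the already-improved baseline
compounded = math.prod(1 + lift for lift in lifts) - 1

# Naive sum, shown for comparison
added = sum(lifts)

print(f"Compounded improvement: {compounded:.1%}")  # 51.8%
print(f"Simple sum:             {added:.1%}")       # 45.0%
```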

When scaling winning variations, set aside a small percentage of budget to continue testing new approaches. The current winner won't stay optimal forever—creative fatigue, audience saturation, and competitive changes erode performance over time.

Allocate 10-20% of budget to ongoing testing. This creates a pipeline of validated alternatives ready to deploy when the current approach deteriorates.

Instead of testing two variations, test three or four simultaneously. This accelerates learning when multiple viable options exist.

The tradeoff? Each variation receives less budget and takes longer to reach significance. Multi-cell testing works best with higher budgets or when testing variables expected to produce large performance differences.

Split testing isn't always the right answer. Some situations call for different approaches.

Very small budgets struggle to generate sufficient data for meaningful tests. With a daily budget of only $10, weeks might pass before reaching statistical significance. In these cases, rapid iteration based on directional data might be more practical than rigorous testing.
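A rough estimate shows how long a small budget would take to collect 100 conversions per variation. The $5 cost per conversion is an assumed figure, and the function name is hypothetical.

```python
import math


def days_to_reach_target(daily_budget, cost_per_conversion, variations, target_per_variation):
    """Estimate test length, assuming budget is split evenly and costs stay constant."""
    total_spend_needed = cost_per_conversion * target_per_variation * variations
    return math.ceil(total_spend_needed / daily_budget)


# Two variations, 100 conversions each, assumed $5 per conversion
print(days_to_reach_target(10, 5, 2, 100))    # 100 days at $10 per day
print(days_to_reach_target(500, 5, 2, 100))   # 2 days at $500 per day
```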

Brand new campaigns lack baseline data to inform test design. Run initial campaigns to understand general performance before optimizing through split testing.

Highly seasonal or event-driven campaigns don't allow time for proper testing. When advertising for a one-time event two weeks away, there's no opportunity to test, learn, and optimize. Launch with best practices and accept that testing won't be possible.

Winning a test is just the beginning. The real value comes from applying those insights to scale successful campaigns.

When a clear winner emerges, don't immediately dump the entire budget into that variation. Scale gradually—increase budget by 20-50% every few days while monitoring performance stability.

Rapid budget increases can disrupt the algorithm's optimization and cause performance to deteriorate. Gradual scaling gives the system time to adjust and find additional high-quality conversions.
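A scaling plan along those lines can be written out in advance. The sketch below assumes a 20% increase every three days from a $100 starting budget. Those values are examples within the range mentioned above, not a prescription.

```python
def scaling_schedule(start_budget, step_increase, days_between_steps, steps):
    """Return (day, daily_budget) pairs for a gradual, compounding budget ramp."""
    schedule = []
    for i in range(steps + 1):
        budget = round(start_budget * (1 + step_increase) ** i, 2)
        schedule.append((i * days_between_steps, budget))
    return schedule


for day, budget in scaling_schedule(100, 0.20, 3, 4):
    print(f"Day {day:>2}: ${budget:.2f}")
```

At this pace the daily budget roughly doubles in twelve days, with a checkpoint at every step to confirm that cost per result is holding before the next increase.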

Apply learnings across campaigns. If a particular audience performs well in one campaign, test it in others. If a creative style wins consistently, develop more assets in that direction.

Testing creates institutional knowledge about what resonates with target audiences. Building a library of documented test results creates a competitive advantage—insights that inform strategy across all marketing efforts, not just individual campaigns.

The most successful advertisers never stop testing. Each campaign, each quarter, each product launch brings new opportunities to refine targeting, messaging, and creative approaches. Split testing transforms advertising from guesswork into a systematic process for continuous improvement.

Ready to optimize campaign performance? Start with a single split test on the highest-impact variable—audience targeting. Let data reveal which segments convert best, then build from there. The difference between mediocre and exceptional campaign performance often comes down to consistent, disciplined testing.