Facebook's Test and Learn tool provides data-driven experimentation for ad campaigns, allowing advertisers to run controlled A/B tests and measure true incrementality. The platform enables comparison of audience segments, creative variations, and campaign strategies through structured experiments that eliminate confounding variables inherent in traditional ad reporting.

Analyzing Facebook ad campaigns often feels like navigating a maze of metrics without a clear path forward. Conversion numbers fluctuate. Attribution windows shift. And determining what actually drives results becomes guesswork wrapped in spreadsheets.

Facebook introduced the Test and Learn tool specifically to solve this measurement challenge. Unlike standard campaign reporting that shows correlation, this platform reveals causation through controlled experiments.

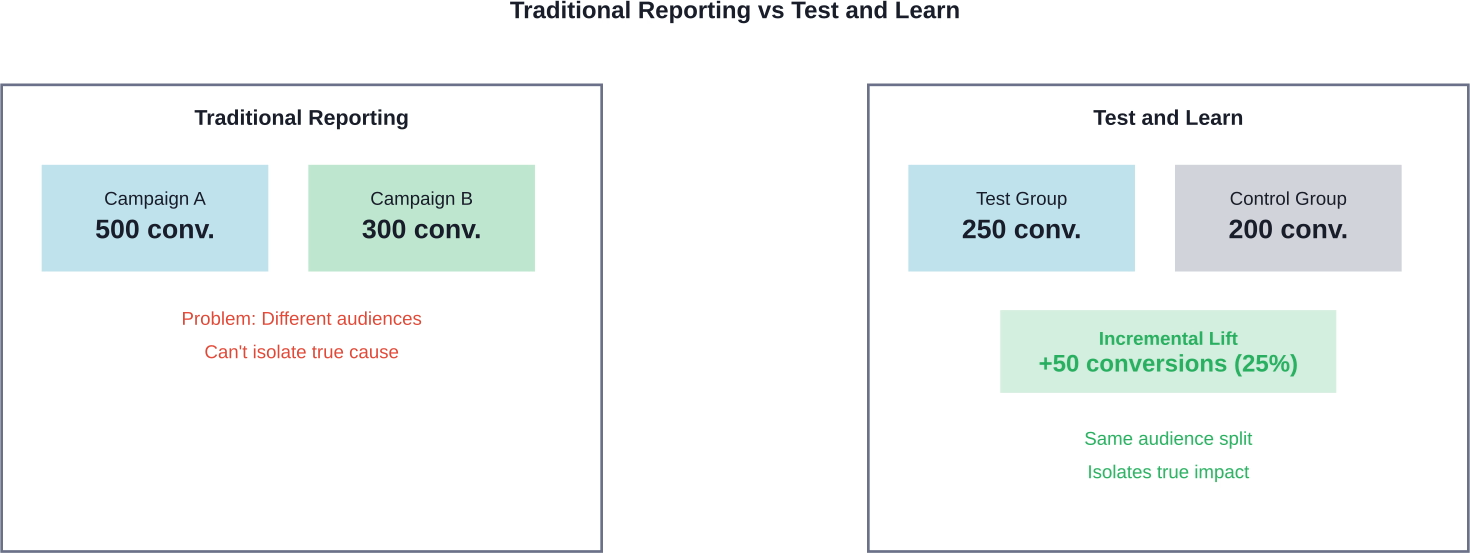

The distinction matters. Regular reporting might show that Campaign A generated 500 conversions while Campaign B generated 300. But did Campaign A truly perform better, or did it simply reach an audience already primed to convert? Test and Learn answers that question definitively.

Facebook's Test and Learn tool operates as an experimentation platform within Meta for Business. It runs controlled tests that isolate specific variables while holding other factors constant.

The platform measures incrementality, not just correlation. This means determining whether a particular strategy caused an increase in conversions, rather than merely being associated with them.

Standard conversion reporting shows outcomes. Test and Learn reveals which actions produced those outcomes. The difference shapes how advertisers allocate budgets and develop creative strategies.

Here's how it works fundamentally: The tool splits audiences or campaigns into control and test groups, applies a specific treatment to the test group, then measures the difference in outcomes. Statistical analysis determines whether observed differences reflect genuine impact or random variation.

Traditional Facebook campaign reporting aggregates conversion data across all users who saw ads. It attributes conversions based on touchpoint interactions within specified windows.

Test and Learn operates differently. It establishes a control group that doesn't receive the treatment being tested. This baseline comparison reveals the true lift generated by the test variable.

Consider a practical scenario: Standard reporting shows 1,000 conversions from a campaign. Test and Learn might reveal that only 200 of those conversions were incremental—the campaign caused 200 additional conversions beyond what would have occurred naturally.
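The arithmetic behind that scenario can be sketched in a few lines. The group sizes and the control-group conversion count below are hypothetical numbers chosen to reproduce the 1,000 versus 200 example, and the function name is illustrative rather than part of any Meta API.

```python
def incremental_conversions(test_conversions, test_size,
                            control_conversions, control_size):
    """Conversions in the test group beyond what the control rate predicts."""
    # Scale the control group's conversions to the size of the test group.
    expected_without_ads = control_conversions * test_size / control_size
    return test_conversions - expected_without_ads


# Hypothetical study with equal-sized groups of 100,000 users.
incremental = incremental_conversions(
    test_conversions=1_000, test_size=100_000,
    control_conversions=800, control_size=100_000,
)
print(incremental)  # 200.0
```

Under these assumed numbers, 800 of the 1,000 reported conversions would have happened without the campaign.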

According to research published by the American Marketing Association, this distinction becomes critical when platforms personalize ad delivery. Users interested in specific topics receive certain ads, while different users see alternative creative. This targeting creates diverging audience mixes that confound simple performance comparisons.

The study examined a landscaping company that created two advertisements—one focused on sustainability, another on aesthetics. Platform personalization delivered these ads to fundamentally different audience segments. Traditional reporting couldn't isolate whether creative differences or audience composition drove performance variations.

Facebook Test and Learn helps measure results after campaigns run. Extuitive is built for an earlier stage. It helps teams predict ad performance before launch, using AI models trained against real campaign outcomes. That makes it useful when ads need to be reviewed before budget is spent on them.

The Test and Learn tool lives within Meta for Business, though not all advertisers immediately see it in their interface. Access typically requires meeting specific spending thresholds or working with a Facebook business partner.

Advertisers who qualify can find the tool through their Business Manager dashboard. Navigate to the Analytics section, where Test and Learn appears alongside Events Manager and other measurement tools.

The interface displays past experiments, active tests, and options to create new studies. Three primary test types are available: Conversion Lift, Brand Lift, and Holdout tests.

Account requirements vary. Smaller advertisers might not see the option initially, though Facebook periodically expands access. Those without direct access can still apply testing principles through campaign split testing and A/B test features available in Ads Manager.

Facebook offers multiple testing frameworks, each designed for specific measurement goals. Understanding which test type fits particular scenarios determines experimental success.

Conversion Lift tests measure whether ads drive incremental purchases, sign-ups, or other conversion events. The platform randomly splits a target audience into test and control groups.

The test group sees ads normally. The control group doesn't receive ads from the tested campaign. Facebook then measures conversion rates in both groups and calculates the lift attributed to ad exposure.

This methodology isolates the causal impact of advertising. If the test group converts at 5% and the control group at 4%, the campaign generated a one-percentage-point absolute lift, which is a 25% relative lift in conversion rate.
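As a minimal sketch, the lift calculation for the 5% versus 4% example looks like this. The function names are illustrative only.

```python
def relative_lift(test_rate, control_rate):
    """Relative increase of the test conversion rate over the control rate."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (test_rate - control_rate) / control_rate


def absolute_lift(test_rate, control_rate):
    """Plain difference between the two conversion rates."""
    return test_rate - control_rate


print(f"{relative_lift(0.05, 0.04):.0%}")  # 25%
```

Reporting both numbers helps avoid confusion, since a 25% relative lift here corresponds to a single percentage point in absolute terms.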

Conversion Lift studies require sufficient scale. Running these tests with tiny budgets produces inconclusive results. Community discussions on testing strategies note that advertisers should budget at least 50 times their average cost per conversion to achieve statistical significance.

Brand Lift tests measure changes in awareness, consideration, and perception metrics rather than direct conversions. These studies poll users who saw ads versus those who didn't.

Facebook delivers brief surveys asking about brand recall, message association, or purchase intent. Comparing responses between exposed and control groups reveals whether campaigns shifted brand metrics.

This test type serves campaigns focused on upper-funnel objectives. Advertisers running awareness campaigns can quantify whether creative actually increased brand recognition.

Holdout tests measure overall advertising effectiveness by completely excluding a segment from all campaigns. This control group receives no ads from the advertiser across an extended period.

The methodology reveals baseline conversion rates without advertising influence. Comparing holdout group behavior against exposed audiences quantifies total advertising ROI.

These tests run longer than other study types—typically several weeks to months. The extended timeframe captures delayed conversions and full customer journey impacts.

Campaign comparison tests evaluate performance differences between advertising strategies. This test type compares distinct approaches—different creative themes, audience targeting methods, or bidding strategies.

Setting up these experiments requires careful planning. The test must isolate one variable while keeping other factors constant. Testing multiple changes simultaneously makes identifying the success driver impossible.

Start by identifying the specific question the test should answer. Examples include: Does video creative outperform static images? Do broad audiences convert better than narrow targeting? Does manual bidding beat automated strategies?

Frame hypotheses clearly. A good hypothesis states: "Video ads will generate 20% more conversions than image ads among audiences aged 25-34." This specificity guides test design and success criteria.

Determine required test duration and budget. Most tests need at least one week to account for day-of-week performance variations. Budget should allow each test cell to generate sufficient conversions for statistical confidence.

Community testing discussions emphasize budget adequacy. If average cost per purchase sits at $50, and the test includes one ad set with 3-5 ads, daily budget should reach at least $150 to provide the algorithm adequate learning data.
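These budget rules of thumb are easy to turn into a quick planning check. The sketch below assumes the 50x multiplier mentioned earlier in this article and the $50 and $150 figures from the example above. The function names are hypothetical.

```python
import math


def minimum_test_budget(avg_cost_per_conversion, multiplier=50):
    """Total test budget under the '50 times cost per conversion' rule of thumb."""
    return avg_cost_per_conversion * multiplier


def days_needed(total_budget, daily_budget):
    """Whole days required to spend the total budget at a given daily budget."""
    return math.ceil(total_budget / daily_budget)


total = minimum_test_budget(50)
print(total, days_needed(total, 150))  # 2500 17
```

With these inputs, the test would need a little over two weeks at $150 per day, which also satisfies the one-week minimum.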

Test cells represent the different conditions being compared. In a creative test, Cell A might contain video ads while Cell B contains carousel ads. In an audience test, Cell A targets broad audiences while Cell B uses detailed targeting.

Structure campaigns to ensure clean separation. Each test cell should run as its own campaign or ad set, depending on what's being tested. Mixing test variables within single campaigns introduces confounding factors.

Assign equal budgets to test cells when possible. Unequal budget allocation can skew results, especially when testing audience sizes or creative approaches that perform differently at various spending levels.

That said, scalability matters. Community discussions note that performance at $10 daily budget doesn't necessarily predict performance at $100 or $1,000 daily. Winners should be re-tested at target spending levels before scaling.

Proper audience splitting ensures test validity. The goal is creating comparable groups that differ only in the variable being tested.

For creative tests within the same audience, use Facebook's built-in split testing feature. This tool automatically divides the target audience into non-overlapping groups that see different creative variations.

For strategy comparisons, create separate campaigns targeting identical audiences. Monitor frequency carefully—if audiences overlap accidentally, users might see ads from multiple test cells, contaminating results.

Geographic splitting works for some scenarios. Test Cell A runs in certain states or countries while Cell B runs in others. This approach requires markets with similar characteristics and sufficient volume in each region.
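When the advertiser controls the list being split (for example, a customer list later uploaded as separate audiences), non-overlapping cells can be produced with a deterministic hash. This is only a sketch of the general idea, not how Meta's built-in split testing assigns users, and the salt and function name are assumptions.

```python
import hashlib


def assign_cell(user_id, cells, salt="creative-test-01"):
    """Deterministically map a user ID to exactly one test cell."""
    # Hashing the salted ID gives a stable, roughly uniform assignment.
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return cells[int(digest, 16) % len(cells)]


cells = ["A", "B"]
assignments = {uid: assign_cell(uid, cells) for uid in range(10_000)}
counts = {cell: list(assignments.values()).count(cell) for cell in cells}
print(counts)  # roughly 5,000 per cell; exact counts depend on the hash
```

Because the assignment depends only on the ID and the salt, the same user always lands in the same cell, and changing the salt reshuffles the groups for the next test.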

Effective testing follows systematic approaches rather than random experimentation. Several frameworks have proven successful across different business types and campaign objectives.

Single-variable testing changes one element at a time while holding everything else constant. It sacrifices speed for clarity: learning happens incrementally, but each result can be attributed to a specific change.

Start with the highest-impact variable. Creative typically influences performance more than small targeting adjustments. Testing ad format or core messaging provides clearer wins than testing minor audience refinements.

Run the test until reaching statistical significance. Stopping tests early because one variation appears to be winning leads to false conclusions. Performance fluctuates daily, and early trends often reverse.

Document results systematically. Build a testing log that records hypotheses, test parameters, results, and insights. This knowledge base prevents redundant tests and reveals patterns across experiments.
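A testing log does not need special tooling. One possible shape, sketched here with hypothetical field names and a made-up example entry, is a small record type exported to CSV.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExperimentRecord:
    hypothesis: str
    variable_tested: str
    start_date: str
    end_date: str
    result: str
    insight: str


def to_csv(records):
    """Serialize experiment records to CSV text with a header row."""
    buffer = io.StringIO()
    names = [f.name for f in fields(ExperimentRecord)]
    writer = csv.DictWriter(buffer, fieldnames=names)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


log = [ExperimentRecord(
    hypothesis="Video ads generate 20% more conversions than image ads",
    variable_tested="creative format",
    start_date="2026-01-05",
    end_date="2026-01-19",
    result="inconclusive",
    insight="Extend the test to reach more conversions per cell",
)]
print(to_csv(log))
```

The resulting text can be pasted into a spreadsheet, which keeps the log accessible to people who do not write code.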

Some advertisers use rapid testing to evaluate multiple variables quickly. This method runs several variations simultaneously, identifying broad patterns before drilling into specifics.

The approach works for creative testing with sufficient budget. Launch 5-7 ad variations with different images, videos, or copy approaches. Let them run for 3-5 days, cut the bottom performers, then test variations of the winners.

Budget distribution matters here. Allocate equal budgets initially to give each variation fair exposure. The Meta algorithm needs adequate data to optimize delivery effectively.

This protocol identifies promising directions faster than sequential single-variable tests. However, it provides less insight into why specific variations win. Trade-offs exist between speed and understanding.

Testing different audience segments reveals which targeting strategies drive efficient conversions. But audience tests introduce complications—group characteristics vary beyond just the targeting parameters.

Effective audience testing compares segments of similar size and composition. Testing a 50,000-person lookalike audience against a 500,000-person interest-based audience confounds size effects with targeting quality.

Layer tests progressively. First, compare broad versus targeted approaches. If targeted wins, test specific targeting types against each other. This funnel narrows options systematically.

Monitor metrics beyond just conversion rate. Cost per conversion matters, but so does customer quality. Some audiences convert efficiently but generate low lifetime value. Connect ad performance to downstream revenue when possible.

Even experienced advertisers make testing mistakes that invalidate results or lead to wrong conclusions. Recognizing these traps prevents wasted budget and misguided strategy changes.

Running tests with budgets too small to generate meaningful data produces inconclusive results. The algorithm can't optimize properly, and random variation overwhelms actual performance differences.

As noted in community testing discussions, campaigns that go several days without conversions won't allow Meta's algorithm to optimize properly. If average cost per purchase is $50, setting a $10 daily budget nearly guarantees failure.

Calculate required sample sizes before launching tests. Online calculators determine how many conversions each test cell needs to detect meaningful differences with statistical confidence.
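For readers who prefer to see what such calculators do, here is a sketch using the standard normal-approximation formula for comparing two proportions. The 4% and 5% rates, the 5% significance level, and the 80% power are assumed inputs, not recommendations from Meta.

```python
import math
from statistics import NormalDist


def sample_size_per_cell(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Users needed in each cell to detect the difference between two rates."""
    effect = expected_rate - baseline_rate
    if effect == 0:
        raise ValueError("rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_power = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


n = sample_size_per_cell(0.04, 0.05)
print(n, "users per cell, about", round(n * 0.04), "baseline conversions")
```

Smaller expected differences push the required sample up quickly, which is why tests of minor tweaks so often end inconclusively.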

Early performance trends mislead. An ad set leading after two days might lag after seven. Day-of-week effects, audience saturation, and delivery fluctuations all impact short-term results.

Establish the stopping rule upfront and base it primarily on conversion volume rather than calendar days. A test should run until each cell generates at least 50-100 conversions, with a predetermined end date as a backstop in case that volume never arrives.

Resist the urge to declare winners prematurely. Patience in testing pays dividends through more reliable insights.

Changing audience targeting AND creative format AND bidding strategy in a single test makes identifying success drivers impossible. Which element caused the performance difference? The data won't reveal it.

Isolate variables strictly. If testing creative, keep audience and bidding identical across test cells. If testing audiences, use the same creative in each cell.

The exception is rapid testing protocols that deliberately test multiple creative variations to identify general directions. But even then, follow-up tests should isolate specific elements.

Seasonality, news events, competitor actions, and market conditions affect ad performance. Running a test during an unusual period can produce misleading results.

Avoid testing during major holidays, sales events, or crisis periods unless specifically measuring performance during those conditions. Week-to-week comparisons work better than comparing Black Friday performance to mid-January.

Account for external changes when analyzing results. If one test cell ran when a competitor launched a major promotion, note that context in conclusions.

Raw performance numbers don't tell the complete story. Effective analysis interprets results within context, identifies true patterns, and translates findings into actionable strategies.

A test cell showing 10% better performance might reflect genuine superiority or random chance. Statistical significance determines which explanation is more likely.

Facebook's Test and Learn tool calculates confidence intervals automatically. For manual tests in Ads Manager, use external statistical significance calculators.

Generally speaking, aim for 95% confidence before declaring winners. At this threshold, a difference as large as the one observed would appear only about 5% of the time if the two variations actually performed the same.

Lower confidence thresholds work for directional decisions with easy reversibility. Higher thresholds suit major strategic shifts that will guide substantial budgets.
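For manual tests, the check an external calculator performs can be approximated with a two-proportion z-test. The sketch below uses only the Python standard library, and the conversion counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("cannot test when the pooled rate is 0 or 1")
    z = (conv_a / n_a - conv_b / n_b) / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical cells: 500 of 10,000 users convert in A, 400 of 10,000 in B.
p_value = two_proportion_p_value(500, 10_000, 400, 10_000)
print(p_value < 0.05)  # True
```

A p-value below 0.05 corresponds to the 95% confidence threshold. A stricter cutoff such as 0.01 may suit decisions that are expensive to reverse.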

Conversion rate and cost per conversion provide important signals, but secondary metrics add depth. Click-through rate reveals creative engagement. Landing page conversion rate shows post-click experience quality.

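Revenue per conversion matters more than conversion volume for many businesses. An audience generating twice the conversions at half the average order value produces the same revenue, and if each conversion costs the same to acquire, it does so at twice the ad spend. It underperforms financially despite seemingly better conversion metrics.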
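A quick way to compare audiences on money rather than conversion counts is to compute revenue and return on ad spend side by side. All figures below are hypothetical, including the assumption that both audiences cost the same per conversion.

```python
def revenue_and_roas(conversions, avg_order_value, cost_per_conversion):
    """Return (revenue, return on ad spend) for one audience."""
    revenue = conversions * avg_order_value
    spend = conversions * cost_per_conversion
    return revenue, revenue / spend


audience_a = revenue_and_roas(conversions=200, avg_order_value=40,
                              cost_per_conversion=20)
audience_b = revenue_and_roas(conversions=100, avg_order_value=80,
                              cost_per_conversion=20)
print(audience_a)  # (8000, 2.0)
print(audience_b)  # (8000, 4.0)
```

Audience A looks stronger on conversion volume, yet it returns half as much per dollar spent under these assumptions.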

Time-to-conversion patterns reveal whether test cells attract different customer types. Fast converters versus researchers indicate audience mindset differences that impact nurturing strategies.

A winning test cell during one period might underperform in different conditions. Seasonal factors, competitive landscape changes, and audience maturation all influence ongoing performance.

A 2025 Journal of Marketing study analyzed by the American Marketing Association found that combining traditional media with digital channels can significantly enhance brand performance. This finding suggests that test results for digital campaigns might shift when advertisers also run television or outdoor advertising, as research indicates media channels create synergies that amplify results.

Walmart demonstrated this principle by integrating in-store promotions with digital advertising through Walmart Connect, achieving significant year-over-year revenue growth in its advertising division. The synergy between channels means testing one element in isolation doesn't capture full-stack performance.

Document external conditions during test periods. This context helps determine when to retest strategies and how to adjust interpretations.

Identifying a winning variation represents only half the challenge. Scaling that success without degrading performance requires careful execution.

Dramatic budget increases disrupt Meta's algorithm optimization. A campaign performing well at $50 daily doesn't automatically maintain efficiency at $500 daily.

Scale budgets gradually—increasing 20-30% every few days allows the algorithm to adapt. Monitor performance during scaling, and pause increases if efficiency degrades significantly.
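To get a feel for how long gradual scaling takes, the schedule can be generated ahead of time. This sketch assumes a 25% step, which sits inside the 20-30% range above, and uses the $50 and $500 figures from the earlier example.

```python
def scaling_schedule(start_budget, target_budget, step=0.25):
    """Daily budgets from start to target, raising by at most `step` each time."""
    if start_budget <= 0 or step <= 0:
        raise ValueError("start_budget and step must be positive")
    budgets = [float(start_budget)]
    while budgets[-1] < target_budget:
        # Cap the final increase so the schedule never overshoots the target.
        budgets.append(min(budgets[-1] * (1 + step), float(target_budget)))
    return [round(b, 2) for b in budgets]


schedule = scaling_schedule(50, 500)
print(len(schedule) - 1, "increases:", schedule)
```

If each increase is held for a few days, the length of the schedule gives a rough estimate of how many weeks the ramp will take.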

Community discussions emphasize this limitation: success at one budget level doesn't guarantee success at higher spending. Re-testing at target budget levels confirms scalability before committing substantial resources.

Winning ad creative eventually saturates its initial audience. Frequency climbs, response rates decline, and efficiency erodes.

Expand to new audiences incrementally. If a test wins with a 1% lookalike audience, try 2-3% lookalikes or related interest groups. Test these expansions formally rather than assuming they'll perform identically.

Geographic expansion offers another scaling path. A strategy winning in one region might succeed in similar markets. But regional differences in culture, competition, and economic conditions require validation testing.

Even winning creative fatigues. Tracking frequency metrics reveals when refresh becomes necessary—typically when frequency exceeds 3-4 impressions per user over the attribution window.
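Frequency is simply impressions divided by reach, so a fatigue check is easy to automate. The threshold of 3 comes from the range above. The function names and sample numbers are illustrative.

```python
def frequency(impressions, reach):
    """Average number of impressions per person reached."""
    if reach <= 0:
        raise ValueError("reach must be positive")
    return impressions / reach


def needs_refresh(impressions, reach, threshold=3.0):
    """Flag creative whose frequency has passed the chosen threshold."""
    return frequency(impressions, reach) > threshold


print(needs_refresh(impressions=45_000, reach=10_000))  # True
print(needs_refresh(impressions=20_000, reach=10_000))  # False
```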

Develop creative variations that maintain core winning elements while introducing fresh execution. If video testimonials won the original test, create new testimonial videos rather than switching formats entirely.

Test refreshed creative against established winners before wholesale replacement. Sometimes fresh creative underperforms despite novelty.

Test and Learn insights inform decisions beyond Facebook advertising. Patterns discovered through experimentation reveal customer preferences that guide broader marketing approaches.

Messaging that resonates in Facebook ads often works across other platforms. A value proposition winning in ad creative might improve email campaigns, landing pages, or even product positioning.

Test creative themes on Facebook first due to relatively fast feedback and granular data. Once validated, adapt winners to channels with slower testing cycles like traditional media or product packaging.

But recognize platform differences. Video that performs well on Facebook might need format adjustments for TikTok or YouTube. The core message can transfer, but execution should fit platform norms.

Audience testing reveals which customer segments respond to different value propositions. These insights extend beyond ad targeting to inform product development, pricing strategies, and market positioning.

If sustainability-focused creative wins with specific demographics while price-focused creative wins with others, that pattern suggests segmented positioning strategies. Product lines, messaging hierarchies, and even distribution channels might align with these preference patterns.

Many experts suggest treating Test and Learn as a research tool, not just an optimization platform. The goal isn't merely improving Facebook metrics—it's understanding customers more deeply.

Test and Learn results should challenge and refine attribution models. If holdout tests show that attributed conversions exceed incremental conversions by 5x, standard reporting overestimates advertising effectiveness.

This discrepancy doesn't mean advertising fails—it means measurement methods require adjustment. Calibrating internal models with experimentally validated incrementality produces more accurate ROI calculations.
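One simple way to apply this calibration is to derive a factor from a lift study and use it to adjust reported figures. The sketch below reuses the 1,000 attributed versus 200 incremental conversions from the earlier scenario. The $10,000 spend and the function names are assumptions.

```python
def incrementality_factor(incremental_conversions, attributed_conversions):
    """Share of attributed conversions that the experiment found incremental."""
    return incremental_conversions / attributed_conversions


def incremental_cost_per_conversion(spend, attributed_conversions, factor):
    """Cost per conversion after discounting non-incremental conversions."""
    return spend / (attributed_conversions * factor)


factor = incrementality_factor(200, 1_000)
reported = 10_000 / 1_000
calibrated = incremental_cost_per_conversion(10_000, 1_000, factor)
print(f"{reported:.2f} {calibrated:.2f}")  # 10.00 50.00
```

A factor measured in one study will drift as campaigns and audiences change, so it is best treated as an estimate to be refreshed by later tests.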

According to American Marketing Association research published in 2025, the persistent challenges around ROI measurement in influencer marketing highlight similar issues. Short-term metrics often fail to capture true partnership value, just as standard ad reporting can misrepresent causal impact.

Beyond basic A/B tests, sophisticated advertisers employ advanced methodologies that uncover deeper insights and optimize multiple variables simultaneously.

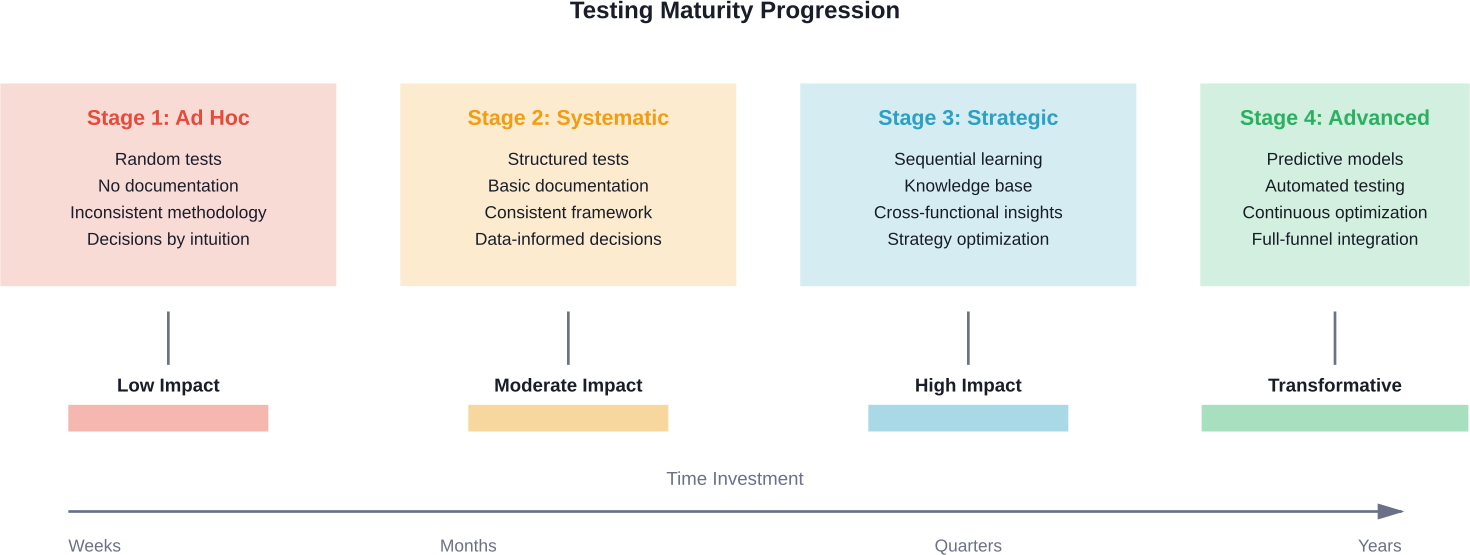

Sequential testing builds a knowledge base progressively. Each test builds on insights from previous experiments, creating a cumulative understanding of performance drivers.

Start broad, then narrow. First, test major strategic directions—product-focused versus benefit-focused messaging. Winners advance to round two, where variations test specific executions within the winning theme.

This approach develops expertise systematically. After running 10-15 sequential tests, patterns emerge about what consistently works for specific products, audiences, or objectives.

Document each test thoroughly. The learning compounds over time, but only if insights are captured and accessible when designing future experiments.

Multivariate tests evaluate multiple variables simultaneously by testing all combinations. A test might examine three headlines with two images, creating six total variations.

This methodology identifies interaction effects—situations where element combinations produce results different from what individual element performance would predict.

But multivariate tests require substantial traffic. Each variation needs sufficient exposure to reach statistical significance. Testing six variations demands roughly three times the budget of a two-cell A/B test, since every variation needs as much data as a single A/B cell.

Reserve multivariate approaches for high-volume campaigns where budget allows proper execution. Smaller advertisers benefit more from sequential single-variable tests.
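The full set of combinations in a multivariate test can be enumerated mechanically, which also makes the budget implications visible. The headline and image names below are placeholders.

```python
from itertools import product

headlines = ["Headline 1", "Headline 2", "Headline 3"]
images = ["image_a.png", "image_b.png"]

# Every headline paired with every image: 3 x 2 combinations.
variations = [
    {"headline": headline, "image": image}
    for headline, image in product(headlines, images)
]
print(len(variations))  # 6
```

Adding a third element with two options would double the count to twelve, which shows how quickly the traffic requirement grows.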

Some tests evaluate performance across different time periods rather than comparing concurrent variations. This approach suits scenarios where audience overlap poses problems.

Run strategy A for two weeks, then strategy B for two weeks, using identical audience targeting. Differences in performance reveal which approach works better for that audience.

Time-based tests introduce greater risk of confounding factors. Market conditions might shift between periods. Control for this by running multiple time periods—A-B-A-B rather than just A-B.

This methodology works particularly well for testing frequency caps, dayparting strategies, or other temporal variables where concurrent testing doesn't fit.

Testing methodologies continue evolving as platforms develop more sophisticated measurement tools and automation capabilities. Several trends shape where testing is heading.

Meta increasingly automates elements of campaign optimization. Dynamic creative automatically tests combinations of headlines, images, and descriptions. Advantage+ campaigns let algorithms handle targeting and placement decisions.

This automation shifts testing focus from tactical elements to strategic ones. Rather than testing specific audience segments, advertisers test campaign objectives, creative themes, and offer strategies while letting automation optimize execution.

The testing skill becomes knowing what to test rather than how to structure tests mechanically. Strategic questions require human judgment, while tactical optimization increasingly happens algorithmically.

Customers interact with brands across multiple platforms—Facebook, Instagram, Google, TikTok, email, and offline channels. Isolating Facebook's specific contribution becomes more complex but also more valuable.

Testing frameworks that measure incremental impact across the entire marketing mix provide clearer ROI pictures. This requires data integration and more sophisticated analytics, but reveals which channels truly drive growth versus which merely capture existing demand.

Research from the American Marketing Association found that combining traditional and digital media can boost brand metrics by up to 50% when optimized properly. This suggests that future testing should evaluate channel combinations rather than individual platforms in isolation.

Privacy regulations and platform changes limit granular user tracking. iOS privacy changes, cookie deprecation, and GDPR restrictions reduce available data.

Testing methodologies adapt by focusing on aggregated patterns rather than individual user tracking. Conversion modeling fills gaps where direct measurement isn't possible. Holdout tests and lift studies become more important since they work with aggregated data.

This shift requires accepting slightly less precision in exchange for privacy compliance. The fundamentals remain—controlled experiments reveal causality better than observational data, even when measurement becomes less granular.

The path to effective testing doesn't require immediate access to advanced tools or massive budgets. Starting with basic principles builds capability progressively.

Begin with creative testing using Ads Manager's A/B test feature. Test two distinct creative approaches—video versus static image, or two different value propositions.

Set adequate budgets based on conversion costs. Allocate at least 50 times the average cost per conversion to each test cell. Run tests for a full week minimum.

Document everything. Create a simple spreadsheet tracking test hypotheses, configurations, results, and insights. This record becomes invaluable as testing sophistication increases.

Don't overcomplicate initial tests. Simple questions with clear answers build confidence and demonstrate value to stakeholders who might question testing investment.

After running 5-10 basic tests, patterns emerge. Certain creative themes consistently outperform. Specific audience segments convert more efficiently. These patterns inform hypothesis development for subsequent tests.

Expand testing scope gradually. Add audience tests after establishing creative winners. Try format variations—different video lengths, carousel versus single image, or interactive elements.

Invest in analysis skills. Understanding statistical significance, interpreting confidence intervals, and recognizing confounding variables improves decision quality substantially.

Connect testing to business outcomes. Track not just ad metrics but downstream revenue, customer lifetime value, and profitability. This connection demonstrates testing's strategic value beyond tactical optimization.

Meta's official Business Help Center provides detailed documentation on Test and Learn tools, A/B testing features, and measurement methodologies. These resources explain technical setup and interpretation.

Community discussions on platforms like Reddit offer practical insights from advertisers testing across different industries and budgets. Real-world experiences complement official documentation.

Statistical education strengthens testing skills. Understanding concepts like sample size requirements, confidence intervals, and hypothesis testing enables more sophisticated experimental design.

Marketing experimentation books and courses provide frameworks applicable beyond just Facebook. Testing principles transfer across channels and marketing functions.

Facebook's Test and Learn approach transforms advertising from creative guesswork into systematic optimization. The methodology reveals which strategies genuinely drive results rather than merely correlating with outcomes.

Effective testing requires discipline. Isolate variables carefully. Run tests long enough to achieve statistical confidence. Budget adequately for meaningful results. Document insights systematically.

But the payoff justifies the investment. Advertisers who test systematically compound their learning over time: each experiment builds on previous insights, and the accumulated knowledge becomes a competitive advantage.

The testing mindset extends beyond ad optimization. Patterns discovered through Facebook experiments inform product development, pricing strategies, market positioning, and cross-channel marketing approaches.

Start testing today, even with limited resources. Begin with simple creative comparisons. Document results. Build capabilities progressively. The advertisers who commit to systematic testing will increasingly outpace those relying on intuition and generic best practices.

Testing isn't a one-time project—it's an ongoing practice that continuously refines strategy and execution. Markets shift, audiences evolve, and competitors adapt. Only systematic experimentation keeps pace with these changes while identifying new opportunities.

Ready to transform Facebook ad performance through data-driven testing? Start by auditing current campaigns to identify the highest-impact test opportunities. Then design one well-structured experiment and execute it properly. That first test begins the journey toward measurement-driven marketing excellence.