Best Shopify Marketing Agencies in Fort Worth: Scaling Your Sales

Discover leading Shopify marketing agencies in Fort Worth to boost sales and scale with paid ads, SEO, and expert strategies for real e-commerce growth.

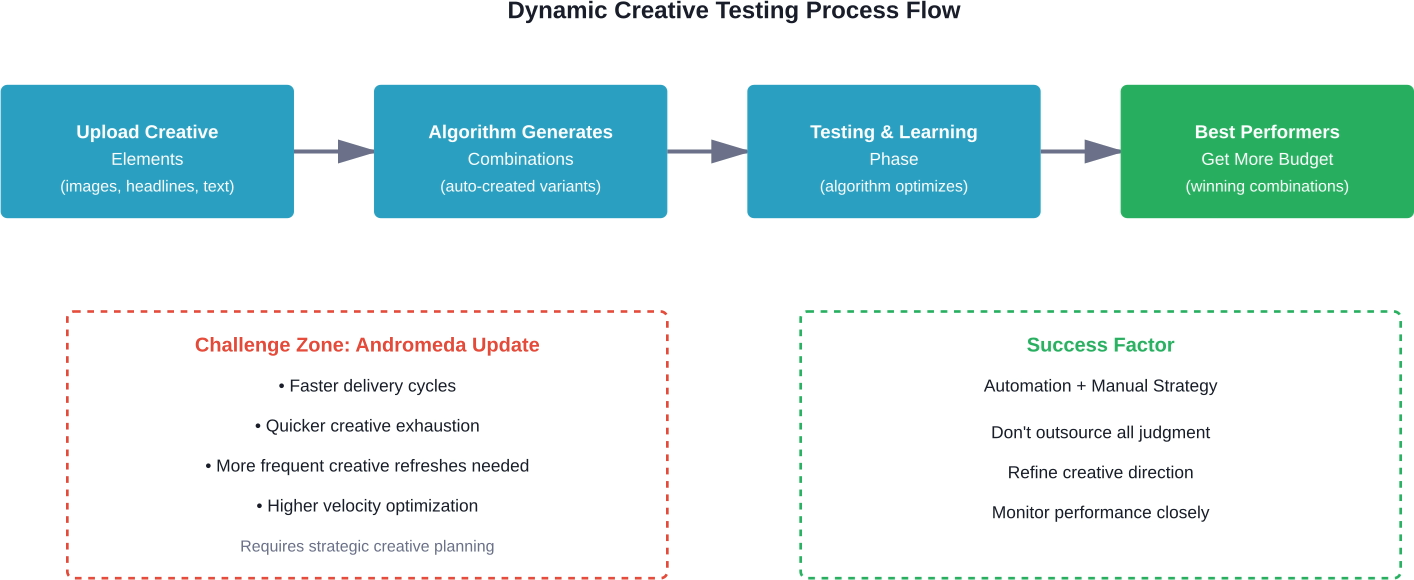

Dynamic creative testing on Facebook Ads automates the process of testing multiple ad variations by mixing different creative elements like images, headlines, and calls-to-action. Meta's algorithm automatically identifies winning combinations, though recent updates like Andromeda have accelerated creative exhaustion. For best results, combine dynamic creative with strategic manual testing rather than relying solely on automation.

Facebook's dynamic creative feature promises to solve one of advertising's most persistent headaches: figuring out which creative elements actually work. But here's the thing: Meta wants advertisers to trust the machine completely, and that doesn't always work out as planned.

Dynamic creative testing lets advertisers upload multiple images, videos, headlines, descriptions, and calls-to-action. Meta's algorithm then automatically generates different combinations and serves them to users, learning which variations perform best. Sounds perfect, right?

The reality is more nuanced. Recent algorithm updates have changed how dynamic creative behaves, and advertisers who blindly trust automation are finding themselves with exhausted creatives faster than ever before.

Dynamic creative optimization works by testing creative elements simultaneously rather than sequentially. Instead of running traditional A/B tests where advertisers manually create different ad variations, the system generates combinations automatically.

Upload five images, three headlines, and two descriptions, and Meta creates up to 30 different ad combinations. The algorithm serves these variations to different audience segments, tracking which combinations generate the best results based on campaign objectives.
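The arithmetic behind that number is a simple Cartesian product. Here is a minimal sketch in Python, using made-up asset labels, that enumerates every pairing. It only illustrates the counting and says nothing about how Meta builds or serves the combinations internally.

```python
from itertools import product

# Hypothetical asset labels; any naming scheme works.
images = [f"image_{i}" for i in range(1, 6)]              # 5 images
headlines = [f"headline_{i}" for i in range(1, 4)]        # 3 headlines
descriptions = [f"description_{i}" for i in range(1, 3)]  # 2 descriptions

# Every pairing of one image, one headline, and one description.
combinations = list(product(images, headlines, descriptions))

print(len(combinations))  # 5 * 3 * 2 = 30
print(combinations[0])
```

Adding a single extra headline would raise the total to 40, which is why the count grows quickly as elements are added.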

According to academic research on e-commerce advertising, dynamic creative optimization can be structured as a two-stage cascaded system that balances effectiveness with efficiency. The first stage simulates complex interactions between creative elements to rank different combinations. The second stage uses real-time delivery models to select optimal ads for immediate deployment.
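To make the two-stage idea concrete, here is a toy sketch. It is not the research system and not Meta's implementation: the element scores, the interaction bonus, the function names, and the smoothing prior are all hypothetical values chosen for illustration. Stage one ranks combinations offline, and stage two picks from the shortlist using live click data.

```python
from itertools import product

# Hypothetical per-element scores, e.g. derived from historical data.
image_score = {"lifestyle": 0.6, "product": 0.5}
headline_score = {"benefit": 0.7, "question": 0.4}
# Hypothetical bonus for elements that work well together.
interaction = {("product", "benefit"): 0.3}

def stage_one_rank(top_k):
    """Offline stage: score every combination and keep the top_k."""
    scored = []
    for img, head in product(image_score, headline_score):
        score = image_score[img] + headline_score[head]
        score += interaction.get((img, head), 0.0)
        scored.append(((img, head), score))
    scored.sort(key=lambda item: item[1], reverse=True)
    return [combo for combo, _ in scored[:top_k]]

def stage_two_select(shortlist, stats, prior_clicks=1.0, prior_impressions=100.0):
    """Online stage: pick the shortlisted combo with the best smoothed CTR."""
    def smoothed_ctr(combo):
        clicks, impressions = stats.get(combo, (0, 0))
        # The prior keeps combos with little data from looking extreme.
        return (clicks + prior_clicks) / (impressions + prior_impressions)
    return max(shortlist, key=smoothed_ctr)

shortlist = stage_one_rank(top_k=2)
# Hypothetical live stats: combo -> (clicks, impressions).
live_stats = {
    ("product", "benefit"): (12, 1000),
    ("lifestyle", "benefit"): (30, 1000),
}
chosen = stage_two_select(shortlist, live_stats)
print(shortlist, chosen)
```

The split is the point of the design: the expensive ranking runs ahead of time over all combinations, while the cheap selection step runs at delivery time over a short list.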

That said, this automation comes with tradeoffs. Advertisers sacrifice granular control over which combinations get tested and how budget gets allocated across variations.

The Andromeda update, released in January 2026, represents the fastest and most advanced iteration of Meta's ad-retrieval system to date. According to AdExchanger (published January 28, 2026), this update dramatically changed the rhythm of Meta Ads.

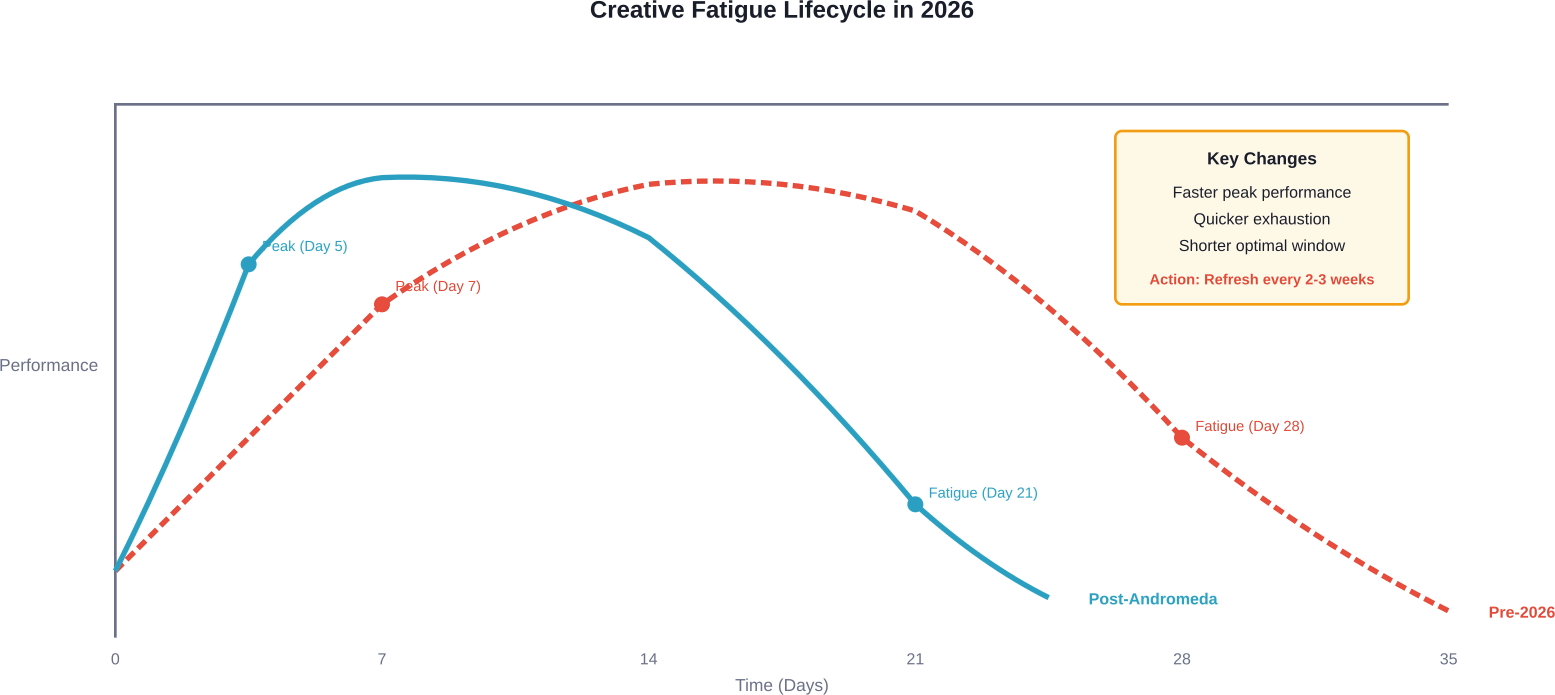

Delivery cycles now move faster. Creative gets picked up and exhausted with remarkable speed. Optimization feels more dynamic than ever before, but this increased velocity creates new challenges for advertisers using dynamic creative testing.

Creative fatigue happens faster under Andromeda. What used to perform well for weeks might now exhaust in days. The brands seeing the greatest lift from this update haven't outsourced judgment to the algorithm. They've refined their creative strategy instead.

Real talk: automation doesn't always deliver better results than manual campaign management. Research by Haus examining 640 incrementality tests over 18 months found that Meta's Advantage+ automated campaigns don't consistently outperform manual management. The average brand in that study spent just over $1 million monthly on Meta advertising, equating to roughly $14 million in annual spend.

A lot of testing decisions come down to creative selection before any spend is turned on. Extuitive helps teams compare ad creative before launch. The platform forecasts likely ad performance using AI models trained against real campaign outcomes. This gives advertisers a way to check multiple creative options before putting budget behind them.

Dynamic creative works best in specific scenarios. Not every campaign benefits from this approach.

Dynamic creative makes sense for testing new creative directions once a product has proven market fit. Community discussions suggest that advertisers often turn to this feature after establishing a winning product, using it to refine messaging and visual approaches.

Limited testing budgets benefit from dynamic creative efficiency. Instead of creating dozens of manual ad variations, advertisers can test multiple elements simultaneously with fewer resources.

Ecommerce brands with catalog-based advertising see strong results. Dynamic creative allows rapid testing of different product images, pricing overlays, and promotional messaging. According to reporting on creative testing platforms, in some cases a yellow shirt with "BOGO" copy might perform twice as well as a blue hat with a "20% off" overlay for a spring fashion sale.

Scaling campaigns that need fresh creative regularly can leverage dynamic combinations to avoid fatigue without constant manual intervention.

New product launches often require more control than dynamic creative provides. Testing radically different messaging angles or value propositions benefits from isolated, controlled A/B tests.

Brand campaigns where message consistency matters shouldn't risk random combinations. Dynamic creative might generate awkward pairings that damage brand perception.

Complex funnels with distinct audience segments often need customized creative strategies rather than algorithm-driven mixing.

Getting results from dynamic creative requires strategic setup, not just throwing elements into the system and hoping for the best.

Limit the number of variations per element type. Testing too many combinations dilutes learning and extends the optimization period. Three to five options per element type (images, headlines, descriptions) provide sufficient variation without overwhelming the algorithm.

Make creative elements genuinely different. Small tweaks won't generate meaningful insights. Test contrasting visual styles, distinct value propositions, and varied calls-to-action.

Ensure every element can logically pair with others. Random combinations that don't make sense waste impressions and budget during the learning phase.

Visual assets drive initial attention. Test different product angles, lifestyle versus product-focused imagery, and contrasting color schemes.

Headlines communicate primary value. Test benefit-focused versus feature-focused messaging, question-based versus statement-based approaches, and different emotional appeals.

Descriptions provide supporting details. Test length variations, bullet points versus paragraph format, and technical versus accessible language.

Calls-to-action influence conversion intent. Test action-oriented phrases, value-focused alternatives, and urgency-based messaging.

Set appropriate learning budgets. Dynamic creative needs sufficient spend to generate statistically significant results across combinations. Underfunded campaigns won't exit the learning phase effectively.
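A rough back-of-the-envelope check shows how budget and variation count interact. The sketch below assumes a hypothetical daily budget and CPM, and it assumes impressions are split evenly across combinations, which the algorithm does not actually do. It is only a floor-level sanity check, not a forecast.

```python
def impressions_per_combination(daily_budget, cpm, days,
                                n_images, n_headlines, n_descriptions):
    """Average impressions per combination under an even split (an assumption)."""
    total_impressions = daily_budget / cpm * 1000 * days
    n_combinations = n_images * n_headlines * n_descriptions
    return total_impressions / n_combinations

# Hypothetical inputs: $50/day budget, $10 CPM, 7 days of delivery.
small = impressions_per_combination(50, 10, 7, 3, 3, 3)  # 27 combinations
large = impressions_per_combination(50, 10, 7, 5, 5, 5)  # 125 combinations

print(round(small), round(large))
```

With the same spend, moving from three to five options per element cuts the average impressions per combination by more than a factor of four, which is the dilution effect in numbers.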

Monitor frequency carefully. Post-Andromeda, creative exhaustion happens faster. Track frequency metrics and prepare replacement creative before performance degrades.

Use clear naming conventions for creative elements. Organized asset libraries make performance analysis easier and help identify which specific elements drive results.

Meta provides breakdowns showing performance by creative element. These reports reveal which specific images, headlines, and descriptions generate the best results.

But here's where things get tricky. The algorithm optimizes for campaign objectives, not necessarily business objectives. An ad combination might maximize clicks while delivering poor conversion rates.

Cross-reference Meta's reporting with external analytics platforms. Track downstream metrics like conversion rate, average order value, and customer lifetime value, not just platform-reported metrics.

Click-through rate shows initial engagement but tells an incomplete story. Track CTR by element combination to identify attention-grabbing assets.

Conversion rate reveals actual business impact. High CTR with low conversion suggests messaging misalignment between ad creative and landing experience.

Cost per result indicates efficiency. Monitor whether certain element combinations drive lower acquisition costs.

Frequency determines creative lifespan. Rising frequency with declining performance signals creative exhaustion.
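These four metrics are simple ratios, and computing them side by side makes the tradeoffs visible. The sketch below uses invented numbers and a hypothetical `CombinationStats` record. It assumes conversion rate is measured as conversions per click and frequency as impressions divided by reach.

```python
from dataclasses import dataclass

def safe_div(numerator, denominator):
    """Divide, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0

@dataclass
class CombinationStats:
    name: str
    impressions: int
    reach: int
    clicks: int
    conversions: int
    spend: float

    def metrics(self):
        return {
            "ctr": safe_div(self.clicks, self.impressions),
            "conversion_rate": safe_div(self.conversions, self.clicks),
            # No conversions means the cost per result is unbounded.
            "cost_per_result": (self.spend / self.conversions
                                if self.conversions else float("inf")),
            "frequency": safe_div(self.impressions, self.reach),
        }

# Invented example rows, not real campaign data.
rows = [
    CombinationStats("image_1 + headline_2", 10_000, 4_000, 300, 6, 120.0),
    CombinationStats("image_3 + headline_1", 10_000, 5_000, 150, 9, 120.0),
]

for row in rows:
    print(row.name, row.metrics())

highest_ctr = max(rows, key=lambda r: r.metrics()["ctr"])
lowest_cost = min(rows, key=lambda r: r.metrics()["cost_per_result"])
print(highest_ctr.name, "|", lowest_cost.name)
```

In this made-up data the combination with the highest CTR is not the one with the lowest cost per result, which is exactly the mismatch worth looking for.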

Consumer preferences are shifting toward interactive advertising experiences. Amazon Ads research (published January 6, 2026) surveying more than 7,800 people found that the majority of viewers say interactive ads are more engaging and attention-grabbing than standard video ads.

Specifically, 79% find them more engaging, 78% say they're more attention-grabbing, and 72% perceive them as more relevant. Viewers appreciate seeing pricing, discovering deals, and interacting with products directly within ads.

This trend suggests dynamic creative testing should eventually incorporate interactive elements, not just static combinations of traditional assets.

The fundamental tension with dynamic creative testing is between efficiency and control. Meta's automation handles tactical execution better than manual management can. But strategic direction still requires human judgment.

Successful campaigns in the post-Andromeda environment combine both approaches. Let the algorithm optimize tactical combinations while maintaining strategic oversight of creative direction, messaging strategy, and brand consistency.

Set clear boundaries for what the algorithm can mix. Provide compatible elements that maintain brand standards regardless of combination.

Establish refresh schedules based on performance patterns rather than arbitrary timelines. Monitor leading indicators of creative fatigue and prepare new elements before performance degrades.
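One way to turn "leading indicators" into a concrete trigger is a simple rule on daily frequency and CTR. The function below is a minimal sketch. The name `is_fatigued`, the three-day window, and the 20% drop threshold are all assumptions to tune against real account data, not platform defaults.

```python
def is_fatigued(daily_frequency, daily_ctr, window=3, ctr_drop=0.2):
    """Flag fatigue when frequency rose across the latest window while
    average CTR fell by at least ctr_drop versus the previous window."""
    if len(daily_frequency) < 2 * window or len(daily_ctr) < 2 * window:
        return False  # not enough history to compare two windows
    recent_ctr = sum(daily_ctr[-window:]) / window
    previous_ctr = sum(daily_ctr[-2 * window:-window]) / window
    # Compare the latest day with the first day of the recent window.
    frequency_rising = daily_frequency[-1] > daily_frequency[-window]
    ctr_falling = (previous_ctr > 0
                   and (previous_ctr - recent_ctr) / previous_ctr >= ctr_drop)
    return frequency_rising and ctr_falling

# Invented daily series for two ads.
tired = is_fatigued([1.2, 1.4, 1.6, 1.9, 2.3, 2.8],
                    [0.020, 0.021, 0.019, 0.016, 0.014, 0.012])
healthy = is_fatigued([1.2, 1.3, 1.3, 1.4, 1.4, 1.5],
                      [0.020, 0.020, 0.020, 0.020, 0.020, 0.020])
print(tired, healthy)
```

A rule like this is meant to prompt a refresh while replacement creative can still be prepared, rather than after results have already slipped.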

Dynamic creative testing increases creative asset consumption. Faster optimization cycles mean more frequent refreshes.

Develop systematic creative production processes. Template-based approaches allow rapid generation of on-brand variations without starting from scratch each time.

User-generated content provides authentic, diverse creative assets. Customer photos and testimonials offer fresh perspectives that professional creative sometimes lacks.

Repurpose organic social content that already demonstrates engagement. Posts generating strong organic performance often translate well to paid advertising.

Dynamic creative isn't the only way to test Facebook ads. Several alternative approaches offer different tradeoffs.

Campaign Budget Optimization with manual ad variations provides more control. Create distinct ad sets with specific creative combinations, letting Meta's algorithm allocate budget based on performance.

This approach requires more setup time but offers clearer attribution. Advertisers know exactly which creative combination drove results rather than inferring from element-level breakdowns.

Meta offers dedicated creative testing functionality separate from dynamic creative. This feature allows structured comparison of complete ad variations with proper holdout groups and statistical validation.

These testing features provide a cleaner experimental design than dynamic creative's continuous optimization approach.

Specialized creative testing platforms provide more sophisticated analysis than Meta's native reporting. These tools can test creative before spending media budget, using panel audiences to predict performance.

Such platforms aim to demystify ecommerce creative testing by replacing guesswork with data-driven analysis.

Advertisers commonly make several mistakes when implementing dynamic creative testing.

Ending tests too early produces unreliable results. Dynamic creative needs time to explore combinations and identify patterns. The minimum testing period depends on daily spend and audience size, but it generally takes at least several days of consistent delivery.

Constantly tweaking creative elements prevents the algorithm from completing the learning process. Make changes only when clear performance trends emerge, not based on day-to-day fluctuations.

Small sample sizes produce misleading results. Ensure each element combination receives sufficient impressions before drawing conclusions about performance.
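A quick way to see why small samples mislead is a standard two-proportion z-test on conversion rates. The sketch below uses invented counts. Because dynamic creative does not split traffic randomly between combinations, treat the result as a rough sanity check rather than a rigorous experiment.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Same 3.0% vs 1.5% rates, at two very different sample sizes.
_, p_small = two_proportion_z_test(6, 200, 3, 200)
_, p_large = two_proportion_z_test(300, 10_000, 150, 10_000)

print(f"small sample p = {p_small:.3f}")
print(f"large sample p = {p_large:.2e}")
```

The observed rates are identical in both cases, yet only the larger sample gives a p-value below the conventional 0.05 threshold.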

Even winning combinations eventually exhaust. Post-Andromeda, this happens faster than before. Monitor performance trends and refresh creative proactively rather than waiting for significant declines.

Meta continues investing heavily in automation and machine learning for advertising. The trajectory points toward even more sophisticated creative optimization capabilities.

Academic research on two-stage dynamic creative optimization suggests future systems may better handle sparse and ambiguous data. Transformer-based rerank models could improve how algorithms separate ambiguous samples and extract ranking knowledge.

Such advances would help address current limitations where dynamic creative struggles with under-represented creative variations that lack sufficient historical data for accurate performance prediction.

Integration of generative AI for creative production represents another frontier. Instead of just mixing existing elements, future systems might generate entirely new creative variations based on performance patterns.

Dynamic creative testing offers powerful capabilities when applied strategically. The automation handles tactical optimization efficiently, freeing advertisers to focus on creative strategy and brand positioning.

But the Andromeda update fundamentally changed how these systems behave. Faster delivery cycles and quicker creative exhaustion mean advertisers can't simply set campaigns and forget them.

Success requires balancing automation with strategic oversight. Provide the algorithm with quality creative elements that maintain brand standards regardless of combination. Monitor performance closely and refresh creative proactively based on data rather than arbitrary schedules.

Start with controlled tests comparing dynamic creative against manual approaches for specific campaign objectives. Measure not just platform metrics but downstream business impact. Some advertisers will find dynamic creative delivers significant efficiency gains. Others may discover manual testing provides better control and clearer insights.

The brands seeing the best results haven't fully outsourced judgment to Meta's algorithm. They've refined their creative strategy, developed sustainable creative production pipelines, and maintained active oversight of campaign performance.

Test dynamic creative as one tool in a broader testing toolkit rather than a complete replacement for strategic creative development. The technology continues evolving rapidly, and staying current with platform changes matters as much as mastering any single feature.