Black Friday Marketing Ideas That Drive Sales in 2026

Discover proven Black Friday marketing ideas for 2026. From tiered discounts to omnichannel tactics, learn strategies that convert browsers into buyers.

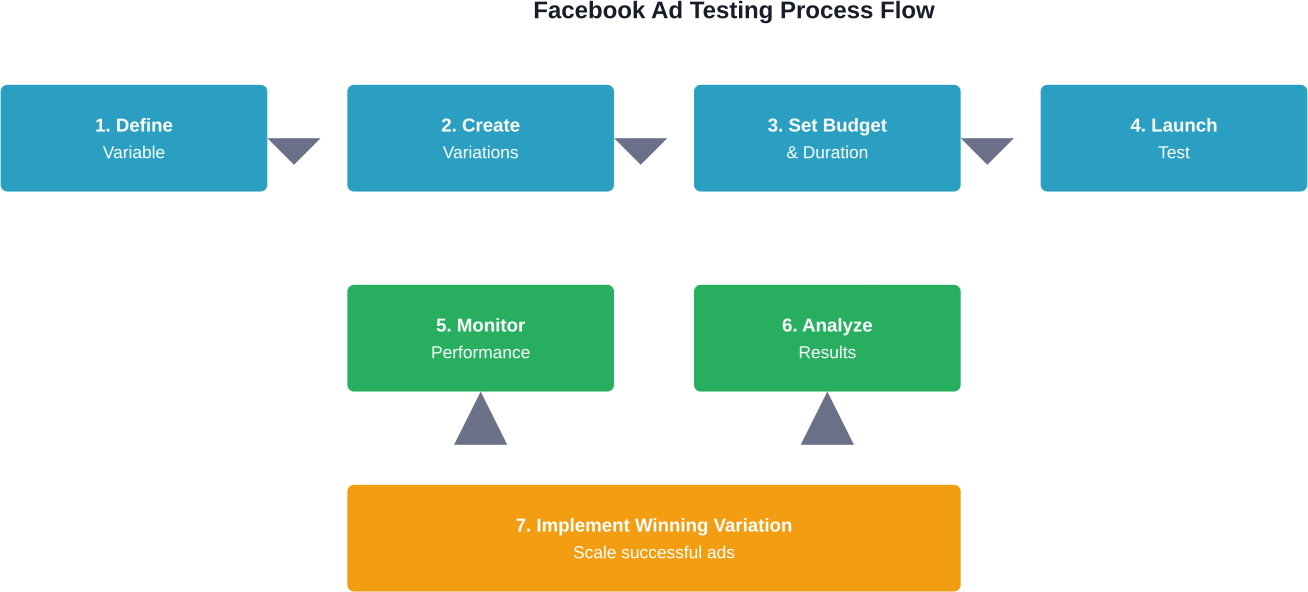

Testing Facebook ads before going live involves using Facebook's built-in A/B testing tools, creating multiple ad variations with controlled variables, and running tests with sufficient budget and duration to achieve statistically significant results. The testing process should focus on one element at a time—whether audience, creative, or copy—and requires at least 80% estimated power for reliable outcomes.

Launching Facebook ads without testing is essentially burning money. The difference between a profitable campaign and a complete failure often comes down to what happens before hitting that publish button.

According to Harvard Business School research published in 2025 on measuring marketing effectiveness, understanding which efforts have been successful and which have not is crucial for making wise budget allocation decisions. Testing provides that clarity.

The good news? Facebook has built sophisticated testing tools directly into its platform. But knowing they exist and actually using them correctly are two different things entirely.

A/B testing for Facebook ads means running controlled experiments where one variable changes while everything else stays constant. The goal is isolating what actually drives results versus what just looks good in theory.

Think of it as a scientific experiment for advertising. Change one thing, measure what happens, then make decisions based on actual data rather than gut feelings.

Facebook's split testing automatically divides the audience into random, non-overlapping groups. Each group sees a different version of the ad. This eliminates bias and ensures fair comparison.
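Here is a minimal Python sketch of one common way to create random, non-overlapping groups. Each user ID is hashed together with a test name, and the result is mapped to one variation. The mapping is deterministic, so the same person always lands in the same group and never sees both versions. This shows the general technique only. It is not how Meta implements its split, and the `assign_variation` helper, test name, and user IDs are hypothetical.

```python
import hashlib
from collections import Counter


def assign_variation(user_id: str, test_name: str, variations: list[str]) -> str:
    """Deterministically map one user to exactly one variation.

    Hashing "test_name:user_id" gives a stable, roughly uniform bucket, so the
    groups are random with respect to user traits but never overlap.
    """
    digest = hashlib.sha256(f"{test_name}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variations)
    return variations[bucket]


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]  # hypothetical user IDs
    groups = Counter(assign_variation(u, "creative-test-1", ["A", "B"]) for u in users)
    print(groups)  # roughly 5,000 users per variation
```

Including the test name in the hash means each new test reshuffles users independently of earlier tests.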

Here's the thing though—effective testing requires discipline. Testing multiple variables simultaneously makes it impossible to know which change caused which result. That's where most campaigns go wrong.

The advertising landscape has evolved dramatically. Privacy changes, iOS updates, and increased competition have made every dollar count.

Without proper testing, advertisers are essentially guessing. And guessing doesn't scale. Community discussions across advertising forums consistently show that campaigns with structured testing processes outperform those without them.

Testing also builds institutional knowledge. Each test reveals something about the audience, the messaging, or the creative approach. Over time, this accumulates into genuine competitive advantage.

Most pre-launch testing for Facebook ads is limited. You review creatives, maybe get internal feedback, and then rely on live campaigns to see what actually works. That’s where most of the uncertainty and wasted spend comes from.

Extuitive adds a step before that. It simulates how people respond to your ads using AI agents, so you can evaluate different creatives and angles before putting any budget behind them. Instead of going live with a wide set of assumptions, you start with options that already show stronger signals.

If you want to reduce guesswork and go into campaigns with more confidence, run your ads through Extuitive before launching.

Setting up tests correctly determines whether the results will actually be useful. The process follows a logical sequence that can't be skipped or rushed.

The first decision determines everything else. What exactly needs testing?

Most campaigns benefit from testing core elements such as audience targeting, visual creative, and ad copy.

But here's the critical part: test only one variable at a time. If audience and creative are tested simultaneously, there's no way to know which one drove the results.
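A simple pre-launch check can catch muddled tests. The sketch below compares a control and a variant and reports which settings differ. A clean test changes exactly one. The settings are hypothetical plain Python dictionaries, not Meta API objects.

```python
def changed_fields(control: dict, variant: dict) -> list[str]:
    """Return the names of settings that differ between two ad configurations."""
    keys = control.keys() | variant.keys()
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def is_single_variable_test(control: dict, variant: dict) -> bool:
    """True only if exactly one setting differs, so the result can be attributed."""
    return len(changed_fields(control, variant)) == 1


# Hypothetical ad settings for illustration.
control = {"audience": "fitness enthusiasts", "creative": "video_a", "copy": "headline_1"}
clean_variant = {**control, "creative": "video_b"}
muddled_variant = {**control, "audience": "weight loss seekers", "creative": "video_b"}

print(changed_fields(control, clean_variant))             # ['creative']
print(is_single_variable_test(control, muddled_variant))  # False
```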

Statistically significant results require adequate sample size. Too small a budget or too short a duration produces unreliable data that leads to wrong conclusions.

Facebook's testing tool calculates estimated power when setting up tests. According to best practices documented in testing guides, tests should run with at least 80% estimated power. Power is the likelihood that the test returns a statistically significant result when a real difference between variations exists, given its budget and duration.

The platform takes these factors into account automatically when generating power estimates, but understanding the underlying principles helps with planning.
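This sketch shows the principle in code using the textbook normal approximation for comparing two conversion rates. It is not Meta's calculation, so treat it as a planning aid only. The 2.0% baseline rate, the hoped-for 2.5% rate, and the $1.00 cost per click are all hypothetical inputs.

```python
from math import ceil, sqrt
from statistics import NormalDist


def estimated_power(p_control: float, p_variant: float, n_per_variation: int,
                    alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-proportion z-test.

    Normal approximation with unpooled variance; ignores the negligible chance
    of a significant result in the wrong direction.
    """
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    se = sqrt((p_control * (1 - p_control) + p_variant * (1 - p_variant)) / n_per_variation)
    return NormalDist().cdf(abs(p_variant - p_control) / se - z_crit)


def sample_size_for_power(p_control: float, p_variant: float, power: float = 0.8,
                          alpha: float = 0.05) -> int:
    """Smallest n per variation at which estimated_power() reaches `power`."""
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    return ceil((z_crit + z_power) ** 2 * variance / (p_variant - p_control) ** 2)


# Hypothetical inputs: conversion rate per click, assumed $1.00 cost per click.
n = sample_size_for_power(0.02, 0.025)
print(f"{n:,} clicks per variation, about ${n * 1.00:,.0f} per variation")
print(f"power with only 2,000 clicks: {estimated_power(0.02, 0.025, 2_000):.0%}")
```

With these assumptions, detecting a lift from 2.0% to 2.5% takes roughly 13,800 clicks per variation. A test a fraction of that size is badly underpowered. This is why budget and duration drive the power estimate.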

Just because one variation performs better doesn't mean it's actually superior. Random chance can create apparent winners that disappear when scaled.

Statistical significance indicates how unlikely the observed difference would be if the variations actually performed the same. A significant result suggests a real performance gap rather than random variation. Facebook's testing tool calculates this automatically and flags results that meet confidence thresholds.
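To see what such a check involves, here is a minimal sketch of a standard two-proportion z-test on conversion counts. Meta does not necessarily compute significance this way. The click and conversion counts are hypothetical, and a p-value below 0.05 corresponds to the common 95% confidence threshold.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z statistic, p-value). Uses the pooled rate under the null
    hypothesis that both variations convert equally well.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results with 10,000 clicks per variation.
for conv_b in (250, 215):
    z, p = two_proportion_z_test(200, 10_000, conv_b, 10_000)
    print(f"B converts {conv_b}: z = {z:.2f}, p = {p:.3f}, significant: {p < 0.05}")
```

At these volumes, 2.5% versus 2.0% clears the threshold, but 2.15% versus 2.0% does not. The smaller gap could easily be noise.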

Real talk: declaring a winner too early is one of the most expensive mistakes in advertising. Let tests run their full course even when early results look promising.
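A small simulation shows why early winners are unreliable. The sketch below runs A/A tests, where both variations share the same true conversion rate, so every "winner" is a false positive. It compares two approaches: checking significance once at the planned end, and checking after every look and declaring a winner the first time a look is significant. All numbers (500 simulated tests, 10 looks, 200 clicks per variation per look, a 5% conversion rate) are arbitrary assumptions chosen to keep it fast.

```python
import random
from math import sqrt
from statistics import NormalDist

Z_CRIT = NormalDist().inv_cdf(0.975)  # two-sided 5% threshold (about 1.96)


def is_significant(conv_a: int, n_a: int, conv_b: int, n_b: int) -> bool:
    """Pooled two-proportion z-test at the 5% significance level."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    if p_pool in (0.0, 1.0):
        return False  # no variation at all, nothing to test
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    return abs(conv_b / n_b - conv_a / n_a) / se > Z_CRIT


def simulate_aa_tests(n_tests: int = 500, looks: int = 10, clicks_per_look: int = 200,
                      rate: float = 0.05, seed: int = 7) -> tuple[float, float]:
    """Simulate A/A tests, where both variations truly convert at `rate`.

    Returns (false-winner rate when checking once at the end,
             false-winner rate when checking after every look).
    """
    rng = random.Random(seed)
    end_only = peeking = 0
    for _ in range(n_tests):
        conv_a = conv_b = clicks = 0
        declared_early = False
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(clicks_per_look))
            conv_b += sum(rng.random() < rate for _ in range(clicks_per_look))
            clicks += clicks_per_look
            # A "peeker" calls a winner if any look so far was significant.
            if is_significant(conv_a, clicks, conv_b, clicks):
                declared_early = True
        end_only += is_significant(conv_a, clicks, conv_b, clicks)
        peeking += declared_early
    return end_only / n_tests, peeking / n_tests


if __name__ == "__main__":
    end_rate, peek_rate = simulate_aa_tests()
    print(f"false winners, checking once at the end: {end_rate:.1%}")
    print(f"false winners, checking after every look: {peek_rate:.1%}")
```

Checking once at the end should flag roughly 5% of these no-difference tests as winners. Checking after every look flags noticeably more, even though nothing real separates the variations.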

A/B testing is now managed through the 'Experiments' tool or by comparing existing ad sets. The native 'Split Test' toggle during the initial campaign creation flow was deprecated by Meta.

The interface walks through variable selection, variation creation, and test parameters. It's more user-friendly than it used to be, though it still requires understanding the underlying testing logic.

The setup process has specific steps that need attention:

The key metric selection matters enormously. Testing for lowest cost per click when the actual goal is conversions leads to optimizing for the wrong outcome.
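Here is how the metric choice can flip the result: two hypothetical variations with equal spend, where the figures are invented for illustration. The cheapest clicks do not come from the same variation as the cheapest conversions.

```python
from dataclasses import dataclass


@dataclass
class VariationResult:
    name: str
    spend: float
    clicks: int
    conversions: int

    @property
    def cost_per_click(self) -> float:
        return self.spend / self.clicks

    @property
    def cost_per_conversion(self) -> float:
        return self.spend / self.conversions


# Hypothetical results for two variations with identical spend.
results = [
    VariationResult("A", spend=500.0, clicks=1_000, conversions=20),  # $0.50 CPC, $25 CPA
    VariationResult("B", spend=500.0, clicks=625, conversions=25),    # $0.80 CPC, $20 CPA
]

best_by_cpc = min(results, key=lambda r: r.cost_per_click)
best_by_cpa = min(results, key=lambda r: r.cost_per_conversion)
print(best_by_cpc.name, best_by_cpa.name)  # A B
```

If the goal is conversions, optimizing for cost per click would pick the wrong winner here.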

Once tests run their course, Facebook presents results showing performance across variations. The interface highlights statistically significant differences and recommends winners when confidence thresholds are met.

Look beyond just the winning variation. Understanding why something won provides insights for future tests and overall strategy.

Did the winning audience skew younger than expected? That informs targeting strategy. Did the winning creative emphasize benefits over features? That guides the copywriting approach.

Audience testing often delivers the biggest performance improvements. The same creative and offer can perform dramatically differently depending on who sees it.

The challenge is that Facebook provides almost unlimited targeting possibilities. Testing everything isn't feasible or practical.

Start with hypotheses based on existing customer data or logical assumptions about who needs the product or service. Then test those hypotheses systematically.

Effective audience tests compare distinct segments rather than minor variations. Testing broad categories first, then drilling down into specifics produces a decision tree of insights.

For example, testing "fitness enthusiasts" versus "nutrition-focused audiences" versus "weight loss seekers" identifies which general category resonates. Follow-up tests can then refine within the winning category.

Lookalike audiences provide another powerful testing avenue. Testing different lookalike percentages (1%, 5%, 10%) reveals the optimal balance between similarity and reach.

Give each audience enough budget to generate meaningful impressions before drawing conclusions about its performance.

One issue that undermines many tests is audience overlap. When testing audiences that share significant overlap, the variations essentially compete against themselves in the auction.

Meta has deprecated the 'Audience Overlap' tool within the testing suite, which allowed automated auction-level isolation. Advertisers must now minimize overlap manually, either with the 'Exclude' feature in targeting or through the 'Experiments' section.
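The toy sketch below illustrates what overlap means and what an exclusion does. Advertisers generally cannot see the individual members of an interest-based audience, so this uses made-up user IDs. Overlap is measured here as the share of the smaller audience that also appears in the other one. That definition and both helper functions are assumptions for illustration.

```python
def overlap_share(audience_a: set[str], audience_b: set[str]) -> float:
    """Fraction of the smaller audience that is also in the other audience."""
    smaller = min(len(audience_a), len(audience_b))
    return len(audience_a & audience_b) / smaller if smaller else 0.0


def exclude(audience: set[str], excluded: set[str]) -> set[str]:
    """Toy version of an exclusion rule: remove anyone in the other test cell."""
    return audience - excluded


# Hypothetical user IDs for illustration only.
fitness = {"u1", "u2", "u3", "u4"}
nutrition = {"u3", "u4", "u5", "u6", "u7"}

print(overlap_share(fitness, nutrition))       # 0.5
nutrition_only = exclude(nutrition, fitness)
print(sorted(nutrition_only))                  # ['u5', 'u6', 'u7']
print(overlap_share(fitness, nutrition_only))  # 0.0
```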

Visual creative and ad copy work together to capture attention and drive action. Testing these elements reveals what messaging resonates with the target audience.

Creative testing should explore fundamentally different approaches rather than minor tweaks. Different image subjects, video concepts, or carousel sequences represent meaningful variations.

Copy testing examines messaging angles, tone, length, and calls-to-action. The same product can be positioned as a solution to a problem, an aspirational lifestyle choice, or a limited-time opportunity.

Visual assets grab attention in the feed. Testing different creative approaches identifies what stops the scroll.

Common creative test variations include:

Videos require special consideration for testing. The first three seconds determine whether users keep watching, so testing different opening hooks can dramatically impact performance.

Ad copy testing examines how messaging impacts response. Different audiences respond to different messaging angles even for identical offers.

Test variations might include:

The winning copy often reveals important insights about what motivates the audience. Save this intelligence for future campaigns and marketing materials beyond just Facebook ads.

Testing without proper tracking is useless. The goal isn't just collecting data but collecting the right data to make informed decisions.

Facebook Pixel implementation provides the foundation for conversion tracking. The pixel needs to be installed on the website and configured to track relevant events before launching tests.

Standard events like ViewContent, AddToCart, and Purchase capture the customer journey. Custom conversions allow tracking of specific actions unique to the business.

Proper conversion tracking connects ad performance to actual business outcomes. Without it, optimization happens based on proxy metrics that may not correlate with revenue.

The tracking setup process involves:

MIT Sloan research published in 2026 on marketing performance measurement emphasizes the importance of capturing key performance indicators to assess marketing performance within allocated budgets. The tracking infrastructure makes this possible.

While tests shouldn't be stopped prematurely, monitoring progress helps identify technical issues or budget pacing problems.

Check tests daily to ensure:

Resist the temptation to declare winners based on early data. Statistical significance calculations account for the full planned duration and sample size.

When tests complete, the analysis phase determines which insights emerge and what actions to take.

Facebook's interface displays results with statistical confidence indicators. Look for the "Confidence" column which shows the probability that observed differences represent real performance gaps.

Generally speaking, 95% confidence represents the industry standard threshold. Results below this level suggest differences might be due to random chance rather than actual superiority.

The winning variation becomes the foundation for scaled campaigns. But scaling requires its own methodology to maintain performance.

According to best practices from testing and scaling processes documented in advertising guides, campaigns should scale gradually rather than immediately jumping to massive budgets.

Increasing the daily budget by 20-30% every few days allows Facebook's algorithm to adjust while maintaining performance. Doubling or tripling budgets overnight typically causes temporary performance degradation.

Not every test produces a clear winner. Sometimes variations perform essentially identically. That's valuable information too.

Failed tests might indicate:

Document all tests—winners and failures—to build institutional knowledge over time. Patterns emerge across multiple tests that inform overall strategy.

Once basic testing methodology is established, more sophisticated approaches can extract additional performance gains.

Sequential testing involves running tests in a planned sequence where each test builds on learnings from the previous one. Test audience first, then test creative specifically for the winning audience, then test copy for the winning audience-creative combination.

This approach is more time-intensive but produces highly optimized campaigns tailored precisely to what works.

Multivariate testing examines multiple variables simultaneously, testing all possible combinations. This requires significantly larger budgets and more complex analysis.

For example, testing three audiences, three creatives, and three copy variations requires 27 different combinations. Each needs adequate budget and impressions to reach statistical significance.
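The combinatorial growth is easy to check with Python's `itertools.product`. In this sketch, the audience names follow the earlier example and the copy angles follow the positioning ideas described for copy testing. The creative labels are hypothetical. The 50-conversion floor per cell is the low end of the per-variation guideline this article gives for conversion-focused campaigns.

```python
from itertools import product

# Audience names from the earlier example; creative labels are hypothetical.
audiences = ["fitness enthusiasts", "nutrition-focused", "weight loss seekers"]
creatives = ["video_a", "image_b", "carousel_c"]
copy_angles = ["problem-solution", "aspirational", "limited-time"]

cells = list(product(audiences, creatives, copy_angles))
print(len(cells))  # 27

MIN_CONVERSIONS_PER_CELL = 50  # low end of the 50-100 guideline
print(len(cells) * MIN_CONVERSIONS_PER_CELL)      # 1350 conversions for the full grid
print(len(creatives) * MIN_CONVERSIONS_PER_CELL)  # 150 for a single-variable creative test
```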

Most advertisers should stick with single-variable testing. Multivariate approaches make sense only with substantial budgets and sophisticated analytics capabilities.

The most successful advertisers treat testing as ongoing rather than one-time events. Continuous testing programs always have experiments running to incrementally improve performance.

Forrester research published in 2025 on feature management and experimentation notes that progressive delivery approaches involve deploying features with flags turned off, testing in production, and gradually rolling out to larger audiences. This principle applies equally to advertising.

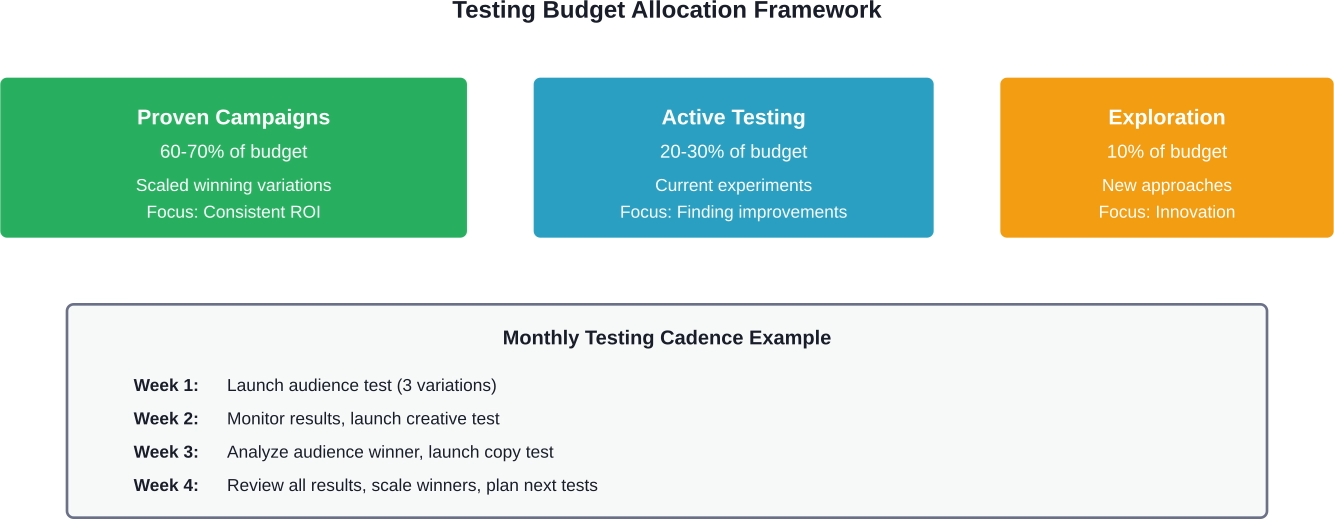

Allocate a percentage of total ad budget specifically to testing. The exact percentage depends on account maturity and risk tolerance, but 10-20% provides room for meaningful experimentation without jeopardizing overall performance.

Even experienced advertisers fall into testing traps that waste budget and produce misleading results.

Testing too many variables simultaneously is the most common error. It's tempting to test everything at once to save time, but this makes it impossible to attribute causation.

Another frequent mistake is stopping tests too early. Seeing one variation ahead after a day creates pressure to declare victory and reallocate the budget. But early leads often disappear as more data accumulates.

Insufficient sample size produces random results rather than reliable insights. This happens when budget or duration is inadequate for the tested variables.

Low-traffic scenarios require longer test durations or higher budgets to accumulate sufficient data. There's no shortcut—statistical significance requires a minimum number of events.

For conversion-focused campaigns, each variation needs at least 50-100 conversions to reliably identify performance differences. With low conversion rates, this can require substantial investment.

Tests don't happen in a vacuum. External factors like seasonality, news events, or competitor activity can influence results.

Running tests during major shopping holidays like Black Friday produces results that won't replicate during normal periods. Testing during unusual circumstances leads to conclusions that don't hold up when scaled.

Account for external factors when scheduling tests and interpreting results. Ideally, run tests during typical periods representative of ongoing conditions.

Once testing identifies winners, the scaling phase begins. This is where testing investment pays off through improved campaign performance.

Scaling isn't simply increasing the budget. The winning test variation needs to be implemented correctly in the campaign structure for optimal results.

Create new campaigns or ad sets using the winning elements. Keep the original test running at its current budget to ensure performance remains stable as the scaled version launches.

Facebook's algorithm optimizes delivery based on historical performance data. Sudden massive budget increases reset this learning and typically cause temporary performance degradation.

Scale budgets gradually using the 20-30% rule. Increase daily budget by this amount every 3-5 days, monitoring performance at each increment.
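This sketch plans such a schedule. The 20% step and 4-day interval are picked from within the 20-30% and 3-5 day ranges above. The `scaling_schedule` helper is hypothetical, and it does not model the monitoring step. Treat its output as a plan to pause on whenever performance slips, not a rule to follow blindly.

```python
from typing import Iterator


def scaling_schedule(start_budget: float, target_budget: float, step: float = 0.20,
                     days_between_steps: int = 4) -> Iterator[tuple[int, float]]:
    """Yield (day, daily_budget) pairs that raise spend by `step` per interval.

    The last increase is capped at target_budget so the plan never overshoots.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    day, budget = 0, start_budget
    yield day, round(budget, 2)
    while budget < target_budget:
        budget = min(budget * (1 + step), target_budget)
        day += days_between_steps
        yield day, round(budget, 2)


for day, budget in scaling_schedule(100, 200):
    print(f"day {day:>2}: ${budget:,.2f}")
```

Starting from a hypothetical $100 per day with a $200 target, the plan steps through $120, $144, and $172.80 before reaching $200 on day 16.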

If performance degrades at any level, pause increases and allow the algorithm to stabilize before attempting further scaling.

Even winning variations eventually experience performance decline as audiences become saturated with the messaging.

Monitor frequency metrics as ads scale. Frequency is the average number of times each person reached has seen the ad. When it exceeds 3-4, performance typically begins declining. This signals the need for a creative refresh, even for proven winners.
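Frequency can be computed directly from the impressions and reach figures in reporting. This minimal sketch uses a hypothetical `needs_creative_refresh` helper with the lower end of the 3-4 range as its default threshold. The impression and reach numbers are invented.

```python
def frequency(impressions: int, reach: int) -> float:
    """Average number of times each person reached has seen the ad."""
    return impressions / reach if reach else 0.0


def needs_creative_refresh(impressions: int, reach: int, threshold: float = 3.0) -> bool:
    """Flag an ad once its average frequency passes the chosen threshold."""
    return frequency(impressions, reach) > threshold


# Hypothetical reporting figures.
print(frequency(90_000, 30_000), needs_creative_refresh(90_000, 30_000))      # 3.0 False
print(frequency(140_000, 35_000), needs_creative_refresh(140_000, 35_000))    # 4.0 True
```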

Continuous testing programs address this by always having new variations in the pipeline before current winners exhaust their effectiveness.

Testing Facebook ads before going live transforms advertising from guessing into strategic optimization. The difference between campaigns that waste money and those that scale profitably often comes down to systematic testing methodology.

Facebook provides sophisticated testing tools built directly into the platform. But the tools only work when used with disciplined testing principles: one variable at a time, sufficient budget and duration for statistical significance, and data-driven decision making over gut feelings.

The testing process doesn't end when campaigns launch. Continuous testing programs that always have experiments running create compounding improvements over time. Each test reveals something about the audience, the messaging, or the creative approach that informs future optimization.

Start with the fundamentals. Test audience targeting to identify who responds best. Test creative to determine what captures attention. Test copy to discover which messaging drives action. Build from these foundational insights toward increasingly sophisticated optimization.

The advertisers who consistently achieve the best results aren't necessarily the most creative or the biggest spenders. They're the ones who test systematically, learn continuously, and scale what works while ruthlessly cutting what doesn't.

Ready to implement structured testing for Facebook ads? Start with a single-variable test today using Facebook's built-in A/B testing tools. The insights from that first test can improve campaign performance right away and build the foundation for ongoing optimization.