A/B testing Meta ads involves comparing two versions of an ad element—such as creative, copy, or audience—to determine which performs better. Meta's built-in testing tools split traffic evenly between variants and measure results based on your chosen metrics. Testing works best when you focus on one variable at a time, allow sufficient budget and time for statistical significance, and use learnings to optimize future campaigns.

Most advertisers launch campaigns hoping their ads will work. They guess at headlines, creatives, and targeting.

But that's expensive guessing. A/B testing removes the uncertainty. It replaces assumptions with data—showing exactly which ad elements drive conversions and which burn budget.

According to research from Stanford Graduate School of Business (2024), A/B testing has helped shape the online world and remains a core experimentation method, though the increasing complexity of online platforms has revealed some of split testing's limitations. Yet when done correctly, A/B testing remains one of the most reliable ways to optimize digital advertising performance.

This guide covers everything needed to run effective A/B tests in Meta Ads Manager. From setting up proper test structures to choosing the right variables and interpreting results, the strategies here help advertisers test smarter and scale winners faster.

The advertising landscape has shifted. Privacy updates, algorithm changes, and rising costs mean every dollar needs to work harder.

A/B testing provides the framework to make informed decisions. Instead of running five different ad variations simultaneously and wondering which one actually drove results, proper testing isolates variables and measures their impact.

Research from Penn State Extension (updated April 27, 2023) emphasizes that online marketing becomes more effective when the impact of small changes is tested systematically. Each test reveals how audiences respond to a specific element, whether that's an emoji in an email subject line or a different call-to-action button.

Here's what testing delivers:

Industry data indicates that 1 in 7 A/B tests fails, with common pitfalls including limited audience size, short testing periods, poor research, or focusing on the wrong variables. The solution isn't to stop testing. It's to test correctly.

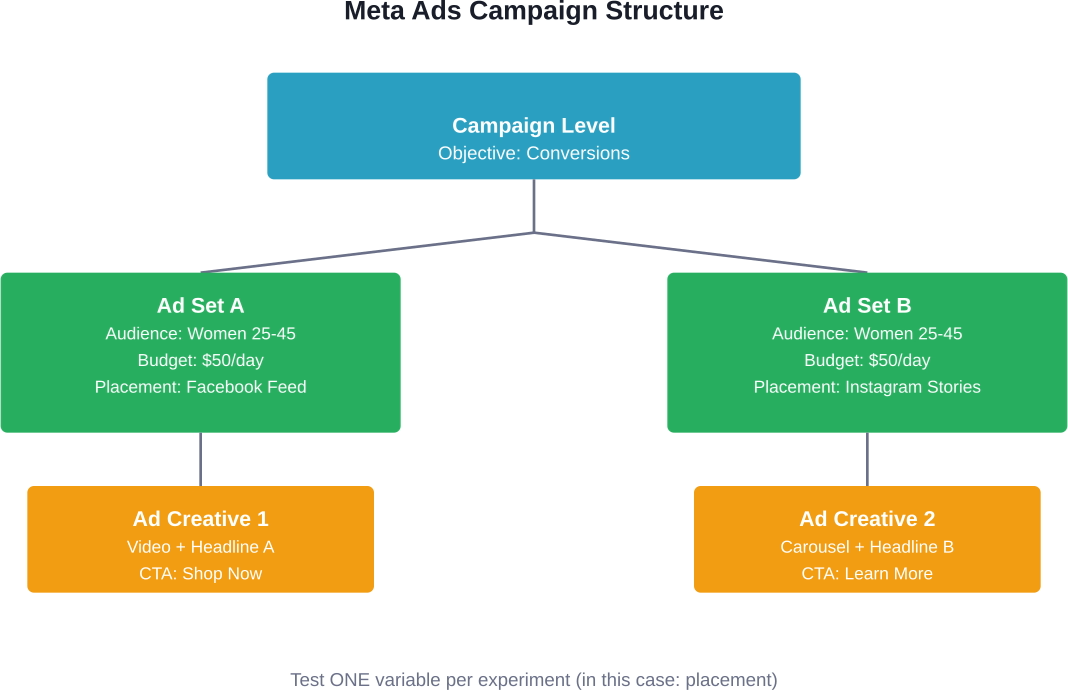

Before diving into testing strategies, understanding Meta's campaign structure is essential. This hierarchy determines how tests should be set up.

Meta organizes advertising into three levels: campaigns, ad sets, and ads.

For clean A/B tests, the ad set level provides the most control. Setting up separate ad sets for each variant ensures Meta's algorithm distributes budget evenly and doesn't favor one version prematurely.

Community discussions on testing approaches reveal confusion between Meta's official A/B testing feature and simply running multiple ads within one ad set. The difference matters.

When multiple ads run in the same ad set, Meta's algorithm automatically optimizes toward the best performer. That sounds good, but it means the algorithm decides the winner before statistical significance is reached. Tests end prematurely, and budget shifts before enough data is collected.

Meta's dedicated A/B testing feature (found in Experiments) splits traffic evenly between variants for the full test duration. This produces cleaner, more reliable results.

A/B testing Meta ads can get expensive when too many weak creatives make it into the test setup. Extuitive helps teams check Meta ad creative before campaigns go live. The platform forecasts likely ad performance using AI models trained against real campaign outcomes. For teams running A/B tests, this gives a way to compare multiple ad options before launch.


Meta offers two paths for testing: the Experiments tool (dedicated A/B testing) and manual setups using separate ad sets.

The Experiments tool is the cleanest approach for most tests. It's built specifically for A/B testing and handles traffic splitting automatically.

Here's how to access it:

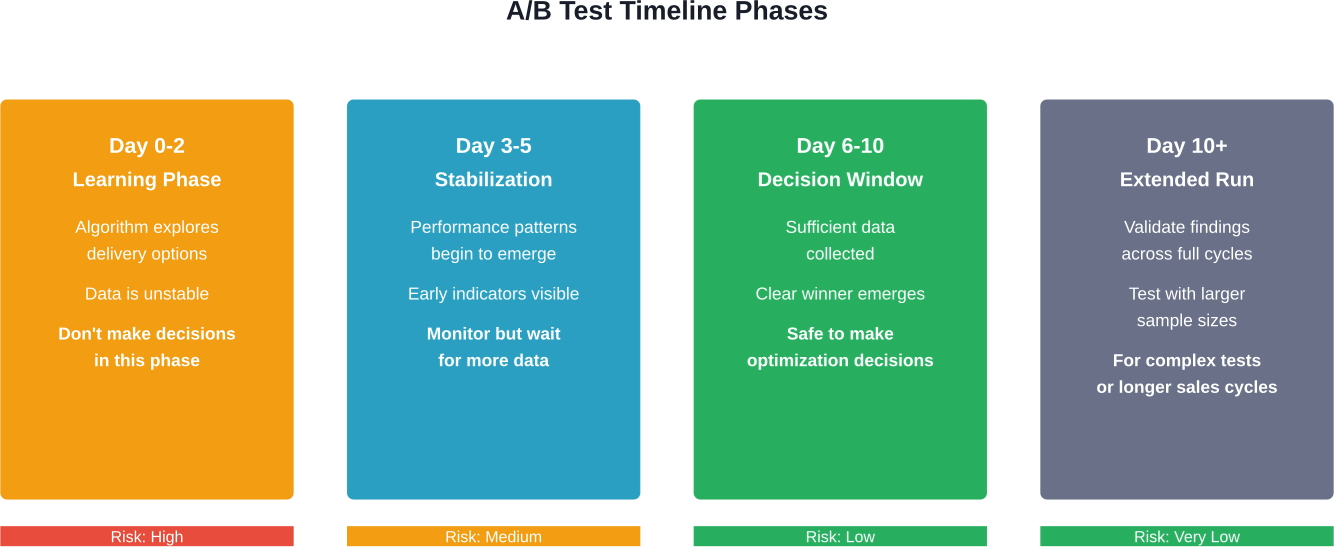

Most tests need a minimum of 5-7 days to collect reliable data, allowing Meta's algorithm to complete its learning phase. Shorter tests risk declaring winners before reaching statistical significance.

For more complex tests or situations where the Experiments tool doesn't support the needed configuration, manual setups work well.

The structure looks like this:

This approach requires discipline. It's tempting to change multiple things at once, but that destroys the test's validity. When audience, creative, and placement all differ, there's no way to know which variable caused performance differences.
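The one-variable rule can be checked mechanically before launch. The sketch below is a minimal illustration, assuming each variant's settings are written down as a plain dictionary; the field names and values are hypothetical and do not come from Meta's API.

```python
def differing_fields(variant_a: dict, variant_b: dict) -> list[str]:
    """Return the setting names whose values differ between two variants."""
    keys = sorted(set(variant_a) | set(variant_b))
    return [k for k in keys if variant_a.get(k) != variant_b.get(k)]


def is_clean_test(variant_a: dict, variant_b: dict) -> bool:
    """A clean A/B test changes exactly one setting."""
    return len(differing_fields(variant_a, variant_b)) == 1


# Hypothetical ad set settings; names and values are illustrative only.
control = {"audience": "lookalike_1pct", "creative": "video_a", "placement": "feed"}
clean = {"audience": "lookalike_1pct", "creative": "image_b", "placement": "feed"}
muddled = {"audience": "interest_fitness", "creative": "image_b", "placement": "feed"}

print(differing_fields(control, clean))   # ['creative']
print(is_clean_test(control, muddled))    # False
```

If the check reports more than one differing field, any performance gap between the two ad sets cannot be attributed to a single change.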

Not all tests are created equal. Some variables have massive impact on performance. Others produce marginal gains that barely justify the effort.

Focus testing efforts on these high-impact areas:

Creative format is where most performance differences show up. Testing image ads versus video ads, single images versus carousels, or static content versus animations often reveals significant preference patterns.

Different formats work better depending on the product, audience, and campaign objective. Video might outperform for engagement campaigns, while carousel ads often excel for product catalogs.

Words matter. Testing different headlines, body copy variations, and calls-to-action can dramatically shift click-through rates and conversions.

Common copy tests include:

Research from Penn State Extension highlights that even small changes—like adding an emoji to an email subject line—can impact open rates. The same principle applies to ad copy.

Who sees the ad is just as important as the ad itself. Testing different audience segments reveals which groups respond best to the offer.

Effective audience tests compare:

Research on Facebook ads for recruiting hard-to-reach populations found that the first 3 ad campaigns were the most cost-effective, with a mean cost per enrollment of 19.27 Canadian dollars. This demonstrates how testing audience approaches affects acquisition costs.

Meta offers numerous placement options across Facebook, Instagram, Messenger, and Audience Network. Performance varies wildly between them.

Testing Facebook Feed versus Instagram Stories, or comparing Reels placements to in-stream video ads, often uncovers placement-specific performance patterns. Some products and offers simply work better on specific placements.

Testing doesn't stop at the ad. What happens after the click matters just as much.

According to guidance on using ads as part of conversion rate optimization roadmaps, Meta ads provide an excellent way to test landing pages and offers before investing in extensive on-site testing. Traffic acquisition costs money anyway—using that traffic to test different landing page approaches or promotional offers generates valuable insights.

Common tests include comparing discount offers (20% off versus $20 off), testing different lead magnet types, or evaluating landing page layouts.

The most common testing mistake? Not allocating enough budget or time for results to reach statistical significance.

Tests need sufficient data to produce reliable conclusions. End a test too early, and the "winner" might just be random variance. Starve it of budget, and the algorithm never gets enough conversions to optimize properly.

A practical rule: budget should be at least 2-3 times the average cost per desired action, multiplied by the number of variants being tested.

For example, if the average cost per purchase is $50 and you want to set up a campaign with 1 ad set and 3-5 ads, you should have a daily budget of no less than $50, though some practitioners recommend $100-$150/day. This ensures each variant gets enough delivery to generate meaningful data.
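The rule of thumb above reduces to simple arithmetic. The sketch below only computes the product it describes; the rule does not say whether the result is a daily or a total figure, so that interpretation is left open. The function name and its parameters are hypothetical.

```python
def minimum_test_budget(avg_cost_per_action: float, variants: int,
                        multiplier: float = 2.0) -> float:
    """Budget floor from the rule of thumb: multiplier x cost per action x variants.

    The multiplier is the 2-3x factor from the text. Whether the result is
    read as a daily or total budget is an assumption the caller must make.
    """
    if avg_cost_per_action <= 0 or variants < 2 or multiplier <= 0:
        raise ValueError("need a positive cost, a positive multiplier, and at least 2 variants")
    return multiplier * avg_cost_per_action * variants


# Using the $50 cost per purchase from the example, with three variants.
low = minimum_test_budget(50, variants=3, multiplier=2)
high = minimum_test_budget(50, variants=3, multiplier=3)
print(f"Budget floor: ${low:.0f} to ${high:.0f}")
```

With these inputs the rule yields a range of $300 to $450, noticeably higher than the $50 daily floor quoted in the example, which is one reason some practitioners recommend more.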

According to Reddit community discussions on Meta ad testing, if you set a tiny budget and go several days without a conversion, Meta's algorithm may not optimize your ad properly. The machine learning needs conversion events to learn from.

Most tests need 3-7 days minimum. Some require longer, especially when testing audiences or when conversion volume is low.

Why the minimum? Meta's delivery algorithm needs time to learn and stabilize. Delivery is typically most volatile in the first 24-48 hours, while the system explores delivery options during its learning phase. Real performance data becomes clearer once delivery settles.

Tests should also run through complete purchase cycles. If customers typically research for several days before buying, a 3-day test won't capture full conversion behavior.

Data collection is only half the battle. Knowing what to do with the results determines whether testing drives actual improvement.

Focus on metrics that align with campaign objectives:

Don't get distracted by vanity metrics. An ad with high engagement but low conversions isn't a winner if the objective is sales.

This concept trips up many advertisers. A variant that's currently ahead might not be a true winner—it could be random luck.

Statistical significance measures confidence that performance differences are real, not random. Most testing platforms calculate this automatically. Look for at least 95% confidence before declaring a winner.
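For readers who want to sanity-check a result by hand, the sketch below uses a pooled two-proportion z-test, a common textbook method. How Meta calculates confidence internally is not described here, so this is a stand-in and not a reproduction of the platform's method. The conversion counts are made up, and "confidence" is loosely taken as one minus the two-sided p-value.

```python
import math


def ab_confidence(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Approximate confidence that two conversion rates really differ.

    Uses a pooled two-proportion z-test and returns 1 - two-sided p-value.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0  # no variation at all, so nothing to distinguish
    z = abs(p_a - p_b) / std_err
    p_value = math.erfc(z / math.sqrt(2))  # two-sided tail of the normal
    return 1 - p_value


# Hypothetical results: conversions out of people reached per variant.
clear_gap = ab_confidence(100, 2000, 140, 2000)   # 5% vs 7%
small_gap = ab_confidence(100, 2000, 110, 2000)   # 5% vs 5.5%
print(clear_gap >= 0.95, small_gap >= 0.95)       # True False
```

The second comparison shows why a variant that is merely ahead should not be declared a winner: the same sample size that confirms a two-point gap cannot confirm a half-point one.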

Industry best practices emphasize segmenting results to make test conclusions more reliable. For example: will a 4% drop in conversion among current customers be offset by a 7% increase in conversion among new visitors?
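The offset question has no answer without segment sizes and baseline rates. The sketch below works through it with made-up numbers, assuming the 4% and 7% figures are relative changes to each segment's baseline conversion rate; the visitor counts and baseline rates are hypothetical.

```python
def net_conversion_change(segments: list[tuple[int, float, float]]) -> float:
    """Sum the change in conversions across segments.

    Each segment is (visitors, baseline_rate, relative_change), where
    relative_change is -0.04 for a 4% drop or 0.07 for a 7% lift.
    """
    return sum(visitors * rate * change for visitors, rate, change in segments)


# Hypothetical traffic mix: (visitors, baseline conversion rate, relative change).
mostly_new = [(10_000, 0.05, -0.04), (30_000, 0.02, 0.07)]
mostly_existing = [(40_000, 0.05, -0.04), (10_000, 0.02, 0.07)]

print(round(net_conversion_change(mostly_new), 1))       # 22.0
print(round(net_conversion_change(mostly_existing), 1))  # -66.0
```

The same pair of percentage changes produces a net gain in one traffic mix and a net loss in the other, which is why segment-level results need to be weighted before a winner is called.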

Once a clear winner emerges with statistical significance, it's time to scale. But scale gradually.

Doubling the budget overnight can push campaigns back into the learning phase and destabilize performance. Increase budgets by 20-30% every few days, monitoring for performance consistency.
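A gradual scaling plan can be laid out in advance. The sketch below generates a sequence of budget steps with a fixed percentage increase; the function name and the starting and target budgets are hypothetical, and the spacing between steps ("every few days") is left to the advertiser.

```python
def scaling_schedule(start_budget: float, target_budget: float,
                     step_pct: float = 0.20) -> list[float]:
    """List the budget at each step, raising it by step_pct until the target.

    The final step is capped at the target, so no single increase exceeds
    step_pct.
    """
    if start_budget <= 0 or step_pct <= 0:
        raise ValueError("start_budget and step_pct must be positive")
    budgets = [start_budget]
    while budgets[-1] < target_budget:
        next_budget = round(budgets[-1] * (1 + step_pct), 2)
        budgets.append(min(next_budget, target_budget))
    return budgets


# Hypothetical: scale a $100/day winner to $200/day in 20% steps.
print(scaling_schedule(100.0, 200.0, step_pct=0.20))
# [100.0, 120.0, 144.0, 172.8, 200.0]
```

Doubling the budget this way takes four increases instead of one, with a chance to check performance consistency between each.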

Sometimes tests produce no clear winner. Both variants perform similarly, or both underperform expectations.

That's valuable information. It means the variable being tested isn't the constraint. Test something else—a different element might have the impact being sought.

This is where Stanford's research on A/B testing evolution becomes relevant. As platforms grow more complex, traditional testing approaches face limitations. Sometimes the winning combination involves multiple variables interacting in ways simple A/B tests can't capture.

Even experienced advertisers fall into these traps. Avoiding them saves budget and time.

The temptation is strong: test headline, image, audience, and placement all at the same time. But when performance changes, which variable caused it?

Impossible to know. Test one variable per experiment. It takes longer but produces actionable insights.

Testing with tiny audiences or minimal budgets produces unreliable results. If an ad only gets 50 impressions and 2 clicks, that's not enough data to draw conclusions.
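To see how little 50 impressions and 2 clicks reveal, it helps to put a confidence interval around the observed click-through rate. The sketch below uses the Wilson score interval, a standard choice for small samples; it is offered as an illustration and is not something Meta's tools are known to report.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives about 95%)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    p = successes / trials
    denom = 1 + z ** 2 / trials
    center = (p + z ** 2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


low, high = wilson_interval(successes=2, trials=50)
print(f"Observed CTR 4.0%, plausible range {low:.1%} to {high:.1%}")
```

For 2 clicks on 50 impressions the interval runs from roughly 1% to 13%, so the true click-through rate could be anywhere from poor to excellent. Two variants with intervals this wide cannot be told apart.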

As community discussions point out, limited audience size is one of the top reasons tests fail. Make sure target audiences are large enough to generate meaningful traffic to both variants.

Seeing one variant ahead after 24 hours doesn't mean it's the winner. Early performance often reflects random variation or time-of-day effects.

Let tests run their full planned duration. Patience pays off with more reliable results.

Running a test during a holiday, major news event, or seasonal shift can skew results. A Black Friday test might show very different performance than the same test in February.

Consider external context when interpreting results. Sometimes retesting during a more typical period confirms whether findings hold.

Tests produce valuable insights—but only if they're remembered. Create a simple system to document what was tested, results, and implications.

This builds institutional knowledge and prevents repeatedly testing the same things.
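A test log does not need special tooling. The sketch below shows one possible shape, assuming a flat record per experiment written out as CSV; the field names and the sample entry are hypothetical and can be adapted to whatever a team already tracks.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExperimentRecord:
    """One row in the test log: what was tested, what won, and why it matters."""
    name: str
    variable: str
    variant_a: str
    variant_b: str
    winner: str
    takeaway: str


def to_csv(records: list[ExperimentRecord]) -> str:
    """Serialize the log to CSV text that can be saved or pasted into a sheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ExperimentRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Hypothetical entry for illustration.
log = [
    ExperimentRecord(
        name="Spring format test",
        variable="creative format",
        variant_a="single image",
        variant_b="carousel",
        winner="carousel",
        takeaway="Use carousel as the baseline for the next copy test",
    )
]
print(to_csv(log))
```

Recording the variable and the takeaway alongside the winner makes it easy to see later which questions have already been answered.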

Once basic testing is mastered, these advanced approaches extract even more value.

Instead of one big test, run a series of smaller tests that build on each other. Test creative format first. Once a winner emerges, test copy variations within that winning format. Then test audiences with the winning creative-copy combination.

This sequential approach compounds improvements. Each test starts from a stronger baseline than the last.

For ongoing campaigns, set aside a small percentage of budget (5-10%) to continue running previous best performers. This validates that new winners are genuinely better, not just benefiting from recency or algorithm changes.

Meta's dynamic creative feature automatically mixes and matches multiple creative elements, such as images, headlines, and calls-to-action. This approach works well for broad exploration, though it provides less control and transparency than manual A/B tests. Consider it a complement to, not a replacement for, structured testing.

Don't just look at overall performance. Break down results by audience segments: age groups, genders, locations, devices.

Often a variant performs better overall but worse for a specific valuable segment. Understanding these nuances leads to smarter optimization decisions.
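The sketch below shows what such a breakdown can look like, using made-up numbers in which one variant wins overall but loses in one age group. The row layout and segment labels are hypothetical; in practice the figures would come from an Ads Manager breakdown export.

```python
from collections import defaultdict


def rates_by_segment(rows: list[tuple[str, str, int, int]]) -> dict[tuple[str, str], float]:
    """Conversion rate per (variant, segment), plus an 'all' rollup per variant.

    Each row is (variant, segment, conversions, clicks).
    """
    totals = defaultdict(lambda: [0, 0])
    for variant, segment, conversions, clicks in rows:
        for key in ((variant, segment), (variant, "all")):
            totals[key][0] += conversions
            totals[key][1] += clicks
    return {key: conv / clicks for key, (conv, clicks) in totals.items() if clicks}


# Hypothetical results: (variant, age segment, conversions, clicks).
rows = [
    ("A", "18-34", 30, 1000),
    ("A", "35+", 50, 1000),
    ("B", "18-34", 60, 1000),
    ("B", "35+", 40, 1000),
]
rates = rates_by_segment(rows)
for key in sorted(rates):
    print(key, f"{rates[key]:.1%}")
```

Here variant B converts at 5% overall against 4% for A, yet A is the stronger ad for the 35+ group. If that group is the more valuable one, the overall winner is the wrong choice for it.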

Testing costs money. The question is whether the investment pays off.

Enterprise-grade algorithmic optimization platforms carry price tags of $50-60K annually, and some enforce $50K contract minimums even where their main web product is valued at about $30K per year. For most businesses, that's prohibitive.

Meta's built-in testing tools provide robust functionality without additional cost. The real expense is the ad spend during testing—budget allocated to variants that might underperform.

But consider the alternative: running ads indefinitely without knowing whether better options exist. A test that uncovers a 20% improvement in cost per acquisition pays for itself quickly once the winner scales, even if the test itself costs $1,000 in spend.
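The payback claim can be made concrete with a break-even calculation. The sketch below assumes the whole test spend is treated as a cost to recoup (a conservative view, since test traffic also converts) and borrows the $50 cost per purchase from the earlier budget example; the function name is hypothetical.

```python
import math


def breakeven_conversions(test_cost: float, old_cpa: float, improvement: float) -> int:
    """Conversions needed at the improved CPA to recoup the test spend.

    improvement is the fractional CPA reduction, e.g. 0.20 for 20%.
    """
    if test_cost < 0 or old_cpa <= 0 or not 0 < improvement < 1:
        raise ValueError("need old_cpa > 0 and 0 < improvement < 1")
    saving_per_conversion = old_cpa * improvement
    return math.ceil(test_cost / saving_per_conversion)


# $1,000 test, $50 baseline cost per acquisition, 20% improvement.
print(breakeven_conversions(1000, old_cpa=50, improvement=0.20))  # 100
```

A 20% improvement on a $50 cost per acquisition saves $10 per conversion, so the test is repaid after 100 conversions at the new rate. Every conversion after that is net gain.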

The key is testing thoughtfully. Don't test trivial variations. Focus on changes likely to produce meaningful differences.

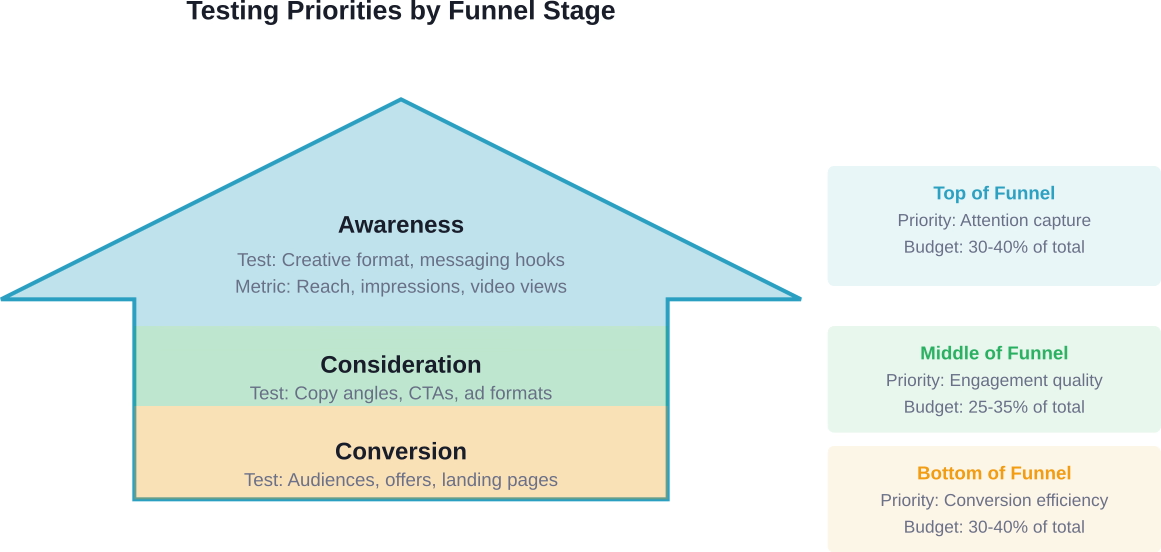

Different campaign objectives need different testing approaches.

For awareness campaigns, test for reach and engagement. Which creative formats get the most views and shares? Which messages resonate with cold audiences?

For consideration campaigns, test for clicks and engagement. Which copy and creative combinations drive people to learn more?

For conversion campaigns, test for actions. Which combinations drive purchases, signups, or leads at the lowest cost?

The mistake is using the same testing strategy across all objectives. Each funnel stage has different success metrics and different variables that matter most.

A/B testing Meta ads transforms guesswork into strategy. Every test reveals what audiences respond to, which creative elements drive action, and where budget should flow.

The framework is straightforward: isolate one variable, allocate sufficient budget and time, let tests reach statistical significance, and scale winners gradually. Avoid common pitfalls like testing too many things at once or stopping tests prematurely.

As Stanford research indicates, A/B testing continues evolving alongside advertising platforms. But the fundamentals remain: disciplined experimentation, data-driven decisions, and continuous iteration.

The advertisers who win on Meta aren't necessarily the most creative or those with the biggest budgets. They're the ones who test systematically, learn faster, and compound improvements over time.

Start with one test. Choose a high-impact variable—creative format or audience targeting. Set it up correctly with adequate budget and time. Measure results honestly. Then scale what works and test the next variable.

That's how testing compounds into sustainable competitive advantage.