
Meta Ads Creative Testing Best Practices 2026

Meta Ads creative testing in 2026 requires systematic frameworks that leverage Meta's Andromeda algorithm and AI-powered automation. The most effective approach combines structured testing protocols, aggressive kill criteria (pausing creatives within 48-72 hours or $50-100 spend), and creative diversification strategies that feed Meta's machine learning systems. With dynamic creative optimization enabling up to 40% faster campaign iteration, success hinges on disciplined testing methodology rather than budget size or traditional targeting tactics.

The landscape of Meta advertising has fundamentally shifted. Brands pour millions into Facebook and Instagram campaigns, yet most struggle to crack the code on consistent performance.

Here's the thing though—the problem isn't budget constraints or audience targeting anymore. It's creative quality and how you test it.

According to Meta's internal performance data and industry benchmarks from Q1 2026, AI-powered creative automation tools can generate tailored ad variants that enable up to 40% faster campaign iteration. Meta's advertising ecosystem has evolved beyond simple A/B tests into a sophisticated machine learning environment that demands disciplined, systematic testing frameworks.

Community discussions across advertising forums highlight a critical shift: targeting matters less than it used to, and creative strength matters more. The reasoning is that Meta's algorithm can find the right audience on its own when creatives are strong enough to feed its optimization systems.

This guide breaks down exactly how to build and implement creative testing frameworks that actually scale results in 2026.

Why Creative Testing Matters More Than Ever in 2026

The advertising game has changed dramatically. Meta's Andromeda algorithm enables processing of significantly higher volumes of ad variations and personalization at scale.

What this means for advertisers: the brands that feed Meta's machine learning systems with diverse, high-quality creative variations win. Those that don't get left behind with rising costs and declining returns.

The shift isn't subtle. Where advertisers once obsessed over audience segmentation and targeting parameters, the conversation has moved decisively toward creative quality and testing velocity. Real talk: most brands approach creative testing by throwing variations at the wall and hoping something sticks.

That doesn't cut it anymore.

Without systematic frameworks, campaigns produce inconsistent performance and waste ad spend. The brands scaling successfully in 2026 treat creative testing as a disciplined process, not a guessing game.

Understanding Meta's Andromeda Algorithm and Creative Diversification

Meta's Andromeda algorithm represents the next generation of personalized ads retrieval. It functions as the first-step algorithm within Meta's advertising recommendation pipeline.

The practical implication? The platform can now handle exponentially more creative variations and match them to increasingly granular user contexts.

According to academic references on Meta's Andromeda algorithm, the system's rollout coincides with the wider adoption of generative creative tools. It rewards accounts that provide creative diversity: multiple formats, messages, and visual approaches that the algorithm can test across different user segments.

Creative diversification has become a core best practice. Pumping out volume for volume's sake doesn't work. But providing the algorithm with genuinely different creative concepts—different hooks, angles, formats, and messaging frameworks—feeds the machine learning system exactly what it needs.

High-performing brands often run multiple distinct creative concepts simultaneously, each with format variations. They're not just changing button colors or headline words. They're testing fundamentally different value propositions and content approaches.

Review Meta Ad Creative With Extuitive Before Testing

Meta ad testing works better when creative is reviewed before launch. Extuitive helps teams do that by forecasting likely ad performance with AI models trained against real campaign outcomes. For 2026 testing setups, it gives teams a way to compare creative options before they enter the test.


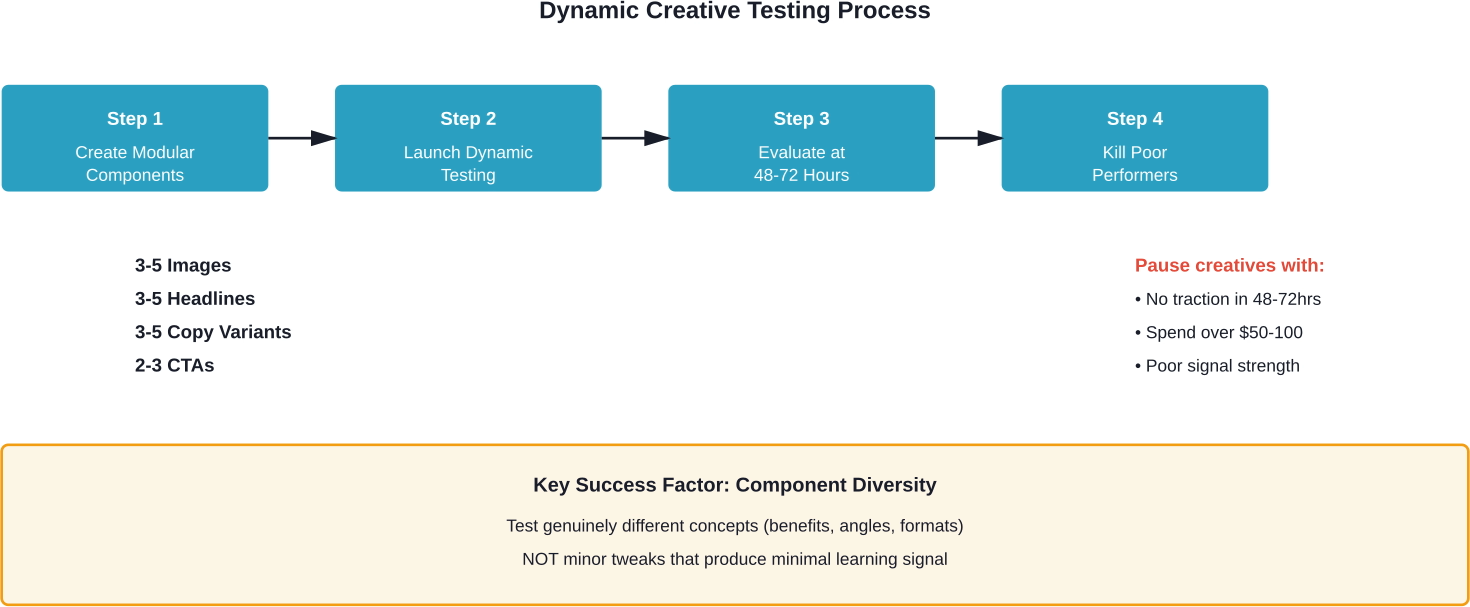

The Dynamic Creative Testing Framework

Dynamic creative testing leverages Meta's built-in automation tools to systematically test creative components. The platform mixes and matches different elements—images, videos, headlines, descriptions, and calls-to-action—to find winning combinations.

According to Meta Ads News published by St. Augustine University in March 2026, dynamic creative optimization (DCO) tools generate tailored ad variants based on user context.

Here's how to implement this framework effectively:

Start by breaking your creative into modular components. For each campaign, prepare 3-5 primary images or videos, 3-5 headlines, 3-5 ad copy variations, and 2-3 call-to-action buttons. The platform automatically tests combinations and allocates budget toward better performers.

But there's a catch. Dynamic creative works best when the components are genuinely different, not minor tweaks. Testing five headlines that essentially say the same thing produces minimal learning. Testing five headlines that emphasize different benefits—price, quality, speed, exclusivity, social proof—gives the algorithm real signal to work with.
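To see why distinct components matter, it helps to count how many combinations the platform has to sort through. The snippet below is a minimal sketch of that arithmetic; the component names are hypothetical placeholders, not real assets or Meta API objects.

```python
from itertools import product

# Hypothetical component pools (placeholder names for illustration only).
images = ["demo_video", "lifestyle_photo", "ugc_clip"]
headlines = ["price", "quality", "speed"]
body_copy = ["short_copy", "story_copy", "proof_copy"]
ctas = ["Shop Now", "Learn More"]

# Every possible ad the platform could assemble from these pools.
combinations = list(product(images, headlines, body_copy, ctas))
print(len(combinations))  # 3 * 3 * 3 * 2 = 54
```

At the upper end of the ranges above (5 x 5 x 5 x 3) the count reaches 375. Every near-duplicate component multiplies the number of combinations competing for the same budget without adding new information.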

The testing protocol should include aggressive evaluation windows. Many successful advertisers using dynamic creative set 48-72 hour checkpoints to assess which component combinations are gaining traction. Underperformers get paused quickly to concentrate budget on winners.

Structured A/B Testing with Isolated Variables

While dynamic creative testing handles multi-variable optimization, structured A/B testing remains essential for isolating specific performance drivers. The discipline here is testing one variable at a time.

Change the image but keep everything else constant. Test headline variations with identical visuals. Swap out the call-to-action while maintaining the same copy and creative.

This approach produces clear learnings. When performance changes, the cause is obvious because only one element varied. Most advertisers fail at A/B testing by changing multiple variables simultaneously, then wondering which change drove the result.

The practical implementation requires campaign structure discipline. Create duplicate ad sets with identical targeting, budget, and placement settings. The only difference should be the single creative element being tested.

Evaluation criteria should be statistically rigorous. Declaring a winner after 24 hours with 50 clicks leads to false conclusions. Best practice involves setting minimum thresholds—typically 100+ conversions per variant or 7 days of runtime, whichever comes first.
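A quick way to see why 50 clicks is not enough is to run a significance check on the numbers. The sketch below uses a standard two-proportion z-test with a normal approximation, which is one common choice rather than a method this guide or Meta prescribes; the function name and sample figures are made up for illustration, and the approximation is rough at very small counts.

```python
from math import erf, sqrt

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = abs(p_a - p_b) / se
    normal_cdf = 0.5 * (1 + erf(z / sqrt(2)))
    return 2 * (1 - normal_cdf)

# Same 6% vs 12% conversion rates at two very different sample sizes.
p_small = two_proportion_p_value(3, 50, 6, 50)
p_large = two_proportion_p_value(300, 5000, 600, 5000)
print(f"50 clicks per variant:   p = {p_small:.3f}")
print(f"5000 clicks per variant: p = {p_large:.3f}")
```

With 50 clicks per variant, a creative that appears to convert twice as well is still indistinguishable from noise at the usual 0.05 cutoff. The same rates at 5,000 clicks per variant are clearly different.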

Based on industry testing methods, set aggressive kill criteria: pause any creative that doesn't show promising signals within 48-72 hours or $50-100 spend. These criteria apply to cutting losers, not to declaring winners. They avoid spending more budget just to reach statistical significance on a trend that is already obvious.
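The kill rule is simple enough to write down as a check. This is a minimal sketch, assuming "promising signals" means at least one conversion and using the upper end of each range (72 hours, $100) as the checkpoint; the class, field names, and the conversion threshold are hypothetical choices, not part of any Meta tool.

```python
from dataclasses import dataclass

@dataclass
class CreativeStats:
    name: str
    hours_live: float
    spend: float
    conversions: int

def should_pause(stats, max_hours=72.0, max_spend=100.0, min_conversions=1):
    """Pause once either checkpoint is reached without enough conversions."""
    checkpoint_reached = stats.hours_live >= max_hours or stats.spend >= max_spend
    return checkpoint_reached and stats.conversions < min_conversions

print(should_pause(CreativeStats("hook_a", hours_live=72, spend=40.0, conversions=0)))
print(should_pause(CreativeStats("hook_b", hours_live=24, spend=30.0, conversions=0)))
```

The first creative has reached the time checkpoint with nothing to show for it and gets paused. The second has not reached either checkpoint yet, so it keeps running.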

Building Your Isolated Variable Testing Matrix

The most effective testing programs map out variables systematically. Start with the highest-impact elements and work down the priority list.

Priority one typically centers on the core creative concept—the overall angle, value proposition, and visual approach. Testing whether a product demo outperforms a lifestyle shot matters more than whether the button is blue or green.

Priority two covers format variations. Video versus static image. Carousel versus single image. Reels versus standard feed placement. Format choice dramatically impacts performance, particularly as social-native videos are increasingly promoted by Meta as high-performing format options.

Priority three addresses messaging elements—headlines, body copy, offers, and calls-to-action. These tests refine how the message resonates once the core concept and format are optimized.

The testing sequence matters. Running headline tests before validating the creative concept wastes time optimizing messaging for a fundamentally weak visual approach.

The Concept Testing Ladder Methodology

The concept testing ladder provides a framework for systematically validating creative directions before investing in polished production.

Step one: rapid concept validation. Create rough versions of 5-10 different creative concepts—simple static images with basic text overlays work fine. Launch these as a quick test to identify which core angles generate engagement.

Budget allocation at this stage stays minimal—$20-50 per concept provides sufficient signal. The goal isn't conversion optimization but identifying which concepts merit further development.

Step two: format expansion for winners. Take the top 2-3 concepts from initial validation and develop them across multiple formats. Create video versions, carousel adaptations, and different aspect ratios for various placements.

This stage tests whether the concept's strength holds across formats or if it only works in specific contexts. Some concepts crush as videos but flop as static images. Others perform consistently regardless of format.

Step three: polish and scale. The concepts proving strong across formats warrant full production investment—professional creative development, multiple variations, and proper budget allocation.

This ladder approach prevents the common mistake of investing heavily in polished creative production before validating that anyone actually cares about the concept. Better to test rough concepts cheaply and polish winners than to produce beautiful ads that nobody engages with.
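Picking which concepts move up the ladder can be as simple as ranking by engagement rate. The sketch below assumes engagement rate (engagements divided by impressions) is the step-one signal; the concept names and numbers are invented for illustration.

```python
def top_concepts(results, keep=3):
    """Rank rough concepts by engagement rate and keep the strongest few."""
    def engagement_rate(row):
        return row["engagements"] / row["impressions"] if row["impressions"] else 0.0
    ranked = sorted(results, key=engagement_rate, reverse=True)
    return [row["concept"] for row in ranked[:keep]]

# Hypothetical step-one results from a small validation test.
step_one = [
    {"concept": "demo", "impressions": 2000, "engagements": 90},
    {"concept": "lifestyle", "impressions": 1800, "engagements": 36},
    {"concept": "testimonial", "impressions": 2200, "engagements": 132},
    {"concept": "discount", "impressions": 1900, "engagements": 57},
    {"concept": "founder_story", "impressions": 2100, "engagements": 21},
]

print(top_concepts(step_one))  # ['testimonial', 'demo', 'discount']
```

Only the concepts returned here would get video, carousel, and aspect-ratio versions in step two.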

Audience-Creative Matrix Testing

Despite the reduced importance of manual targeting in 2026, audience-creative matching still produces valuable insights. Different customer segments respond to different messages and creative approaches.

The matrix testing framework crosses creative variations with audience segments to identify which combinations drive the strongest performance.

Set up a grid structure. On one axis, place creative concepts—product-focused, benefit-focused, social proof-focused, etc. On the other axis, place audience segments—cold traffic, engaged users, past purchasers, high-intent shoppers.

Launch campaigns testing each combination. The results reveal patterns: maybe social proof creative crushes with cold audiences but underperforms with past customers. Perhaps product feature ads work great for high-intent shoppers but bore engaged users who want lifestyle content.

These insights inform both creative development and campaign structure. Knowing which creative angles resonate with which audiences allows for more strategic campaign organization as scaling begins.

The testing doesn't require exhaustive combinations of every creative with every audience. Start with the most distinct segments and concepts. The goal is identifying meaningful patterns, not testing every possible permutation.
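The grid itself is easy to sketch in code. The example below builds the full matrix, then reads a partial set of results to find the best creative per audience. It assumes cost per acquisition is the comparison metric, and the CPA figures are hypothetical.

```python
from itertools import product

creatives = ["product", "benefit", "social_proof"]
audiences = ["cold", "engaged", "past_purchasers", "high_intent"]

# The full grid: every creative concept crossed with every audience segment.
cells = list(product(creatives, audiences))
print(len(cells))  # 12

# Hypothetical CPA results for the cells that were actually tested.
cpa_results = {
    ("social_proof", "cold"): 18.0,
    ("product", "cold"): 27.5,
    ("product", "high_intent"): 14.0,
    ("social_proof", "high_intent"): 21.0,
}

def best_creative_per_audience(results):
    """Return the lowest-CPA creative for each audience that has data."""
    best = {}
    for (creative, audience), cpa in results.items():
        if audience not in best or cpa < best[audience][1]:
            best[audience] = (creative, cpa)
    return best

print(best_creative_per_audience(cpa_results))
```

Only four of the twelve cells have data here, which matches the advice above: start with the most distinct segments and concepts, then fill in more of the grid only where the pattern is unclear.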

Rapid Iteration Testing at Scale

Scaling Meta Ads performance in 2026 requires velocity. The brands winning aren't just testing more creatives—they're testing faster.

Rapid iteration testing means launching new creative variations weekly, sometimes daily. The cadence feeds Meta's algorithm with fresh content while continuously improving creative quality based on performance data.

The key is establishing production systems that support this velocity. Waiting weeks for creative agency deliverables kills iteration speed. Successful brands either build in-house creative teams or partner with agencies that can deliver on rapid timelines.

User-generated content and social-native video production enable faster iteration. These formats require less production overhead than polished studio content while often outperforming it on engagement metrics.

According to advertising community discussions, boosting organic posts has emerged as a particularly effective rapid testing method. Brands publish content to their Facebook or Instagram feeds organically, monitor which posts generate natural engagement, then boost the winners as paid ads.

This approach pre-validates content before ad spend. Posts earning strong organic engagement typically convert that momentum into paid performance. Posts that flop organically rarely succeed when promoted.

Establishing Your Creative Production Pipeline

Rapid iteration demands operational infrastructure. The testing framework fails without creative supply to feed it.

Effective pipelines typically include multiple content sources: in-house production, user-generated content from customers, influencer partnerships, and AI-powered creative tools for generating variations.

The production calendar should plan creative deliverables in batches aligned with testing cycles. If testing runs on two-week cycles, creative production needs to deliver new concepts every two weeks.

Brief templates streamline requests. Rather than writing novel-length creative briefs for every concept, establish standardized templates that communicate requirements quickly. Include target audience, core message, required format specifications, and example references.

Approval processes need to prioritize speed. Creative stuck in approval workflows defeats the purpose of rapid testing. Establish clear approval authority and turnaround expectations.

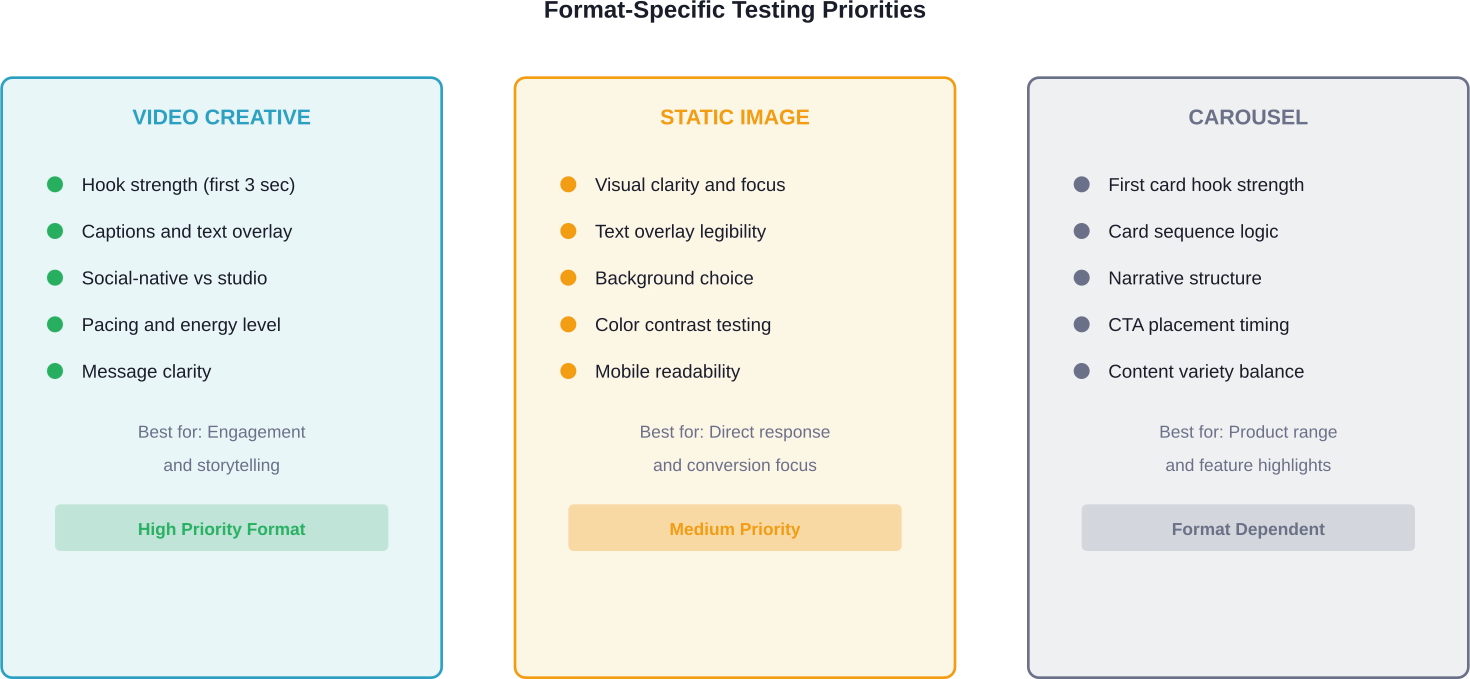

Format-Specific Testing Protocols

Different ad formats require different testing approaches. The variables that matter for video creative differ from those impacting static image performance.

Video Creative Testing

Video testing focuses on hook strength, pacing, and message clarity. The first three seconds determine whether users keep watching or scroll past.

Test different opening hooks aggressively. Pattern interrupts, bold statements, visual intrigue, immediate benefit callouts—try multiple approaches to identify what stops the scroll for the target audience.

Video length testing matters less than it used to. The platform optimizes delivery based on user behavior patterns. Some users prefer quick 15-second hits, others watch longer content. Focus on content quality over arbitrary length targets.

Captions are non-negotiable. Most users watch videos without sound. Videos that depend on audio to communicate the message underperform.

Social-native videos are increasingly promoted by Meta as high-performing format options. These typically outperform expensive studio productions in feed environments.

Static Image Testing

Static creative testing emphasizes visual clarity and message hierarchy. Users process static ads in seconds, so immediate comprehension matters.

Text overlay testing proves critical. Test minimal text versus more detailed overlays. Test headline placement, size, and color contrast. Ensure text remains legible on mobile devices at small sizes.

Background choice significantly impacts performance. Product shots on white backgrounds work for some categories, lifestyle settings for others. Test environmental context variations.

Color psychology deserves testing but avoid overthinking it. Test brand colors versus high-contrast alternatives. Some products pop with bright, saturated palettes while others suit muted, sophisticated tones.

Carousel Testing

Carousel ads enable storytelling across multiple cards. Testing focuses on card sequence, content variety, and call-to-action placement.

First card performance disproportionately impacts overall results since many users never swipe. The opening card must hook attention while making clear that additional content follows.

Test different narrative structures. Product feature progression. Before-and-after sequences. Problem-solution frameworks. Customer story arcs. Different structures resonate with different audiences and objectives.

Call-to-action timing matters. Testing shows mixed results between placing CTAs on the first card versus the final card. Test both approaches for specific use cases.

Performance Data Analysis and Winner Selection

Creative testing only works when coupled with rigorous performance analysis. Data interpretation separates successful testing programs from wasteful ones.

The first principle: define success metrics before launching tests. Deciding what constitutes a winner after seeing results introduces bias. Establish clear criteria upfront.

For direct response campaigns, cost per acquisition typically serves as the primary metric. For awareness campaigns, cost per thousand impressions or engagement rate might matter more. Match the metric to the objective.

Statistical significance matters but shouldn't paralyze decision-making. Waiting for perfect statistical confidence before making calls slows iteration speed. Most successful advertisers balance statistical rigor with practical decision velocity.

The 48-72 hour evaluation window with $50-100 spend provides reasonable signal for initial decisions. Creatives showing clear promise continue running for deeper validation. Obvious underperformers get cut quickly.

Look beyond primary metrics for contextual insights. A creative might have a higher CPA but attract customers with higher lifetime value. Another might convert less in the short term but earn stronger engagement, which points to brand-building value.

Building Your Performance Dashboard

Effective analysis requires clear visibility into performance data. Dashboards that surface the right metrics at the right time enable faster, better decisions.

Essential metrics for creative testing dashboards include:

- Spend per creative variation

- Impressions and reach

- Click-through rate

- Cost per click

- Conversion rate

- Cost per acquisition

- Return on ad spend

- Engagement rate (for awareness objectives)

Organize dashboards to compare creatives side-by-side. Absolute performance matters less than relative performance when determining winners.
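Most of the dashboard metrics listed above are ratios of a few raw numbers. The sketch below derives them from spend, impressions, clicks, conversions, and revenue; the function and field names are illustrative and do not correspond to any particular reporting API.

```python
def creative_metrics(spend, impressions, clicks, conversions, revenue):
    """Derive the standard ratio metrics for one creative."""
    def ratio(numerator, denominator):
        # None marks a metric that is undefined (for example, CPA with zero conversions).
        return numerator / denominator if denominator else None
    return {
        "ctr": ratio(clicks, impressions),   # click-through rate
        "cpc": ratio(spend, clicks),         # cost per click
        "cvr": ratio(conversions, clicks),   # conversion rate
        "cpa": ratio(spend, conversions),    # cost per acquisition
        "roas": ratio(revenue, spend),       # return on ad spend
    }

metrics = creative_metrics(spend=100.0, impressions=10000, clicks=200,
                           conversions=10, revenue=400.0)
print(metrics)
```

Running the same function over every creative in a test gives the side-by-side view described above, with undefined metrics left empty rather than shown as zero.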

Set up automated alerts for creatives crossing performance thresholds—both positive and negative. Get notified when creatives hit spend limits without conversions or when new creatives demonstrate strong early signal.

Regular reporting cadence keeps testing programs accountable. Weekly creative performance reviews establish discipline and ensure learnings inform future creative development.

Leveraging Advantage+ Campaigns for Creative Testing

Meta's Advantage+ campaign structure has become the default for many advertisers in 2026. The platform handles targeting, placement, and budget optimization automatically, allowing advertisers to focus on creative quality.

Within Advantage+ campaigns, creative testing takes a specific form. Rather than testing creatives in separate ad sets with controlled targeting, advertisers provide multiple creatives within single campaigns and let Meta's algorithm determine optimal distribution.

This approach aligns with how Meta's systems work. The Andromeda algorithm wants creative diversity to match with user contexts. Feeding multiple strong concepts into Advantage+ campaigns gives the system exactly what it needs.

Community discussions highlight the shift toward "Challenger ASC" structures—Advantage+ Shopping Campaigns with new creative concepts added regularly as challengers to existing winners. The continuous injection of new concepts maintains performance as individual creatives fatigue.

Testing within Advantage+ requires trusting Meta's optimization. The platform determines which users see which creatives based on predicted response probability. Advertisers lose some control but gain optimization sophistication.

The trade-off generally favors platform optimization in 2026. Manual optimization rarely outperforms Meta's machine learning when the system is fed enough high-quality creative variations.

Creative Fatigue Management and Refresh Strategies

Every creative eventually fatigues. Performance degrades as the same users see the same ads repeatedly. Managing fatigue requires proactive refresh strategies.

Frequency monitoring provides early warning. When average frequency reaches 3-4 impressions per user for cold audiences or 5-7 for retargeting audiences, performance typically begins declining.
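The frequency check can be automated. This minimal sketch computes average frequency as impressions divided by reach and flags a creative once it hits the lower end of each range above (3 for cold, 5 for retargeting); using the lower bound as the trigger is an assumption made here to get an early warning.

```python
# Lower bounds of the ranges above, used as early-warning triggers (an assumption).
FATIGUE_THRESHOLDS = {"cold": 3.0, "retargeting": 5.0}

def average_frequency(impressions, reach):
    """Average number of times each reached user saw the ad."""
    return impressions / reach if reach else 0.0

def is_fatiguing(impressions, reach, audience_type):
    """True once average frequency reaches the threshold for this audience type."""
    return average_frequency(impressions, reach) >= FATIGUE_THRESHOLDS[audience_type]

print(is_fatiguing(9000, 3000, "cold"))         # frequency 3.0 -> True
print(is_fatiguing(9000, 3000, "retargeting"))  # frequency 3.0 -> False
```

The same delivery numbers trigger a warning for a cold audience but not for retargeting, which tolerates more repetition.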

But don't wait for fatigue to hit. Proactive refresh schedules work better than reactive responses. Successful advertisers plan creative refreshes on regular intervals—typically every 2-4 weeks for active campaigns.

Refresh doesn't necessarily mean completely new concepts. Often, format variations or messaging tweaks to existing concepts extend creative lifespan effectively. Convert a static image to video. Adjust the headline. Change the color scheme. Small changes can reset frequency and restore performance.

The quarterly sales strategy some brands employ creates natural refresh points. Running promotional campaigns four times per year—tied to seasons, holidays, or product launches—provides regular creative refresh opportunities while maintaining pressure on revenue.

This "Four Peaks Theory" aligns creative refresh with business rhythm. Fresh creative supports each promotional push, preventing the fatigue that comes from running the same ads continuously.

Budget Allocation for Creative Testing

How much budget should go toward testing versus scaling proven winners? The balance determines testing velocity and risk management.

Generally speaking, allocating 20-30% of total ad spend to testing provides sufficient budget for meaningful experimentation without jeopardizing overall performance.

Testing budgets should scale with total spend. A campaign spending $1,000 monthly might allocate $200-300 for testing. A campaign spending $50,000 monthly should dedicate $10,000-15,000 to testing.

The allocation isn't static. During creative discovery phases—when building initial winning creative—testing budgets increase. During scaling phases with proven creative assets, testing budgets can decrease temporarily.

Individual creative tests need sufficient budget to generate signal. Too little spend produces inconclusive results. The $50-100 per creative minimum mentioned earlier provides adequate data for initial evaluation.

Structured testing schedules prevent budget from fragmenting across too many simultaneous tests. Rather than testing 20 variations with $25 each, test 5 variations with $100 each for clearer results.

Scaling Winners: From Testing to Growth

Identifying winning creatives means nothing without effective scaling strategies. The transition from testing to growth requires careful execution.

Vertical scaling—increasing budget on winning creatives—is the first approach. Gradually raise daily budgets on strong performers, typically in 20-30% increments every few days. Aggressive budget jumps can disrupt Meta's optimization.
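Because each increase compounds on the last, gradual steps add up faster than they might seem. The sketch below projects a daily budget through a series of increases; the starting budget and number of steps are hypothetical, and each step stands for one "every few days" adjustment.

```python
def scaling_schedule(start_budget, increase_pct=20, steps=3):
    """Daily budget after each successive percentage increase."""
    budgets = [float(start_budget)]
    for _ in range(steps):
        budgets.append(round(budgets[-1] * (100 + increase_pct) / 100, 2))
    return budgets

print(scaling_schedule(100, increase_pct=20, steps=3))  # [100.0, 120.0, 144.0, 172.8]
print(scaling_schedule(100, increase_pct=30, steps=3))  # [100.0, 130.0, 169.0, 219.7]
```

Three steps at 20-30% take a $100 daily budget to roughly $173-220 without any single jump large enough to disrupt optimization.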

Horizontal scaling involves duplicating winning creatives into new campaigns or ad sets. This approach provides more budget capacity but requires careful implementation to avoid auction competition between your own ads.

Within Advantage+ structures, horizontal scaling looks different. Rather than duplicating campaigns, advertisers typically launch new Advantage+ campaigns with different objectives, placements, or geographic targets while maintaining winning creative across campaigns.

Creative diversity supports scaling better than single-winner approaches. Rather than betting everything on one successful creative, maintain a portfolio of 3-5 strong performers. This protects against sudden fatigue and provides stability.

The scaling process should include ongoing testing even as winners scale. Allocate budget to both scaling proven performers and testing new concepts continuously. The 70-20-10 rule works well: 70% of budget on proven winners, 20% on recent strong performers, and 10% on new concept testing.

Common Creative Testing Mistakes to Avoid

Even with solid frameworks, specific mistakes derail creative testing programs. Avoiding these pitfalls improves results.

- Testing too many variables simultaneously. Changing creative, copy, targeting, and objective all at once makes it impossible to identify what drove results. Isolate variables.

- Insufficient testing budgets. Spreading $100 across ten creatives produces noise, not signal. Concentrate budget for meaningful data.

- Declaring winners too quickly. Performance fluctuates, especially early. A creative that wins day one might underperform by day three. Allow sufficient evaluation time.

- Ignoring creative fatigue. Yesterday's winner becomes tomorrow's underperformer as frequency builds. Monitor fatigue metrics and refresh proactively.

- Testing without clear success criteria. Define what constitutes a winner before launching tests. Changing criteria mid-test to favor preferred creative introduces bias.

- Over-optimizing minor elements. Testing seventeen button colors while ignoring core creative concept wastes time. Prioritize high-impact variables.

- Neglecting mobile optimization. Most ad views happen on mobile devices. Creatives that look great on desktop but fail on mobile underperform overall.

- Failing to document learnings. Testing without recording insights wastes the opportunity. Document what works, what doesn't, and why for institutional knowledge.

Integrating AI and Automation Tools

AI-powered tools increasingly support creative testing workflows. Understanding how to leverage these tools without losing strategic control matters.

According to Meta Business News and official engineering blog updates in March 2026, dynamic creative optimization (DCO) tools generate tailored ad variants based on user context. These tools can produce headline variations, image adaptations, and format conversions at scale.

The practical application involves using AI for variation generation while maintaining human oversight on strategic direction. Let AI generate twenty headline variations, but humans select which five to test based on strategic alignment.

Generative AI tools for visual creative—creating images, videos, or design elements—show promise but require quality control. AI-generated creative elements work best when refined by human designers rather than used raw.

Meta's built-in automation features deserve full utilization. Advantage+ campaigns, dynamic creative, and automated placements leverage Meta's machine learning effectively. Fighting the automation generally produces worse results than embracing it.

That said, automation doesn't replace creative strategy. AI tools amplify human creativity and accelerate execution. They don't substitute for understanding what messages resonate with target audiences or why certain creative approaches work.

Industry-Specific Creative Testing Considerations

While core testing principles apply across industries, specific categories require adapted approaches.

E-commerce and DTC Brands

E-commerce creative testing emphasizes product presentation, offer clarity, and trust signals. Testing priorities include product-in-use versus product-only shots, lifestyle context variations, and different promotional angles.

User-generated content particularly shines for e-commerce. Customer photos and videos typically outperform studio shots for authenticity and relatability.

Advantage+ Shopping Campaigns provide the primary structure, with creative testing focused on feeding diverse concepts into the algorithm's optimization system.

B2B and Lead Generation

B2B creative testing focuses on problem identification, solution clarity, and credibility signals. The longer consideration cycles mean creative needs to address different awareness stages.

Testing different value propositions matters more than visual variations. Does the target audience respond better to efficiency gains, cost savings, competitive advantages, or risk mitigation messages?

Format testing typically favors longer-form content for B2B—videos that explain solutions, carousels that walk through processes, or lead ads that collect information directly.

Local Services and SMBs

Local business creative testing emphasizes proximity, availability, and social proof. Before-and-after content, customer testimonials, and behind-the-scenes looks at service delivery perform strongly.

Location-specific creative elements—recognizable local landmarks, community references, regional language—improve relevance and response rates.

Testing timing and offers matters as much as creative for local services. Seasonal promotions, availability messaging, and response time promises deserve systematic testing.

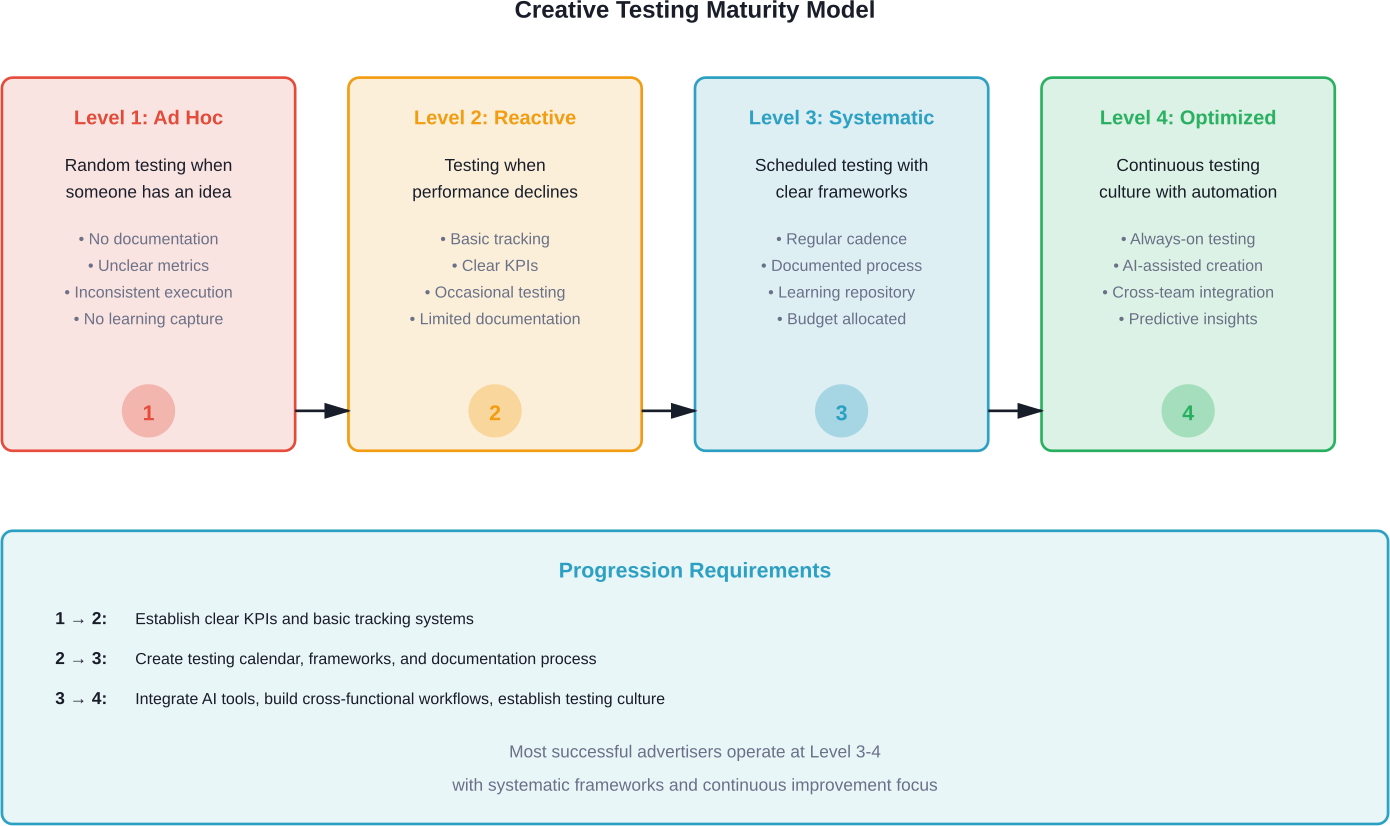

Building a Long-Term Creative Testing Culture

Sustainable creative testing success requires organizational commitment beyond individual campaign tactics. Building a testing culture ensures consistent execution.

Start with executive buy-in on testing allocation. Leadership must accept that testing budget produces learning value even when individual tests fail. Permission to fail is permission to discover what works.

Establish clear testing calendars and workflows. Ad hoc testing whenever someone has an idea produces inconsistent results. Scheduled testing cadences with defined processes work better.

Document everything. Create a creative testing repository that captures what was tested, results, insights, and applications. Future campaigns benefit from institutional knowledge.

Cross-functional collaboration improves testing quality. Creative teams that understand performance data make better creative. Performance marketers who understand creative principles make better testing decisions.

Celebrate learning, not just winning. Teams that fear testing failures become conservative. Teams that value insights from both successful and unsuccessful tests innovate more effectively.

Conclusion

Meta Ads creative testing in 2026 has evolved far beyond simple A/B comparisons. Meta's Andromeda algorithm enables processing of significantly higher volumes of ad variations, which demands that advertisers feed these systems with diverse, high-quality creative concepts through systematic testing frameworks.

Success comes down to discipline. Structured testing protocols, aggressive kill criteria, format-specific approaches, and rapid iteration velocity separate scaling campaigns from stagnant ones.

The brands winning on Meta aren't necessarily those with the largest budgets or most sophisticated targeting. They're the ones treating creative quality as the primary performance driver and testing as a disciplined process rather than a guessing game.

Build your testing framework. Establish clear evaluation criteria. Document learnings. Feed the algorithm with creative diversity.

The platform has given advertisers incredibly powerful machine learning systems. The question isn't whether those systems work—it's whether your creative testing program is sophisticated enough to leverage them effectively.

Start with one framework from this guide. Implement it systematically for 30 days. Measure results. Refine the approach. Build from there.

The advertisers who master creative testing in 2026 will dominate their categories. The ones who don't will watch costs rise while performance stagnates.

Which side of that divide do you want to be on?