Facebook ads aren’t cheap, and guessing your way through them usually ends up costing more than it should. That’s where A/B testing comes in. It gives you a clear way to compare ideas, like swapping a headline or trying a different image, without betting your entire budget upfront.

In this article, we’ll walk through what A/B testing in Facebook ads actually is, how it works, what you can test, and why it still matters.

At Extuitive, we believe the era of launching ads just to see what happens is coming to an end. Traditional A/B testing forces teams to spend time and budget on trial campaigns, waiting for results that often arrive too late. We’ve replaced that loop with predictive intelligence – a system built to identify high-performing creatives before launch.

Our engine blends brand-specific historical data with a dataset of over 150,000 AI agent consumers, allowing us to simulate real-world reactions at scale. Each creative is scored for predicted CTR and ROAS, then classified as high, medium, or low potential – all before a single impression is paid for. This approach has achieved up to 81% accuracy in forecasting outcomes, while enabling teams to increase testing speed by more than 10x.

For creative and growth teams, that means fewer delays, fewer wasted cycles, and a faster path to scalable campaigns. Instead of testing to learn, you launch with confidence, using data, not guesswork, to decide what goes live. Predictive advertising isn’t just more efficient. It’s a structural upgrade to how performance marketing gets done.

A/B testing, sometimes called split testing, is a structured experiment. You take one ad as your baseline and create a second version that changes a single element. When using Meta’s built-in A/B testing tool, the platform splits the audience evenly into non-overlapping groups to ensure unbiased results.

For example, you might run the same ad image with two different headlines, the same copy with two different CTAs, or the same creative shown to two different audiences.

After enough data is collected, you compare results and identify which version performed better based on your chosen metric.

This structure is what separates A/B testing from casual tweaking. You are not reacting to random performance swings. You are intentionally testing a hypothesis and letting the data answer it.
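To make the idea of an even, non-overlapping split concrete, here is a minimal sketch of one common way to do it: hashing a user ID into a bucket. This is an illustration only, not how Meta's tool works internally. The function name `assign_variant`, the test name, and the user ID format are all assumptions made for the example.

```python
import hashlib


def assign_variant(user_id: str, test_name: str, variants=("A", "B")) -> str:
    """Deterministically map a user to exactly one variant.

    Hypothetical helper for illustration; not part of any Meta API.
    """
    # Include the test name in the hash so that separate tests split
    # the same users independently of each other.
    digest = hashlib.sha256(f"{test_name}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Each user lands in one group only, and the groups come out roughly equal.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "headline-test")] += 1
print(counts)
```

Because the assignment depends only on the user ID and the test name, the same person always sees the same version, which is what keeps the two groups from overlapping.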

Facebook advertising has a lot of moving parts. Creative, copy, audience, placement, timing, and budget all affect results. Without testing, it is easy to blame the wrong thing when performance drops or assume success came from the wrong change.

A/B testing matters because it:

Even small improvements compound. A slightly higher click-through rate or a modest drop in cost per conversion can make a meaningful difference once you scale spend.
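A quick bit of arithmetic shows why a modest improvement matters more as spend grows. The numbers below are made up for illustration: a baseline cost per conversion of $25 and an assumed 8% reduction after a successful test.

```python
def conversions_for_budget(budget: float, cost_per_conversion: float) -> float:
    """Number of conversions a budget buys at a given cost per conversion."""
    return budget / cost_per_conversion


# Hypothetical figures, not benchmarks.
baseline_cpa = 25.00
improved_cpa = baseline_cpa * (1 - 0.08)  # assumed 8% drop in cost per conversion

for budget in (1_000, 10_000, 100_000):
    before = conversions_for_budget(budget, baseline_cpa)
    after = conversions_for_budget(budget, improved_cpa)
    print(f"${budget:>7,}: {before:,.0f} -> {after:,.0f} conversions (+{after - before:,.0f})")
```

The percentage gain is the same at every budget, but the absolute number of extra conversions grows in step with spend.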

Testing Facebook ads is not the same as testing a landing page or an email subject line. Ads run inside a dynamic delivery system where auction pressure, competition, and user behavior constantly change.

Because ads are delivered through real-time auctions, performance can shift quickly as bids, audiences, and competing ads change. Creatives also fatigue fast, so an ad that performs well today may decline within days. When tests are set up manually, audience overlap can distort results, and if the test ends before the delivery system stabilizes, early data can be misleading.

Because of this, Facebook A/B tests need enough time and budget to reach stable delivery and meaningful volume. Drawing conclusions too quickly often leads to incorrect decisions, even when early results appear strong.
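One way to put a number on "meaningful volume" is a standard sample size estimate for comparing two conversion rates. The sketch below uses the usual normal-approximation formula. It is a planning aid under stated assumptions (a two-sided test, 5% significance, 80% power), not something Meta's tool exposes, and the example rates are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, expected_rate: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed in EACH variant to detect the given difference."""
    if baseline_rate == expected_rate:
        raise ValueError("Rates must differ to estimate a sample size.")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    pooled = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * sqrt(2 * pooled * (1 - pooled))
        + z_beta * sqrt(baseline_rate * (1 - baseline_rate)
                        + expected_rate * (1 - expected_rate))
    ) ** 2
    return ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Hypothetical example: a 2.0% conversion rate that you hope to lift to 2.5%.
print(sample_size_per_variant(0.020, 0.025))
```

The smaller the difference you want to detect, the more volume each variant needs, which is why subtle changes call for longer tests or bigger budgets.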

Facebook ads offer many elements that can be tested, but effective A/B testing requires focus. Not every possible variable should be tested at once, and the most reliable tests isolate a single element that directly influences user behavior.

Visuals are often the first thing people notice. Testing images or videos can reveal surprising preferences.

Common creative tests include static image vs video, different backgrounds or color contrast, product-focused vs lifestyle visuals, and close-up details vs wide context shots.

Words shape perception and intent. Small copy changes can lead to large performance differences.

You might test short copy vs longer explanation, benefit-focused vs problem-focused messaging, informational tone vs urgency-driven tone, and direct language vs softer phrasing.

Headlines frame the message, while primary text sets expectations. Testing structure often works better than testing random wording.

Examples:

CTAs guide user behavior. While Facebook offers preset CTA buttons, the surrounding copy often matters just as much.

You can test action-oriented CTAs vs exploratory ones, urgency-based language vs neutral prompts, and instructional phrasing vs emotional triggers.

Audience tests can reveal who actually converts, not just who clicks.

Useful audience tests include:

Placement affects how ads are consumed. Stories, feeds, and other placements behave differently.

Placement tests help answer where users engage more naturally, where conversions cost less, and which placements drive low-quality clicks.

Facebook provides a structured way to run A/B tests through its Experiments tool by splitting audiences evenly into non-overlapping groups. This eliminates audience overlap, reduces bias, and keeps delivery fair across variations.

The typical flow looks like this:

The most important part is consistency. Both versions must run under the same conditions, with equal budget distribution and similar audience size.

A test is only as useful as the metric behind it. Choosing the wrong metric can lead you to optimize for the wrong outcome.

Common metrics include:

For example, optimizing for clicks alone can increase traffic while hurting sales. The metric must match the purpose of the campaign stage you are testing.
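The clicks-versus-sales trap is easier to see with numbers. The sketch below computes a few common metrics for two variants using invented figures. The class name `VariantResult` and its fields are assumptions for the example, not an Ads Manager export format.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Raw totals for one ad variant (hypothetical structure)."""
    name: str
    spend: float
    impressions: int
    clicks: int
    purchases: int
    revenue: float

    # The properties below assume non-zero impressions, clicks, purchases, and spend.
    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions

    @property
    def cpc(self) -> float:
        return self.spend / self.clicks

    @property
    def cost_per_purchase(self) -> float:
        return self.spend / self.purchases

    @property
    def roas(self) -> float:
        return self.revenue / self.spend


# Invented numbers: A attracts more clicks, B attracts more buyers.
variant_a = VariantResult("A", spend=500.0, impressions=50_000, clicks=1_000, purchases=20, revenue=1_600.0)
variant_b = VariantResult("B", spend=500.0, impressions=50_000, clicks=650, purchases=26, revenue=2_080.0)

for v in (variant_a, variant_b):
    print(f"{v.name}: CTR {v.ctr:.2%}, CPC ${v.cpc:.2f}, "
          f"cost per purchase ${v.cost_per_purchase:.2f}, ROAS {v.roas:.2f}")
```

Judged on CTR or CPC, variant A looks like the winner. Judged on cost per purchase or ROAS, variant B is clearly ahead, so the "right" answer depends entirely on which metric matches the goal of the campaign.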

A good Facebook ad A/B test should run for at least 7 days. That gives the algorithm enough time to exit the learning phase and reach stable delivery. Anything shorter often leads to misleading results, especially if your audience is small or your budget is limited. Seven days is a guideline rather than a guarantee, though: the actual duration should be based on reaching statistical significance, which depends on traffic volume and campaign goals.

A full week also helps account for fluctuations in user behavior across different days, like weekday vs weekend performance. If you're testing something with a longer sales cycle or a smaller conversion pool, you may need to run the test even longer to get results you can trust.
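For readers who want to check statistical significance themselves, a two-proportion z-test is one standard option. The sketch below is a generic textbook version using only the Python standard library. It is not the method Meta uses to declare a winner, and the conversion counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("Cannot test when no one (or everyone) converted.")
    z = (conv_b / n_b - conv_a / n_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Same conversion rates (2.0% vs 2.6%), very different amounts of data.
z_small, p_small = two_proportion_z_test(20, 1_000, 26, 1_000)
z_large, p_large = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"1,000 users per variant:  p = {p_small:.3f}")
print(f"10,000 users per variant: p = {p_large:.3f}")
```

With a conventional 0.05 threshold, the smaller test is inconclusive while the larger one is significant, even though the observed rates are identical. That is the practical reason duration should follow volume rather than the calendar alone.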

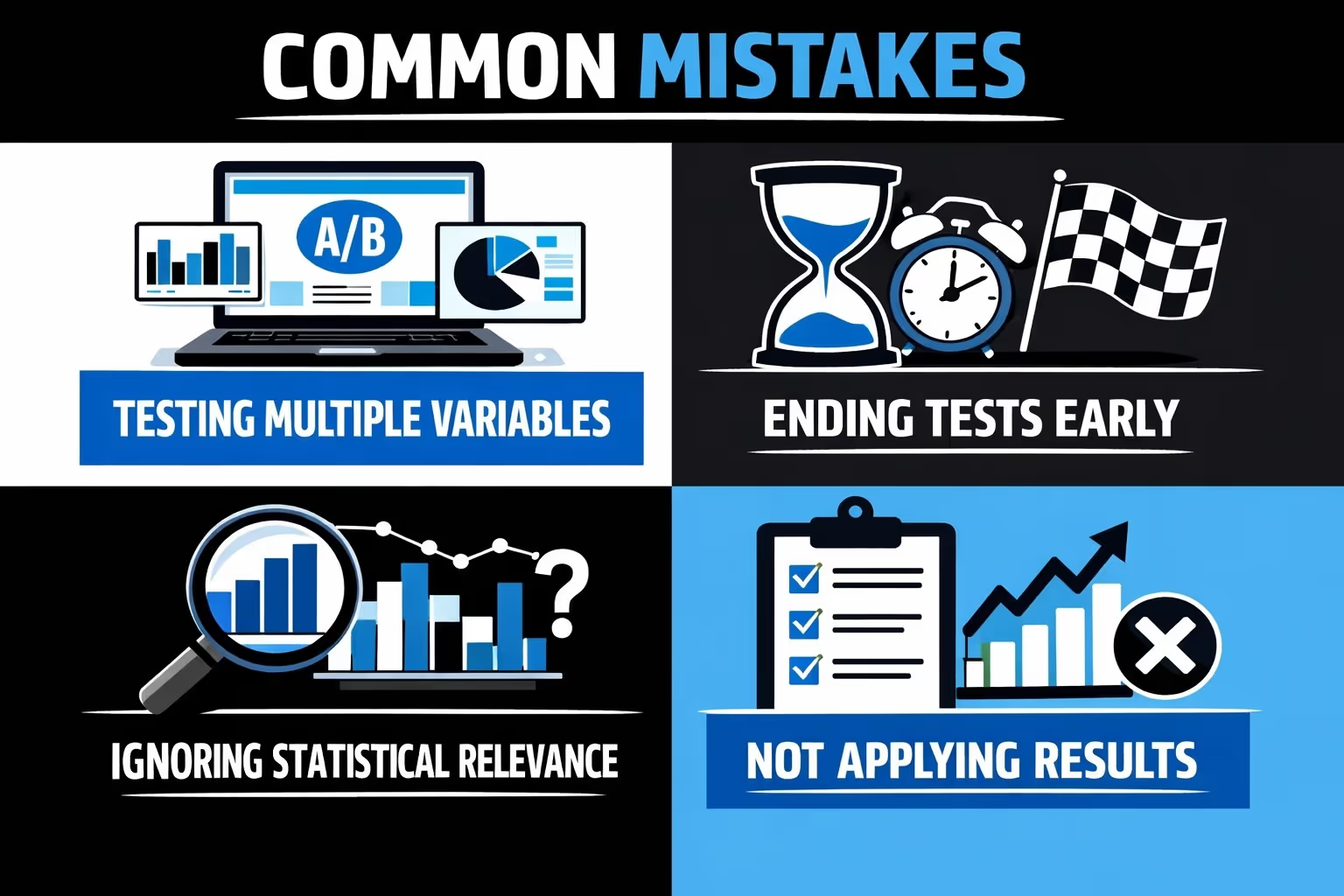

Even experienced advertisers make testing mistakes. Most issues come from trying to move too fast or test too much at once.

When you change several things in one test, like the image, the headline, and the CTA all at once, you end up with results you can’t really use. Even if one version performs better, there's no way to know which change actually made the difference. That kind of test might feel efficient, but it gives you nothing reliable to build on.
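If your team defines ad variants in a spreadsheet or config file, a small check can catch multi-variable tests before they launch. The sketch below compares two variant definitions and reports which fields differ. The field names (`image`, `headline`, `cta`, `audience`) are placeholders, not a real Ads Manager schema.

```python
def changed_fields(control: dict, variant: dict) -> list[str]:
    """List the fields whose values differ between two ad definitions."""
    keys = control.keys() | variant.keys()
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def is_single_variable_test(control: dict, variant: dict) -> bool:
    """A clean A/B pair changes exactly one element."""
    return len(changed_fields(control, variant)) == 1


# Hypothetical ad definitions.
control = {"image": "lifestyle.jpg", "headline": "Save time today", "cta": "Learn More", "audience": "lookalike-1"}
clean_variant = {**control, "headline": "Stop wasting time"}
messy_variant = {**control, "headline": "Stop wasting time", "image": "product.jpg", "cta": "Shop Now"}

print(changed_fields(control, clean_variant), is_single_variable_test(control, clean_variant))
print(changed_fields(control, messy_variant), is_single_variable_test(control, messy_variant))
```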

A lot of advertisers get excited when one version starts pulling ahead early, but short-term results are often just noise. Facebook’s delivery system takes time to even out, and performance can shift as the algorithm learns. Cutting a test too soon risks scaling the wrong ad and wasting more budget later.

Seeing a big jump in performance might look promising, but if it’s based on a tiny number of impressions or conversions, it’s probably not meaningful. Small sample sizes swing easily with a few clicks or sales. You need enough volume to be confident that what you're seeing is real and repeatable.
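A confidence interval makes the small-sample problem visible. The sketch below uses the Wilson score interval, a standard formula for proportions, to show how wide the plausible range is for the same 5% conversion rate at two different volumes. The counts are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes: int, trials: int, confidence: float = 0.95):
    """Wilson score interval for a proportion such as a conversion rate."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = successes / trials
    denom = 1 + z ** 2 / trials
    center = (p + z ** 2 / (2 * trials)) / denom
    margin = z * sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return center - margin, center + margin


# Same observed rate (5%), very different certainty.
for conversions, clicks in ((5, 100), (500, 10_000)):
    low, high = wilson_interval(conversions, clicks)
    print(f"{conversions}/{clicks}: plausible rate between {low:.1%} and {high:.1%}")
```

With only 100 clicks, the true rate could plausibly sit anywhere in a range several times wider than the one you get from 10,000 clicks, so an early "winner" may simply be the luckier variant.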

Running tests without acting on the results is more common than you’d think. Sometimes the winning variation never gets scaled, or teams move on without adjusting their broader strategy. If a test doesn’t change how you plan or spend, then it was just busywork, not a real improvement.

If you're testing things just to see what happens, chances are you won’t learn anything useful. A good test starts with a simple, focused question – something you’re actually trying to confirm or disprove. Without that clarity, even if one version wins, you won’t know why, and the insight won’t carry forward.

Facebook ads support different stages of the customer journey. Testing should match where the user is mentally.

At the top of the funnel, tests often focus on attention and interest.

You might test:

In the middle of the funnel, clarity and trust matter more.

Tests may include:

At the bottom of the funnel, friction reduction matters most.

Effective tests include:

Testing isn’t something you do once and check off. Audiences shift, creative trends evolve, and competition doesn’t stand still. It makes sense to start every new campaign with a fresh round of testing.

Then, every few months, revisit major creative elements to see if they still hold up. And if your performance starts to plateau, that’s usually a sign it’s time to test again. Just because something worked last quarter doesn’t mean it’ll keep working today.

Data can be tempting. Seeing a big performance swing often triggers immediate action. Good testers resist that urge.

When reviewing results, look at trends, not spikes, compare cost efficiency, not just volume, and consider audience quality, not just clicks.
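Looking at trends rather than spikes can be as simple as smoothing daily numbers with a rolling average. The sketch below applies a 7-day window to daily cost per conversion. The daily figures are invented, and the window length is an assumption you would tune to your own reporting cycle.

```python
def rolling_mean(values: list[float], window: int = 7) -> list[float]:
    """Average of each consecutive `window`-day stretch (no partial windows)."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


# Hypothetical daily cost per conversion, with one unusually cheap day (day 5).
daily_cpa = [24.0, 25.5, 23.8, 26.1, 12.0, 24.9, 25.2, 24.4, 25.8, 24.1]

print("Lowest single day:", min(daily_cpa))
print("7-day rolling averages:", [round(v, 2) for v in rolling_mean(daily_cpa)])
```

The single cheap day looks dramatic on its own, but the rolling averages move far less, which is a better basis for a budget decision.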

Testing is about long-term learning, not chasing short-term wins.

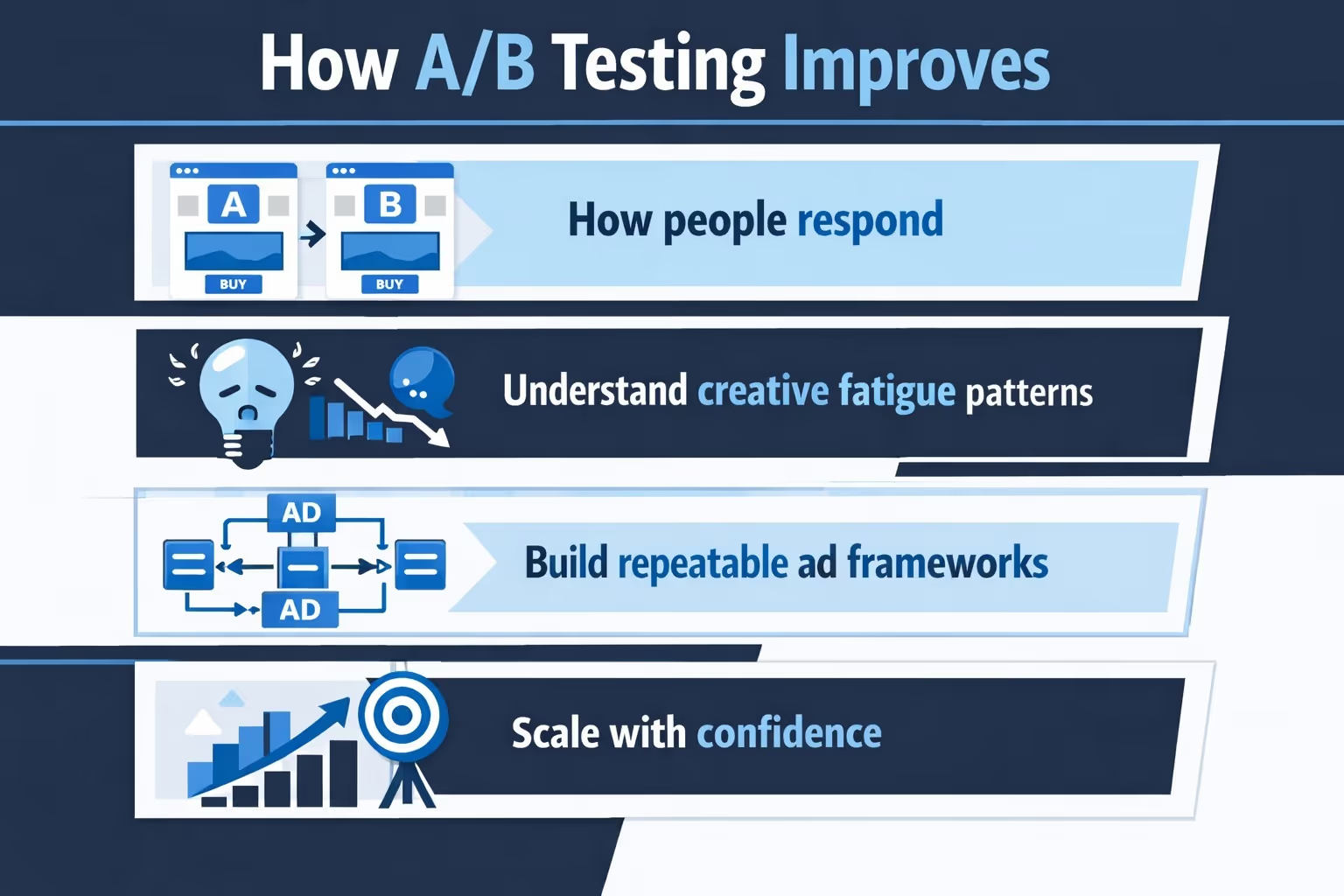

The real value of A/B testing is not a single winning ad. It is the accumulated understanding of your audience.

Over time, testing helps you:

This is what separates consistent advertisers from lucky ones.

A/B testing in Facebook ads is not complicated, but it does require discipline. The rules are simple: change one thing, measure it properly, and give the test enough time to mean something.

When done right, testing becomes less about finding magic ads and more about building a reliable system. You stop guessing. You stop reacting emotionally to performance swings. You make decisions based on evidence.

That shift alone can save budget, improve results, and make Facebook advertising feel far more predictable than it usually does.

In a platform that changes constantly, A/B testing is one of the few things that keeps your strategy grounded in reality.