Top Meta Ads Management Agencies in Nashville to Grow Your Business

Boost your Meta ad results with Nashville’s top agency. Smart targeting, AI insights, and real-world performance you can trust.

Most Facebook ad accounts don’t fail loudly. They drift. Costs inch up, results flatten, and suddenly you’re spending more time reacting than improving.

Optimization isn’t about chasing every new setting Meta releases. It’s about keeping control while the platform automates more of the work. This guide focuses on how to spot issues early, make cleaner decisions, and keep performance steady instead of swinging week to week. No tricks. Just a grounded way to think about Facebook ads optimization as it actually works today.

At Extuitive, we believe Facebook ads optimization should begin before spend, not after. The traditional loop of launching ads, spending to learn, and then reacting is slow and costly. In today’s environment, too much budget is burned discovering what does not work, and too little learning carries forward.

We built Extuitive to answer a more useful question early: which creatives are most likely to drive higher CTR and stronger ROAS before launch. Predictive ad performance shifts optimization upstream, helping teams make better decisions before budget is committed.

Extuitive is a predictive advertising intelligence platform trained on your brand’s real performance data and enriched with large-scale consumer intelligence. Performance is contextual, so predictions are specific to your business, not generic benchmarks.

In practice, Extuitive:

Predictive ad performance reduces wasted testing and shortens feedback loops. Creative teams get faster signal on direction. Growth teams push more budget toward ads that are more likely to perform. Leadership teams gain predictability instead of constant guesswork.

In a world where platforms are opaque and learning is expensive, prediction is how optimization stays controlled instead of reactive.

A common mistake is treating optimization as a series of actions. Change the bid. Refresh the creative. Expand the audience.

A more useful way to think about it is system maintenance.

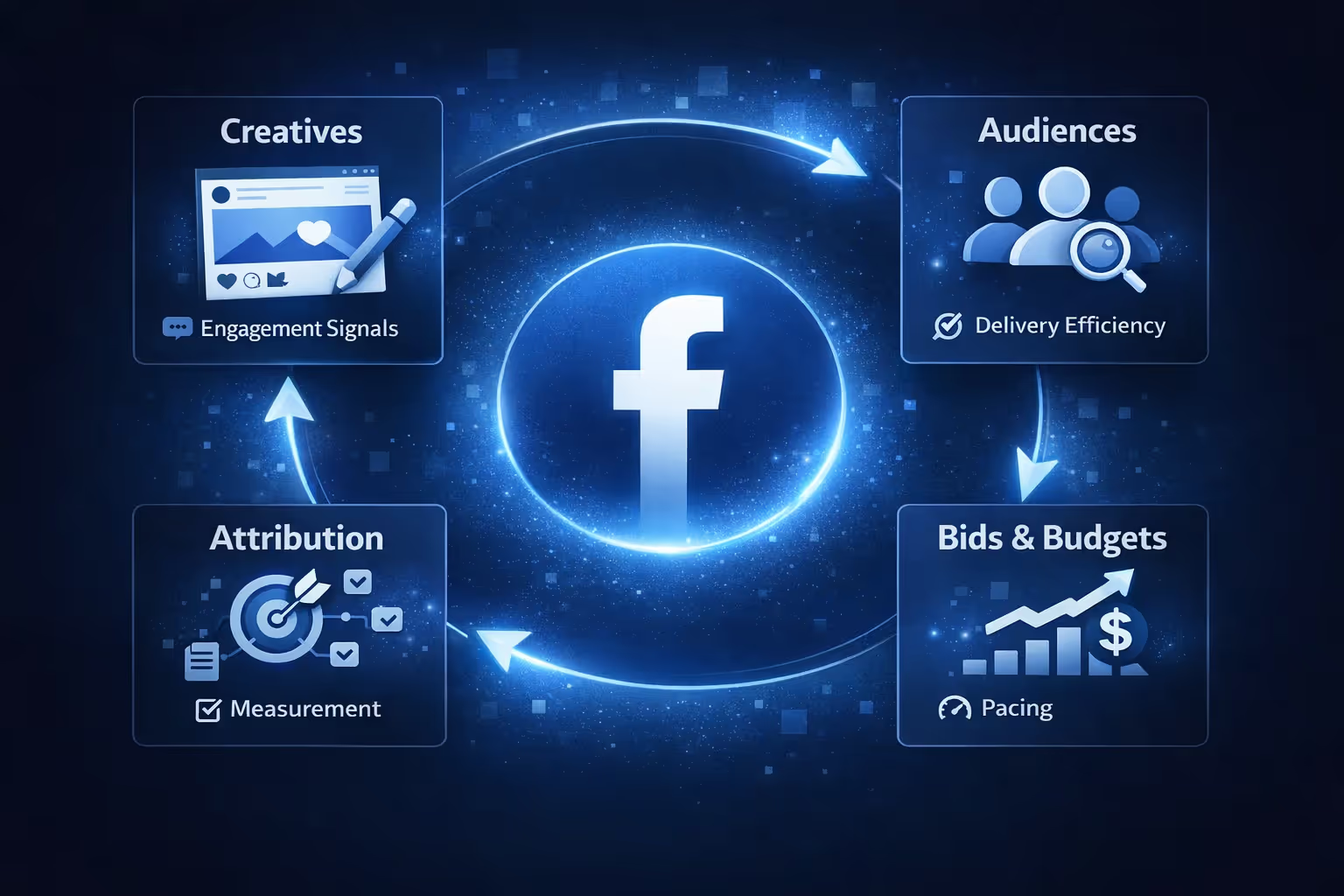

Every Facebook ad account is a system made up of inputs and feedback loops. Creatives feed engagement signals. Audiences shape delivery efficiency. Budgets and bids influence pacing. Attribution determines what looks successful.

When one part of the system weakens, the rest compensates. That is when drift begins.

Strong optimization is not constant change. It is making sure each part of the system keeps sending clean, consistent signals.

Structure matters more now than it did a few years ago. With fewer manual controls and heavier automation, messy accounts generate messy signals.

The goal of structure is not granularity. It is clarity.

Consolidated campaigns work best when:

Fewer campaigns mean more data per campaign. That helps the algorithm learn and reduces volatility.
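To make "more data per campaign" concrete, here is a small arithmetic sketch. It assumes the commonly cited Meta guidance that an ad set needs roughly 50 optimization events within 7 days to leave the learning phase; treat that number as an approximation and check the current documentation. The helper names and the 120-event example are hypothetical.

```python
# Hypothetical helpers: how splitting a fixed weekly event volume across
# ad sets affects whether each one has enough data to learn.
LEARNING_THRESHOLD = 50  # assumed ~50 optimization events per ad set per 7 days


def events_per_ad_set(weekly_events: int, ad_sets: int) -> float:
    """Average weekly optimization events per ad set if volume splits evenly."""
    if ad_sets < 1:
        raise ValueError("ad_sets must be at least 1")
    return weekly_events / ad_sets


def max_ad_sets(weekly_events: int, threshold: int = LEARNING_THRESHOLD) -> int:
    """Largest number of ad sets that can each reach the threshold (never below 1)."""
    return max(weekly_events // threshold, 1)


weekly = 120  # illustrative weekly conversion volume for the whole account
for n in (1, 2, 3, 6):
    per_set = events_per_ad_set(weekly, n)
    status = "enough" if per_set >= LEARNING_THRESHOLD else "too thin"
    print(f"{n} ad set(s): {per_set:.0f} events each ({status})")
print("max ad sets:", max_ad_sets(weekly))
```

With 120 weekly events, two ad sets still clear the assumed threshold, but splitting into three leaves each with about 40, which is the volatility the paragraph above describes.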

Segmentation is still useful when:

The mistake is splitting for the sake of control. If separation does not create better decisions, it usually creates noise.

Facebook optimizes toward what you tell it to value. If that does not match your business reality, the system will drift even if metrics look fine.

Traffic campaigns are designed to find people who click easily. That does not mean they buy, sign up with intent, or move meaningfully down the funnel. Click volume can look healthy while real results stall, especially in performance-focused campaigns.

Engagement campaigns prioritize likes, comments, and shares. These signals can help with visibility or social proof, but they rarely correlate with revenue. Optimizing for engagement trains the system to find people who interact, not people who convert.

Lead campaigns often optimize for form completion speed, not lead quality. Without downstream signals like qualified leads or CRM feedback, the algorithm learns to prioritize easy submissions over genuine intent. Volume increases, but value quietly declines.

Before launching any campaign, ask a simple question: What behavior do I actually want the system to find more of?

If the answer is purchases, make sure your purchase signal is strong enough to support that. If it is not, the algorithm will optimize toward proxies instead.
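One way to make the proxy decision deliberate rather than accidental is to pick the deepest funnel event that still clears a minimum weekly volume. The event names below are Meta standard events, but the counts, the 50-per-week floor, and the function are illustrative assumptions, not values from this guide or from Meta.

```python
# Funnel events ordered from deepest (most valuable) to shallowest.
FUNNEL = ["Purchase", "InitiateCheckout", "AddToCart", "ViewContent"]


def choose_optimization_event(weekly_counts: dict[str, int], min_weekly: int = 50) -> str:
    """Return the deepest event whose weekly volume meets min_weekly.

    Falls back to the shallowest event if none qualify (hypothetical policy).
    """
    for event in FUNNEL:
        if weekly_counts.get(event, 0) >= min_weekly:
            return event
    return FUNNEL[-1]


# Illustrative weekly counts for one account
counts = {"Purchase": 18, "InitiateCheckout": 65, "AddToCart": 240, "ViewContent": 3100}
print(choose_optimization_event(counts))  # InitiateCheckout
```

Here purchases are too sparse, so the sketch lands on InitiateCheckout: still a proxy, but one you chose knowingly.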

Objective selection is not a setting. It is a commitment.

Facebook can only optimize what it can see. When signals weaken, the system starts guessing, and that guesswork leads to unstable delivery. Privacy changes, browser restrictions, and device-level limits all reduce event visibility, which creates a few predictable problems:

You do not need perfect tracking to counter this, but you do need consistency. Stronger signal hygiene comes from sending key events reliably, avoiding duplicate or broken events, passing basic identifiers when possible, and keeping event priorities aligned with actual funnel value. Clean signals do not guarantee performance, but weak signals almost guarantee instability.
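"Avoiding duplicate events" has a concrete shape. Meta's Conversions API documentation describes deduplicating browser and server copies of the same event by a shared `event_name` and `event_id`. The sketch below applies that same idea locally to a batch before sending; the `Event` type and `dedupe` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    event_name: str
    event_id: str  # ID shared by the browser and server copies of one event
    source: str    # "browser" or "server"; illustrative only


def dedupe(events: list[Event]) -> list[Event]:
    """Keep the first occurrence of each (event_name, event_id) pair, preserving order."""
    seen: set[tuple[str, str]] = set()
    unique = []
    for e in events:
        key = (e.event_name, e.event_id)
        if key not in seen:
            seen.add(key)
            unique.append(e)
    return unique


batch = [
    Event("Purchase", "order-1001", "browser"),
    Event("Purchase", "order-1001", "server"),    # same purchase reported twice
    Event("Purchase", "order-1002", "server"),
    Event("AddToCart", "order-1001", "browser"),  # different event name, so kept
]
print(len(dedupe(batch)))  # 3
```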

Creative fatigue is rarely obvious when it starts. It shows up slowly and quietly. Click-through rates soften, frequency climbs, and costs begin to edge upward. Nothing looks broken at a glance, but performance is already eroding underneath.

The biggest mistake teams make is waiting for clear failure. By the time results drop sharply, the algorithm has already adjusted delivery and audiences are often oversaturated.

Every creative moves through a predictable lifecycle. It launches, gathers early engagement, settles into a period of stable performance, and then gradually declines as attention fades. Eventually, delivery becomes inefficient and costs rise.

Different formats decay at different speeds. Static images tend to fatigue faster, while video often lasts longer due to higher engagement. Even strong video, however, eventually loses effectiveness.

The key is understanding when decline usually begins in your account and rotating creatives before performance drops hard. Planned refreshes preserve stability far better than reactive swaps after results collapse.
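Rotating before performance drops hard is easier with an early-warning rule. This sketch compares a creative's recent CTR with its own stable-period baseline and flags it when CTR has slipped past a threshold while frequency keeps climbing. The 15% drop, the window lengths, and the function name are assumptions to tune against your account's history.

```python
from statistics import mean


def fatigue_warning(daily_ctr: list[float], daily_frequency: list[float],
                    baseline_days: int = 7, recent_days: int = 3,
                    max_ctr_drop: float = 0.15) -> bool:
    """Flag a creative for refresh before results collapse.

    Baseline = mean CTR over the first `baseline_days`; recent = mean CTR over
    the last `recent_days`. Warns when recent CTR is more than `max_ctr_drop`
    below baseline AND recent frequency is higher than baseline frequency.
    """
    if len(daily_ctr) < baseline_days + recent_days or len(daily_frequency) != len(daily_ctr):
        return False  # not enough history to judge
    baseline_ctr = mean(daily_ctr[:baseline_days])
    if baseline_ctr <= 0:
        return False
    recent_ctr = mean(daily_ctr[-recent_days:])
    ctr_drop = (baseline_ctr - recent_ctr) / baseline_ctr
    frequency_rising = mean(daily_frequency[-recent_days:]) > mean(daily_frequency[:baseline_days])
    return ctr_drop > max_ctr_drop and frequency_rising


# Illustrative data: a week of steady CTR, then a slow slide as frequency climbs
ctr = [0.020] * 7 + [0.019, 0.018, 0.016, 0.016, 0.015]
freq = [1.2] * 7 + [1.8, 2.0, 2.2, 2.4, 2.6]
print(fatigue_warning(ctr, freq))  # True
```

Nothing in that series looks broken on any single day, yet recent CTR is about 22% below baseline, which is the quiet erosion described above.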

Testing does not mean flooding the system with endless variations and hoping something sticks. When too many elements change at once, results become noisy and hard to trust. Effective creative testing is about control, not volume.

Effective testing follows a few clear principles:

Creative testing should produce answers you can reuse. If a test does not tell you why something worked or failed, it only adds uncertainty and makes optimization harder instead of easier.
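A lightweight way to enforce "control, not volume" is to check a test plan before launch: each variant should differ from the control in exactly one element, so any difference in results has a single candidate explanation. The element names and values below are hypothetical.

```python
Creative = dict[str, str]  # element name -> value, e.g. {"hook": ..., "visual": ...}


def changed_elements(control: Creative, variant: Creative) -> set[str]:
    """Names of elements whose value differs between control and variant."""
    keys = control.keys() | variant.keys()
    return {k for k in keys if control.get(k) != variant.get(k)}


def validate_test_plan(control: Creative, variants: list[Creative]) -> list[str]:
    """Return a list of problems; an empty list means every variant isolates one element."""
    problems = []
    for i, v in enumerate(variants):
        diff = changed_elements(control, v)
        if len(diff) != 1:
            problems.append(f"variant {i} changes {sorted(diff) or 'nothing'}")
    return problems


control = {"hook": "hook A", "visual": "product demo", "cta": "Shop now"}
variants = [
    {**control, "hook": "hook B"},                         # one change: readable result
    {**control, "hook": "hook C", "visual": "testimonial"},  # two changes: ambiguous
]
print(validate_test_plan(control, variants))  # ["variant 1 changes ['hook', 'visual']"]
```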

Facebook pushes automation because it works well at scale. When data quality is strong and volume is high, automation can outperform manual control. Problems start when automation is treated as a strategy instead of a delivery mechanism.

The key is knowing when to let the system run and when human judgment needs to step in.

Automation should handle execution. Humans should handle direction. Optimization works best when the system is trusted to do what it is good at, while people step in to protect efficiency, interpret signals, and make judgment calls. Blind trust in automation is not strategy.

Budget changes are one of the fastest ways to destabilize campaigns. Large increases can reset learning and force the system to re-evaluate delivery from scratch. Sudden cuts cause a different problem: they starve the algorithm of data and slow optimization. Both scenarios introduce noise that makes performance harder to read and control.

When budgets move too quickly, the system spends more time adjusting than optimizing, which often shows up as short-term volatility and rising costs.

Scaling works best when budgets increase in small, controlled steps. Gradual changes give the system time to absorb additional spend without resetting learning or introducing unnecessary volatility.
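"Small, controlled steps" can be turned into an explicit schedule. This sketch assumes each increase is capped at 20% above the previous budget; the guide does not prescribe a percentage, so the step size is a placeholder to calibrate against how your campaigns react. Each step is meant to be applied only after performance has settled at the previous level.

```python
def budget_schedule(current: float, target: float, step_pct: float = 0.20) -> list[float]:
    """Daily budgets to move through, each at most `step_pct` above the previous one.

    The final entry is `target`; amounts are rounded to cents.
    """
    if current <= 0 or step_pct <= 0:
        raise ValueError("current budget and step size must be positive")
    steps = []
    budget = current
    while budget < target:
        budget = min(budget * (1 + step_pct), target)  # never overshoot the target
        steps.append(round(budget, 2))
    return steps


print(budget_schedule(100, 200))  # [120.0, 144.0, 172.8, 200]
```

Doubling a budget this way takes four separate moves instead of one jump, which trades speed for fewer learning resets.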

Performance should remain stable for several days before any budget increase. Scaling on top of unstable results compounds noise and makes it harder to understand whether changes are helping or hurting performance.
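"Stable for several days" is easier to apply consistently with a written rule. This sketch uses the coefficient of variation (standard deviation divided by mean) of daily CPA over a recent window; the 5-day window and 0.15 cutoff are assumptions, not figures from this guide.

```python
from statistics import mean, pstdev


def stable_enough_to_scale(daily_cpa: list[float], window: int = 5, max_cv: float = 0.15) -> bool:
    """True if daily CPA over the last `window` days varies little relative to its mean.

    Uses the coefficient of variation: population standard deviation / mean.
    """
    if len(daily_cpa) < window:
        return False  # not enough days to call it stable
    recent = daily_cpa[-window:]
    avg = mean(recent)
    if avg <= 0:
        return False
    return pstdev(recent) / avg <= max_cv


print(stable_enough_to_scale([42, 40, 43, 41, 42]))  # True  (CV is about 0.02)
print(stable_enough_to_scale([30, 55, 38, 61, 35]))  # False (CV is about 0.27)
```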

Additional budget only works when there is enough strong creative to absorb it. Without fresh or scalable creatives, higher spend accelerates fatigue instead of driving more conversions.

Signals need to remain consistent during scaling. Changes in tracking quality, event priorities, or attribution windows confuse the algorithm and reduce its ability to optimize efficiently.

Not every campaign is built to scale indefinitely. Some hit natural ceilings where additional budget simply buys lower-quality impressions. Forcing scale beyond that point usually increases cost without increasing value.
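A ceiling usually shows up as rising marginal cost: each extra block of spend buys fewer additional conversions than the last. The sketch below computes marginal CPA between successive budget levels. The spend and conversion figures are invented for illustration, and how much marginal CPA you tolerate is a business decision.

```python
def marginal_cpa(levels: list[tuple[float, float]]) -> list[float]:
    """Cost per *additional* conversion between consecutive (spend, conversions) levels.

    Returns inf when extra spend produced no extra conversions.
    """
    result = []
    for (s0, c0), (s1, c1) in zip(levels, levels[1:]):
        extra_conv = c1 - c0
        result.append((s1 - s0) / extra_conv if extra_conv > 0 else float("inf"))
    return result


# (daily spend, daily conversions) at successive budget levels; illustrative data
levels = [(500, 25), (600, 29), (720, 32), (860, 33)]
print(marginal_cpa(levels))  # [25.0, 40.0, 140.0]
```

In this example the blended CPA at the top level is still about 26 (860 / 33), while the last step cost 140 per extra conversion. Averages can hide a ceiling that marginal numbers make obvious.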

In those cases, stability is a better outcome than growth. Holding spend steady preserves efficiency and creates space to address structural limits before pushing further.

Most performance issues appear long before results collapse. They show up as small shifts that are easy to ignore when looking at dashboards day to day. By the time numbers clearly look bad, the underlying problem has usually been in place for a while.

Optimization works best when you notice these early signals and respond calmly instead of reacting after damage is done.

A decline in click-through rate while impressions remain stable usually points to creative fatigue. The audience is still available at the same scale, but fewer people are engaging with the message. In this situation, structural changes or budget adjustments rarely help. A creative refresh is usually the right response.

If cost per acquisition increases while conversion volume remains steady, attribution lag or reporting delays are often involved. Modeled conversions, delayed attribution, or changes in reporting windows can distort short-term performance without reflecting a true decline in value.

Before making changes, compare performance across longer windows and relative benchmarks rather than reacting to daily fluctuations.
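A single bad day looks dramatic on its own but often fades when set against a longer baseline. This sketch compares the mean CPA of the last few days with the mean of a longer preceding window. The 3-day and 14-day windows are assumptions; choose windows that cover your typical attribution delay.

```python
from statistics import mean


def compare_windows(daily_cpa: list[float], short: int = 3, long: int = 14) -> float:
    """Relative change of recent CPA versus a longer preceding baseline.

    Returns (mean of last `short` days) / (mean of the `long` days before them) - 1.
    """
    if len(daily_cpa) < short + long:
        raise ValueError("need at least short + long days of data")
    recent = daily_cpa[-short:]
    baseline = daily_cpa[-(short + long):-short]
    base = mean(baseline)
    if base <= 0:
        raise ValueError("baseline CPA must be positive")
    return mean(recent) / base - 1


history = [40.0] * 14 + [40.0, 58.0, 41.0]  # one spiky day at the end; illustrative
print(round(compare_windows(history), 3))  # 0.158
```

The spike day alone reads as +45% (58 vs 40), but the three-day window is up about 16%, which is a much weaker prompt for action.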

A sudden increase in CPM without changes to targeting or bids often points to declining signal quality or increased uncertainty in matching events to users. In these cases, checking tracking health and event consistency is usually more productive than changing creative or audience settings.
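The three patterns above can be written down as a first-pass triage function, so diagnosis happens before anyone touches settings. Each input is a relative change versus a baseline period (for example, +0.12 for a 12% rise). The ±10% cutoff and the function itself are illustrative assumptions; real accounts will need more nuance.

```python
def triage(ctr_change: float, impressions_change: float, cpa_change: float,
           conversions_change: float, cpm_change: float,
           targeting_or_bids_changed: bool, threshold: float = 0.10) -> list[str]:
    """Map relative metric changes to the likely causes described in the text."""
    def stable(x: float) -> bool:
        return abs(x) < threshold

    findings = []
    # CTR falls while impressions hold steady -> creative fatigue
    if ctr_change <= -threshold and stable(impressions_change):
        findings.append("likely creative fatigue: refresh creative")
    # CPA rises while conversion volume holds steady -> attribution lag or reporting delay
    if cpa_change >= threshold and stable(conversions_change):
        findings.append("possible attribution lag: compare longer windows before acting")
    # CPM jumps with no targeting or bid change -> signal quality
    if cpm_change >= threshold and not targeting_or_bids_changed:
        findings.append("possible signal-quality issue: check tracking and event health")
    return findings or ["no pattern matched: keep monitoring"]


print(triage(ctr_change=-0.18, impressions_change=0.02, cpa_change=0.03,
             conversions_change=-0.01, cpm_change=0.04, targeting_or_bids_changed=False))
# ['likely creative fatigue: refresh creative']
```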

Optimization starts with diagnosis. Action comes second.

Retargeting is often where optimization drift turns into audience fatigue. When the same people see the same message too often, performance erodes quietly. Engagement drops, costs rise, and ads start to feel intrusive instead of relevant.

This usually comes from overexposure, repetitive messaging, or poor sequencing. Retargeting audiences are smaller, which means frequency climbs faster. Without active monitoring, even strong offers lose effectiveness.

Effective retargeting respects frequency, evolves messaging as intent changes, and excludes recent converters so budget is not wasted on people who have already acted. Its role is to support the main funnel by continuing the conversation, not competing with prospecting campaigns. When retargeting feels like a natural next step rather than pressure, performance stays stable and drift is easier to avoid.
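Two of those practices, excluding recent converters and respecting frequency, can be sketched as a filter over a retargeting pool. The 30-day exclusion window, the cap of 3 impressions per 7 days, and the data shapes are hypothetical. In practice you would express these through the platform's audience exclusions and any available frequency controls rather than custom code.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Visitor:
    user_id: str
    last_purchase: Optional[date]  # None if the user has never converted
    impressions_last_7_days: int


def eligible_for_retargeting(pool: list[Visitor], today: date,
                             exclude_days: int = 30, max_freq_7d: int = 3) -> list[str]:
    """IDs of visitors who have not converted recently and have not hit the frequency cap."""
    cutoff = today - timedelta(days=exclude_days)
    eligible = []
    for v in pool:
        recently_converted = v.last_purchase is not None and v.last_purchase >= cutoff
        over_cap = v.impressions_last_7_days >= max_freq_7d
        if not recently_converted and not over_cap:
            eligible.append(v.user_id)
    return eligible


today = date(2024, 6, 1)
pool = [
    Visitor("a", None, 1),               # never bought, low exposure: eligible
    Visitor("b", date(2024, 5, 20), 0),  # bought 12 days ago: excluded
    Visitor("c", None, 5),               # seen 5 times this week: capped
    Visitor("d", date(2024, 3, 1), 2),   # bought months ago: eligible again
]
print(eligible_for_retargeting(pool, today))  # ['a', 'd']
```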

Facebook ads optimization is not about clever moves or constant intervention. It is about discipline. Clear structure keeps learning focused. Clean signals give automation something reliable to work with. Predictable testing prevents chaos, and controlled budgets reduce unnecessary volatility.

When these basics are in place, campaigns do not need constant fixing. Performance stays balanced, changes are easier to interpret, and scaling becomes deliberate instead of reactive. That consistency is what prevents drift over time.

The hardest part of Facebook ads is not launching campaigns. It is keeping them from slowly sliding off course.

Optimization today is less about squeezing performance and more about preserving it. The teams that win are not the ones making the most changes. They are the ones making the right changes at the right time.

If you build systems that favor clarity over complexity, your campaigns will not just perform. They will stay steady.

And in a platform designed for constant motion, stability is a real advantage.