Best Agencies for Social Media Creative Testing

A practical list of agencies that specialize in testing social media creatives, improving ad performance through data, iteration, and real audience signals.

Ever poured money into a Facebook ad you thought would work, only to watch it tank? You're not alone. Running ads is half art, half science, but guessing isn’t a strategy. That’s where A/B testing comes in. It’s not just for split-testing headlines or button colors anymore. Done right, it’s your fastest route to understanding what actually drives clicks, conversions, and real return on ad spend.

In this guide, we’ll break down how to use Facebook’s built-in tools, when to go manual, what to test first, and how to avoid the classic traps marketers still fall into (looking at you, early test stoppers). Let’s get into it.

At Extuitive, we believe the "spend-to-learn" model is a relic of the past. Traditional A/B testing forces brands to waste up to 30% of their budget on underperforming ads just to find a signal. We’ve replaced this trial-and-error cycle with predictive advertising – a structural shift that identifies winners before you launch.

Using a polyintelligence framework, our engine unifies your brand’s historical performance with a proprietary dataset of 150,000+ AI agent consumers. This allows us to classify every asset as high, medium, or low performing in minutes. By scoring for CTR and ROAS pre-launch, we help brands cut CPA in half and quadruple creative throughput.

Predictive Advertising moves beyond platform "black boxes" to create a permanent memory layer for your brand. Instead of a "spray and pray" approach, you can eliminate weak assets and launch with the intention to scale. The era of guessing is over; the era of predictive advertising has begun.

A/B testing on Facebook means running two or more versions of an ad while changing only one variable at a time, such as the image, headline, call-to-action, audience, or placement. When you use Meta’s built-in A/B testing tools, the platform ensures that each person sees only one version, helping keep the results clean. With manual setups, this requires proper audience exclusions to avoid overlap.

The goal is simple: figure out which version performs better based on a clear metric like cost per purchase, click-through rate, or return on ad spend (ROAS). But the goal behind the goal is even more important: build a repeatable process for scaling winners and cutting waste fast.

You’d think with all the AI tools and automation available, testing would be a solved problem by now. It’s not. In fact, testing is more important than ever.

Bottom line: if you’re not testing, you’re flying blind.

Facebook (now Meta) offers a built-in A/B testing tool. It’s accessible through Ads Manager under the “Experiments” section. The tool splits your audience cleanly and tracks results automatically, but you’ll need to ensure equal budgets manually for a fair comparison. It’s great for audience testing or when you want simplicity.

Manual testing, on the other hand, gives you full control. You create separate ad sets or campaigns and adjust variables yourself. This is useful for testing creative variations, landing pages, or strategies Facebook’s tool doesn’t support directly.

Use the built-in tool when:

Use manual testing when:

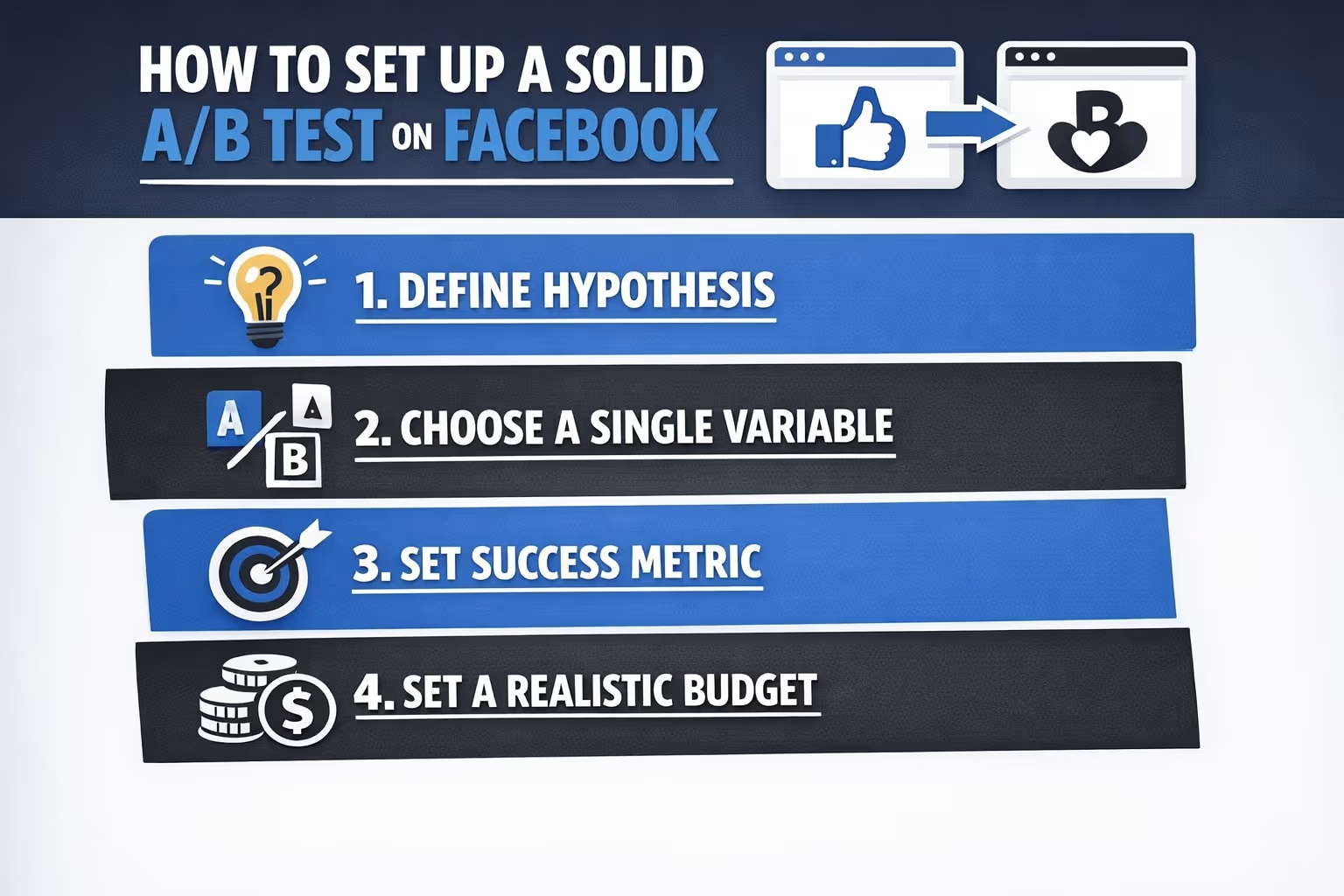

If your tests aren’t giving you clear answers, there’s a good chance the setup is off. Here’s how to do it right.

Before you set anything live, be clear on what you’re actually trying to learn. Testing without a clear question behind it tends to produce noise, not insight. For example, you might want to know whether video ads drive better engagement than static images. Or maybe you’re wondering if your warm audience converts more efficiently than your cold lookalikes. Sometimes it's as simple as testing whether a tighter, punchier line of copy will lift your click-through rate compared to a longer, more detailed version. The important thing is: start with a focused hypothesis you can act on.

This one’s non-negotiable. If you’re changing audience, placement, creative, and copy all at once, you won’t be able to trust the outcome. Stick to one change per test. If you’re testing a new CTA, don’t also change the image. If you’re targeting a new audience, leave the creative exactly as-is. Controlled testing only works when you keep everything else consistent. That’s what allows you to isolate what actually made the difference.

It’s tempting to wait and see which number “looks good,” but you’ll end up chasing vanity metrics if you don’t decide on a success measure upfront. What matters depends on your goal. If you’re looking for sales, cost per purchase or return on ad spend should lead. If you’re building awareness or testing hooks, click-through rate might be the right focus. Whatever it is, pick one main metric and don’t keep switching mid-test just because another number looks better.

Budget plays a bigger role in A/B testing than most people think. If you want results you can trust, you’ll need enough volume to reach statistical confidence. As a general rule, aim for at least 50 conversions per variation, but use a statistical calculator to confirm whether you’ve reached significance. If your product is expensive or conversion rates are low, you may need to target earlier signals like “Add to Cart” or “Landing Page View” instead. The key is to make sure each variation gets a fair shot with enough data behind it.
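To make the budget math concrete, here is a minimal back-of-the-envelope sketch. The function name `estimate_test_budget`, the $20 expected cost per conversion, and the 7-day window are illustrative assumptions, not figures from Meta; swap in your own numbers.

```python
import math

def estimate_test_budget(target_conversions, expected_cpa, variants=2, days=7):
    """Rough spend needed for every variation to reach the conversion target.

    expected_cpa is your own estimate of cost per conversion (an assumption).
    """
    per_variant = target_conversions * expected_cpa
    total = per_variant * variants
    # Round the daily figure up to the next cent so the target is not undershot.
    daily_per_variant = math.ceil(per_variant / days * 100) / 100
    return {
        "per_variant": per_variant,
        "total": total,
        "daily_per_variant": daily_per_variant,
    }

# Hypothetical example: 50 conversions per variation at roughly $20 each.
plan = estimate_test_budget(target_conversions=50, expected_cpa=20.0)
print(plan)
```

If the total looks out of reach, that is usually the signal to test against an earlier event such as "Add to Cart", where the cost per result is lower.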

Patience matters here. A/B tests that only run for a few days often deliver misleading results. Facebook’s algorithm takes time to stabilize, and consumer behavior shifts throughout the week. Let your test run for at least 7 days as a baseline, or longer if needed to reach statistical significance. Don’t pause it halfway through or make mid-test tweaks just because performance dips for a day. Let the full cycle play out before you make a call – you’ll get cleaner, more reliable insights that way.

You could test anything, but not everything is worth it. Here’s how to prioritize.

Start with high-impact variables:

Avoid:

Plenty of advertisers think they’re testing, but here’s where it usually goes wrong:

When running manual tests, it’s easy to forget to exclude overlapping audience segments. The result? You end up bidding against yourself. This not only inflates your costs, but also skews your data. If both variations are hitting the same people, you won’t get a clean read on which one actually performed better.

It’s tempting to stop a test early when one version starts pulling ahead after a couple of days. But early leads can be misleading. Day 2 results often reflect random variation, not a meaningful trend. Let the test run long enough to smooth out short-term noise. Otherwise, you’re just reacting to luck.
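A small simulation can show why early leads are unreliable. The sketch below runs "A/A" tests, where both ads are identical, and compares how often a "winner" shows up if you check every day versus only once at the end. The traffic volume, the 5% conversion rate, the 14-day length, and the 1.96 cutoff (roughly 95% confidence) are all assumptions chosen for illustration.

```python
import math
import random

def z_score(conv_a, n_a, conv_b, n_b):
    """Two-proportion z statistic using a pooled standard error."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0
    return (conv_a / n_a - conv_b / n_b) / se

def simulate_aa_test(rng, days=14, visitors_per_day=300, rate=0.05):
    """Run one test of two identical ads.

    Returns (significant_on_any_day, significant_on_final_day).
    """
    conv_a = conv_b = n = 0
    peeked = False
    significant = False
    for _ in range(days):
        n += visitors_per_day
        conv_a += sum(rng.random() < rate for _ in range(visitors_per_day))
        conv_b += sum(rng.random() < rate for _ in range(visitors_per_day))
        significant = abs(z_score(conv_a, n, conv_b, n)) > 1.96
        peeked = peeked or significant
    return peeked, significant

rng = random.Random(42)  # fixed seed so repeated runs match
runs = [simulate_aa_test(rng) for _ in range(200)]
peek_rate = sum(p for p, _ in runs) / len(runs)
final_rate = sum(f for _, f in runs) / len(runs)
print(f"False winners when checking daily: {peek_rate:.0%}")
print(f"False winners when checking once at the end: {final_rate:.0%}")
```

Since neither ad is actually better, every "winner" here is noise. Checking daily can only match or exceed the end-of-test rate, and in simulations like this it is typically well above it.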

Going in without a defined metric is like driving without a destination. One day you might chase CTR, the next it’s ROAS, then maybe CPC looks interesting. If you’re just picking whatever number looks best at the moment, the whole test loses focus. Decide what matters most ahead of time and stick to it.

If you're changing your creative, tweaking your copy, and targeting a new audience all in one test, the results won’t tell you much. You won’t know which of those changes made the impact. A/B testing works when the variable is isolated. Keep the rest steady and test methodically.

Performance spikes aren’t always about your ad. External factors like a competitor’s promo ending, a viral news event, or a random inventory surge can all affect results. If you don’t account for these shifts, you might mistake a short-lived boost for ad effectiveness. Always check the bigger picture.

A test isn’t done just because you feel like it is. Here’s what to look for: at least 95% confidence in the results (statistical significance), a minimum of 50-100 conversions per variant, a stable cost per result over time, and no major external events skewing results (like a sudden promo spike).

If you’re not sure, let it run longer. Better to wait than call it early and base your next move on shaky data.
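The criteria above can be sketched as a simple checklist. This is one possible way to do it, not Meta's own method: it uses a standard two-proportion z-test for the confidence figure. The helper names, the "last three days within 20%" definition of a stable cost, and the sample numbers are all assumptions. External events can't be computed from ad data, so that check is a flag you set yourself.

```python
from statistics import NormalDist, mean

def confidence_level(conv_a, n_a, conv_b, n_b):
    """Two-sided confidence that the two conversion rates really differ."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    if se == 0:
        return 0.0
    z = abs(conv_a / n_a - conv_b / n_b) / se
    return 2 * NormalDist().cdf(z) - 1

def ready_to_call(conv_a, n_a, conv_b, n_b, daily_cost_per_result,
                  no_external_events, min_conversions=50,
                  min_confidence=0.95, max_cost_swing=0.20):
    """Return each stopping criterion as a pass/fail flag."""
    recent = daily_cost_per_result[-3:]  # assumed window for "stable"
    swing = (max(recent) - min(recent)) / mean(recent)
    return {
        "confident": confidence_level(conv_a, n_a, conv_b, n_b) >= min_confidence,
        "enough_conversions": min(conv_a, conv_b) >= min_conversions,
        "cost_stable": swing <= max_cost_swing,
        "no_external_events": bool(no_external_events),
    }

# Hypothetical results: variant A converts 60 of 2,000, variant B 95 of 2,000.
checks = ready_to_call(60, 2000, 95, 2000,
                       daily_cost_per_result=[21.0, 19.5, 20.4],
                       no_external_events=True)
print(checks)
print("Call the test" if all(checks.values()) else "Let it run longer")
```

If any flag comes back false, the safest reading is the same as the advice above: keep the test running.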

The best brands don’t test once a quarter. They build testing into their weekly or monthly routine.

Try this rhythm:

The market changes, algorithms evolve, and audience behavior shifts. Testing keeps you in sync.

This is where most people drop the ball. They find a winning variation, celebrate, and move on. Don’t.

Here’s what to do instead:

A/B testing Facebook ads isn’t about finding the one perfect ad. It’s about building a system that helps you learn, adjust, and improve consistently. Not every test will produce dramatic results. Some will be inconclusive. That’s fine. Keep testing. Keep learning. Over time, you’ll waste less budget, make better decisions, and build a process that works whether you’re spending $50 a day or $5,000.

Just remember: one variable at a time. Give it time. And always let the data lead.